Promax 2D Seismic Processing & Analysis

ProMAX 2D Seismic Processing and Analysis

copyright 1998 by Landmark Graphics Corporation

626075 Rev. B

June 1998

Copyright 1998 Landmark Graphics Corporation. All Rights Reserved Worldwide.

This publication has been provided pursuant to an agreement containing restrictions on its use. The publication is also protected by Federal copyright law. No part of this publication may be copied or distributed, transmitted, transcribed, stored in a retrieval system, or translated into any human or computer language, in any form or by any means, electronic, magnetic, manual, or otherwise, or disclosed to third parties without the express written permission of:

Landmark Graphics Corporation
15150 Memorial Drive, Houston, TX 77079, U.S.A.
Phone: 713-560-1000
FAX: 713-560-1410

Trademark Notices

Landmark, OpenWorks, SeisWorks, ZAP!, PetroWorks, and StratWorks are registered trademarks of Landmark Graphics Corporation. Pointing Dispatcher, Log Edit, Fast Track, SynTool, Contouring Assistant, TDQ, RAVE, 3DVI, SurfCube, SeisCube, VoxCube, Z-MAP Plus, ProMAX, ProMAX Prospector, ProMAX VSP, MicroMAX, DepthTeam and Landmark Geo-dataWorks are trademarks of Landmark Graphics Corporation. Technology for Teams is a service mark of Landmark Graphics Corporation.

ORACLE is a registered trademark of Oracle Corporation. IBM is a registered trademark of International Business Machines, Inc. AIMS is a trademark of GX Technology. Motif, OSF, and OSF/Motif are trademarks of Open Software Corporation. UNIX is a registered trademark of UNIX System Laboratories, Inc. SPARC, SPARCstation, Sun, SunOs and NFS are trademarks of SUN Microsystems. X Window System is a trademark of the Massachusetts Institute of Technology. SGI is a trademark of Silicon Graphics Incorporated. All other brand or product names are trademarks or registered trademarks of their respective companies or organizations.

Note

The information contained in this document is subject to change without notice and should not be construed as a commitment by Landmark Graphics Corporation. Landmark Graphics Corporation assumes no responsibility for any error that may appear in this manual. Some states or jurisdictions do not allow disclaimer of expressed or implied warranties in certain transactions; therefore, this statement may not apply to you.

Preface
  Conventions
    Mouse Button Help
    Exercise Organization
    Manual Organization

Agenda
  Day 1
    Introductions, Course Outline, and Miscellaneous Topics
    ProMAX 2D Geometry - Manual
    ProMAX 2D Geometry - Full Extraction
    ProMAX 2D Geometry - Extraction with Editing
    Trace Editing using Trace Statistics and DBTools
    System Overview
  Day 2
    Parameter Selection and Analysis
    Elevation Static Corrections
    Brute Stack
    Neural Network First Break Picking
    Refraction Static Corrections
    Stack Comparisons
    Velocity Analysis and the Volume Viewer
  Day 3
    Residual Statics Corrections
    Dip Moveout (DMO)
    PostStack Signal Enhancement
    Velocity: QC, Editing, Modeling
    PostStack Migration
    Additional Topics

Contents

Manual Geometry Assignment
  Chapter Objectives
  ProMAX Geometry Assignment Map
    Geometry assignment path for this exercise
  Land Geometry
  View Shot Gathers
    First look at the data
  Load Geometry into the Spreadsheet and Database
    Description of Geometry for this line
    Load Survey information to the spreadsheet
    Receivers spreadsheet
    Sources spreadsheet
    Patterns spreadsheet
    TraceQC spreadsheet
    Binning
  View Database Attributes
  Load Geometry to the Trace Headers
  Graphical Geometry QC
    QC your Geometry Assignment
  Chapter Summary

Full Extraction Geometry Assignment
  Chapter Objectives
  ProMAX Geometry Assignment Map
    Geometry assignment path for this exercise
  Extract Database Files Method
    Database file extraction
  Chapter Summary

Full Extraction Geometry Assignment with Editing
  Chapter Goals
  ProMAX Geometry Assignment Map
    Geometry assignment path for this exercise
  Extract Database Files Method
    Database file extraction
    Spreadsheet completion and binning
    Load Geometry to the trace headers
  Chapter Summary

Trace Editing using Trace Statistics and DBTools
  Chapter Objectives
  Picking a Time Window for Statistical Analysis
  Running the Trace Statistics Process
  Displaying the Statistics using DBTools
  Selecting the Data of Interest Graphically
  Focusing on a Range of data on the Histogram
  Chapter Summary

System Overview
  Chapter Objectives
  Directory Structure
    /ProMAX (or $PROMAX_HOME)
    /ProMAX/sys
    /ProMAX/port
    /ProMAX/etc
    /ProMAX/scratch
    /ProMAX/data (or $PROMAX_DATA_HOME)
  ProMAX Data Directories
  Program Execution
    User Interface ($PROMAX_HOME/sys/bin/promax)
    Super Executive Program (super_exec.exe)
    Executive Program (exec.exe)
    Processing Pipeline Diagram
    Types of Executive Processes
    Stand-Alone Processes and Socket Tools
  Ordered Parameter Files
    Organization
    OPF Matrices
    Database Structure
    File Naming Conventions
  Parameter Tables
    Creating a Parameter Table
    ASCII Import to a Parameter Table
    ASCII File Export from the Parameter Table Editor
  Disk Datasets
    Secondary Storage
  Tape Datasets
    Tape Trace Datasets
  Tape Catalog System
    Tape Catalog Overview

    Getting Started
  Chapter Summary

Parameter Selection and Analysis
  Chapter Objectives
  Parameter Table Picking
    Pick Parameter Tables
  Parameter Test
    Test True Amplitude Recovery with Parameter Test
  IF/ENDIF Conditional Processing
    Compare Data With and Without Deconvolution
  F-K Analysis and Filtering
    F-K Analysis
    Compare F-K filtered shots using an IF loop
  Interactive Spectral Analysis
    Spectral Analysis
  Chapter Summary

Elevation Static Corrections
  Chapter Objectives
  Elevation Statics
    Calculate Elevation Statics
    Apply Elevation Statics
  Apply User Statics
    Apply External Statics
  Chapter Summary

Brute Stack
  Chapter Objectives
  RMS Velocity Field ASCII Import
  CDP/Ensemble Stack
  Display Stack
  Chapter Summary

Neural Network First Break Picking
  Chapter Objectives
  Interactive NN First Break Training/Picking
    Interactive Training
  Batch Neural Network First Break Picking
    Pick First Breaks for entire survey
  Chapter Summary

Refraction Static Corrections
  Chapter Objectives
  Refraction Statics
    Refraction Statics - 2D
  Coordinate Based Refraction Statics
  Apply Refraction Statics
    Apply Refraction Statics to your data
  Chapter Summary

Stack Comparisons
  Chapter Objectives
  Compare Stacks
  Chapter Summary

Velocity Analysis and the Volume Viewer
  Chapter Objectives
  Velocity Analysis Introduction
  Velocity Analysis Precompute
    Precompute Velocity Analysis
    Velocity Analysis
    Velocity Analysis Icons
    Using the Volume Viewer
    Velocity Analysis PD Tool
  Chapter Summary

Residual Statics Corrections
  Chapter Objectives
  Autostatics Flowchart
  Data Preparation for Input to Residual Statics
    Data preparation and horizon picking for residual statics
  Calculation of Residual Statics
    Autostatics calculation
  QC and Application of Residual Statics
    Compare Static Solutions in the Database
    Compare Autostatics Stacks

    Compare two or more Autostatics Stacks
  External Model Autostatics Overview
  External Model Autostatics Flowchart
    Create Eigen Stack
  Chapter Summary

Dip Moveout (DMO)
  Chapter Objectives
  Common Offset Binning
    Determine trace binning parameters
    Assign DMO offset bins to the data
  DMO
    Apply DMO to the data
    Final Stack
  Chapter Summary

Poststack Signal Enhancement
  Chapter Objectives
  F-X Decon, Dynamic S/N Filtering, and BLEND
    Signal Enhancement
  Trace Math
    Use Trace Math to view differences between stacks
  Chapter Summary

Velocity: QC, Editing, Modeling
  Chapter Objectives
  Velocity Viewer/Point Editor
    Smooth RMS velocities, and convert to interval velocity
  Velocity Manipulation
    Shift smoothed RMS velocities to final datum
    Shift interval velocities to final datum
    Output a single interval velocity function
  Chapter Summary

PostStack Migration
  Chapter Objectives
  PostStack Migration Processes
  Tapering
  Poststack Migration
    Apply FK migration
    Apply Phase Shift Migration
    Apply FD Migration
    Compare Migrations
  Chapter Summary

Appendices

Appendix 1: Additional Geometry Information
  Geometry Core Path Overview
    How to Decide on the Primary Geometry Path
    Transferring the Database to Trace Headers

  Details of the Geometry Programs
    Steps Performed by Inline Geom Header Load
    Valid Trace Numbers
    Valid Trace Number Origin
    Steps Performed By Extraction
    Between Extraction and Geom Load
    Geometry Load Procedures
  Pre-Geometry Database Initialization
    Pre Geometry Initialization flow
    Complete the Spreadsheet
  Inline Geometry Header Load after Pre-Initialization
    Load Geometry to Trace Headers

Appendix 2: Supergathers
  Create Supergather
  Create Supergather and Horizontally Stack

Appendix 3: Alternate Velocity Analysis Methods
  CVS Analysis
  Interactive Velocity Analysis (IVA)

Appendix 4: Database/Header Manipulation
  Header Manipulation Processes
    Apply a Linear Moveout Correction

Appendix 5: Training Summary
  Reference Tables
    Organization of Ordered Parameter Files
    PostStack Migration Summary
    Apply Statics
  Reference Graphs
    Datum Statics Terminology
    Geometry Assignment Map
    Promax Directory Structure
    Promax Data Directories
  Flows and Data Summaries
    Flows
    Datasets: Seismic
    Datasets: OPF-TRC
    Datasets: OPF-SRF
    Datasets: OPF-SIN
    Datasets: OPF-CDP
    Datasets: OPF-CHN
    Datasets: OPF-OFB
    Datasets: OPF-PAT


Preface

About The Manual

This manual is intended to accompany the instruction given during the standard ProMAX 2D course. Because of the power and flexibility of ProMAX, it is unreasonable to attempt to cover all possible features and applications in this manual. Instead, we try to provide key examples and descriptions, using exercises which are directed toward common uses of the system. For more advanced training, please take Advanced 2D.

The manual is designed to be flexible for both you and the trainer. Trainers can choose which topics to present, and in what order, to best meet your needs. You will find it easy to use the manual as a reference document for identifying a topic of interest and moving directly into the associated exercise or reference. You are encouraged to copy the exercise workflows and adapt them to your own situation.

How To Use The Manual

This manual is divided into chapters that discuss the key aspects of the ProMAX system. In general, chapters conform to the following outline:

Introduction: A brief discussion of the important points of the topic and exercise(s) contained within the topic.
Topics Covered and Chapter Objectives: Brief list of skills or processes, in the order that they are covered in the exercise.
Topic Description: More detail about the individual skills or processes covered in the chapter.
Exercise: Details pertaining to each skill in an exercise, along with diagrams and explanations. Examples and diagrams will assist you during the course by minimizing note taking requirements, and providing guidance through specific exercises.
Chapter Summary: A brief list of skills the chapter was designed to train.

This format lets you glance at the topic description either to quickly reference an implementation or simply to refresh your memory on a previously covered topic. If you need more information, see the Exercise sections of each topic.


Conventions

Mouse Button Help

This manual does not refer to using mouse buttons unless they are specific to an operation. MB1 is used for most selections. The mouse buttons are numbered from left to right:

MB1 refers to an operation using the left mouse button.
MB2 is the middle mouse button.
MB3 is the right mouse button.

Actions that can be applied to any mouse button include:

Click: Briefly depress the mouse button.
Double Click: Quickly depress the mouse button twice.
Shift-Click: Hold the shift key while depressing the mouse button.
Drag: Hold down the mouse button while moving the mouse.

Mouse buttons will not work properly if either Caps Lock or Num Lock is on.

Exercise Organization

Each exercise consists of a series of steps that will build a flow, help with parameter selection, execute the flow, and analyze the results. Many of the steps give a detailed explanation of how to correctly pick parameters or use the functionality of interactive processes. The flow examples list key parameters for each process of the exercise. As you progress through the exercises, familiar parameters will not always be listed in the flow example.

The exercises are organized so that your dataset is used throughout the training session. Carefully follow the instructor's directions when assigning geometry and checking the results of your flow. An improperly generated dataset or database may cause a subsequent exercise to fail.


Manual Organization

The manual will take you through a typical workflow of a geoscientist processing a land 2D seismic dataset. The processing functions of ProMAX will be introduced and discussed as they appear in the workflow.

Processing WorkFlow

1. Geometry Assignment
2. Trace Editing
3. Parameter Selection
4a. Elevation Statics
4b. Refraction Statics
5. Brute Stack
6. Velocity Analysis
7. Residual Statics
8. Dip Moveout (DMO)
9. PostStack Signal Enhancement
10. PostStack Migration

Other boxes shown in the workflow diagram: Field Data, Pick First Breaks, Velocity Modeling.


Agenda

Day 1

Introductions, Course Outline, and Miscellaneous Topics

ProMAX 2D Geometry - Manual
  Input Data into the Spreadsheet
  CDP Binning
  Loading Geometry to Trace Headers
  QC Database Attributes

ProMAX 2D Geometry - Full Extraction
  Database File Extraction

ProMAX 2D Geometry - Extraction with Editing
  Database File Extraction
  Spreadsheet Completion and CDP Binning
  Loading Geometry to Trace Headers

Trace Editing using Trace Statistics and DBTools
  Running Trace Statistics
  Display Trace Statistics using DBTools
  Selecting Bad Traces with DBTools

System Overview
  Directory Structure
  Program Execution
  Ordered Parameter Files
  Parameter Tables
  Disk Datasets
  Tape Datasets


Day 2

Parameter Selection and Analysis
  Parameter Table Picking
  Parameter Test
  IF/ENDIF Conditional Processing
  F-K Analysis and Filtering
  F-K Filtering Comparisons
  Interactive Spectral Analysis (ISA)

Elevation Static Corrections
  Elevation Statics Discussion
  Apply Elevation Statics
  Apply User Statics

Brute Stack
  RMS Velocity Field ASCII Import
  Brute Stack with Elevation Statics

Neural Network First Break Picking
  Interactive NN First Break Training/Picking
  Batch Neural Network First Break Picking

Refraction Static Corrections
  Refraction Statics
  Refraction Statics Calculation - coordinate based
  Apply Refraction Statics
  Stack with Refraction Statics

Stack Comparisons
  Compare Stacks

Velocity Analysis and the Volume Viewer
  Velocity Analysis Precompute
  Velocity Analysis
  Volume Viewer/Editor


Day 3

Residual Statics Corrections
  Data Preparation for Input to Residual Statics
  Calculation of Residual Statics
  QC and Application of Residual Statics
  External Model Autostatics

Dip Moveout (DMO)
  Common Offset Binning
  DMO
  Final Stack

PostStack Signal Enhancement
  F-X Decon, Dynamic S/N Filtering, and BLEND
  Trace Math

Velocity: QC, Editing, Modeling
  Velocity Viewer/Point Editor
  Velocity Manipulation

PostStack Migration
  Poststack Migration Processes
  Tapering
  Poststack Migration

Additional Topics


Chapter 1

Manual Geometry Assignment

Geometry Assignment is designed to create the ProMAX Database Files and load header information into the trace headers of ProMAX data. The sequence of steps, or flows, depends upon available information. This chapter serves as an introduction to different approaches of geometry assignment. The Geometry Overview section in the Reference Manual and online help file provides further details of the geometry assignment process. Geometry is clearly one of the most important aspects of processing. These next three chapters are examples of a difficult, an easy, and a most common approach to geometry assignment.

Topics covered in this chapter:

Chapter Goals
Geometry Assignment Map
Land Geometry
View Shot Gathers
Load Geometry in Spreadsheet and Database
View Database Attributes
Load Geometry to the Trace Headers
Graphical Geometry QC
Chapter Summary


Chapter Objectives

[Workflow diagram excerpt: Field Data; 1. Geometry Assignment]

We are at step one, Geometry Assignment, of our processing workflow. Geometry is probably the longest and most difficult subject in the manual, as it is in a normal processing sequence. If we can get the geometry correct we are well on our way to having the best possible seismic data for the interpreter.

Upon completion of this chapter you should:

Understand what the Ordered Parameter Files (OPFs) represent
Edit the OPFs via the Geometry Spreadsheet
View Trace Header values for Geometry Attributes
Import Observer Data into the Geometry Spreadsheet
QC and Edit Geometry via DBTools and XDB
Understand ProMAX Sign Conventions
Understand what a Pattern Represents
Understand the steps of Binning
Graphically QC Geometry with Farr Displays


ProMAX Geometry Assignment Map

[Figure: All Possible Geometry Assignment Paths (geometry assignment map for output to disk). Boxes shown: UKOOA, O.B. Notes, ASCII, Field Data, Manual Input, UKOOA Import, Spreadsheet Import, Database Import, SEG-? Input, Seismic Data (ProMAX), Extract Database Files, Inline Geom Header Load, Geometry Spreadsheet, Ordered Parameter Files, Disk Data Output, Valid Trace Numbers, Overwrite Trace Headers.]


Geometry assignment path for this exercise

ProMAX geometry assignment is designed to be both flexible and robust. The previous map, however, displays the complicated price we pay for that flexibility. The following map shows a simplified path that we will use for geometry assignment in this exercise.

[Figure: Manual Geometry Assignment Path. Boxes shown: O.B. Notes and Survey Information, ASCII, Spreadsheet Import, Manual Input, Field Data, SEG-Y Input, Geometry Spreadsheet, Ordered Parameter Files, Inline Geom Header Load, Disk Data Output, Seismic Data (ProMAX).]


Land Geometry

The 2D Land Geometry Spreadsheet is used to assign the geometry. The spreadsheet is an editor used to input/modify geometry information, residing in the ProMAX database. While you can manually key in data, the spreadsheet has options to import geometry information, such as source and receiver coordinates from ASCII files. If the input seismic data has pertinent geometry information in the trace headers, you can extract this information using the process Extract Database Files prior to working with the spreadsheet.


View Shot Gathers

First look at the data

Before we get into the geometry assignment steps, let us look at the data that we will be using. First we will create a workspace by adding an Area and Line, then we will build a flow to display the raw shots.

1. From the Area menu add a new area. Give your area a descriptive name that has meaning to you. You might want to use your name in this case.
2. When the Line menu appears add a new line named Watson Rise.
3. Add the following flow.

Editing Flow: 1.1-View Shots

Add Delete Execute View Exit

SEG-Y Input

Type of storage to use: ------------------------------Disk Image
Enter DISK file path name: ---------------------------------------------------/misc_files/2d/segy_0_value_headers
----Default the remaining parameters----

Automatic Gain Control

----Default all parameters for this process----

Trace Display

Number of ENSEMBLES (line segments)/screen: -------2
----Default the remaining parameters----

4. Execute the flow. Use the Next Ensemble icon to move through all 20 shots for this line. Notice how the shot rolls onto the spread and that there is a discontinuity between channels 60 and 61.


Load Geometry into the Spreadsheet and Database

Description of Geometry for this line

The following figure and table describe the acquisition geometry for the Watson Rise line.

Pattern      Shot station   Chan 1   Chan 60   Chan 61   Chan 120
Source 1     388.5          387      446       449       508
Source 2     392.5          387      446       449       508
Source 16    448.5          388      447       450       509

20 Sources
120 Channels
55 ft. Receiver Interval
220 ft. Source Interval
2 Second Record Length
4 ms Sample Rate
Dynamite Source
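The channel-to-station relationship in the pattern figure can be written as a small function. This is only an illustrative sketch, not ProMAX code: the function name and its parameters are hypothetical, and the gap of two stations after channel 60 is read off the figure (station 446 on channel 60, station 449 on channel 61).

```python
def station_for_channel(channel, first_live_station,
                        gap_after_channel=60, gap_stations=2, n_channels=120):
    """Return the receiver station recorded on `channel` for one shot.

    Channels count up one station at a time from the first live station,
    and `gap_stations` stations are skipped after `gap_after_channel`.
    """
    if not 1 <= channel <= n_channels:
        raise ValueError(f"channel {channel} is outside 1..{n_channels}")
    station = first_live_station + (channel - 1)
    if channel > gap_after_channel:
        station += gap_stations
    return station


# Sources 1 and 2 share first live station 387; source 16 starts at 388.
source_1 = [station_for_channel(c, 387) for c in (1, 60, 61, 120)]
source_16 = [station_for_channel(c, 388) for c in (1, 60, 61, 120)]
print(source_1)   # [387, 446, 449, 508]
print(source_16)  # [388, 447, 450, 509]
```

The printed stations match the three patterns listed above, which is a quick way to confirm that a single pattern with a shifting first live station describes the whole line.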


Observers Report

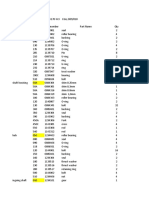

Group Int.=55 Shot Loc. File no. Shot Int.=220 Depth Offset Sample Int.=4 ms Uphole Time Chan 1 Chan 60 # of Chan=120 Chan. 61 Chan 120

388.5 392.5 396.5 400.5 404.5 408.5 412.5 416.5 420.5 424.5 428.5 431.5 436.5 440.5 444.5 448.5 452.5 456.5 458.5 464.5

2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 22 23

93 93 93 93 93 93 93 93 93 93 93 93 93 93 93 93 93 93 93 93

0 0 50 0 15 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

First Live Station=387

22 20 20 23 18 24 20 19 17 20 22 19 19 20 21 23 22 20 20 20

387 387 387 387 387 387 387 387 387 387 387 387 387 387 387 388 392 396 398 404

446 446 446 446 446 446 446 446 446 446 446 446 446 446 446 447 451 455 457 463

449 449 449 449 449 449 449 449 449 449 449 449 449 449 449 450 454 458 460 466

508 508 508 508 508 508 508 508 508 508 508 508 508 508 508 509 513 517 519 525

Source. and Receiver Azimuth=90 degrees

Last Live Station=525

Source Type = Shot, Units=ft
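Before typing the report into the spreadsheets, it can help to see where it departs from a regular pattern. The sketch below is a hypothetical helper script, not part of ProMAX. It uses only the Shot Loc. and File no. values from the report, and assumes the nominal shot step is 4 stations (220 ft shot interval divided by 55 ft group interval).

```python
# Shot locations and file numbers (FFIDs) copied from the Observers Report.
shot_stations = [388.5, 392.5, 396.5, 400.5, 404.5, 408.5, 412.5, 416.5, 420.5, 424.5,
                 428.5, 431.5, 436.5, 440.5, 444.5, 448.5, 452.5, 456.5, 458.5, 464.5]
ffids = list(range(2, 20)) + [22, 23]


def off_grid_rows(values, start, step):
    """Return (row, value) pairs, rows counted from 1, that are not at start + step * n."""
    return [(row, v) for row, v in enumerate(values, start=1)
            if v != start + step * (row - 1)]


def gaps(values, step=1):
    """Return (previous, current) pairs whose difference is not `step`."""
    return [(a, b) for a, b in zip(values, values[1:]) if b - a != step]


irregular_shots = off_grid_rows(shot_stations, start=388.5, step=4)
ffid_gaps = gaps(ffids)
print(irregular_shots)  # [(12, 431.5), (19, 458.5)]
print(ffid_gaps)        # [(19, 22)]
```

Rows 12 and 19 are the shots whose station numbers need manual correction after an automatic fill, and the FFID sequence jumps from 19 to 22.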


Load Survey information to the spreadsheet

In this exercise, you will assign geometry to the 2DTutorial dataset, Watson Rise, using the geometry spreadsheet. Two flows are required to accomplish this task. One flow will use the spreadsheet as an editor to automatically enter data to the database. The second flow will load the geometry from the database to the trace headers.

The following spreadsheet guide is designed to help you assign geometry to the line you are processing in the class. It is by no means a complete description of all the capabilities. Please consult the Reference Manual for additional documentation.

1. Build the following flow:

Editing Flow: 1.2-Geometry Spreadsheet

Add Delete Execute View Exit

2D Land Geometry Spreadsheet*

2. Execute the flow. The following 2D Land Geometry Assignment window appears:


3. Select Setup, and fill out the menu with information from the observer's log.
4. Select to assign midpoints by Matching pattern numbers using first live chan and station.
5. Enter source and receiver station interval, and leave the survey azimuth blank as it will be calculated later.
6. Enter the first and last live station numbers, and select Yes to base source station numbers on receiver station numbers. Set source type to shot holes, and units to feet. You may also enlarge the font.
7. Select OK when you have entered all the information.


Receivers spreadsheet

1. Select Receivers from the main spreadsheet window.
2. Mark all rows active by clicking MB3 on any of the numbered blocks under the Mark Block column. Marked blocks will turn a different color. Station, X, and Y are required in 2D geometry.
3. Insert enough rows to accommodate all receiver stations. Notice how many rows are present in your default spreadsheet (this number will vary depending on your font). There are 139 receiver stations in this survey (stations 387 through 525), so you will need to insert rows into the default spreadsheet so that there are 139 rows. Select Edit > Insert, and insert the proper number of rows after the last marked block. Scroll to the bottom of the spreadsheet. If you created more than 139 blocks, mark the excess blocks by selecting block 140 with shift-MB2. This will select all blocks numbered 140 and greater. Select Edit > Delete, and OK. After you are certain that you have exactly 139 rows in the spreadsheet, mark all rows active with MB3 again, so that you can easily work with the entire spreadsheet.


4. Fill in the appropriate values for the Station column. Mark the Station column by clicking MB1 on the Station column heading. From the menu bar select Edit > Fill. This will bring up a popup menu. Enter 387 as a starting value and an increment of 1, then select OK. (An easier way to fill is to click MB2 on the column header. This immediately causes the fill window to display.)
5. Follow the same procedure to fill the X coordinate, starting with 0 and incrementing by 55, and the Y coordinate with all 0s. This is an old land line, for which there were no XY values recorded. We will make up some fake XYs assuming that the line is straight, runs from West to East, and has a nominal receiver spacing of 55 ft.
6. Import the Elevation values from an ASCII file. When working with ASCII file import there are three required steps:
   Open the ASCII file.
   Define which numbers are in which columns.
   Define which cards or rows to exclude from the import.


7. Select File > Import to import ASCII elevation values. Two windows will pop up allowing you to open an ASCII file.

In the Filter box of the File Import Selection window, enter the directory path (.../misc_files/2d/*) to your ASCII file, followed by /*, then select Filter. Select the ASCII filename and OK.


8. Click Format and enter a name, recs, for a format description containing ASCII import column definition information. You will see a new window at this point.

Example ASCII Import Column Definition

9. In the Column Import Definition menu, click on a parameter attribute name, such as station, to define that column's information. Note that the selection turns white.

NOTE: Look at the Mouse Button help descriptions at the bottom of the ASCII text window. Note that they reflect the MB1 press and drag operation for column definition.

10. Highlight the columns that contain the numbers for the attribute you selected by holding down MB1 and dragging from left to right.
11. Repeat the previous two steps for elevations.


Switch to card or row exclusion mode.

12. Now freeze the column definitions by clicking MB3 over the Parameter Column.
13. Click MB3 with the cursor positioned over the word Station or one of the other columnar attributes.

NOTE: Look at the Mouse Button help descriptions at the bottom of the ASCII text window. Note that they now reflect block selection and deletion options.

14. Use MB1 to select the first row to exclude, and MB2 to select the last row to exclude, and press Ctrl-d. You will want to exclude title rows, blank rows, and rows with information that you do not want to import.

This writes an Ignore Record for Import message on all the defined rows.

15. There are also rows at the bottom of this file containing source information that need to be ignored.
16. From the main import menu, select Filter. This will check for any cards with inappropriate information, and allows you to interactively delete them.


17. From the main import menu, select Apply.
18. Select Merge existing station values with matching station data and click OK.

This will add the elevations to your spreadsheet by matching the station numbers in the ASCII file with those already in the spreadsheet. The import windows will disappear.

19. Leave the Static column filled with zeros.
20. Make sure you have 139 stations defined in your receiver spreadsheet, and the information looks correct.
21. Select File > Save.
22. Use the display capabilities in the spreadsheet to QC the imported elevations. Select View > View All > XYGraph from the menu bar. Click MB1 in the X column heading, and MB2 in the Elev column heading.


After the XYGraph displays, select Color Bar from the menu.

Notice that the X Coordinate is displayed on the horizontal axis, the Elevations are on the vertical axis, and the Station numbers are represented by color. Activate the Notebook icon. When this icon is activated, you can select a point in the XYGraph, and automatically jump to that line in the spreadsheet. Select a point in the XYGraph with MB1. This is a powerful QC tool. You can easily locate bad values in the XYGraph, and then edit the value in the spreadsheet. Exit the XYGraph by selecting File > Exit > Confirm.

23. Use the File > Exit pulldown menu to save the information and exit the receiver spreadsheet.
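The Receivers spreadsheet steps above (fill Station, X, and Y, then import elevations by column definition, row exclusion, and station matching) can be sketched outside ProMAX as follows. Everything here is illustrative: the function names, the column positions, and the elevation values are made up for the example, and the real ASCII file layout will differ.

```python
def build_receivers(first_station=387, last_station=525, spacing_ft=55.0):
    """One row per receiver station: X increments by the spacing, Y is all zeros."""
    return [{"station": station, "x": i * spacing_ft, "y": 0.0, "elev": None, "static": 0.0}
            for i, station in enumerate(range(first_station, last_station + 1))]


def parse_fixed_width(text, columns, skip_rows):
    """Read numbers from character ranges, ignoring excluded and blank rows.

    columns maps an attribute name to a (start, end) character slice;
    skip_rows holds 0-based line numbers to exclude (titles, unwanted cards).
    """
    records = []
    for line_no, line in enumerate(text.splitlines()):
        if line_no in skip_rows or not line.strip():
            continue
        records.append({name: float(line[a:b]) for name, (a, b) in columns.items()})
    return records


def merge_by_station(rows, records, field):
    """Copy `field` into rows whose station number matches an imported record."""
    by_station = {int(r["station"]): r[field] for r in records}
    for row in rows:
        if row["station"] in by_station:
            row[field] = by_station[row["station"]]
    return rows


# Hypothetical ASCII card image: one title row, then station and elevation columns.
sample_text = "\n".join([
    "STATION  ELEVATION (made-up values)",
    f"{387:6d}{1200.0:10.1f}",
    f"{388:6d}{1201.5:10.1f}",
    f"{389:6d}{1203.0:10.1f}",
])

receivers = build_receivers()
records = parse_fixed_width(sample_text, {"station": (0, 6), "elev": (6, 16)}, skip_rows={0})
merge_by_station(receivers, records, "elev")
print(len(receivers), receivers[-1]["station"], receivers[-1]["x"])
```

Matching on station number rather than on row position is what lets the import tolerate excluded or missing cards: rows without a matching record simply keep their existing value.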


Sources spreadsheet

1. Select Sources from the main spreadsheet window.
2. The Sources (SIN) spreadsheet appears. You must go through the same procedure as in the Receivers spreadsheet to make 20 rows in the spreadsheet to accommodate the 20 shots in this survey.
3. Fill the Station column. Start at 388, and increment by 4. Notice that you did not input 388.5 as the observer's report states. This is because the spreadsheet will only accept integer numbers. You will specify this half station difference using the Skid column later. Also notice that the x, y, and z values updated. Because you told the spreadsheets that the source and receiver station numbers were linked, the Sources spreadsheet uses the x, y, and z values entered in the Receivers spreadsheet. Therefore, the source elevations are the elevations of the previous receiver location. In our case, you need to interpolate elevations between receiver locations. We will do this later from the Database tool. Finally, you can see from the Observers Report that a few of the shot station numbers do not increment by four. Fix the station numbers for those shots in the spreadsheet now. Notice that the x, y, and z values change as you change the Station number.


4. Fill the Source column to match the Station column. Source numbers are user defined and could be set to any value. Some people prefer to use this number as a counter, and will fill the column starting with 1, and incrementing by 1.
5. Fill the FFID column starting at 2, and incrementing by 1. Notice from the Observers Report that there is a gap in the FFID numbers between 19 and 22. Enter this gap in the spreadsheet.
6. Enter the offsets of 50 and 15 for the appropriate stations in the Offset column of the spreadsheet. Instead of North, South, East, and West, ProMAX uses the following sign convention:

[Figure: Offset Sign Convention. Shows the shot (x,y) and the direction of increasing station numbers (source azimuth), with (-) Negative Offset and (+) Positive Offset labeled.]

7. Scroll the spreadsheet to the right, and fill the Skid column with 27.5. This is where you specify the inline offsets that move the shots from integer station numbers to half station numbers. ProMAX uses the following sign convention:

[Figure: Skid Sign Convention. Relative to the shot (x,y) and the source azimuth (direction of increasing station numbers): (-) Negative Skid is toward lower stations, (+) Positive Skid is toward higher stations.]

8. Import the Uphole time and Hole Depth information from the ASCII file using the same procedure as described in the Receivers spreadsheet.
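The effect of the Skid column, and of interpolating a source elevation between two receivers, can be checked with a little arithmetic. The following is a sketch under stated assumptions: the function names are hypothetical, the X origin and 55 ft spacing follow the made-up receiver coordinates used in this exercise, the elevations are invented, and plain linear interpolation is assumed (the manual does not say how the Database tool interpolates).

```python
from bisect import bisect_right


def shot_x(station, skid_ft, first_station=387, spacing_ft=55.0):
    """Inline X of a shot entered at an integer station plus a skid.

    Positive skid moves the shot toward higher station numbers.
    """
    return (station - first_station) * spacing_ft + skid_ft


def interpolate_elevation(x, receiver_x, receiver_elev):
    """Linearly interpolate elevation at x from receivers sorted by X."""
    i = bisect_right(receiver_x, x)
    if i == 0:
        return receiver_elev[0]
    if i == len(receiver_x):
        return receiver_elev[-1]
    x0, x1 = receiver_x[i - 1], receiver_x[i]
    z0, z1 = receiver_elev[i - 1], receiver_elev[i]
    return z0 + (z1 - z0) * (x - x0) / (x1 - x0)


# Station 388 with a skid of 27.5 ft lands halfway to station 389 (station 388.5).
x_first_shot = shot_x(388, 27.5)
# Made-up elevations for stations 387, 388, and 389.
z_first_shot = interpolate_elevation(x_first_shot, [0.0, 55.0, 110.0], [1200.0, 1201.0, 1203.0])
print(x_first_shot, z_first_shot)  # 82.5 1202.0
```

A skid of 27.5 ft is exactly half of the 55 ft receiver interval, which is why integer station 388 plus the skid reproduces the observer's shot location of 388.5.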


Patterns spreadsheet

At this point, leave the Sources spreadsheet, and fill in the Patterns spreadsheet. After filling out the pattern, you will finish the remainder of the Sources spreadsheet.

There are two methods of defining patterns. If the shot gap stays in a constant location, use the Static Gap Method. This method is only available if you chose to assign midpoints by matching pattern numbers using first live chan and station in the setup menu. If your shot gap changes locations, use the Dynamic Gap Method. This method is available if you chose either to assign midpoints by matching pattern numbers using first live chan and station, or matching pattern number using pattern station shift.

Static Gap Method:

Static Gap Size and Gap Chan Definition

[Figure: spread layout. Stn 387 = Ch 1, Stn 446 = Ch 60, Shot, Stn 449 = Ch 61, Stn 508 = Ch 120.]

Sources Spreadsheet: Gap Chan=0 and Gap Size=0

Patterns Spreadsheet:

Pat   Min Chan   Max/Gap Chan   Chan Inc   Rcvr MinChan   Rcvr MaxChan   Rcvr Inc
1     1          60             1          387            446            1
1     61         120            1          449            508            1

In this method, gap size and location are specified in the Patterns spreadsheet. In the Sources and Receivers spreadsheets, each shot or receiver uses one row of the spreadsheet. In the Patterns spreadsheet, one pattern can use as many rows of the spreadsheet as necessary.
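To see what the two static-gap rows encode, the sketch below expands them into a channel-to-station lookup and checks the channel count. It is a hypothetical illustration, not the ProMAX implementation: the tuple layout mirrors the Patterns spreadsheet columns, and the station shift for later shots is an assumption about how a first live channel and station could be matched to the pattern.

```python
# (min_chan, max_chan, chan_inc, rcvr_min, rcvr_max, rcvr_inc) for pattern 1.
PATTERN_1 = [
    (1, 60, 1, 387, 446, 1),
    (61, 120, 1, 449, 508, 1),
]


def expand_pattern(rows):
    """Expand pattern rows into a {channel: station} dictionary."""
    mapping = {}
    for min_chan, max_chan, chan_inc, rcvr_min, rcvr_max, rcvr_inc in rows:
        channels = range(min_chan, max_chan + 1, chan_inc)
        stations = range(rcvr_min, rcvr_max + 1, rcvr_inc)
        if len(channels) != len(stations):
            raise ValueError("channel range and station range differ in length")
        mapping.update(zip(channels, stations))
    return mapping


def stations_for_shot(mapping, first_live_station, first_live_chan=1):
    """Shift the whole pattern so first_live_chan sits on first_live_station."""
    shift = first_live_station - mapping[first_live_chan]
    return {chan: station + shift for chan, station in mapping.items()}


pattern = expand_pattern(PATTERN_1)
shot_16 = stations_for_shot(pattern, first_live_station=388)
print(len(pattern), pattern[60], pattern[61], shot_16[120])  # 120 446 449 509
```

A pattern that expands to anything other than 120 channels is the kind of mistake the spreadsheet's error column is meant to catch.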


Dynamic Gap Method:

Dynamic Gap Size and Gap Chan Definition

[Figure: spread layout. Stn 387 = Ch 1, Stn 446 = Ch 60, Shot, Stn 449 = Ch 61, Stn 508 = Ch 120.]

Sources Spreadsheet: Gap Chan=60 and Gap Size=2

Patterns Spreadsheet:

Pat   Min Chan   Max/Gap Chan   Chan Inc   Rcvr MinChan   Rcvr MaxChan   Rcvr Inc
1     1          120            1          387            506            1

In this method, you specify the first and last channels and stations in the Patterns spreadsheet. The shot gap size and location are specified in the Sources spreadsheet.

1. Select Patterns from the main spreadsheet window. You will now define your cable configuration, that is, the relationship of channels to receiver locations. When you enter the Patterns spreadsheet for the first time, a window will appear that asks you to enter some information about the number of channels.


2. Enter 120 for the maximum number of channels, select Constant number of channels/record, then OK. These values will be used for error checking when you exit the Patterns spreadsheet. If you define your pattern for more or less than 120 channels, the error column in the spreadsheet fills with ***** and will force you to correct your error before exiting the Patterns spreadsheet. If you need to edit the number of channels later, select Edit > NChans.
3. Since our shot gap is in a constant location, fill in the Patterns spreadsheet using the Static Gap Method.
4. Select File > Exit to save the information, and exit the Patterns spreadsheet.
5. Return to the Sources spreadsheet, and reorder the columns so that the pattern description columns will be displayed next to the Station column. With the default column order, you cannot see the Station column after scrolling the spreadsheet to the right. To change the displayed order of the columns, select Setup > Order from the menu bar. Follow the mouse button help, and click MB1 in the column heading for Station, Pattern, Num Chn, Shot Fold, 1st Live Sta, 1st Live Chn, Gap Chan Dlt, Gap Size Dlt, and Static.


Finish the selection by clicking MB2 in the column heading for Static. The columns you selected will now move to the left of the spreadsheet as pictured below.

6. Fill in the Pattern column with ones. This tells the Sources spreadsheet to use pattern number 1 from the Patterns spreadsheet. Recall that you only defined one pattern for this survey.
7. Fill the Num Chn column with 120. This specifies that there are 120 channels for each shot on this survey.
8. You cannot edit the Shot Fold* column. This column will be calculated and filled when you assign midpoints later in the exercise.
9. Fill the 1st Live Sta column with information from the Observers Report. Notice that the first live station for this survey is 387 for all but the last five shots.
10. Fill the 1st Live Chn column with ones. This specifies that the first live channel for each shot is 1.


11. Leave the Gap Chan Dlt column blank, and leave the Gap Size Dlt column filled with zeros. The information entered in these two columns depends on which method you chose for entering the pattern in the Patterns spreadsheet. Since you chose the Static Gap method, you have already specified the shot gap's size and location in the Patterns spreadsheet, and do not need to specify it here. If you had chosen the Dynamic Gap method, you would enter the shot gap's location in Gap Chan Dlt, and the shot gap's size in Gap Size Dlt.
12. Leave the Static column filled with zeros. If the information were available, you could enter any previously calculated datum static values in this column.
13. Select File > Save.


14. Display a basemap of both the shots and receivers, and measure the station azimuth. Select View > View All > Basemap.

Notice that the receivers are displayed as a plus sign (+), and the shots are displayed as an asterisk (*). Also notice the two offset shots. To get a better view of the shots select Display > Sources > Control Points > White. Now select the Cross Domain icon to allow you to measure the station azimuth. Press MB3 (notice the mouse button help) near the first shot on the line, and drag the mouse to the end of the line. While still holding down MB3, make note of the azimuth (Azi) readout in the mouse button help. For this line, the azimuth should be 90 degrees. Select File > Exit > Confirm in the XYGraph display.

15. From the main Land Geometry window, select Setup, and enter 90 for the Nominal Survey Azimuth. Select OK to save the information and close the window.
16. Make sure that you only have 20 rows in the Sources spreadsheet.
17. Exit the Sources spreadsheet by selecting File > Exit.
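The 90 degree readout can be reproduced from the made-up coordinates. This is a minimal sketch, assuming azimuth is measured clockwise from north (the +Y direction), which is consistent with a line that runs from West to East having an azimuth of 90 degrees. The function name is hypothetical.

```python
import math


def azimuth_degrees(x0, y0, x1, y1):
    """Azimuth from (x0, y0) to (x1, y1) in degrees clockwise from north (+Y)."""
    return math.degrees(math.atan2(x1 - x0, y1 - y0)) % 360.0


# First receiver (station 387) to last receiver (station 525) on the straight line.
line_azimuth = azimuth_degrees(0.0, 0.0, 138 * 55.0, 0.0)
print(round(line_azimuth, 6))
```

Measuring in the opposite direction, from the end of the line back to the start, would give 270 degrees under the same convention.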

TraceQC spreadsheet

1. The information in the traces spreadsheet will be calculated by the binning process. You cannot edit this information.


Binning

1. Select Bin from the main window. There are three steps to be completed in order:
   Assign Midpoints
   One of the several Binning options
   Finalize database
2. Select Assign midpoints by: Matching pattern numbers using first live chan and station, and then select OK. In this case the Assignment step performs the following calculations:
   Computes the SIN and SRF for each trace and populates the TRC OPF.
   Computes the Shot to Receiver Offset (Distance).
   Computes the Midpoint coordinate between the shot and receiver.
   Computes the Shot to Receiver Azimuth.


An Assignment Warning window will pop up warning that some or all of the data in the Trace spreadsheet will be overwritten. Click Proceed.

A number of progress windows will flash on the screen as this step runs. A final Status window appears notifying that you Successfully completed geometry assignment. Click Ok. If this step fails, you have an error somewhere in your spreadsheets. Little diagnostic help is given, but the problems are usually related to the spread and/or pattern definitions.

3. Choose Binning with a method of Add source and receiver stations, user defined OFB parameters. Fill in the parameters in the bottom of the window, and select OK.

This step calculates CDP numbers for each trace by adding source and receiver station numbers. The first CDP will be 775 (387 + 388), the last CDP will be 989 (464 + 525). This step also creates the OFB ordered parameter file.

4. Select OK in the final status window when successfully completed.


Select Finalize Database, then click OK.

Click OK in the final status window when successfully completed. Click Cancel in the Land 2D Binning window to exit the binning window.

5. Open the Receivers spreadsheet.
6. The binning step filled in the data in the Traces spreadsheet. You can QC this information from a basemap. From the Receivers spreadsheet, select View > View All > Basemap.

7. Highlight the Cross Domain icon. Click and hold MB1 near a source location to see which receivers contributed to that shot. Drag your mouse to the end of the line to see the receiver range change. Click and hold MB2 near a receiver location to see which shots contributed to that receiver.


8. Select File → Exit → Confirm to exit the basemap display.

9. Select File → Exit from the Receivers spreadsheet.

10. Select File → Exit from the main spreadsheet window.


View Database Attributes

1. Select Exit from the Flow Editing menu of the User Interface. In the Flows menu select Database. The DBTools window allows basic viewing and editing of the 8 orders (spreadsheets) of the database: LIN, TRC, SRF, SIN, CDP, CHN, OFB, PAT. The contents of the OPF files are summarized in Table 1.

Table 1: Organization of Ordered Parameter Files

LIN (Line): Contains constant line information, such as final datum, type of units, source type, total number of shots.

TRC (Trace): Contains information varying by trace, such as FB Picks, trim statics, source-receiver offsets.

SRF (Surface location): Contains information varying by surface receiver location, such as surface location x,y coordinates, surface location elevations, surface location statics, number of traces received at each surface location, and receiver fold.

SIN (Source Index #): Contains information varying by source point, such as source x,y coordinates, source elevations, source uphole times, nearest surface location to source, source statics.

CDP (Common Depth Point): Contains information varying by CDP location, such as CDP x,y coordinates, CDP elevation, CDP fold, nearest surface location.

CHN (Channel): Contains information varying by channel number, such as channel gain constants and channel statics.

OFB (Offset Bin): Contains information varying by offset bin number, such as surface consistent amplitude analysis. OFB is created when certain processes are run, such as surface consistent amplitude analysis.

PAT (Pattern): Contains information describing the recording patterns.


To graphically QC and edit the database, select Database → XDB Database Display.

2. From the XDB window select Database → Get.

3. Project SRF elevations into SIN. By projecting the SRF elevations into the SIN elevations you will correct for the skid of the elevation being on the half station. For example, compare the land geometry database for receiver and shot elevations at station number 428. You see that they both read an elevation of 842 feet. Looking at the elevation for station number 429, however, you see an elevation of 845.3. From the observer notes and geometry assignment you remember that the shot is actually at station location 428.5, and therefore at an elevation around 843.6. To fix the source elevations go to the attribute selection window, and click on the SIN order, then GEOMETRY ELEV. After this is displayed, click on the SRF order, then GEOMETRY ELEV.


While SRF Geometry Elev is highlighted, select New Project Sin.

In the popup window, type in ELEV for the new attribute name, then click on OK. Your new attribute will be plotted. Notice how station 428 has been corrected.
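The half-station correction described in this step can be reproduced by hand. The sketch below assumes that projecting SRF elevations onto a source at a fractional station amounts to linear interpolation between the two neighboring receiver stations; the manual does not state the algorithm XDB actually uses, and `project_elevation` is a hypothetical name.

```python
import math

def project_elevation(station, srf_elev):
    """Interpolate an elevation at a (possibly fractional) station number.

    srf_elev maps integer receiver station numbers to elevations.
    Linear interpolation is an assumption, not the documented XDB method.
    """
    lower = math.floor(station)
    upper = math.ceil(station)
    if lower == upper:
        # The source sits exactly on a receiver station.
        return srf_elev[lower]
    weight = station - lower
    return (1.0 - weight) * srf_elev[lower] + weight * srf_elev[upper]

# Receiver elevations (feet) quoted in the text for stations 428 and 429.
srf_elev = {428: 842.0, 429: 845.3}
shot_elev = project_elevation(428.5, srf_elev)
```

For the shot at station 428.5 this gives the average of 842.0 and 845.3, which agrees with the "around 843.6" figure quoted in the step.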

4. To save this new attribute, select Database → Save. In the popup list, click on the name of the new attribute, SIN:GEOMETRY:ELEV. Select OK from the overwrite warning and from the acknowledgment window, then Exit the Database tool.

5. You can verify the source elevation was corrected by going back into the source spreadsheet.


6. There are several useful QC plots that can be made from the DBTools or from the XDB Database Display. Some examples are listed below.

XDB CDP: GEOMETRY: FOLD (DBTools: double click on FOLD from CDP tab)

Used to check CDP fold for variations.

XDB SIN: GEOMETRY: NCHANS (DBTools: double click on NCHANS from SIN tab)

Used to check for variations in number of channels per source.

XDB 3D XYGraph: TRC:SRF, SIN, OFFSET (DBTools: View Predefined SIN-SRF-offset)

Used to check the live receivers for each shot.

XDB 3D XYGraph: TRC: OFFSET, CDP, SIN (DBTools: View Predefined offset-CDP-SIN)

Used to check offset distribution in CDPs for velocity analysis placement and DMO binning.
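The CDP fold check in the list above can also be pictured outside the database tools. The sketch below counts traces per CDP and flags CDPs whose fold jumps relative to the previous CDP. The CDP list is invented sample data, and `cdp_fold`, `fold_jumps`, and the `max_step` threshold are hypothetical names chosen for illustration.

```python
from collections import Counter

def cdp_fold(trace_cdps):
    """Count how many traces fall in each CDP (the fold)."""
    return Counter(trace_cdps)

def fold_jumps(fold, max_step=1):
    """Return CDPs whose fold differs from the previous CDP by more than max_step."""
    cdps = sorted(fold)
    return [b for a, b in zip(cdps, cdps[1:])
            if abs(fold[b] - fold[a]) > max_step]

# Invented per-trace CDP numbers; a real list would come from the TRC order.
trace_cdps = [775, 776, 776, 777, 777, 777, 778, 779, 779, 779]
fold = cdp_fold(trace_cdps)
suspect = fold_jumps(fold)
```

In this invented data the fold builds up by one per CDP, drops abruptly at CDP 778, and recovers at 779, so those two CDPs are flagged for a closer look.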


Load Geometry to the Trace Headers

1. Build the following flow:

Editing Flow: 1.3-Inline Header Load

Add Delete Execute View Exit

SEG-Y Input

Type of storage to use: ---- Disk Image
Enter DISK file path name: ---- /misc_files/2d/segy_0_value_headers
----Default the rest of the parameters----

Inline Geom Header Load

Primary header to match database: ---- FFID
Secondary header to match database: ---- None
Match by valid trace number?: ---- No
Drop traces with NULL CDP headers?: ---- No
Drop traces with NULL receiver headers: ---- No
Verbose diagnostics?: ---- No

Disk Data Output

Output Dataset Filename: ---- Shots-with geometry
New, or Existing, File?: ---- New
Record length to output: ---- 0.
Trace sample format: ---- 16 bit
Skip primary disk storage?: ---- No

2. In SEG-Y Input, select Disk Image and enter the path given to you by your instructor for the raw shot dataset.

3. In Inline Geom Header Load, select FFID as the Primary and None as the Secondary headers to match the database. A trace is excluded from further processing if it is not described in the geometry.

4. In Disk Data Output, enter a name for a new output dataset.

5. Execute the flow.


6. Edit your flow 1.1-View Shots to check the trace headers of your dataset.

Editing Flow: 1.1-View Shots

Add Delete Execute View Exit

<SEG-Y Input> Disk Data Input

Select dataset: ---- Shots-with geometry
Trace read option: ---- Sort
Select Primary trace header entry: ---- SIN
Select secondary trace header entry: ---- OFFSET
Select order list for dataset: ---- *:*

Automatic Gain Control

----Default all parameters for this process----

Trace Display

Number of ENSEMBLES (line segments)/screen: ---- 2
Do you want to use variable trace spacing?: ---- Yes
----Default the remaining parameters----

7. Change the sort order as shown in the flow.

8. In the trace display use variable trace spacing to highlight the source gap in the shots.

9. While viewing the data in Trace Display, use the dx/dt icon to measure the first break velocity of a few shots. Write down this value as it will be used later in the Graphical Geometry QC section.
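The dx/dt measurement in step 9 is a slope: the change in offset divided by the change in first break time between two points along the first arrivals. A minimal sketch, assuming offsets in feet and times in milliseconds; the function name and the two picks are invented for illustration.

```python
def first_break_velocity(offset1_ft, time1_ms, offset2_ft, time2_ms):
    """Apparent velocity (ft/sec) from two first break picks.

    Hypothetical helper; offsets in feet, times in milliseconds.
    """
    dt_s = (time2_ms - time1_ms) / 1000.0
    if dt_s == 0:
        raise ValueError("picks must have different times")
    return (offset2_ft - offset1_ft) / dt_s

# Invented picks: first breaks at 100 ms (800 ft) and 300 ms (2400 ft).
velocity = first_break_velocity(800.0, 100.0, 2400.0, 300.0)
```

These invented picks span 1600 ft in 0.2 sec, giving about 8000 ft/sec.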


Graphical Geometry QC

Graphical Geometry QC* is a macro designed to quickly find mistakes in your geometry assignment. The process applies linear moveout to shots and splices multiple shots together in a vertical fashion based on receiver surface station. This display is often referred to as a Farr display.

[Figure: three panels labeled Shot (time axis t), LMO Shot, and Farr Display]

Mistakes in geometry assignment show up as obvious anomalies, such as the last panel in the Farr display. In other cases, you may find that your first break data is far from being flat, with your onset of energy coming in much later with longer offsets. Another indicator is when all first breaks tend to line up at 100 ms, but for one shot they line up at 200 ms. Check the geometry in these areas.
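The flattening behavior described above follows from the linear moveout arithmetic. The sketch below assumes the correction is t - |offset| / v plus a bulk shift, using the 8000 ft/sec velocity and 100 ms bulk shift that appear in this chapter's QC flow; the exact formula inside the macro is not given in the manual, and the picks are invented.

```python
def lmo_time_ms(t_ms, offset_ft, velocity_ft_s=8000.0, bulk_shift_ms=100.0):
    """Time after linear moveout plus a bulk shift (assumed formula).

    t_ms is the recorded time, offset_ft the (signed) shot-receiver offset.
    """
    return t_ms - abs(offset_ft) / velocity_ft_s * 1000.0 + bulk_shift_ms

# Invented first break picks (offset_ft, time_ms) traveling at 8000 ft/sec.
picks = [(-4000.0, 500.0), (-800.0, 100.0), (800.0, 100.0), (4000.0, 500.0)]
flattened = [lmo_time_ms(t, x) for x, t in picks]

# The same 500 ms arrival with a wrongly assigned offset of 3200 ft.
misplaced = lmo_time_ms(500.0, 3200.0)
```

With correct offsets every pick lands at the bulk shift time of 100 ms. The arrival with the wrongly assigned offset lands near 200 ms instead, which is the kind of anomaly the Farr display makes visible.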


QC your Geometry Assignment

1. Build the following flow:

Editing Flow: 1.4-Graphical Geometry QC

Add Delete Execute View Exit

Graphical Geometry QC*

Select input trace data file: ---- Shots-with geometry
SIN and SOU_SLOC range of dataset: ---- *:*
dB/sec gain value to apply: ---- 6.
Specify LMO velocity function(s): ---- 1:0:8000
Additional bulk shift: ---- 100
Maximum time for each spliced trace: ---- 400
Maximum number of shots (traces) to vertically splice: ---- 4
Resulting maximum number of traces per screen: ---- 139
Select display device: ---- This Screen
Scalar for sample value multiplication: ---- 1.
Trace scaling option: ---- Individual

2. Select your input dataset name.

3. Specify the LMO velocity function. An editor appears for specifying a velocity function; 1:0:8000 should work fine. In this example, we will enter one LMO velocity for the entire dataset. Therefore, we only need to specify one primary value (1) for the first shot, one absolute offset value (0 ft), and one velocity (8000 ft/sec).

4. Enter 4 for the Maximum number of shots to vertically splice. For a quick check of all the data, you could input all 20 shots instead of 4.

5. Set the maximum number of traces per screen to 139. This will cover the full spread 120 channels plus 5 extra shots 4 channels apart.

6. Select Individual for Trace scaling option, if you have any spikes in your data.


The spikes will bias the entire screen scaling scalar and cause many of the traces to appear to have zero amplitude.

7. Execute the flow using MB2. This process uses Screen Display for displaying your data, instead of Trace Display. When you execute with MB2, the data is automatically displayed. Use the Header tool icon to check vertically constant SRF_SLOC trace header values. Note what shot you are on. Look for anomalies, such as a back spread shifted 50-100 ms higher than a front spread, or severely undercorrected or overcorrected shots. Also, any reversed traces should remain at a constant surface location.

NOTE: If you find any mistakes you must go back to the spreadsheets and correct them. Then you will need to rebin. Finally, to get the proper trace headers loaded you need to rerun the inline header load flow.


Chapter Summary

Upon completion of this chapter you should be able to answer the following questions:

Do you understand what the Ordered Parameter Files represent?
Can you edit the OPFs via the Geometry Spreadsheet?
Can you view Trace Header values for Geometry Attributes?
Can you import Observer Data into the Geometry Spreadsheet?
Can you QC and edit Geometry via DBTools and XDB?
Do you understand ProMAX Sign Conventions?
Do you understand what a Pattern represents?
Do you understand the steps of Binning?
Can you graphically QC Geometry with Farr Displays?


Chapter 2

Full Extraction Geometry Assignment