CAQA5e ch1


The University of Adelaide, School of Computer Science

4 September 2013

Computer Architecture

A Quantitative Approach, Fifth Edition

Chapter 1 Fundamentals of Quantitative Design and Analysis

Copyright 2012, Elsevier Inc. All rights reserved.

Introduction

Computer Technology

- Performance improvements:
  - Improvements in semiconductor technology
    - Feature size, clock speed
  - Improvements in computer architectures
    - Enabled by HLL compilers, UNIX
    - Lead to RISC architectures
  - Together have enabled:
    - Lightweight computers
    - Productivity-based managed/interpreted programming languages


Single Processor Performance

[Figure: single-processor performance over time, annotated "RISC" and "Move to multi-processor"]


Current Trends in Architecture

- Cannot continue to leverage Instruction-Level parallelism (ILP)
  - Single processor performance improvement ended in 2003
- New models for performance:
  - Data-level parallelism (DLP)
  - Thread-level parallelism (TLP)
  - Request-level parallelism (RLP)
- These require explicit restructuring of the application


Classes of Computers

- Personal Mobile Device (PMD)
  - e.g. smart phones, tablet computers
  - Emphasis on energy efficiency and real-time
- Desktop Computing
  - Emphasis on price-performance
- Servers
  - Emphasis on availability, scalability, throughput
- Clusters / Warehouse Scale Computers
  - Used for "Software as a Service (SaaS)"
  - Emphasis on availability and price-performance
  - Sub-class: Supercomputers, emphasis: floating-point performance and fast internal networks
- Embedded Computers
  - Emphasis: price


Parallelism

- Classes of parallelism in applications:
  - Data-Level Parallelism (DLP)
  - Task-Level Parallelism (TLP)
- Classes of architectural parallelism:
  - Instruction-Level Parallelism (ILP)
  - Vector architectures/Graphics Processor Units (GPUs)
  - Thread-Level Parallelism
  - Request-Level Parallelism


Flynn's Taxonomy

- Single instruction stream, single data stream (SISD)
- Single instruction stream, multiple data streams (SIMD)
  - Vector architectures
  - Multimedia extensions
  - Graphics processor units
- Multiple instruction streams, single data stream (MISD)
  - No commercial implementation
- Multiple instruction streams, multiple data streams (MIMD)
  - Tightly-coupled MIMD
  - Loosely-coupled MIMD


Defining Computer Architecture

- "Old" view of computer architecture:
  - Instruction Set Architecture (ISA) design
  - i.e. decisions regarding:
    - registers, memory addressing, addressing modes, instruction operands, available operations, control flow instructions, instruction encoding
- "Real" computer architecture:
  - Specific requirements of the target machine
  - Design to maximize performance within constraints: cost, power, and availability
  - Includes ISA, microarchitecture, hardware


Trends in Technology

- Integrated circuit technology
  - Transistor density: 35%/year
  - Die size: 10-20%/year
  - Integration overall: 40-55%/year
- DRAM capacity: 25-40%/year (slowing)
- Flash capacity: 50-60%/year
  - 15-20X cheaper/bit than DRAM
- Magnetic disk technology: 40%/year
  - 15-25X cheaper/bit than Flash
  - 300-500X cheaper/bit than DRAM
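The annual rates on this slide compound, so it can help to convert them into doubling times. The following is a minimal Python sketch of that arithmetic. The helper names `doubling_time_years` and `growth_over` are illustrative and do not come from the slides, and the loop uses the lower bound wherever the slide gives a range.

```python
import math

def doubling_time_years(annual_rate):
    """Years needed to double at a compound annual rate (0.35 means 35%/year)."""
    return math.log(2) / math.log(1 + annual_rate)

def growth_over(annual_rate, years):
    """Total improvement factor after compounding for `years` years."""
    return (1 + annual_rate) ** years

# Rates quoted on the slide (lower bound where a range is given).
for name, rate in [("Transistor density", 0.35),
                   ("DRAM capacity", 0.25),
                   ("Flash capacity", 0.50),
                   ("Magnetic disk", 0.40)]:
    print(f"{name}: doubles in about {doubling_time_years(rate):.1f} years")
```

Under these assumptions, a 35%/year rate corresponds to a doubling time of a little over two years.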


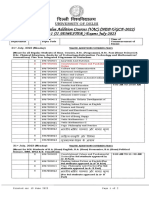

Bandwidth vs. Latency


Bandwidth and Latency

- Bandwidth or throughput
  - Total work done in a given time
  - 10,000-25,000X improvement for processors
  - 300-1200X improvement for memory and disks
- Latency or response time
  - Time between start and completion of an event
  - 30-80X improvement for processors
  - 6-8X improvement for memory and disks


Log-log plot of bandwidth and latency milestones


Transistors and Wires

- Feature size
  - Minimum size of transistor or wire in x or y dimension
  - 10 microns in 1971 to 0.022 microns in 2013
  - Transistor performance scales linearly
    - Wire delay does not improve with feature size!
  - Integration density scales quadratically
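The quadratic claim can be checked with the two feature sizes quoted on this slide. This is a rough sketch that considers only the geometric shrink and ignores changes in die size. The function names are illustrative assumptions, not terms from the slides.

```python
def linear_shrink(old_feature_um, new_feature_um):
    """Factor by which the minimum feature size shrank (both in microns)."""
    return old_feature_um / new_feature_um

def density_gain(old_feature_um, new_feature_um):
    """Transistors per unit area scale with the square of the linear shrink."""
    return linear_shrink(old_feature_um, new_feature_um) ** 2

# Feature sizes from the slide: 10 microns (1971) and 0.022 microns (2013).
shrink = linear_shrink(10.0, 0.022)
print(f"Linear shrink: about {shrink:.0f}x")
print(f"Density gain from feature size alone: about {density_gain(10.0, 0.022):,.0f}x")
```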


Trends in Power and Energy

Power and Energy

- Problem: Get power in, get power out
- Thermal Design Power (TDP)
  - Characterizes sustained power consumption
  - Used as target for power supply and cooling system
  - Lower than peak power, higher than average power consumption
- Clock rate can be reduced dynamically to limit power consumption
- Energy per task is often a better measurement


Dynamic Energy and Power

- Dynamic energy
  - Transistor switch from 0 → 1 or 1 → 0
  - $\tfrac{1}{2} \times \text{Capacitive load} \times \text{Voltage}^2$
- Dynamic power
  - $\tfrac{1}{2} \times \text{Capacitive load} \times \text{Voltage}^2 \times \text{Frequency switched}$
- Reducing clock rate reduces power, not energy
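The two relations above can be sketched in a few lines of Python to show why lowering only the clock rate cuts power but not energy per transition, while lowering voltage cuts both. The capacitance, voltage, and frequency values below are hypothetical placeholders chosen only to make the ratios visible. The 15% scaling factor is likewise an assumption for illustration.

```python
def dynamic_energy(capacitive_load, voltage):
    """Energy of one 0->1 or 1->0 transition: 1/2 x C x V^2."""
    return 0.5 * capacitive_load * voltage ** 2

def dynamic_power(capacitive_load, voltage, frequency):
    """Power when switching at `frequency`: 1/2 x C x V^2 x f."""
    return 0.5 * capacitive_load * voltage ** 2 * frequency

# Hypothetical baseline values (not from the slides).
C, V, F = 1e-9, 1.0, 3.0e9
SCALE = 0.85  # assumed 15% reduction

# Lower the clock rate only: power drops, energy per transition is unchanged.
clock_only_power_ratio = dynamic_power(C, V, F * SCALE) / dynamic_power(C, V, F)
clock_only_energy_ratio = dynamic_energy(C, V) / dynamic_energy(C, V)

# Lower voltage and frequency together: energy scales with V^2, power with V^2 x f.
energy_ratio = dynamic_energy(C, V * SCALE) / dynamic_energy(C, V)
power_ratio = dynamic_power(C, V * SCALE, F * SCALE) / dynamic_power(C, V, F)

print(f"Clock only:        power x{clock_only_power_ratio:.2f}, energy x{clock_only_energy_ratio:.2f}")
print(f"Voltage and clock: power x{power_ratio:.2f}, energy x{energy_ratio:.2f}")
```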


Power

- Intel 80386 consumed ~2 W
- 3.3 GHz Intel Core i7 consumes 130 W
- Heat must be dissipated from 1.5 x 1.5 cm chip
- This is the limit of what can be cooled by air


Reducing Power

- Techniques for reducing power:
  - Do nothing well
  - Dynamic Voltage-Frequency Scaling
  - Low power state for DRAM, disks
  - Overclocking, turning off cores


Static Power

- Static power consumption
  - $\text{Current}_{\text{static}} \times \text{Voltage}$
  - Scales with number of transistors
  - To reduce: power gating


Trends in Cost

- Cost driven down by learning curve
  - Yield
- DRAM: price closely tracks cost
- Microprocessors: price depends on volume
  - 10% less for each doubling of volume
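The "10% less for each doubling of volume" rule of thumb can be written as a simple power law. The sketch below is one possible reading of that rule, not a pricing model from the slides. The function name `unit_price` and the sample prices and volumes are hypothetical.

```python
import math

def unit_price(base_price, base_volume, volume, drop_per_doubling=0.10):
    """Price after scaling volume, losing `drop_per_doubling` per doubling."""
    doublings = math.log2(volume / base_volume)
    return base_price * (1 - drop_per_doubling) ** doublings

# Hypothetical part: 100 currency units each at a volume of 1,000.
for volume in (1_000, 2_000, 4_000, 8_000):
    print(f"volume {volume:>5}: about {unit_price(100.0, 1_000, volume):.1f} per unit")
```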


Integrated Circuit Cost

- Integrated circuit
- Bose-Einstein formula:
  - Defects per unit area = 0.016-0.057 defects per square cm (2010)
  - N = process-complexity factor = 11.5-15.5 (40 nm, 2010)
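The formulas on this slide were images and did not survive extraction. The sketch below assumes the usual textbook form of the cost model: dies per wafer from wafer and die geometry, die yield from the Bose-Einstein formula, and die cost as wafer cost divided by good dies. The function names, the 300 mm wafer, the 1.5 cm die, and the wafer cost are illustrative assumptions. The defect density and N are taken from inside the ranges quoted above.

```python
import math

def dies_per_wafer(wafer_diameter_cm, die_area_cm2):
    """Wafer area over die area, minus dies lost around the circular edge."""
    wafer_area = math.pi * (wafer_diameter_cm / 2) ** 2
    edge_loss = math.pi * wafer_diameter_cm / math.sqrt(2 * die_area_cm2)
    return wafer_area / die_area_cm2 - edge_loss

def die_yield(defects_per_cm2, die_area_cm2, n, wafer_yield=1.0):
    """Bose-Einstein form: wafer yield / (1 + defect density x die area)^N."""
    return wafer_yield / (1 + defects_per_cm2 * die_area_cm2) ** n

def cost_per_die(wafer_cost, wafer_diameter_cm, die_area_cm2, defects_per_cm2, n):
    """Wafer cost spread over the dies that are expected to work."""
    good_dies = dies_per_wafer(wafer_diameter_cm, die_area_cm2) * die_yield(
        defects_per_cm2, die_area_cm2, n)
    return wafer_cost / good_dies

# Assumed example: 30 cm wafer, 1.5 cm x 1.5 cm die, hypothetical wafer cost.
area = 1.5 * 1.5
print(f"Dies per wafer: {dies_per_wafer(30.0, area):.0f}")
print(f"Die yield:      {die_yield(0.031, area, 13.5):.2f}")
print(f"Cost per die:   {cost_per_die(5000.0, 30.0, area, 0.031, 13.5):.2f}")
```

Because yield falls off as a power of die area, doubling the die size in this model more than doubles the cost per good die.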


Dependability

- Module reliability
  - Mean time to failure (MTTF)
  - Mean time to repair (MTTR)
  - Mean time between failures (MTBF) = MTTF + MTTR
  - Availability = MTTF / MTBF
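The availability definition above translates directly into code. This is a minimal sketch. The MTTF and MTTR figures in the example are hypothetical and chosen only to show the arithmetic.

```python
def mtbf(mttf_hours, mttr_hours):
    """Mean time between failures = MTTF + MTTR."""
    return mttf_hours + mttr_hours

def availability(mttf_hours, mttr_hours):
    """Fraction of time the module is in service: MTTF / MTBF."""
    return mttf_hours / mtbf(mttf_hours, mttr_hours)

# Hypothetical module: fails on average every 1,000,000 hours, 24 hours to repair.
print(f"Availability: {availability(1_000_000, 24):.6f}")
```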


Measuring Performance

- Typical performance metrics:
  - Response time
  - Throughput
- Speedup of X relative to Y
  - $\text{Execution time}_Y / \text{Execution time}_X$
- Execution time
  - Wall clock time: includes all system overheads
  - CPU time: only computation time
- Benchmarks
  - Kernels (e.g. matrix multiply)
  - Toy programs (e.g. sorting)
  - Synthetic benchmarks (e.g. Dhrystone)
  - Benchmark suites (e.g. SPEC06fp, TPC-C)
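The distinction between wall clock time and CPU time, and the speedup ratio, can be illustrated with Python's standard `time` module. This is a sketch only: `toy_kernel` is a made-up workload, and `measure` and `speedup` are illustrative helper names rather than anything defined in the slides.

```python
import time

def speedup(exec_time_y, exec_time_x):
    """Speedup of X relative to Y = Execution time_Y / Execution time_X."""
    return exec_time_y / exec_time_x

def measure(func):
    """Return (wall clock seconds, CPU seconds) for one call of func."""
    wall_start = time.perf_counter()   # elapsed real time, includes waiting
    cpu_start = time.process_time()    # CPU time charged to this process only
    func()
    cpu_elapsed = time.process_time() - cpu_start
    wall_elapsed = time.perf_counter() - wall_start
    return wall_elapsed, cpu_elapsed

def toy_kernel():
    """Hypothetical compute-bound workload."""
    return sum(i * i for i in range(200_000))

wall, cpu = measure(toy_kernel)
print(f"wall clock: {wall:.4f} s, CPU: {cpu:.4f} s")
print(f"Y takes 10 s, X takes 4 s: speedup of X is {speedup(10.0, 4.0)}")
```

For a workload that sleeps or waits on I/O, the wall clock figure would grow while the CPU figure would stay small.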


- Look at SPEC handout Fig. 1.16


Principles

Principles of Computer Design

- Take Advantage of Parallelism
  - e.g. multiple processors, disks, memory banks, pipelining, multiple functional units
- Principle of Locality
  - Reuse of data and instructions (more on next slides)
- Focus on the Common Case
  - Amdahl's Law (more on next slides)


The Principle of Locality

- The Principle of Locality:
  - Programs access a relatively small portion of the address space at any instant of time.
- Two Different Types of Locality:
  - Temporal Locality (Locality in Time): If an item is referenced, it will tend to be referenced again soon (e.g., loops, reuse)
  - Spatial Locality (Locality in Space): If an item is referenced, items whose addresses are close by tend to be referenced soon (e.g., straight-line code, array access)
- Last 30 years, hardware relied on locality for memory performance

[Figure: processor (P), cache ($), and memory (MEM)]
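The effect of locality on a cache can be seen with a very small simulation. The sketch below models a tiny fully associative cache with LRU replacement. The cache size, block size, and array dimensions are hypothetical and deliberately small, and the model ignores everything a real cache does beyond hit or miss.

```python
from collections import OrderedDict

def hit_rate(addresses, num_blocks=8, block_size=64):
    """Hit rate of a tiny fully associative LRU cache over a byte-address trace."""
    cache = OrderedDict()  # block number -> None, least recently used first
    hits = 0
    for addr in addresses:
        block = addr // block_size
        if block in cache:
            hits += 1
            cache.move_to_end(block)       # mark as most recently used
        else:
            cache[block] = None
            if len(cache) > num_blocks:
                cache.popitem(last=False)  # evict the least recently used block
    return hits / len(addresses)

ROWS, COLS, ELEM = 64, 64, 8  # hypothetical 64x64 array of 8-byte elements

# Spatial locality: walk memory in address order.
row_major = [(r * COLS + c) * ELEM for r in range(ROWS) for c in range(COLS)]
# Poor spatial locality: consecutive accesses are a full row (512 bytes) apart.
col_major = [(r * COLS + c) * ELEM for c in range(COLS) for r in range(ROWS)]
# Temporal locality: a loop that keeps touching the same few words.
loop = [0, 8, 16, 24] * 1000

print(f"row-major traversal:    {hit_rate(row_major):.3f}")
print(f"column-major traversal: {hit_rate(col_major):.3f}")
print(f"tight loop:             {hit_rate(loop):.3f}")
```

With these parameters the row-major walk reuses each 64-byte block for eight consecutive elements, while the column-major walk evicts every block before returning to it.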


Levels of the Memory Hierarchy

| Level | Capacity | Access time | Cost |
|---|---|---|---|
| CPU registers | 100s of bytes | 300-500 ps (0.3-0.5 ns) | |
| L1 and L2 cache | 10s-100s of KBytes | ~1 ns to ~10 ns | $1000s/GByte |
| Main memory | GBytes | 80 ns-200 ns | ~$100/GByte |
| Disk | 10s of TBytes | 10 ms (10,000,000 ns) | ~$1/GByte |
| Tape | infinite | sec-min | ~$1/GByte |

| Staging between | Transfer unit | Managed by | Unit size |
|---|---|---|---|
| Registers and L1 cache | Instr. operands | prog./compiler | 1-8 bytes |
| L1 cache and L2 cache | Blocks | cache cntl | 32-64 bytes |
| L2 cache and memory | Blocks | cache cntl | 64-128 bytes |
| Memory and disk | Pages | OS | 4K-8K bytes |
| Disk and tape | Files | user/operator | Mbytes |

Upper levels are faster; lower levels are larger.


Focus on the Common Case

- Common sense guides computer design
  - Since it's engineering, common sense is valuable
- In making a design trade-off, favor the frequent case over the infrequent case
  - E.g., the instruction fetch and decode unit is used more frequently than the multiplier, so optimize it first
  - E.g., if a database server has 50 disks per processor, storage dependability dominates system dependability, so optimize it first
- Frequent case is often simpler and can be done faster than the infrequent case
  - E.g., overflow is rare when adding two numbers, so improve performance by optimizing the more common case of no overflow
  - May slow down overflow, but overall performance is improved by optimizing for the normal case
- What is the frequent case, and how much is performance improved by making that case faster? => Amdahl's Law

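Amdahl's Law

$$\text{ExTime}_{\text{new}} = \text{ExTime}_{\text{old}} \times \left[ (1 - \text{Fraction}_{\text{enhanced}}) + \frac{\text{Fraction}_{\text{enhanced}}}{\text{Speedup}_{\text{enhanced}}} \right]$$

$$\text{Speedup}_{\text{overall}} = \frac{\text{ExTime}_{\text{old}}}{\text{ExTime}_{\text{new}}} = \frac{1}{(1 - \text{Fraction}_{\text{enhanced}}) + \dfrac{\text{Fraction}_{\text{enhanced}}}{\text{Speedup}_{\text{enhanced}}}}$$

Best you could ever hope to do:

$$\text{Speedup}_{\text{maximum}} = \frac{1}{1 - \text{Fraction}_{\text{enhanced}}}$$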
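Amdahl's Law is easy to evaluate numerically. The sketch below implements the overall speedup and its upper bound. The function names and the example (40% of execution time enhanced by a factor of 10) are illustrative assumptions.

```python
def amdahl_speedup(fraction_enhanced, speedup_enhanced):
    """Overall speedup when `fraction_enhanced` of the time runs `speedup_enhanced` times faster."""
    return 1.0 / ((1.0 - fraction_enhanced) + fraction_enhanced / speedup_enhanced)

def amdahl_limit(fraction_enhanced):
    """Upper bound on overall speedup as the enhancement becomes infinitely fast."""
    return 1.0 / (1.0 - fraction_enhanced)

# Hypothetical enhancement: 40% of execution time sped up 10x.
print(f"Overall speedup: {amdahl_speedup(0.4, 10.0):.4f}")
print(f"Best possible:   {amdahl_limit(0.4):.4f}")
```

Even a very large speedup of the enhanced portion cannot push the overall result past the limit set by the unenhanced fraction.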


Principles of Computer Design

- The Processor Performance Equation
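The equation itself was an image on this slide and is missing here. Assuming the usual textbook form, CPU time = Instruction count x Cycles per instruction (CPI) x Clock cycle time, which is the same as Instruction count x CPI / Clock rate. The sketch below evaluates it for a hypothetical program. The function name and the numbers are illustrative.

```python
def cpu_time(instruction_count, cpi, clock_rate_hz):
    """CPU time in seconds = instruction count x CPI / clock rate."""
    return instruction_count * cpi / clock_rate_hz

# Hypothetical program: 2 billion instructions, CPI of 1.5, 3 GHz clock.
print(f"CPU time: {cpu_time(2e9, 1.5, 3e9):.2f} s")
```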


- Different instruction types having different CPIs
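When instruction types have different CPIs, the overall CPI is the average of the per-type CPIs weighted by how often each type occurs. The sketch below shows that calculation. The instruction mix and per-type CPI values are hypothetical, and `overall_cpi` is an illustrative name.

```python
def overall_cpi(mix):
    """Weighted CPI from (fraction of instructions, CPI) pairs that sum to 1."""
    total_fraction = sum(fraction for fraction, _ in mix)
    if abs(total_fraction - 1.0) > 1e-9:
        raise ValueError("instruction fractions must sum to 1")
    return sum(fraction * cpi for fraction, cpi in mix)

# Hypothetical mix: (fraction of executed instructions, CPI for that type).
mix = [
    (0.5, 1.0),  # ALU operations
    (0.3, 2.0),  # loads and stores
    (0.2, 3.0),  # branches
]
print(f"Overall CPI: {overall_cpi(mix):.2f}")
```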
