05 Unit5

Uploaded by John Arthur

Fundamentals of Algorithms

Unit 5

Dynamic Programming

Structure
5.1 Introduction
    Objectives
5.2 Dynamic Programming Strategy
5.3 Multistage Graphs
5.4 All Pair Shortest Paths
5.5 Travelling Salesman Problem
5.6 Summary
5.7 Terminal Questions
5.8 Answers

5.1 Introduction

In the previous unit, we discussed the greedy method. When the optimal solutions to subproblems must be retained in order to avoid recomputing their values, we use dynamic programming instead. Dynamic programming is an algorithm design method that can be used when the solution to a problem can be viewed as the result of a sequence of decisions.

The principle of optimality states that an optimal sequence of decisions has the property that whatever the initial state and decision are, the remaining decisions must constitute an optimal decision sequence with regard to the state resulting from the first decision.

The essential difference between the greedy method and dynamic programming is that in the greedy method only one decision sequence is ever generated. In dynamic programming, many decision sequences may be generated. However, sequences containing suboptimal subsequences cannot be optimal and so are not generated.

Objectives

After studying this unit, you should be able to:
- apply dynamic programming to multistage graphs
- solve the all pairs shortest paths problem using dynamic programming
- solve the travelling salesman problem using dynamic programming.

Sikkim Manipal University


5.2 Dynamic Programming Strategy

Because of the principle of optimality, decision sequences containing suboptimal subsequences are not considered. Although the total number of different decision sequences is exponential in the number of decisions (if there are $d$ choices for each of the $n$ decisions to be made, then there are $d^n$ possible decision sequences), dynamic programming algorithms often have polynomial complexity.

Another important feature of the dynamic programming approach is that optimal solutions to subproblems are retained so as to avoid recomputing their values. The use of these tabulated values makes it natural to recast the recursive equations into an iterative algorithm.

Self Assessment Question
1. Dynamic programming algorithms often have a ________.

5.3 Multistage Graphs

A multistage graph $G = (V, E)$ is a directed graph in which the vertices are partitioned into $k \ge 2$ disjoint sets $V_i$, $1 \le i \le k$. In addition, if $\langle u, v \rangle$ is an edge in $E$, then $u \in V_i$ and $v \in V_{i+1}$ for some $i$, $1 \le i < k$. The sets $V_1$ and $V_k$ are such that $|V_1| = |V_k| = 1$. Let $s$ and $t$, respectively, be the vertices in $V_1$ and $V_k$. The vertex $s$ is the source, and $t$ the sink. Let $c(i, j)$ be the cost of edge $\langle i, j \rangle$. The cost of a path from $s$ to $t$ is the sum of the costs of the edges on the path. The multistage graph problem is to find a minimum-cost path from $s$ to $t$. Each set $V_i$ defines a stage in the graph. Because of the constraints on $E$, every path from $s$ to $t$ starts in stage 1, goes to stage 2, then to stage 3, then to stage 4, and so on, and eventually terminates in stage $k$.


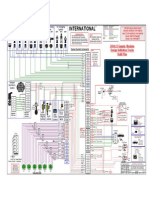

Fig. 5.1: A minimum-cost s to t path is indicated by the broken edges.

Many problems can be formulated as multistage graph problems. We give only one example. Consider a resource allocation problem in which $n$ units of resource are to be allocated to $r$ projects. If $j$, $0 \le j \le n$, units of the resource are allocated to project $i$, then the resulting net profit is $N(i, j)$. The problem is to allocate the resource to the $r$ projects in such a way as to maximize total net profit.

This problem can be formulated as an $(r+1)$-stage graph problem as follows. Stage $i$, $1 \le i \le r$, represents project $i$. There are $n+1$ vertices $V(i, j)$, $0 \le j \le n$, associated with stage $i$, $2 \le i \le r$. Stages 1 and $r+1$ each have one vertex, $V(1, 0) = s$ and $V(r+1, n) = t$, respectively. Vertex $V(i, j)$, $2 \le i \le r$, represents the state in which a total of $j$ units of resource have been allocated to projects $1, 2, \ldots, i-1$. The edges in $G$ are of the form $\langle V(i, j), V(i+1, l) \rangle$ for all $j \le l$ and $1 \le i < r$. The edge $\langle V(i, j), V(i+1, l) \rangle$, $j \le l$, is assigned a weight or cost of $N(i, l-j)$ and corresponds to allocating $l-j$ units of resource to project $i$, $1 \le i < r$. In addition, $G$ has edges of the type $\langle V(r, j), V(r+1, n) \rangle$. Each such edge is assigned a weight of $\max_{0 \le p \le n-j} \{N(r, p)\}$. It should be easy to see that an optimal allocation of resources is defined by a maximum-cost $s$ to $t$ path. This is easily converted into a minimum-cost problem by changing the sign of all the edge costs.

A dynamic programming formulation for a $k$-stage graph problem is obtained by first noticing that every $s$ to $t$ path is the result of a sequence of $k-2$ decisions. The $i$th decision involves determining which vertex in $V_{i+1}$, $1 \le i \le k-2$, is to be on the path. It is easy to see that the principle of optimality holds. Let $p(i, j)$ be a minimum-cost path from vertex $j$ in $V_i$ to vertex $t$. Let $cost(i, j)$ be the cost of this path. Then, using the forward approach, we obtain

$$cost(i, j) = \min_{\substack{l \in V_{i+1} \\ \langle j, l \rangle \in E}} \{ c(j, l) + cost(i+1, l) \} \qquad (5.1)$$
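As a small illustration of the resource allocation formulation and the recurrence above (with max in place of min, since profit is maximized), the following Python sketch may help. The function name `allocate`, the 0-based project indexing, and the profit table are assumptions made for this example only; they do not come from the unit.

```python
def allocate(profit, n):
    """Maximize total net profit when n units are split among r projects.

    profit[i][u] plays the role of N(i+1, u): the net profit from giving
    u units to project i+1 (projects are 0-indexed here, an assumption).
    Returns (best_total_profit, units_given_to_each_project).
    """
    r = len(profit)
    # best[i][j]: largest profit obtainable from projects i..r-1 when j units
    # have already been handed to projects 0..i-1 (the state V(i+1, j)).
    best = [[0] * (n + 1) for _ in range(r + 1)]
    choice = [[0] * (n + 1) for _ in range(r)]
    for i in range(r - 1, -1, -1):          # stages in reverse order
        for j in range(n + 1):
            best_val, best_units = float("-inf"), 0
            for units in range(n - j + 1):  # edge to state V(i+2, j + units)
                val = profit[i][units] + best[i + 1][j + units]
                if val > best_val:
                    best_val, best_units = val, units
            best[i][j] = best_val
            choice[i][j] = best_units
    # Follow the recorded decisions from the source state V(1, 0).
    allocation, used = [], 0
    for i in range(r):
        allocation.append(choice[i][used])
        used += allocation[-1]
    return best[0][0], allocation


# Hypothetical profit table: 3 projects, 3 units of resource.
example_profit = [
    [0, 3, 5, 6],
    [0, 4, 5, 5],
    [0, 2, 6, 7],
]
total, plan = allocate(example_profit, 3)
print(total, plan)
```

Because `best[r][j]` is 0 for every `j`, the last project automatically receives the most profitable amount among the units that remain, which mirrors the weight $\max_{0 \le p \le n-j} \{N(r, p)\}$ on the edges into $t$.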

Before writing an algorithm for a general $k$-stage graph, let us impose an ordering on the vertices in $V$. This ordering makes it easier to write the algorithm. We require that the $n$ vertices in $V$ are indexed 1 through $n$. Indices are assigned in order of stages. First, $s$ is assigned index 1, then vertices in $V_2$ are assigned indices, then vertices from $V_3$, and so on. Vertex $t$ has index $n$. Hence, indices assigned to vertices in $V_{i+1}$ are larger than those assigned to vertices in $V_i$. As a result of this indexing scheme, cost and d can be computed in the order $n-1, n-2, \ldots, 1$, where $d(i, j)$ is a vertex $l$ that attains the minimum in the recurrence for $cost(i, j)$. The first subscript in cost, p and d only identifies the stage number and is omitted in the algorithm. The resulting algorithm in pseudocode is FGraph, given in Algorithm 5.1.

Algorithm 5.1
1. Algorithm FGraph(G, k, n, p)
2. // The input is a k-stage graph G = (V, E) with n vertices
3. // indexed in order of stages. E is a set of edges and c[i, j]
4. // is the cost of <i, j>. p[1:k] is a minimum-cost path.
5. {
6.     cost[n] := 0.0;
7.     for j := n-1 to 1 step -1 do
8.     { // Compute cost[j].
9.         Let r be a vertex such that <j, r> is an edge
10.        of G and c[j, r] + cost[r] is minimum;
11.        cost[j] := c[j, r] + cost[r];
12.        d[j] := r;
13.    }
14.    // Find a minimum-cost path.
15.    p[1] := 1; p[k] := n;
16.    for j := 2 to k-1 do p[j] := d[p[j-1]];
17. }


Algorithm 5.1: Multistage graph pseudocode corresponding to the forward approach
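The forward approach can be sketched in Python roughly as follows. This is an illustration rather than the unit's own code: the adjacency-list layout `adj`, the function name `fgraph`, and the sample 4-stage graph are assumptions, and every vertex other than the sink is assumed to have at least one outgoing edge.

```python
def fgraph(adj, k, n):
    """Forward approach for a k-stage graph with vertices 1..n.

    adj[j] is a list of (r, w) pairs, one per edge <j, r> of cost w.
    Vertices are indexed in order of stages: 1 is the source, n the sink.
    Returns (cost_of_best_path, list_of_vertices_on_that_path).
    """
    cost = [0.0] * (n + 1)  # cost[j]: cheapest j-to-sink cost (index 0 unused)
    d = [0] * (n + 1)       # d[j]: vertex that follows j on that cheapest path
    for j in range(n - 1, 0, -1):
        # Choose the edge <j, r> that minimizes c[j, r] + cost[r].
        best, nxt = min((w + cost[r], r) for r, w in adj[j])
        cost[j], d[j] = best, nxt
    # Recover the path by following d from the source, one vertex per stage.
    path = [1]
    for _ in range(2, k):
        path.append(d[path[-1]])
    path.append(n)
    return cost[1], path


# Hypothetical 4-stage graph: V1={1}, V2={2,3}, V3={4,5}, V4={6}.
example_adj = {
    1: [(2, 2), (3, 1)],
    2: [(4, 3), (5, 1)],
    3: [(4, 6), (5, 7)],
    4: [(6, 1)],
    5: [(6, 4)],
}
best_cost, best_path = fgraph(example_adj, 4, 6)
print(best_cost, best_path)
```

Each adjacency list is scanned once, so the loop over `j` does work proportional to the number of vertices plus the number of edges.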

The complexity analysis of FGraph is fairly straightforward. If $G$ is represented by its adjacency lists, then r in line 9 of Algorithm 5.1 can be found in time proportional to the degree of vertex j. Hence, if $G$ has $|E|$ edges, then the time for the for loop of line 7 is $\Theta(|V| + |E|)$. The time for the for loop of line 16 is $\Theta(k)$. Hence, the total time is $\Theta(|V| + |E|)$. In addition to the space needed for the input, space is needed for cost[ ], d[ ], and p[ ].

The multistage graph problem can also be solved using the backward approach. Let $bp(i, j)$ be a minimum-cost path from vertex $s$ to a vertex $j$ in $V_i$. Let $bcost(i, j)$ be the cost of $bp(i, j)$. From the backward approach we obtain

$$bcost(i, j) = \min_{\substack{l \in V_{i-1} \\ \langle l, j \rangle \in E}} \{ bcost(i-1, l) + c(l, j) \} \qquad (5.2)$$

Algorithm 5.2
1. Algorithm BGraph(G, k, n, p)
2. // Same function as FGraph
3. {
4.     bcost[1] := 0.0;
5.     for j := 2 to n do
6.     { // Compute bcost[j].
7.         Let r be such that <r, j> is an edge of
8.         G and bcost[r] + c[r, j] is minimum;
9.         bcost[j] := bcost[r] + c[r, j];
10.        d[j] := r;
11.    }
12.    // Find a minimum-cost path.
13.    p[1] := 1; p[k] := n;
14.    for j := k-1 to 2 step -1 do p[j] := d[p[j+1]];
15. }

Algorithm 5.2: Multistage graph pseudocode corresponding to the backward approach
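A matching Python sketch of the backward approach is given below. As before, the names (`bgraph`, `radj`) and the sample graph are illustrative assumptions; `radj` holds inverse adjacency lists, and every vertex other than the source is assumed to have at least one incoming edge.

```python
def bgraph(radj, k, n):
    """Backward approach for a k-stage graph with vertices 1..n.

    radj[j] is a list of (r, w) pairs, one per edge <r, j> of cost w
    (inverse adjacency lists). Vertex 1 is the source, n the sink.
    Returns (cost_of_best_path, list_of_vertices_on_that_path).
    """
    bcost = [0.0] * (n + 1)  # bcost[j]: cheapest source-to-j cost
    d = [0] * (n + 1)        # d[j]: vertex that precedes j on that path
    for j in range(2, n + 1):
        # Choose the edge <r, j> that minimizes bcost[r] + c[r, j].
        best, pred = min((bcost[r] + w, r) for r, w in radj[j])
        bcost[j], d[j] = best, pred
    # Recover the path by walking back from the sink, one vertex per stage.
    path = [n]
    for _ in range(k - 1, 1, -1):
        path.append(d[path[-1]])
    path.append(1)
    path.reverse()
    return bcost[n], path


# Hypothetical 4-stage graph (V1={1}, V2={2,3}, V3={4,5}, V4={6}),
# stored by incoming edges.
example_radj = {
    2: [(1, 2)],
    3: [(1, 1)],
    4: [(2, 3), (3, 6)],
    5: [(2, 1), (3, 7)],
    6: [(4, 1), (5, 4)],
}
back_cost, back_path = bgraph(example_radj, 4, 6)
print(back_cost, back_path)
```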


This algorithm has the same complexity as FGraph provided $G$ is now represented by its inverse adjacency lists (i.e., for each vertex $v$, we have a list of vertices $w$ such that $\langle w, v \rangle \in E$). It should be easy to see that both FGraph and BGraph work correctly even on a more generalized version of multistage graphs. In this generalization, the graph is permitted to have edges $\langle u, v \rangle$ such that $u \in V_i$, $v \in V_j$ and $i < j$.

Self Assessment Question
2. A multistage graph $G = (V, E)$ is a directed graph in which the vertices are ________ into $k \ge 2$ disjoint sets $V_i$, $1 \le i \le k$.

5.4 All Pair Shortest Paths

Let $G = (V, E)$ be a directed graph with $n$ vertices and let cost be a cost adjacency matrix for $G$ such that $cost(i, i) = 0$, $1 \le i \le n$. Then $cost(i, j)$ is the length (or cost) of edge $\langle i, j \rangle$ if $\langle i, j \rangle \in E(G)$ and $cost(i, j) = \infty$ if $i \ne j$ and $\langle i, j \rangle \notin E(G)$. The all pairs shortest path problem is to determine a matrix $A$ such that $A(i, j)$ is the length of a shortest path from $i$ to $j$.

Let us examine a shortest $i$ to $j$ path in $G$, $i \ne j$. This path originates at vertex $i$, goes through some intermediate vertices (possibly none), and terminates at vertex $j$. We can assume that this path contains no cycles, for if there is a cycle, then it can be deleted without increasing the path length (assuming $G$ has no cycle of negative length). If $k$ is an intermediate vertex on this shortest path, then the subpaths from $i$ to $k$ and from $k$ to $j$ must be shortest paths from $i$ to $k$ and $k$ to $j$ respectively. Otherwise, the $i$ to $j$ path is not of minimum length. So the principle of optimality holds. This alerts us to the prospect of using dynamic programming.

If $k$ is the intermediate vertex with highest index, then the $i$ to $k$ path is a shortest $i$ to $k$ path in $G$ going through no vertex with index greater than $k-1$. Similarly, the $k$ to $j$ path is a shortest $k$ to $j$ path in $G$ going through no vertex of index greater than $k-1$. We can regard the construction of a shortest $i$ to $j$ path as first requiring a decision as to which is the highest indexed intermediate vertex $k$. Once this decision has been made, we need to find two shortest paths, one from $i$ to $k$ and the other from $k$ to $j$. Neither of these may go through a vertex with index greater than $k-1$. Using $A^k(i, j)$ to represent the length of a shortest path from $i$ to $j$ going through no vertex of index greater than $k$, we obtain


$$A(i, j) = \min \Big\{ \min_{1 \le k \le n} \{ A^{k-1}(i, k) + A^{k-1}(k, j) \},\ cost(i, j) \Big\} \qquad (5.3)$$

Clearly, $A^0(i, j) = cost(i, j)$, $1 \le i \le n$, $1 \le j \le n$. We can obtain a recurrence for $A^k(i, j)$ using an argument similar to that used before. A shortest path from $i$ to $j$ going through no vertex higher than $k$ either goes through vertex $k$ or it does not. If it does, $A^k(i, j) = A^{k-1}(i, k) + A^{k-1}(k, j)$. If it does not, then no intermediate vertex has index greater than $k-1$. Hence, $A^k(i, j) = A^{k-1}(i, j)$. Combining, we get

$$A^k(i, j) = \min \{ A^{k-1}(i, j),\ A^{k-1}(i, k) + A^{k-1}(k, j) \}, \quad k \ge 1 \qquad (5.4)$$

Recurrence (5.4) can be solved for $A^n$ by first computing $A^1$, then $A^2$, then $A^3$, and so on. Since there is no vertex in $G$ with index greater than $n$, $A(i, j) = A^n(i, j)$.

Algorithm 5.3
Algorithm AllPaths(cost, A, n)
1. // cost[1:n, 1:n] is the cost adjacency matrix of a graph
2. // with n vertices; A[i, j] is the cost of a shortest path from
3. // vertex i to vertex j. cost[i, i] = 0.0, for 1 <= i <= n.
4. {
5.     for i := 1 to n do
6.         for j := 1 to n do
7.             A[i, j] := cost[i, j]; // Copy cost into A.
8.     for k := 1 to n do
9.         for i := 1 to n do
10.            for j := 1 to n do
11.                A[i, j] := min(A[i, j], A[i, k] + A[k, j]);
12. }

Algorithm 5.3: Function to compute the lengths of shortest paths

Function AllPaths computes $A^n(i, j)$. The computation is done in place, so the superscript on $A$ is not needed. The reason this computation can be carried out in place is that $A^k(i, k) = A^{k-1}(i, k)$ and $A^k(k, j) = A^{k-1}(k, j)$. Hence, when $A^k$ is formed, the $k$th column and row do not change. Consequently, when $A^k(i, j)$ is computed in line 11 of Algorithm 5.3, $A(i, k) = A^{k-1}(i, k) = A^k(i, k)$ and $A(k, j) = A^{k-1}(k, j) = A^k(k, j)$. So, the old values on which the new values are based do not change on this iteration.
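The in-place computation can be sketched in Python as shown below. The procedure is commonly known as the Floyd-Warshall algorithm; the function name `all_paths`, the 0-based indexing, the use of `math.inf` for missing edges, and the 3-vertex cost matrix are assumptions made for this illustration.

```python
import math


def all_paths(cost):
    """Return the matrix of shortest path lengths between all vertex pairs.

    cost is an n x n list of lists with cost[i][i] == 0 and math.inf where
    no edge exists. Vertices are 0-indexed here, unlike the 1-based text.
    """
    n = len(cost)
    a = [row[:] for row in cost]  # copy cost into a, leaving the input intact
    for k in range(n):            # allow vertex k as an intermediate vertex
        for i in range(n):
            for j in range(n):
                through_k = a[i][k] + a[k][j]
                if through_k < a[i][j]:
                    a[i][j] = through_k
    return a


# Hypothetical 3-vertex graph; there is no edge from vertex 2 to vertex 1.
example_cost = [
    [0, 4, 11],
    [6, 0, 2],
    [3, math.inf, 0],
]
shortest = all_paths(example_cost)
for row in shortest:
    print(row)
```

In the example, the missing edge from vertex 2 to vertex 1 ends up with length 7, obtained by going through vertex 0.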


Let $M = \max \{ cost(i, j) \mid \langle i, j \rangle \in E(G) \}$. It is easy to see that $A^n(i, j) \le (n-1)M$ whenever there is a path from $i$ to $j$. From the working of AllPaths, it is clear that if $\langle i, j \rangle \notin E(G)$ and $i \ne j$, then we can initialize $cost(i, j)$ to any number greater than $(n-1)M$. If, at termination, $A(i, j) > (n-1)M$, then there is no directed path from $i$ to $j$ in $G$.

The time needed by AllPaths (Algorithm 5.3) is especially easy to determine because the looping is independent of the data in the matrix $A$. Line 11 is iterated $n^3$ times, and so the time for AllPaths is $\Theta(n^3)$.

Self Assessment Question
3. The time needed by AllPaths is easy to determine because the looping is ________ of the data in the matrix $A$.
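The $(n-1)M$ bound discussed in this section can be tried out with a short Python sketch. It assumes non-negative edge costs (so that adding further edges to a path never lowers its length); the function name, the edge-dictionary input format, and the sample edges are hypothetical.

```python
def all_paths_with_sentinel(edges, n):
    """All pairs shortest paths using a finite stand-in for "no edge".

    edges maps (i, j) -> non-negative cost for vertices 0..n-1.
    Returns (a, reachable), where reachable[i][j] is False exactly when
    a[i][j] exceeds (n - 1) * M, i.e. no directed i-to-j path exists.
    """
    m = max(edges.values(), default=0)
    bound = (n - 1) * m   # no simple path can be longer than this
    big = bound + 1       # any value greater than (n-1)M would do
    a = [[0 if i == j else edges.get((i, j), big) for j in range(n)]
         for i in range(n)]
    for k in range(n):
        for i in range(n):
            for j in range(n):
                a[i][j] = min(a[i][j], a[i][k] + a[k][j])
    reachable = [[a[i][j] <= bound for j in range(n)] for i in range(n)]
    return a, reachable


# Hypothetical graph: a single chain 0 -> 1 -> 2.
lengths, reach = all_paths_with_sentinel({(0, 1): 4, (1, 2): 2}, 3)
print(lengths)
print(reach)
```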

5.5 Travelling Salesman Problem

Let $G = (V, E)$ be a directed graph with edge costs $c_{ij}$. The variable $c_{ij}$ is defined such that $c_{ii} = 0$, $c_{ij} > 0$ for all $i \ne j$, and $c_{ij} = \infty$ if $\langle i, j \rangle \notin E$. Let $|V| = n$ and assume $n > 1$. A tour of $G$ is a directed simple cycle that includes every vertex in $V$. The cost of the tour is the sum of the costs of the edges on the tour. The travelling salesperson problem is to find a tour of minimum cost.

Without loss of generality, a tour can be regarded as a simple path that starts and ends at vertex 1. Every tour consists of an edge $\langle 1, k \rangle$ for some $k \in V - \{1\}$ and a path from vertex $k$ to vertex 1. The path from vertex $k$ to vertex 1 goes through each vertex in $V - \{1, k\}$ exactly once. It is easy to see that if the tour is optimal, then the path from $k$ to 1 must be a shortest $k$ to 1 path going through all vertices in $V - \{1, k\}$. Hence, the principle of optimality holds.

Let $g(i, S)$ be the length of a shortest path starting at vertex $i$, going through all vertices in $S$, and terminating at vertex 1. The function $g(1, V - \{1\})$ is the length of an optimal salesperson tour. From the principle of optimality it follows that

$$g(1, V - \{1\}) = \min_{2 \le k \le n} \{ c_{1k} + g(k, V - \{1, k\}) \} \qquad (5.5)$$

Generalizing (5.5), we obtain (for $i \notin S$)

$$g(i, S) = \min_{j \in S} \{ c_{ij} + g(j, S - \{j\}) \} \qquad (5.6)$$

Equation (5.5) can be solved for $g(1, V - \{1\})$ if we know $g(k, V - \{1, k\})$ for all choices of $k$. The $g$ values can be obtained by using (5.6). Clearly, $g(i, \emptyset) = c_{i1}$, $1 \le i \le n$.


Hence, we can use (5.6) to obtain $g(i, S)$ for all $S$ of size 1. Then, we can obtain $g(i, S)$ for $S$ with $|S| = 2$, and so on. When $|S| < n-1$, the values of $i$ and $S$ for which $g(i, S)$ is needed are such that $i \ne 1$, $1 \notin S$, and $i \notin S$.

Let $N$ be the number of $g(i, S)$'s that have to be computed before (5.5) can be used to compute $g(1, V - \{1\})$. For each value of $|S|$ there are $n-1$ choices for $i$. The number of distinct sets $S$ of size $k$ not including 1 and $i$ is $\binom{n-2}{k}$. Hence,

$$N = \sum_{k=0}^{n-2} (n-1) \binom{n-2}{k} = (n-1)\, 2^{n-2}$$

An algorithm that finds an optimal tour by using (5.5) and (5.6) will require $\Theta(n^2 2^n)$ time, since the computation of $g(i, S)$ with $|S| = k$ requires $k-1$ comparisons when solving (5.6). This is better than enumerating all $n!$ different tours to find the best one. The most serious drawback of this dynamic programming solution is the space needed, $O(n 2^n)$. This is too large even for modest values of $n$.

Self Assessment Question
4. A ________ of $G$ is a directed simple cycle that includes every vertex in $V$.
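Recurrences (5.5) and (5.6) can be evaluated directly with a table keyed by $(i, S)$, as in the Python sketch below. This scheme is often called the Held-Karp algorithm. The function name `tsp`, the 0-based indexing (vertex 0 plays the role of vertex 1), the use of `frozenset` keys, and the 4-vertex cost matrix are assumptions made for this illustration.

```python
from itertools import combinations


def tsp(c):
    """Length of an optimal tour that starts and ends at vertex 0.

    c[i][j] is the cost of edge <i, j>; len(c) must be at least 2.
    Returns (tour_length, number_of_g_values_stored).
    """
    n = len(c)
    g = {}
    # Base case: g(i, empty set) = c[i][0].
    for i in range(1, n):
        g[(i, frozenset())] = c[i][0]
    # Fill in g(i, S) for |S| = 1, 2, ..., n-2 using recurrence (5.6).
    for size in range(1, n - 1):
        for subset in combinations(range(1, n), size):
            s = frozenset(subset)
            for i in range(1, n):
                if i not in s:
                    g[(i, s)] = min(c[i][j] + g[(j, s - {j})] for j in s)
    # Recurrence (5.5): choose the first vertex k visited after vertex 0.
    rest = frozenset(range(1, n))
    best = min(c[0][k] + g[(k, rest - {k})] for k in rest)
    return best, len(g)


# Hypothetical 4-vertex cost matrix.
example_costs = [
    [0, 10, 15, 20],
    [5, 0, 9, 10],
    [6, 13, 0, 12],
    [8, 8, 9, 0],
]
tour_length, table_entries = tsp(example_costs)
print(tour_length, table_entries)
```

For this matrix the number of stored $g$ values is $(n-1)2^{n-2} = 3 \cdot 4 = 12$, in line with the count $N$ derived above.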

5.6 Summary

In this unit, we studied dynamic programming, an algorithm design method that can be used when the solution to a problem can be viewed as the result of a sequence of decisions. An optimal sequence of decisions has the property that whatever the initial state and decision are, the remaining decisions must constitute an optimal decision sequence with regard to the state resulting from the first decision; this is called the principle of optimality. We applied this method to multistage graphs, the all pairs shortest paths problem, and the travelling salesman problem.

5.7 Terminal Questions

1. Explain the concept of multistage graphs.
2. Explain the concept of the travelling salesman problem.
3. Write an algorithm to obtain a minimum-cost s to t path.


5.8 Answers

Self Assessment Questions
1. Polynomial complexity
2. partitioned
3. independent
4. tour

Terminal Questions
1. A multistage graph $G = (V, E)$ is a directed graph in which the vertices are partitioned into $k \ge 2$ disjoint sets $V_i$, $1 \le i \le k$. In addition, if $\langle u, v \rangle$ is an edge in $E$, then $u \in V_i$ and $v \in V_{i+1}$ for some $i$, $1 \le i < k$. The sets $V_1$ and $V_k$ are such that $|V_1| = |V_k| = 1$. Let $s$ and $t$, respectively, be the vertices in $V_1$ and $V_k$. The vertex $s$ is the source, and $t$ the sink. Let $c(i, j)$ be the cost of edge $\langle i, j \rangle$. The cost of a path from $s$ to $t$ is the sum of the costs of the edges on the path. The multistage graph problem is to find a minimum-cost path from $s$ to $t$. Each set $V_i$ defines a stage in the graph. (Refer to Section 5.3)
2. Refer to Section 5.5
3. Refer to Section 5.3
