Neural Network Time Series Prediction

With Matlab

By

Thorolf Horn Tonjum

School of Computing and Technology,

University of Sunderland, The Informatics Centre,

St Peter's Campus, St Peter's Way,

Sunderland, SR6 0DD,

United Kingdom

Email: thorolf.tonjum@sunderland.ac.uk

Introduction

This paper describes a neural network time series prediction project, applied to forecasting the American S&P 500 stock index.

679 weeks of raw data are preprocessed and used to train a neural network.

The project is built with Matlab (Mathworks Inc.). Matlab is used for processing and preprocessing the data.

A prediction error of 0.00446 (mean squared error) is achieved.

One of the major goals of the project is to visualize how the network adapts to the real index course by approximation. This is achieved by training the network in series of 500 epochs each, showing the change of the approximation (green color) after each series.

Remember to push the 'Train 500 Epochs' button at least 4 times to get good results and a feel for the training. You might have to restart the whole program several times before it 'lets loose' and achieves a good fit; about one out of 5 reruns produces a good fit.

To run/rerun the program in Matlab, type:

>> preproc

>> tx

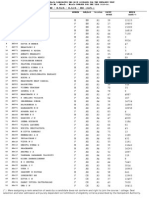

Dataset

678 weeks of American S&P 500 index data.

14 basic forecasting variables.

The 14 basic variables are:

1. S&P week highest index.
2. S&P week lowest index.
3. NYSE week volume.
4. NYSE advancing volume / declining volume.
5. NYSE advancing / declining issues.
6. NYSE new highs / new lows.
7. NASDAQ week volume.
8. NASDAQ advancing volume / declining volume.
9. NASDAQ advancing / declining issues.
10. NASDAQ new highs / new lows.
11. 3 months treasury bill.
12. 30 years treasury bond yield.
13. Gold price.
14. S&P weekly closing course.

These are all strong economic indicators.

The indicators have not been subject to re-indexation or other alterations of the measurement procedures, so the dataset covers an unobstructed span from January 1980 to December 1992.

Interest rates and inflation are not included, as they are reflected in the 30 years treasury bond yield and the price of gold. The dataset provides an ample model of the macro economy.

Preprocessing

The weekly change in closing course is used as output target for the network.

The 14 basic variables are transformed into 54 features by taking the first 13 variables and producing:

I. The change since last week (delta).
II. The second power (x^2).
III. The third power (x^3).

Using the course change from last week as an input variable the week after then gives 54 feature variables (the 14 original static variables included): 14 + 3 x 13 + 1 = 54.
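The feature construction can be sketched as follows. This is a minimal Python illustration, not the code in Preproc.m: the function name `build_features`, the column order (closing course last), the choice to take powers of the raw values rather than of the deltas, and the exact definition of the lagged course change are all assumptions.

```python
def build_features(weeks):
    """weeks: list of 14-value rows in the order of the variable list above,
    so index 13 is the weekly closing course. Returns one 54-value row per
    week that has two predecessors."""
    features = []
    for t in range(2, len(weeks)):
        current, previous = weeks[t], weeks[t - 1]
        first13 = current[:13]
        deltas = [c - p for c, p in zip(first13, previous[:13])]
        squares = [v ** 2 for v in first13]
        cubes = [v ** 3 for v in first13]
        # Assumption: "course change from last week" = close[t-1] - close[t-2].
        lagged_change = previous[13] - weeks[t - 2][13]
        features.append(list(current) + deltas + squares + cubes + [lagged_change])
    return features


# Tiny synthetic example (made-up numbers, not S&P data).
weeks = [[float(w + v) for v in range(14)] for w in range(4)]
rows = build_features(weeks)
print(len(rows), len(rows[0]))
```

Each row holds the 14 originals, 13 deltas, 13 squares, 13 cubes and one lagged change, which is where the count of 54 comes from.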

All input variables are then subjected to normalization, which ensures that each input variable has a mean of zero and a standard deviation of 1. [Matlab command: prestd]
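As a minimal sketch of this step for a single variable, in Python rather than Matlab: the helper name `standardize` is hypothetical, and using the sample standard deviation (division by N - 1) is an assumption about what prestd does.

```python
from statistics import mean, stdev


def standardize(column):
    """Shift to zero mean and scale to unit (sample) standard deviation."""
    m, s = mean(column), stdev(column)
    return [(v - m) / s for v in column], m, s


values = [2.0, 4.0, 4.0, 6.0]  # made-up numbers
scaled, m, s = standardize(values)
print(scaled)
```

The mean and standard deviation are returned as well, because new data must be transformed with the statistics of the training data.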

The dimensionality of the data is then reduced to 28 variables after a principal component analysis with 0.001 as threshold. The threshold is set low since we want to preserve as much data as possible for the Elman network to work on. [Matlab command: prepca]
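The role of the threshold can be illustrated with a small Python sketch. It assumes the threshold is the minimum fraction of the total variance that a principal component must contribute to be kept; the component variances below are made up, and the eigen-decomposition itself is left out.

```python
def components_to_keep(variances, min_fraction=0.001):
    """Indices of the principal components whose share of the total
    variance is at least min_fraction."""
    total = sum(variances)
    return [i for i, v in enumerate(variances) if v / total >= min_fraction]


# Hypothetical component variances, largest first.
variances = [5.0, 3.0, 1.5, 0.4995, 0.0005]
kept = components_to_keep(variances)
print(kept)
```

With a threshold this low, only components that carry almost no variance are dropped.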

We then scale the variables (including the target data) to fit the [-1,1] range, as we use tansig output functions. [Matlab command: premnmx]
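A minimal Python sketch of this range scaling and its inverse, for one variable. The helper names `scale_to_unit_range` and `unscale` are hypothetical, and the numbers are made up.

```python
def scale_to_unit_range(column):
    """Map the minimum to -1 and the maximum to +1, linearly in between."""
    lo, hi = min(column), max(column)
    return [2.0 * (v - lo) / (hi - lo) - 1.0 for v in column], lo, hi


def unscale(scaled, lo, hi):
    """Inverse mapping, needed to read network outputs in original units."""
    return [(s + 1.0) * (hi - lo) / 2.0 + lo for s in scaled]


changes = [-12.0, -3.0, 0.0, 8.0]  # made-up weekly changes
scaled, lo, hi = scale_to_unit_range(changes)
restored = unscale(scaled, lo, hi)
print(scaled)
```

Since tansig can only produce values between -1 and 1, the targets must lie in that range for the network to be able to reach them.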

See the Matlab file 'Preproc.m' for further details.

Choice of Network architecture and algorithms.

We are doing time series prediction, but since we are forecasting a stock index we rely on current economic data just as much as on the lagged data from the time series being forecast. This gives us a wider spectrum of neural model options.

Multi Level Perceptron networks (MLP), Tapped Delay-line networks (TDNN), and recurrent network models can be used.

In our case, detecting cyclic patterns becomes a priority, together with good multivariate pattern approximation ability.

The Elman network is selected for its ability to detect both temporal and spatial patterns. Choosing a recurrent network is favorable, as it accumulates historic data in its recurrent connections.

Using an Elman network for this problem domain demands a high number of hidden neurons: 35 is found to be the best trade-off in our example, whereas if we used a normal MLP network, around 16 hidden neurons would be enough.

The Elman network needs more hidden nodes to respond to the complexity in the data, as well as having to approximate both temporal and spatial patterns.

We train the network with the gradient descent training algorithm, enhanced with momentum and an adaptive learning rate. This enables the network to climb, performance-wise, past points where gradient descent training algorithms without an adaptive learning rate would get stuck.

We use the Matlab learning function learnpn for learning, as we need robustness to deal with some quite large outliers in the data.

Maximum validation failures [net.trainParam.max_fail = 25] is set arbitrarily high, but this gives the learning algorithm a better ability to escape local minima and continue to improve where it would otherwise get stuck.

The momentum is also set high (0.9) to ensure a high impact of the previous weight change. This speeds up the gradient descent, helps keep us out of local minima, and resists memorization.

The learning rate is initially set relatively high at 0.2. This is possible because of the high momentum, and because it is controlled by the adaptive learning rate rules of the Matlab training method traingdx.

We choose purelin as the transfer function for the output of the hidden layer, as this provided more approximation power, and tansig for the output layer, as we scaled the target data to fit the [-1,1] range.
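To make the architecture concrete, here is a minimal Python sketch of one time step of an Elman network with a linear (purelin) hidden layer and a tanh (tansig) output. The function name `elman_step`, the single output and the toy weights are assumptions for illustration; the real network has 28 inputs and 35 hidden neurons and is built in Tx.m.

```python
import math


def elman_step(x, context, w_in, w_ctx, b_hidden, w_out, b_out):
    """One time step. Returns (output, new_context), where the new context
    is the hidden activation that is fed back at the next step."""
    hidden = []
    for j in range(len(b_hidden)):
        total = b_hidden[j]
        total += sum(w * xi for w, xi in zip(w_in[j], x))
        total += sum(w * c for w, c in zip(w_ctx[j], context))
        hidden.append(total)  # purelin: the activation is the weighted sum
    output = math.tanh(b_out + sum(w * h for w, h in zip(w_out, hidden)))  # tansig
    return output, hidden


# Toy network: 2 inputs, 2 hidden neurons, 1 output (made-up weights).
w_in = [[0.5, -0.2], [0.1, 0.3]]
w_ctx = [[0.4, 0.0], [0.0, 0.4]]
b_hidden = [0.0, 0.0]
w_out = [1.0, -1.0]
b_out = 0.0

context = [0.0, 0.0]
outputs = []
for x in [[1.0, 0.0], [1.0, 0.0]]:  # the same input twice
    y, context = elman_step(x, context, w_in, w_ctx, b_hidden, w_out, b_out)
    outputs.append(y)
print(outputs)
```

The same input gives a different output at the second step, because the context units carry the previous hidden state forward. This is the recurrent memory the text relies on.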

The weight initialization scheme initzero is used to start the weights off from zero. This provides the best end results, but heightens the trial and error factor, resulting in having to restart the program between 5 and 8 times to get a "lucky" fit.

Once you have a "lucky" fit, training the network for 3-5 x 500 epochs usually yields results in the 0.004 mse range.

Maximum performance increase is set to 1.24, giving the algorithm some leeway to test out alternative routes before getting called back on the path. [net.trainParam.max_perf_inc = 1.24]
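The interplay of momentum, adaptive learning rate and maximum performance increase can be sketched as a single update step. This is a simplified Python illustration in the spirit of traingdx, not the toolbox code: the function name `gdx_step` is hypothetical, the increase and decrease factors 1.05 and 0.7 are assumed values, and resetting the momentum on a rejected step is an assumption of this sketch.

```python
def gdx_step(w, step, lr, loss, loss_fn, grad_fn,
             mc=0.9, lr_inc=1.05, lr_dec=0.7, max_perf_inc=1.24):
    """One update of gradient descent with momentum and adaptive learning
    rate. Returns (weights, step, learning_rate, loss) after the update."""
    grad = grad_fn(w)
    # Momentum blends the previous step with the new gradient step.
    new_step = [mc * s - (1.0 - mc) * lr * g for s, g in zip(step, grad)]
    candidate = [wi + si for wi, si in zip(w, new_step)]
    candidate_loss = loss_fn(candidate)

    if candidate_loss > max_perf_inc * loss:
        # Error grew by more than the allowed ratio: discard the step,
        # shrink the learning rate and (assumption) drop the momentum.
        return w, [0.0] * len(w), lr * lr_dec, loss
    if candidate_loss < loss:
        # Error went down: allow a slightly larger learning rate next time.
        lr *= lr_inc
    return candidate, new_step, lr, candidate_loss


# Toy one-weight problem: loss(w) = w^2 with gradient 2w.
loss_fn = lambda w: w[0] ** 2
grad_fn = lambda w: [2.0 * w[0]]

accepted = gdx_step([1.0], [0.0], 0.2, 1.0, loss_fn, grad_fn)
rejected = gdx_step([1.0], [0.0], 100.0, 1.0, loss_fn, grad_fn)
print(accepted)
print(rejected)
```

A step that raises the error by less than 24% is still accepted, which is the leeway described above. Only a larger increase calls the algorithm back and lowers the learning rate.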

With well over 400 training cases to work with, 35 hidden neurons and 28 input variables, we get 35 x 28 = 980 hidden layer weights, which is well below the rule of thumb number 4000 (10 x Cases).
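The arithmetic can be checked with a few lines of Python. The count of 980 covers the input-to-hidden weights only. The fuller count below, which adds the recurrent context weights, the biases and a single output neuron, is our own assumption about the network layout and not a figure from the project.

```python
inputs, hidden, outputs, cases = 28, 35, 1, 400

input_to_hidden = inputs * hidden    # the count used in the text
context_to_hidden = hidden * hidden  # recurrent (context) connections
hidden_biases = hidden
hidden_to_output = hidden * outputs
output_biases = outputs

total = (input_to_hidden + context_to_hidden + hidden_biases
         + hidden_to_output + output_biases)
budget = 10 * cases                  # rule of thumb from the text

print(input_to_hidden, total, budget)
```

Even with the recurrent weights and biases included, the total stays below the rule of thumb figure used in the text.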

Results in the 0.004 mse range support the conclusion that the model choice was not the worst possible. Additional results could have come from adding lagged data, like exponentially smoothed averages from different time frames and with different smoothing factors, efficiently accumulating memory of large time scales.

Integrating a tapped delay-line setup could also have been beneficial.

But these alternatives would have added to the curse of dimensionality, probably not yielding great benefits in return, especially as long as the recurrent memory of the Elman network seemed to perform with ample sufficiency.
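For reference, an exponentially smoothed average of the kind mentioned here could be computed as in the following Python sketch. It was not part of the project; the function name `exponential_smoothing` and the smoothing factors are hypothetical.

```python
def exponential_smoothing(series, alpha):
    """s[0] = x[0]; s[t] = alpha * x[t] + (1 - alpha) * s[t-1]."""
    smoothed = [series[0]]
    for value in series[1:]:
        smoothed.append(alpha * value + (1.0 - alpha) * smoothed[-1])
    return smoothed


closes = [1.0, 2.0, 3.0]  # made-up numbers
fast = exponential_smoothing(closes, 0.5)  # short memory
slow = exponential_smoothing(closes, 0.1)  # long memory
print(fast, slow)
```

A small smoothing factor gives a long memory and a large one a short memory. Each extra smoothed series would add one more input variable per original variable.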

The training set of 400 weeks was taken from the start of the data, then came the 140 weeks of test set, and finally 139 weeks of validation data. In effect the network approximates data on the 0.004 mse level more than 5 years (279 weeks) into the future.

Training & Visualization.

The data is, as described above, divided in the classic 60 20 20 format for training-set, testing-set and validation-set.
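A minimal Python sketch of such a chronological split, using the set sizes given earlier (400, 140 and 139 weeks). The function name `chronological_split` is hypothetical, and the list of week numbers stands in for the real feature rows.

```python
def chronological_split(samples, n_train=400, n_test=140):
    """Split in time order, without shuffling: training first, then test,
    and whatever remains as validation."""
    train = samples[:n_train]
    test = samples[n_train:n_train + n_test]
    validation = samples[n_train + n_test:]
    return train, test, validation


weeks = list(range(679))  # placeholder for 679 weekly samples
train, test, validation = chronological_split(weeks)
print(len(train), len(test), len(validation))
```

Keeping the time order means the test and validation sets lie entirely in the future of the training set.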

The approximation is visualized by the actual course (blue) versus the approximation (green).

This is done for the training set, the testing set, and the validation set.

This clearly demonstrates how the neural net is approximating the data.

Errors are displayed as red bars in the bottom of the charts.

The training is done by training 500 epochs, then displaying the results, then training a new 500 epochs, and so forth. Seeing the approximation 'live' gives interesting insights into how the algorithm adapts, and how changes in the model affect adaptation.

Push the button to train a new 500 epochs.

The effect of the adaptive learning rate is quite intriguing, specifically the effect on the performance.

Dynamic learning rate, controlled by adaptation rules.

Vivid performance change by the changing learning rate.

The correlation plot gives ample insight into how closely the model is mapping the data.

To see this push the 'correlation plot' button.

The 'Sum(abs(errors))' displays the sum of all the absolutes of the errors, as a steadfast and unfiltered measurement.
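The error measures used in this paper can be written out as a short Python sketch. The function names are hypothetical, the numbers are made up, and treating the correlation plot as a Pearson correlation between targets and network outputs is an assumption.

```python
import math


def mean_squared_error(targets, predictions):
    return sum((t - p) ** 2 for t, p in zip(targets, predictions)) / len(targets)


def sum_abs_errors(targets, predictions):
    return sum(abs(t - p) for t, p in zip(targets, predictions))


def correlation(targets, predictions):
    """Pearson correlation between targets and predictions."""
    mt = sum(targets) / len(targets)
    mp = sum(predictions) / len(predictions)
    cov = sum((t - mt) * (p - mp) for t, p in zip(targets, predictions))
    var_t = sum((t - mt) ** 2 for t in targets)
    var_p = sum((p - mp) ** 2 for p in predictions)
    return cov / math.sqrt(var_t * var_p)


targets = [0.1, -0.2, 0.3, 0.0]      # made-up scaled weekly changes
predictions = [0.0, -0.1, 0.4, 0.1]  # made-up network outputs

mse = mean_squared_error(targets, predictions)
sae = sum_abs_errors(targets, predictions)
r = correlation(targets, predictions)
print(mse, sae, r)
```

The mean squared error lets a few large misses dominate, while the sum of absolute errors weights every miss in proportion to its size.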

Bibliography

Valluru Rao 1993 "C++ Neural Networks".

Neural Network Toolbox User's Guide. 4th edition.

Appendix A. The Matlab code:

Preproc.m : Preprocessing the data.

Tx.m : Setting up the network.

Gui.m : Training and displaying the network.
