Target Tracking and Following of A Mobile Robot Using Infrared Sensors


Abstract: An algorithm for target tracking and following on a mobile robot is reported. Target detection, including both direction and distance, is achieved with a low-cost infrared transmitter and receiver pair. Although in a following task the target already performs obstacle avoidance to a certain degree, a simple but effective obstacle avoidance algorithm is implemented on the follower to increase motion safety. In addition, an odometry mode is designed to handle situations in which the target is lost. Experiments are carried out to evaluate the performance of the proposed algorithm. With the integrated setup, the follower can track and follow the target in various complex environments at different designated following distances and speed ranges.

Keywords: human following, infrared sensor, ultrasonic sensor, obstacle avoidance

I. INTRODUCTION

The rapid development of robotics in recent years has raised several new issues regarding human-robot interaction (HRI). How to design a robot that can perform a variety of tasks while adequately serving and accompanying humans in human environments is gradually becoming an important question. Among these issues, designing a mobile platform that can follow human locomotion is a popular topic. To achieve this goal, the follower should be capable of detecting the direction and distance of the target, and it should be capable of moving effectively and safely without colliding with obstacles, so that it follows the target successfully.

Several methods can achieve target detection or following, and vision is one of the popular ones. Examples include using a single camera to capture the leg motion of the human target [1], using a single camera to capture infrared signals transmitted from the target [2], and using stereo vision to identify the target and obstacles [3]. Target tracking achieved by infrared (IR) transmitter and receiver pairs together with fuzzy logic was reported in [4]. Inertial sensors were also utilized to compute the target speed and orientation [5]. RFID was adopted to track the target using RF intensity information [6]. To obtain precise motion information, an algorithm capable of recognizing human intention by using a laser range finder (LRF) was reported [7]. An LRF is in general a sensor with high precision, wide detection range, and high reliability. In addition, human tracking by fusing multiple sensors was reported as well, for example fusion of the signals from an LRF and a vision sensor [8] or fusion of infrared sensors and sonar [9]. Successful target tracking includes two subtasks: one is to detect the target, and the other is to perform adequate locomotion. For the latter, obstacle avoidance is necessary in most scenarios, and the potential field method is widely adopted, where the follower is attracted to the target and pushed away from the obstacles.

The relation between the target and the follower varies according to the application. Imagine that in the future you may have an automatic following suitcase, so you no longer need to pull it when traveling. Or you may have an automatic following shopping cart, golf cart, or library chair, which frees your hands so you can enjoy your activities. A suitcase is in general a personal belonging, so a long-term relationship exists between the target and the follower. In this scenario vision is adequate because (i) the user (i.e., the target) is not required to wear additional equipment, which is preferable, and (ii) the recognition of the target by the vision system can be trained and learned through repeated usage. In contrast, a shopping cart, golf cart, or library chair belongs to another owner, so the target and the follower have only a short-term relationship. In this situation, a transmitter-receiver based following design is more effective because this method in general does not require data training.

Previously, we reported on the design of a target detection module using a low-cost IR transmitter and receiver pair, based on the directional and invisible nature of IR transmission. A primitive following algorithm was also reported. The IR device does not need data training, so it is suitable for target following scenarios where the target and the follower have a short-term relationship. Here, with an extensive revision of the usage of the ultrasonic sensors for obstacle avoidance and of the overall algorithm, we report on robust target tracking and following of a mobile robot. A simple method is proposed to achieve obstacle avoidance suitable for the following task. With the proposed algorithm and system integration, the robot can track and follow the target at various distance and speed settings, and can pass and turn in narrow corridors and complex human environments. In addition, the odometry navigation mode allows the robot to follow the target path while the signal from the target is obstructed.

The paper is organized as follows: Section II briefly reviews the design of the target detection module. Section III presents the locomotion of the follower, and Section IV describes the obstacle avoidance algorithm utilized on the follower. Section V reports the results of the experimental evaluation, and Section VI concludes the work.

Wei-Shun Yu, Geng-Ping Ren, Chia-Hung Tsai, Youg Jen Wen, and Pei-Chun Lin

Department of Mechanical Engineering, National Taiwan University, Taipei, Taiwan

Corresponding e-mail: peichunlin@ntu.edu.tw

The 43rd Intl. Symp. on Robotics (ISR2012), Taipei, Taiwan, Aug. 29-31, 2012

II. BRIEF REVIEW OF THE TARGET DETECTION MODULE

To achieve the target following task, the follower must know the relative position between the target and itself. This information includes two states, the direction and the distance. More specifically, the position of the target can be represented in the polar coordinates of the coordinate system built on the follower, $(\phi_o, L_o)$, where $\phi = 0$ represents the nominal heading of the follower.

The IR transmitter and receiver are chosen to detect the direction of the target, $\phi_o$, because IR light is in principle directional and invisible to humans. The transmitter is installed on the target and the receiver on the follower. Since the device is intended to be used in normal environments where many other infrared signals may exist, the signals exchanged between the transmitter and the receiver are coded according to a specific protocol, which in practice is handled by a commercial transmitter-receiver IC pair. Though the IR light signal itself is directional, commercial IR transmitters and receivers are usually mounted behind optical lenses to increase the transmitting and receiving angles. To make the signals transmitted from the target omnidirectional, several transmitters are installed facing different directions. In the human following task demonstrated in this paper, the transmitters are mounted on a belt as shown in Fig. 1(a). In contrast, to prevent the commercial IR receivers from receiving IR signals from unwanted directions, a custom housing for the IR receivers is built as shown in Fig. 1(b).

A bank of nine receivers is adopted to cover the front 180-degree detection range in the final design. On most occasions only one receiver senses the signals from the transmitter, and at most two receivers can sense the signals simultaneously. Assuming no obstacle impedes the light traveling from the transmitter to the receiver, the direction of the target $\phi_o$ can be computed as

$$\phi_o = \frac{\sum_i S_i \, i}{\sum_i S_i}, \qquad (1)$$

where

$$i \in [80 \ \ 60 \ \ 40 \ \ 20 \ \ 0 \ \ -20 \ \ -40 \ \ -60 \ \ -80]$$

represents the physical directions in degrees in the follower's coordinate system, and

$$S_i = \begin{cases} 1 & \text{triggered} \\ 0 & \text{else} \end{cases} \qquad (2)$$

represents the status of the IR receiver. Though nine terms are summed in (1), note that at most two of the $S_i$ are 1 (i.e., receiver triggered) and the others are 0. Consequently, the resolution of $\phi_o$ is 10 degrees.
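As an illustration of equations (1) and (2), the following minimal sketch averages the directions of the triggered receivers. The function name `target_direction` and the choice to return `None` when no receiver is triggered are assumptions made for this example; they are not taken from the paper.

```python
# Physical receiver directions in degrees, as listed for equation (1).
RECEIVER_ANGLES_DEG = [80, 60, 40, 20, 0, -20, -40, -60, -80]


def target_direction(states):
    """Return the target direction in degrees, or None if no receiver fired.

    `states` holds nine 0/1 flags (S_i in equation (2)), ordered like
    RECEIVER_ANGLES_DEG. Returning None is an assumption of this sketch.
    """
    if len(states) != len(RECEIVER_ANGLES_DEG):
        raise ValueError("expected one state per receiver")
    triggered = sum(states)
    if triggered == 0:
        return None  # no signal: equation (1) is undefined
    weighted = sum(s * a for s, a in zip(states, RECEIVER_ANGLES_DEG))
    return weighted / triggered
```

With two adjacent receivers triggered (for example the 20-degree and 0-degree ones), the result falls halfway between them, which is where the 10-degree resolution comes from.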

Two IR distance sensors are installed on a small RC servo motor to detect the distance between the target and the follower, as shown in Fig. 1(c). One is for short range and the other is for long range. After the follower knows the direction of the target, $\phi_o$, the RC servo quickly rotates the IR distance sensors to aim in that direction, so the distance between the target and the follower, $L_o$, can be obtained. The RC servo is not strictly necessary, since the equivalent rotation can be achieved by the motion of the follower itself. However, turning the servo rather than the follower spares the follower from high-frequency rotation or swinging, so it can move along a smoother, low-frequency trajectory.

In short, the state of the target relative to the follower, $(\phi_o, L_o)$, is obtained. The next question is how to generate adequate motion of the follower to follow the target.

III. LOCOMOTION OF THE FOLLOWER

During the following motion, the follower is programmed to face the target directly and to follow the target at a designated distance. Thus, at any instant with $(\phi_o, L_o)$ provided by the detection device, the desired setting is $\phi_o^* = 0^\circ$ and $L_o^* = \text{constant}$. Generally, the normal social distance between people is about 122-366 cm. Therefore, the designated distance is set to 1 m or 2 m.

The control goal is to minimize the difference between the current value and the desired one:

$$\begin{cases} \Delta\phi = \phi_o - \phi_o^* \\ \Delta L = L_o - L_o^* \end{cases} \qquad (3)$$

Fig. 1. (a) Photo of the belt with transmitters worn by the target; (b) configuration of nine IR receivers installed inside the semicircle-shaped housing; (c) photo of the target detection device.

Fig. 2. The maximum forward speed (a) and turning speed (b) versus the errors of target tracking.

The position of the target $(\phi_o, L_o)$ changes as the target moves, and the follower updates the new goal and tracks the target. This mode is hereafter referred to as the normal mode. To handle occasions where the detection module does not obtain new states (i.e., the receivers do not receive any signal from the transmitters), the follower has a built-in odometry mode which moves the follower to the last sensed position of the target. During this blind motion, if the detection module senses the target, the follower shifts back to the normal mode automatically. If no signal from the transmitters appears in the meantime, the follower stops at the last sensed position and enters the search mode, where the robot rotates in place to search for the signals from the IR transmitters. Because the IR receivers cover the front 180 degrees, the rotation of the follower enables the search for the target in all directions around the follower. If the search still fails to locate the target, the follower stops and waits at the last sensed position. If the target is found, the follower switches back to the normal mode and tracks the target.
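The switching between the normal, odometry, and search modes described above can be sketched as a small state machine. This is only an illustrative reading of the text: the names `Mode` and `next_mode`, the explicit `WAIT` state, and the rule that any received signal returns the follower to the normal mode (including from the waiting state) are assumptions of this sketch.

```python
from enum import Enum


class Mode(Enum):
    NORMAL = "normal"      # target sensed, follow it directly
    ODOMETRY = "odometry"  # signal lost, drive to last sensed position
    SEARCH = "search"      # rotate in place looking for the signal
    WAIT = "wait"          # search failed, stay at last sensed position


def next_mode(mode, signal_received, reached_goal=False, search_done=False):
    """Return the mode for the next control cycle.

    `reached_goal` means the follower is inside the small area around the
    last sensed target position; `search_done` means a full in-place
    rotation finished without finding the target. Both flags are
    hypothetical inputs introduced for this sketch.
    """
    if signal_received:
        return Mode.NORMAL
    if mode is Mode.NORMAL:
        return Mode.ODOMETRY
    if mode is Mode.ODOMETRY:
        return Mode.SEARCH if reached_goal else Mode.ODOMETRY
    if mode is Mode.SEARCH:
        return Mode.WAIT if search_done else Mode.SEARCH
    return Mode.WAIT
```

Keeping the transition rule in one pure function makes it easy to check each case in isolation before it is connected to the sensors and motors.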

A simple kinematic model is built into the follower to track the motions of the target and the follower. Without loss of generality, assume the current state of the follower is $[(x_i, y_i), \theta_i]$, where the first two values and the last value represent the position and the orientation of the follower at time stamp $i$, respectively, as shown in Fig. 2. For a two-wheeled differential-drive mobile robot, the incremental movements of the robot in the forward direction, $\Delta s$, and the rotational direction, $\Delta\theta$, are

$$\Delta s = \frac{\Delta s_L + \Delta s_R}{2}, \qquad \Delta\theta = \frac{\Delta s_L - \Delta s_R}{b}, \qquad (4)$$

where $\Delta s_L$ and $\Delta s_R$ are the incremental travel distances of the left and right wheels during time stamp $i$ and $b$ is the distance between the two wheels. Thus, the state of the robot (i.e., the follower) at the next time stamp $i+1$ based on the odometry can be computed as

$$[(x_{i+1}, y_{i+1}), \theta_{i+1}] = [(x_i + \Delta x, \ y_i + \Delta y), \ \theta_i + \Delta\theta], \qquad (5)$$

where

$$\Delta x = \Delta s \cos(\theta_i + \Delta\theta/2) \quad \text{and} \quad \Delta y = \Delta s \sin(\theta_i + \Delta\theta/2).$$
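The odometry update of equations (4) and (5) can be written in a few lines. The sketch below assumes angles in radians and wheel increments given as travelled distances; the function name `odometry_step` and its argument names are illustrative only.

```python
import math


def odometry_step(x, y, theta, ds_left, ds_right, wheel_base):
    """Advance the follower pose by one time stamp using equations (4)-(5).

    ds_left / ds_right: distance travelled by each wheel in this step.
    wheel_base: distance b between the two wheels (same length unit).
    Angles are in radians. The sign convention follows equation (4),
    i.e. the rotation is (left - right) / b.
    """
    ds = (ds_left + ds_right) / 2.0
    dtheta = (ds_left - ds_right) / wheel_base
    heading = theta + dtheta / 2.0  # mid-step heading used in (5)
    new_x = x + ds * math.cos(heading)
    new_y = y + ds * math.sin(heading)
    return new_x, new_y, theta + dtheta
```

Using the mid-step heading $\theta_i + \Delta\theta/2$ rather than $\theta_i$ approximates the short arc travelled during the step by its chord.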

In addition, if the state of the target relative to the follower at time stamp $i$ is $(\phi_{o,i}, L_{o,i})$, the position of the target in the normal mode can be represented as

$$[(x_{o,i}, y_{o,i})] = [(x_i + L_{o,i}\cos(\theta_i + \phi_{o,i}), \ y_i + L_{o,i}\sin(\theta_i + \phi_{o,i}))]. \qquad (6)$$

The states of both the target and the follower are computed and stored by the onboard embedded system in real time. If at time stamp $j+1$ the follower does not acquire a new state of the target, the follower enters the odometry mode automatically and treats the state $[(x_{o,j}, y_{o,j})]$ as the final goal to reach. In the following time stamps $i$ with $i > j$, the dummy target state is computed as

$$\begin{aligned} L_o &= \left[(x_{o,j} - x_i)^2 + (y_{o,j} - y_i)^2\right]^{1/2} + c\,L_o^* \\ \phi_o &= \operatorname{atan2}(y_{o,j} - y_i, \ x_{o,j} - x_i) - \theta_i, \end{aligned} \qquad (7)$$

where $c$ is a tuned value which gradually increases to 1 while the follower approaches the last sensed target position. This setting allows smooth switching from the normal mode to the odometry mode, and lets the follower move all the way to the goal rather than keep the designated following distance $L_o^*$. If the follower cannot find the target in the subsequent time stamps, it keeps moving to the final goal $[(x_{o,j}, y_{o,j})]$. Because the positioning of the follower has limited accuracy, the follower is programmed to stop and start the search mode once it enters a pre-defined small area centered at the goal $[(x_{o,j}, y_{o,j})]$. If the target is found at any time stamp $i > j$ before the follower reaches the goal, the follower automatically shifts back to the normal mode and follows the target according to the newly detected target position.
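To make the odometry-mode computation concrete, the sketch below converts a sensed relative state into a stored goal position and then derives the dummy target state of equation (7) from the follower pose. It assumes angles in radians; the function names, the `goal` tuple, and the final angle wrapping to $(-\pi, \pi]$ are additions of this example and are not stated in the paper.

```python
import math


def target_position(x, y, theta, phi_o, l_o):
    """Global target position from the follower pose and the sensed
    relative direction phi_o and distance l_o (angles in radians)."""
    return (x + l_o * math.cos(theta + phi_o),
            y + l_o * math.sin(theta + phi_o))


def dummy_target_state(x, y, theta, goal, c, l_desired):
    """Dummy (phi_o, l_o) toward the stored goal, after equation (7).

    goal: last sensed target position (x_oj, y_oj).
    c: tuned value that grows toward 1 as the goal is approached.
    l_desired: designated following distance L_o*.
    """
    dx, dy = goal[0] - x, goal[1] - y
    l_o = math.hypot(dx, dy) + c * l_desired
    phi_o = math.atan2(dy, dx) - theta
    # Wrap to (-pi, pi]; this step is an assumption of the sketch.
    phi_o = math.atan2(math.sin(phi_o), math.cos(phi_o))
    return phi_o, l_o
```

Since the distance controller drives the dummy distance toward $L_o^*$, the follower settles at a real distance of $(1 - c) L_o^*$ from the goal: with $c = 0$ it keeps the usual following distance, and as $c$ approaches 1 it closes in on the goal itself.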

The follower, such as a mobile robot, is in general a dynamic system with a certain response characteristic. For safety reasons, a maximum allowable speed is set to confine the motion of the follower. The maximum allowable speed of the robot, $V_{max}$, is set as a function of the actual distance: $V_{max}$ increases as $L_o$ increases, to ensure that $L_o$ can converge to $L_o^*$. When the actual distance $L_o$ is short, the reduced maximum allowable speed prevents the follower from bumping into the target. The formula is

$$V_{max} = C_{bk}\, L_o, \qquad (8)$$

where $C_{bk}$ is a constant roughly determined by the braking performance of the follower.

Fig. 3. (a) Configuration of nine ultrasonic range finders installed on the follower; (b) the configuration where the thresholds $\bar{u}_j$ are set up.

The motion of the follower is based on a speed control strategy. For the forward motion, the speed profile versus the following error, $\Delta L$, is given by

$$V = \max(\min(V_o + PID(L_o, L_o^*), \ V_{max}), \ 0), \qquad (9)$$

where $V$ represents the forward speed and $V_o$ is the speed of the target, derived by differentiating $L_o$. The speed $V$ is adjusted by an ordinary PID control strategy. Equation (9) also reveals that if the following distance is kept at the desired value, the follower moves at speed $V_o$, the same as the target. For the turning motion, the speed profile versus the direction error, $\Delta\phi$, is given by

$$\omega = \begin{cases} a_\omega (\phi_o - \phi_o^*), & (\phi_o - \phi_o^*) > \phi_s \\ \omega_s, & (\phi_o - \phi_o^*) < \phi_s \end{cases} \qquad (10)$$

where $\omega$ represents the turning speed, and $a_\omega$, $\phi_s$, and $\omega_s$ are constants. Because the resolution of the direction detection $\phi_o$ is 10 degrees, a PID controller is not effective. Instead, a simple proportional control is utilized. For small errors $\Delta\phi < \phi_s = 15$ degrees, a smaller value $\omega_s$ is set to prevent overcorrection owing to the slow dynamic response of the robot. For safety reasons, the lower bound of zero in equation (9) also prevents the follower from moving backward, because no ultrasonic sensor senses the back side of the follower.

In the empirical evaluation, where the follower is a two-wheeled differential-drive mobile robot, the forward and turning speeds can further be converted to the forward speeds of the left and right wheels, $V_L$ and $V_R$,

$$\begin{cases} V_L = V + b\omega/2 \\ V_R = V - b\omega/2 \end{cases} \qquad (11)$$

The rotation speeds of the left and right wheels, $\omega_L$ and $\omega_R$, can then be calculated as

$$\begin{cases} \omega_L = V_L / R \\ \omega_R = V_R / R \end{cases} \qquad (12)$$

where $R$ is the radius of the wheel.
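The speed pipeline of equations (8) to (12) can be sketched as a few small functions. Several details are assumptions of this example rather than statements from the paper: the PID output is passed in as a precomputed number (`pid_term`), the turning rule compares the magnitude of the direction error with the threshold and applies the sign of the error, a zero error gives zero turning speed, and the turning speed handed to `wheel_speeds` is taken to be in rad/s.

```python
import math


def max_speed(l_o, c_bk):
    """Equation (8): speed limit proportional to the measured distance."""
    return c_bk * l_o


def forward_speed(v_target, pid_term, v_max):
    """Equation (9): clamp to [0, v_max]; never command reverse motion."""
    return max(min(v_target + pid_term, v_max), 0.0)


def turning_speed(dphi_deg, a_w, w_s, phi_s_deg=15.0):
    """Equation (10) with the sign handling assumed by this sketch."""
    if dphi_deg == 0:
        return 0.0
    if abs(dphi_deg) > phi_s_deg:
        return a_w * dphi_deg            # proportional for large errors
    return math.copysign(w_s, dphi_deg)  # small fixed rate for small errors


def wheel_speeds(v, w, wheel_base, wheel_radius):
    """Equations (11)-(12): wheel rotation speeds (left, right)."""
    v_left = v + wheel_base * w / 2.0
    v_right = v - wheel_base * w / 2.0
    return v_left / wheel_radius, v_right / wheel_radius
```

A control cycle would call `max_speed`, then `forward_speed` and `turning_speed`, and finally `wheel_speeds` to obtain the two motor commands.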

IV. OBSTACLE AVOIDANCE

If the follower tails the target very closely, the motion of the follower can be programmed to directly follow the moving target point (i.e., a tracking problem). In this case obstacle avoidance capability in the follower is not necessary as long as the target can find an accessible path and avoid the obstacles successfully; it is effectively the target itself that performs the obstacle avoidance.

In general following tasks where a certain distance exists between the target and the follower, the capability of obstacle avoidance in the follower is crucial. For example, in human following, where the comfortable distance for a human to be tailed is at least 1 meter, it is hard to guarantee that the space between the human and the follower is clear at all times. Thus, this section describes the motion of the follower with obstacle avoidance capability.

As reported previously, nine ultrasonic range finders are installed on the follower to sense the front 180 degrees of the environment, as shown in Fig. 3(a). The installation and alignment of the ultrasonic range finders are similar to those of the IR receivers, equally distributed on the semicircle. Thus, the difference in the main sensing direction between consecutive sensors is 20 degrees. Each ultrasonic range finder has a receiving cone of about 45 degrees, so in the current arrangement the sensing areas overlap to make sure the front area is sensed thoroughly. Let $u_j$ be the distance of the obstacle measured by sensor $j$, where the notation $j \in [80 \ 60 \ 40 \ 20 \ 0 \ -20 \ -40 \ -60 \ -80]$ indicates the physical sensing directions of the sensors, similar to the notation of the IR receivers in Section II.

Nine thresholds $\bar{u}_j$ are defined as the safety measure of the follower's motion. Because the follower faces the target most of the time, the thresholds of the six range finders on both sides are mainly determined by the geometrical configuration of the follower relative to an imaginary side wall, as shown in Fig. 3(b). Here $L_{safe}$ is set to 55 cm and the thresholds are calculated as $\bar{u}_j = L_{safe} / \cos(90^\circ - \mathrm{abs}(j))$, where $j = \pm 40, \pm 60$, and $\pm 80$. Because the combined sensed range of the three front sensors is wider than the width of the follower, the thresholds of these three are used to judge whether the follower can go straight forward or not. Empirically the value is set to $\bar{u}_j = 75$ cm, $j = 0, \pm 20$. In addition, when a sensed $u_j$ is less than $L_{stop} = 37.5$ cm, the follower stops immediately to avoid any potential collision.
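The threshold values stated above can be reproduced with a short calculation. The sketch below uses the numbers given in the text (55 cm, 75 cm, 37.5 cm); the function names and the rule that a single reading below the stop distance on any sensor triggers a stop are assumptions of this example.

```python
import math

L_SAFE_CM = 55.0           # clearance to the imaginary side wall
FRONT_THRESHOLD_CM = 75.0  # empirical value for the three front sensors
L_STOP_CM = 37.5           # emergency stop distance
SENSOR_ANGLES_DEG = [80, 60, 40, 20, 0, -20, -40, -60, -80]


def threshold_cm(j):
    """Threshold for the sensor pointing in direction j (degrees)."""
    if abs(j) <= 20:
        return FRONT_THRESHOLD_CM
    return L_SAFE_CM / math.cos(math.radians(90 - abs(j)))


def must_stop(readings_cm):
    """True if any reading is below the stop distance.

    readings_cm maps sensor direction (degrees) to measured distance (cm).
    """
    return any(u < L_STOP_CM for u in readings_cm.values())


THRESHOLDS_CM = {j: threshold_cm(j) for j in SENSOR_ANGLES_DEG}
```

With these numbers the side thresholds come out at roughly 85.6 cm, 63.5 cm, and 55.8 cm for the 40, 60, and 80 degree sensors respectively, so the sensors that point more directly at the side wall tolerate shorter readings.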

A function $f_j(u_j)$ is defined to represent the obstacle status around the follower:

$$f_j(u_j) = \begin{cases} 1 & \text{if } u_j \le \bar{u}_j \\ 0 & \text{else} \end{cases} \qquad (13)$$

where the value 1 indicates that the robot is too close to an obstacle in the $j$ direction, and the value 0 indicates no obstacle in that direction. Because the combined sensed range of three consecutive sensors is wider than the width of the follower, the follower can move safely toward the center of three consecutive sensors which all show 0. Following that logic, the feasible following direction $\phi_b$ with obstacle avoidance (hereafter referred to as front obstacle avoidance) can be described as

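$$\begin{cases} \phi_b = \phi_o & \text{if } \sum_j f_j(u_j) = 0, \ j = \phi_o, \ \phi_o \pm 20 \\ \phi_b = \phi_a & \text{if } \sum_j f_j(u_j) = 0, \ j = \phi_a, \ \phi_a \pm 20, \ \min|\phi_a - \phi_o| \end{cases} \qquad (14)$$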

if the direction of the target $\phi_o$ is the same as one of the numbers $j$ (i.e., 0, ±20, ±40, ...), as in case 1 shown in Fig. 3(a). If $\phi_o$ lies between the numbers $j$ (e.g., ±10, ±30, ...), the criterion is


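$$\begin{cases} \phi_b = \phi_o & \text{if } \sum_j f_j(u_j) = 0, \ j = \phi_o \pm 10, \ \phi_o \pm 30 \\ \phi_b = \phi_a & \text{if } \sum_j f_j(u_j) = 0, \ j = \phi_a, \ \phi_a \pm 20, \ \min|\phi_a - \phi_o| \end{cases} \qquad (15)$$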

where the obstacle status of four consecutive sensors is checked, as in case 2 shown in Fig. 3(a). In brief, if there is no obstacle in the $\phi_o$ direction, the follower moves in that direction in order to follow the target adequately. If an obstacle exists, the follower searches for a suitable direction (1) where three consecutive obstacle status values are 0 and (2) which is as close to $\phi_o$ as possible.

The algorithm shown above can effectively detour the path of the follower to avoid obstacles in the original moving direction. However, the detoured path should also be checked against obstacles for safe follower motion. Thus, the obstacle status $f_j(u_j)$ sensed by the six side sensors is utilized to adjust the motion direction as follows:

$$\phi_s = \sum_{j = \pm 40, \pm 60, \pm 80} w_j\, f_j(u_j)\, \frac{u_j - \bar{u}_j}{u_{j,10} - \bar{u}_j}, \qquad (16)$$

where $\phi_s$ represents the direction adjustment (hereafter referred to as side obstacle avoidance, and distinct from the small-error threshold in (10)), the $w_j$ are the weights of the sensor readings, and the $u_{j,10}$ are constants calculated for the case where the motion direction of the follower is 10 degrees toward the side wall. Ten degrees is chosen because the best resolution of the direction $\phi_o$ is 10 degrees. The direction adjustment is linearized and scaled according to this number.

Finally, the motion direction of the follower, $\phi_m$, can be calculated as the linear combination of the above considerations:

$$\phi_m = \phi_b + \phi_s, \qquad (17)$$

where $\phi_m$ replaces $\phi_o$ in (10) as the new target direction to generate the motion of the follower. The revised direction tracks the target closely while also avoiding the obstacles.

Besides the direction correction, the allowable forward speed should be adjusted if obstacles are sensed. Intuitively, when the follower travels in a tight space and is surrounded by many obstacles, its motion should be slower, just as animals move more slowly on challenging terrain. Thus, the allowable forward speed, $V_{re}$, is further revised as

$$V_{re} = g\Big(\sum_j f_j(u_j)\Big)\, V, \qquad (18)$$

which is a function of the original controlled forward speed $V$ shown in (9) and the obstacle status $f_j(u_j)$. The speed $V_{re}$ from equation (18) replaces the $V$ shown in (9) as the new allowable speed, to constrain the motion of the follower in a safer manner.

V. EXPERIMENTAL VALIDATION

The platform shown in Fig. 4 is utilized as the follower for experimental evaluation. A tester wearing the belt with transmitters acted as the target to be followed, so the following task is to a certain degree equivalent to human following. The platform is a three-wheeled mobile robot with a two-wheel differential drive as the actuating mechanism and the third wheel as an idler. Thus, it can move forward/backward and turn in place (i.e., mobility = 2). The mechatronic system on the platform includes the detection device described in Section II, which senses the direction and distance of the target, an ultrasonic sensor array to detect the surrounding obstacles, and a real-time embedded control system (sbRIO-9642, National Instruments) which is in charge of algorithm computation and motion control. The overall flow chart is depicted in Fig. 5.

Fig. 4. Photo of the follower utilized in the experimental validation.

Fig. 5. The flowchart of the overall algorithm.

The proposed sensory setup and following algorithm were evaluated experimentally under various scenarios. A commercial HD camcorder (XDR-11, SONY) was placed above the scene to record the trajectories of the target and the follower. LEDs were installed on top of the target and the follower as markers during the experiments, which eases the subsequent post-processing in Matlab to extract the positions of the markers from a sequence of images. The results are shown in Fig. 6 and Fig. 7, where the red line and blue line represent the trajectories of the target and the follower, respectively. The special legends marked on both lines indicate positions extracted from the same image, and this information provides the relative position between the target and the follower at several different time stamps.

Figure 6(a) plots the trajectories of the target and the follower where the follower loses the signal from the target (the transmitter is intentionally turned off) at point $R_A$. The follower enters the odometry mode and successfully moves to the last target position $O_A$ within a certain error range. It then performs the search mode (i.e., turning in place), but because no target is found, the follower rests around the last target position $O_A$. In Fig. 6(b), the follower also goes through the normal mode, odometry mode, and then search mode under a similar setting (the transmitter is intentionally turned off). At a certain moment after the transmitter is turned back on, the follower finds the target at $O_B$ and moves directly toward the target. Figure 6(c) to (e) compare the performance of the following with different designated following distances. In the simple turning motion shown in Fig. 6(c)-1m, the follower tracks the target well with a smooth trajectory close to that of the target. For the case in Fig. 6(c)-2m, because of the longer following distance, for a certain period right after the target turns, the follower loses the signals from the target and enters the odometry mode (pink line segment, between $R_A$ and $R_B$), but it then recovers to the normal mode before it reaches the target point $O_A$. Thus, the follower goes back to the normal mode directly from the odometry mode, without entering the search mode. Compared to Fig. 6(b), which has a sharp turn owing to the turning in place in search mode, the trajectory shown in Fig. 6(c)-2m is smoother.

VI. CONCLUSION

We report on a target tracking and following algorithm based on the developed IR-based target detection module. Given the target direction and distance, the follower generates adequate motion with obstacle avoidance to track the target. The follower can also detect the speed of the target and follow it accordingly. If the signal from the target is lost, the follower switches to the odometry mode to move to the last target position and search for the target. As soon as the target is found, the follower automatically switches back to the normal mode and continues the following task. The algorithm was experimentally evaluated and shown to be functional.

We are currently in the process of developing a more sophisticated algorithm which can perform target following at faster following speeds and in a wider range of complicated environment setups.

VII. ACKNOWLEDGMENT

The authors gratefully thank the National Instruments Taiwan Branch for their kind support of technical consulting.

REFERENCES

[1] N. Tsuda, S. Harimoto, T. Saitoh, and R. Konishi, "Mobile robot with following and returning mode," in The 18th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), 2009, pp. 933-938.

[2] Y. Nagumo and A. Ohya, "Human following behavior of an autonomous mobile robot using light-emitting device," in IEEE International Workshop on Robot and Human Interactive Communication (RO-MAN), 2001, pp. 225-230.

[3] C.-H. Chao, B.-Y. Hsueh, M.-Y. Hsiao, S.-H. Tsai, and T.-H. Li, "Real-time target tracking and obstacle avoidance for mobile robots using two cameras," in ICROS-SICE International Joint Conference, 2009, pp. 4347-4352.

[4] T. H. S. Li, C. Shih-Jie, and T. Wei, "Fuzzy target tracking control of autonomous mobile robots by using infrared sensors," Fuzzy Systems, IEEE Transactions on, vol. 12, pp. 491-501, 2004.

[5] S. Bosch, M. Marin-Perianu, R. Marin-Perianu, H. Scholten, and P. Havinga, "Follow me! mobile team coordination in wireless sensor and actuator networks," in IEEE International Conference on Pervasive Computing and Communications (PerCom), 2009, pp. 1-11.

[6] K. Myungsik, C. Nak Young, A. Hyo-Sung, and Y. Wonpil, "RFID-enabled target tracking and following with a mobile robot using direction finding antennas," in IEEE International Conference on Automation Science and Engineering (CASE), 2007, pp. 1014-1019.

[7] K. Seongyong and K. Dong-Soo, "Recognizing human intentional actions from the relative movements between human and robot," in IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), 2009, pp. 939-944.

[8] R. C. Luo, C. Nai-Wen, L. Shih-Chi, and W. Shih-Chiang, "Human tracking and following using sensor fusion approach for mobile assistive companion robot," in Annual Conference of the IEEE Industrial Electronics Society (IECON), 2009, pp. 2235-2240.

[9] A. Korodi, A. Codrean, L. Banita, and C. Volosencu, "Aspects regarding the object following control procedure for wheeled mobile robots," WSEAS Transactions on Systems and Control, vol. 3, pp. 537-546, 2008.

0 100 200 300 400 500 600

0

100

200

300

0 100 200 300 400 500 600

0

100

200

300

0 100 200 300 400 500 600

0

100

200

300

0 100 200 300 400 500 600

0

100

200

300

OA RA RA

OA

RA

RB

OA

OB

Start Start

Start Start

End

End

End

End

(b)

(c)

( 1m ) ( 1m )

( 2m ) ( 1m )

(a)

OB

Fig. 6. Trajectories of the target (red line) and the follower (blue line) in various scenarios, viewed from the ceiling (top view). Walls and obstacles are shown in green, and the designated following distance (1 m or 2 m) is shown at the upper right corner of each scenario. When the robot operates in the odometry mode, the trajectory is marked in pink. (a) Odometry mode and stop; (b) odometry mode and then back to normal mode; (c) right-angle turn.


The 43rd Intl. Symp. on Robotics (ISR2012), Taipei, Taiwan, Aug. 29-31, 2012

Anda mungkin juga menyukai

- Automatic Target Recognition: Advances in Computer Vision Techniques for Target RecognitionDari EverandAutomatic Target Recognition: Advances in Computer Vision Techniques for Target RecognitionBelum ada peringkat

- Automatic Target Recognition: Fundamentals and ApplicationsDari EverandAutomatic Target Recognition: Fundamentals and ApplicationsBelum ada peringkat

- Infrared Range Sensor Array For 3D Sensing in Robotic ApplicationsDokumen9 halamanInfrared Range Sensor Array For 3D Sensing in Robotic Applicationshabib_archillaBelum ada peringkat

- Autonomous Mobile Robot Using Wireless SensorDokumen3 halamanAutonomous Mobile Robot Using Wireless SensorJose CastanedoBelum ada peringkat

- An RF-based System For Tracking Transceiver-Free Objects: Dian Zhang, Jian Ma, Quanbin Chen, and Lionel M. NiDokumen10 halamanAn RF-based System For Tracking Transceiver-Free Objects: Dian Zhang, Jian Ma, Quanbin Chen, and Lionel M. NiSamira PalipanaBelum ada peringkat

- Final Report On Autonomous Mobile Robot NavigationDokumen30 halamanFinal Report On Autonomous Mobile Robot NavigationChanderbhan GoyalBelum ada peringkat

- An Intelligent Active Range Sensor For Vehicle Guidance: System OverviewDokumen8 halamanAn Intelligent Active Range Sensor For Vehicle Guidance: System OverviewUtsav V ByndoorBelum ada peringkat

- Enhanced Wireless Location TrackingDokumen8 halamanEnhanced Wireless Location TrackingAyman AwadBelum ada peringkat

- Equiangular Navigation Guidance of A Wheeled Mobile Robot With Local Obstacle AvoidanceDokumen6 halamanEquiangular Navigation Guidance of A Wheeled Mobile Robot With Local Obstacle Avoidancearyan_iustBelum ada peringkat

- Key Words - Arduino UNO, Motor Shield L293d, Ultrasonic Sensor HC-SR04, DCDokumen17 halamanKey Words - Arduino UNO, Motor Shield L293d, Ultrasonic Sensor HC-SR04, DCBîswãjït NãyàkBelum ada peringkat

- Detection and Motion Estimation of Moving Objects Based On 3D-WarpingDokumen6 halamanDetection and Motion Estimation of Moving Objects Based On 3D-WarpingUmair KhanBelum ada peringkat

- Technology For Visual DisabilityDokumen6 halamanTechnology For Visual DisabilityNaiemah AbdullahBelum ada peringkat

- Autonomous Mobile Robot Navigation Using Passive RFID in Indoor EnvironmentDokumen8 halamanAutonomous Mobile Robot Navigation Using Passive RFID in Indoor EnvironmentGunasekar R ErBelum ada peringkat

- Base PapDokumen6 halamanBase PapAnonymous ovq7UE2WzBelum ada peringkat

- Implementation of An Object-Grasping Robot Arm Using Stereo Vision Measurement and Fuzzy ControlDokumen13 halamanImplementation of An Object-Grasping Robot Arm Using Stereo Vision Measurement and Fuzzy ControlWikipedia.Belum ada peringkat

- Obstacles Avoidance Method For An Autonomous Mobile Robot Using Two IR SensorsDokumen4 halamanObstacles Avoidance Method For An Autonomous Mobile Robot Using Two IR SensorsDeeksha AggarwalBelum ada peringkat

- Assistive Infrared Sensor Based Smart Stick For Blind PeopleDokumen6 halamanAssistive Infrared Sensor Based Smart Stick For Blind PeopleجعفرالشموسيBelum ada peringkat

- AIM2019 Last VersionDokumen6 halamanAIM2019 Last VersionBruno F.Belum ada peringkat

- A New Approach For Error Reduction in Localization For Wireless Sensor NetworksDokumen6 halamanA New Approach For Error Reduction in Localization For Wireless Sensor NetworksidescitationBelum ada peringkat

- Synthetic Aperture Radar Principles and Applications of AI in Automatic Target RecognitionDokumen6 halamanSynthetic Aperture Radar Principles and Applications of AI in Automatic Target RecognitionoveiskntuBelum ada peringkat

- Stick Blind ManDokumen4 halamanStick Blind ManجعفرالشموسيBelum ada peringkat

- Sensors: A Vision-Based Automated Guided Vehicle System With Marker Recognition For Indoor UseDokumen22 halamanSensors: A Vision-Based Automated Guided Vehicle System With Marker Recognition For Indoor Usebalakrishna88Belum ada peringkat

- P L M B Rssi U Rfid: Osition Ocation Ethodology Ased ON SingDokumen6 halamanP L M B Rssi U Rfid: Osition Ocation Ethodology Ased ON SingInternational Journal of Application or Innovation in Engineering & ManagementBelum ada peringkat

- Ijrtem E0210031038 PDFDokumen8 halamanIjrtem E0210031038 PDFjournalBelum ada peringkat

- 1I11-IJAET1111203 Novel 3dDokumen12 halaman1I11-IJAET1111203 Novel 3dIJAET JournalBelum ada peringkat

- CH03 - 1algorithms Used in Positioning SystemsDokumen10 halamanCH03 - 1algorithms Used in Positioning SystemsrokomBelum ada peringkat

- Wall Following With A Single Ultrasonic Sensor: Shérine M. Antoun and Phillip J. MckerrowDokumen12 halamanWall Following With A Single Ultrasonic Sensor: Shérine M. Antoun and Phillip J. MckerrowEncangBelum ada peringkat

- Infrared Object Position Locator: Oladapo, Opeoluwa AyokunleDokumen44 halamanInfrared Object Position Locator: Oladapo, Opeoluwa Ayokunleoopeoluwa_1Belum ada peringkat

- AM 8th Sem Solution 17.05.19 in CourseDokumen16 halamanAM 8th Sem Solution 17.05.19 in CourseRaj PatelBelum ada peringkat

- Imtc RobotNavigation 7594Dokumen5 halamanImtc RobotNavigation 7594flv_91Belum ada peringkat

- Sensors: Obstacle Avoidance of Multi-Sensor Intelligent Robot Based On Road Sign DetectionDokumen17 halamanSensors: Obstacle Avoidance of Multi-Sensor Intelligent Robot Based On Road Sign DetectionRamakrishna MamidiBelum ada peringkat

- 8 B2016247 PDFDokumen6 halaman8 B2016247 PDFPriya K PrasadBelum ada peringkat

- Self Localization Methods in RobotsDokumen12 halamanSelf Localization Methods in RobotsBarvinBelum ada peringkat

- Localization, Tracking, and Imaging of Targets in Wireless Sensor Networks: An Invited ReviewDokumen4 halamanLocalization, Tracking, and Imaging of Targets in Wireless Sensor Networks: An Invited ReviewPraveen KrishnaBelum ada peringkat

- Rodriguez Mva 2011Dokumen16 halamanRodriguez Mva 2011Felipe Taha Sant'AnaBelum ada peringkat

- RFID-Based Mobile Robot Positioning - Sensors and TechniquesDokumen5 halamanRFID-Based Mobile Robot Positioning - Sensors and Techniquessurendiran123Belum ada peringkat

- Extraction of Doppler Signature of Micro-To-Macro Rotations/motions Using Continuous Wave Radar-Assisted Measurement SystemDokumen15 halamanExtraction of Doppler Signature of Micro-To-Macro Rotations/motions Using Continuous Wave Radar-Assisted Measurement Systemv3rajasekarBelum ada peringkat

- Tapu A Smartphone-Based Obstacle 2013 ICCV Paper PDFDokumen8 halamanTapu A Smartphone-Based Obstacle 2013 ICCV Paper PDFAlejandro ReyesBelum ada peringkat

- SM Wireless Sensor 05524668Dokumen6 halamanSM Wireless Sensor 05524668Nguyen Tri PhungBelum ada peringkat

- Obstacle Avoiding Robot Using Arduino: AbstractDokumen5 halamanObstacle Avoiding Robot Using Arduino: AbstractWarna KlasikBelum ada peringkat

- Sensors: Pose Estimation of A Mobile Robot Based On Fusion of IMU Data and Vision Data Using An Extended Kalman FilterDokumen22 halamanSensors: Pose Estimation of A Mobile Robot Based On Fusion of IMU Data and Vision Data Using An Extended Kalman Filterxyz shahBelum ada peringkat

- Laser Scanner TechnologyDokumen270 halamanLaser Scanner TechnologyJosé Ramírez100% (2)

- Laser InterferometeryDokumen22 halamanLaser InterferometeryAndy ValleeBelum ada peringkat

- Autonomous Robots PDFDokumen8 halamanAutonomous Robots PDFEduard FlorinBelum ada peringkat

- Sensors: Human Movement Detection and Identification Using Pyroelectric Infrared SensorsDokumen25 halamanSensors: Human Movement Detection and Identification Using Pyroelectric Infrared SensorsSahar AhmadBelum ada peringkat

- Development of A Laser-Range-Finder-Based Human Tracking and Control Algorithm For A Marathoner Service RobotDokumen14 halamanDevelopment of A Laser-Range-Finder-Based Human Tracking and Control Algorithm For A Marathoner Service RobotAnjireddy ThatiparthyBelum ada peringkat

- OIE 751 ROBOTICS Unit 3 Class 8 (30-10-2020)Dokumen14 halamanOIE 751 ROBOTICS Unit 3 Class 8 (30-10-2020)MICHEL RAJBelum ada peringkat

- Man Capi - R2 PDFDokumen6 halamanMan Capi - R2 PDFIshu ReddyBelum ada peringkat

- Sensors Self LocalisationDokumen21 halamanSensors Self Localisationsivabharath44Belum ada peringkat

- Automatic Quadcopter Control Avoiding Obstacle UsiDokumen7 halamanAutomatic Quadcopter Control Avoiding Obstacle Usimathan mariBelum ada peringkat

- Exp.8 Total Station-IntroductionDokumen4 halamanExp.8 Total Station-IntroductionSaurabhChoudhary67% (3)

- Sensors: Laser Sensors For Displacement, Distance and PositionDokumen4 halamanSensors: Laser Sensors For Displacement, Distance and PositionLuis SánchezBelum ada peringkat

- StarliteDokumen4 halamanStarliteSundar C EceBelum ada peringkat

- Bi-Directional Passing People Counting System Based On IR-UWB Radar SensorsDokumen11 halamanBi-Directional Passing People Counting System Based On IR-UWB Radar Sensorsdan_intel6735Belum ada peringkat

- Sensors 14 08057 PDFDokumen25 halamanSensors 14 08057 PDFTATAH DEVINE BERILIYBelum ada peringkat

- On - Automotive Rail Track Crack Detection SystemDokumen17 halamanOn - Automotive Rail Track Crack Detection SystemHuxtle RahulBelum ada peringkat

- Design For Visually Impaired To Work at Industry Using RFID TechnologyDokumen5 halamanDesign For Visually Impaired To Work at Industry Using RFID Technologyilg1Belum ada peringkat

- Localizationmag10 (Expuesto)Dokumen10 halamanLocalizationmag10 (Expuesto)mmssrrrBelum ada peringkat

- DSFGDFDokumen5 halamanDSFGDFnidheeshBelum ada peringkat

- The Optical Mouse Sensor As An Incremental Rotary EncoderDokumen9 halamanThe Optical Mouse Sensor As An Incremental Rotary EncoderRobert PetersonBelum ada peringkat

- Synopsis RailwayDokumen8 halamanSynopsis RailwaySathwik HvBelum ada peringkat

- JVL Profibus Expansion Modules MAC00-FP2 and MAC00-FP4Dokumen4 halamanJVL Profibus Expansion Modules MAC00-FP2 and MAC00-FP4ElectromateBelum ada peringkat

- RLC Circuits PDFDokumen4 halamanRLC Circuits PDFtuvvacBelum ada peringkat

- Gs1m Diode SMDDokumen2 halamanGs1m Diode SMDandrimanadBelum ada peringkat

- Atomic 3000 LED Service Manual: Revision A, 11-30-2017Dokumen20 halamanAtomic 3000 LED Service Manual: Revision A, 11-30-2017Giovani AkBelum ada peringkat

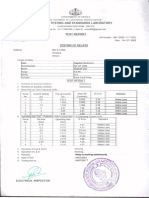

- Img20220809 07262264Dokumen1 halamanImg20220809 07262264Hafiz RahimBelum ada peringkat

- PSLC Professional Lighting 17-18 - Accent LightDokumen14 halamanPSLC Professional Lighting 17-18 - Accent LightAhmed salahBelum ada peringkat

- Startup Giude MVS-3000Dokumen59 halamanStartup Giude MVS-3000Christian Stevanus50% (2)

- Five Day Online FDP Brochure - Anurag UniversityDokumen2 halamanFive Day Online FDP Brochure - Anurag Universityjel3electricalBelum ada peringkat

- Modulasi MulticarrierDokumen73 halamanModulasi MulticarrierRizka NurhasanahBelum ada peringkat

- Dokumen - Tips - Upto 145kv 40ka 3150a Operating Manual 56327f822540fDokumen64 halamanDokumen - Tips - Upto 145kv 40ka 3150a Operating Manual 56327f822540fSerik ZhilkamanovBelum ada peringkat

- DigiDokumen5 halamanDigiFurqan HameedBelum ada peringkat

- Parameter and Error List Zanotti GM-Uniblock Zanotti GS-SplitDokumen16 halamanParameter and Error List Zanotti GM-Uniblock Zanotti GS-SplitJaffer HussainBelum ada peringkat

- Manual Porsche Charge o Mat Pro EUDokumen122 halamanManual Porsche Charge o Mat Pro EUcristian rubertBelum ada peringkat

- CH 5 Series CircuitsDokumen40 halamanCH 5 Series Circuitsjorrrique100% (1)

- KSTAR Utility System Solution 2023Dokumen10 halamanKSTAR Utility System Solution 2023Sid-Ali RatniBelum ada peringkat

- Research Paper 1Dokumen8 halamanResearch Paper 1ankitBelum ada peringkat

- Dynamic Spectrum Sharing (DSS) in LTE NRDokumen6 halamanDynamic Spectrum Sharing (DSS) in LTE NReng.ashishmishra2012Belum ada peringkat

- KatalogDokumen164 halamanKatalogVien HernandezBelum ada peringkat

- User Manual: UPS ON LINE EA900 G4 (1-1) 6 - 10 KVADokumen44 halamanUser Manual: UPS ON LINE EA900 G4 (1-1) 6 - 10 KVArabia akramBelum ada peringkat

- ST5461D07-1 - Product Spec - Ver0 2 20160726Dokumen32 halamanST5461D07-1 - Product Spec - Ver0 2 20160726Birciu ValiBelum ada peringkat

- Bench Type Digital Multimeter: DescriptionDokumen1 halamanBench Type Digital Multimeter: DescriptionManuel RochaBelum ada peringkat

- FTTH Fast Connector SC/APC B Type: Feature Technical SpecificationDokumen3 halamanFTTH Fast Connector SC/APC B Type: Feature Technical SpecificationvicenteBelum ada peringkat

- Double Error Correction Code For 32-Bit Data Words With Efficent DecodingDokumen3 halamanDouble Error Correction Code For 32-Bit Data Words With Efficent DecodingSreshta TricBelum ada peringkat

- DZ-HBR-01-GEN-PJM-PDR-0204 RA2 OTP For Solar Power Supply SystemDokumen10 halamanDZ-HBR-01-GEN-PJM-PDR-0204 RA2 OTP For Solar Power Supply Systemmameri malekBelum ada peringkat

- NETWORKDokumen4 halamanNETWORKB20-CSE-203 Sneha BasakBelum ada peringkat

- Basic Diesel Generator Maintenance Checklist - SafetyCultureDokumen4 halamanBasic Diesel Generator Maintenance Checklist - SafetyCultureDon LucaBelum ada peringkat

- Homodyne and Heterodyne Interferometry PDFDokumen8 halamanHomodyne and Heterodyne Interferometry PDFDragan LazicBelum ada peringkat

- Preparing and Interpreting Technical DrawingDokumen20 halamanPreparing and Interpreting Technical DrawingAriel Santor EstradaBelum ada peringkat

- Lock Picking Hotel RoomsDokumen22 halamanLock Picking Hotel Roomsbiffbuff99Belum ada peringkat