Pso Convergence


Measures for Improving Premature Convergence in Particle Swarm

Optimization for Association Rule Mining

Abstract: Particle Swarm Optimization (PSO) has become a popular choice for solving complex problems that are otherwise difficult to solve by traditional methods. One of the drawbacks of PSO is premature convergence and trapping in local optima. This paper attempts to avoid premature convergence by modifying the velocity update function in PSO. Variants in inertia weight, chaotic operators, neighbourhood selection and self adaptation of inertia weight through three methods are introduced into the velocity update function to avoid convergence at local optima. When tested on five datasets for mining association rules (AR), the variants avoid premature convergence, thereby enhancing the predictive accuracy of the rules mined.

Keywords: Particle Swarm Optimization, Premature Convergence, Inertia weight, Chaotic

operator, Neighbourhood selection, Association Rule.

1. Introduction

Association rule mining is a data mining task that discovers associations among items in a

large database. Association rules have been extensively studied in the literature for their

usefulness in many application domains such as recommender systems, diagnosis decisions

support, telecommunication, intrusion detection, etc. Efficient discovery of such rules has

been a major focus in data mining research.

The Apriori algorithm is the most widely used algorithm for association rule mining. Many modifications have been made to this algorithm to improve its efficiency and accuracy. However, its two parameters, minimum support and minimum confidence, are determined by the decision-maker or by trial and error, so the algorithm lacks both objectiveness and efficiency. Traditional methods for rule mining, namely decision trees, Bayesian classifiers and statistical methods, are usually accurate, but their computational complexity can be very high.

Metaheuristic optimization algorithms have been a popular choice for solving complex and intricate problems that are otherwise difficult to solve by traditional methods [1]. The Particle Swarm Optimization (PSO) algorithm is an evolutionary computation technique that has become an important heuristic algorithm in recent years. The PSO algorithm mimics the social behaviour of animals, such as fish schooling and bird flocking. A potential solution to the problem is represented by a particle (individual). The particle adjusts its position by flying with some velocity in the search space. The flying velocity of an individual depends both on its personal experience and on its neighbours' experience.

Despite having several attractive features, PSO algorithms do not always perform as expected. They can easily get trapped in local optima when solving complex multimodal problems. The success of a PSO algorithm depends to a large extent on the careful balancing of two conflicting goals, exploration (diversification) and exploitation (intensification). Exploration is important to ensure that every part of the solution domain is searched enough to provide a reliable estimate of the global optimum; exploitation, on the other hand, is important to concentrate the search effort around the best solutions found so far by searching their neighbourhoods to reach better solutions [2]. Accelerating convergence speed and avoiding local optima have become the two most important and appealing goals in PSO research.

Since PSO was proposed, theoretical and experimental investigations have been made to analyze and improve it. Clerc and Kennedy [7] explored how PSO works from a mathematical perspective, introduced a constriction factor χ to guarantee the convergence of PSO, and analyzed the trajectory of a single particle in both discrete time and continuous time. Van den Bergh and Engelbrecht [8] analyzed how the inertia weight and acceleration constants affect the trajectories of particles and provided theoretical findings on the dynamics of PSO systems. These studies provided theoretical support for research on the improvement of PSO. To achieve a good balance between exploitation capability and exploration capability, neighbourhood topologies designed for particles have been studied. Four neighbourhood topologies comprising circles, wheels, stars and random edges were tested in [9].

Eberhart and Shi [17] proposed a Random Inertia Weight strategy and experimentally found

that this strategy increases the convergence of PSO in early iterations of the algorithm. In

Global-Local Best Inertia Weight [18], the Inertia Weight is based on the function of local

best and global best of the particles in each generation. It neither takes a constant value nor a

linearly decreasing time-varying value. Using the merits of chaotic optimization, Chaotic

Inertia Weight has been proposed by Feng et al. [19]. A novel rule-based classifier [10]

design method was constructed by using improvised simple swarm optimization, to mine a

thyroid gland dataset from University of California Irvine repository. An elite concept is

added to the proposed method to improve solution quality and close interval encoding is

added to efficiently represent the rule structure.

Yang Shi et al. [6] propose a cellular particle swarm optimization, hybridizing cellular automata and particle swarm optimization (PSO) for function optimization. In the proposed method, a cellular automata mechanism is integrated into the velocity update to modify the trajectories of particles so that they avoid being trapped in a local optimum. To prevent PSO from premature convergence, many researchers have proposed adaptive or self-adaptive strategies, such as the adaptive variable population size method in Chen and Zhao [20], the self-adaptive method for generating the particles' velocity in Jin et al. [21], and the adaptive inertia weight method in Nickabadi et al. [22].

This paper analyzes various methods for avoiding premature convergence at local optima, thereby resulting in better predictive accuracy of the mined rules. The rest of this paper is organized as follows. In Section 2, the framework of PSO is described. Section 3 discusses the variations introduced in PSO for avoiding premature convergence. Section 4 compares the results of these variants when applied to association rule mining, followed by the conclusion in Section 5.

2. Preliminaries

This section will briefly present the general backgrounds of association rule mining and the

particle swarm optimization method, respectively.

2.1 Association rule

Among the many applications of data mining technology, association rules are the most broadly discussed method. This method is capable of finding interesting associative and relative characteristics in commercial transaction records and helping decision-makers formulate business strategy.

The concept of association rule mining was first proposed by Agrawal et al. [4] in 1993. Let $I = \{i_1, i_2, \ldots, i_m\}$ be a set of m distinct attributes, T be a transaction that contains a set of items such that $T \subseteq I$, and D be a database of transaction records T. An association rule is an implication of the form $X \rightarrow Y$, where $X, Y \subset I$ are sets of items called itemsets and $X \cap Y = \emptyset$. X is called the antecedent and Y the consequent; the rule means X implies Y.

However, association rule mining must accord with two parameters, namely support and confidence.

Support (s) of an association rule is defined as the percentage of records that contain $X \cup Y$ to the total number of records in the database. The support count does not take the quantity of the item into account.

$$\text{Support}(X \rightarrow Y) = \frac{\text{number of records containing } X \cup Y}{\text{total number of records in } D} \quad (1)$$

Confidence of an association rule is defined as the percentage of the number of transactions that contain $X \cup Y$ to the total number of records that contain X. If the percentage exceeds the confidence threshold, an interesting association rule $X \rightarrow Y$ can be generated.

$$\text{Confidence}(X \rightarrow Y) = \frac{\text{number of records containing } X \cup Y}{\text{number of records containing } X} \quad (2)$$

Confidence is a measure of the strength of the association rules.
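The two measures can be illustrated with a minimal Python sketch. It assumes each transaction is a set of items; the function names and the toy transactions are hypothetical and serve only to show how the ratios in the definitions above are computed.

```python
def support(transactions, itemset):
    """Fraction of transactions that contain every item of `itemset`."""
    itemset = frozenset(itemset)
    if not transactions:
        return 0.0
    hits = sum(1 for t in transactions if itemset <= t)
    return hits / len(transactions)


def rule_support(transactions, antecedent, consequent):
    """Support of the rule X -> Y: records containing X union Y over all records."""
    return support(transactions, frozenset(antecedent) | frozenset(consequent))


def confidence(transactions, antecedent, consequent):
    """Records containing X union Y over records containing X."""
    support_x = support(transactions, antecedent)
    if support_x == 0:
        return 0.0
    return rule_support(transactions, antecedent, consequent) / support_x


# Hypothetical toy database of four transactions.
transactions = [
    frozenset({"bread", "milk"}),
    frozenset({"bread", "butter"}),
    frozenset({"bread", "milk", "butter"}),
    frozenset({"milk"}),
]
s = rule_support(transactions, {"bread"}, {"milk"})
c = confidence(transactions, {"bread"}, {"milk"})
```

Here two of the four transactions contain both bread and milk, and three contain bread, so the rule bread -> milk has support 2/4 and confidence 2/3.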

2.2 Particle Swarm Optimization

Particle Swarm Optimization algorithm was inspired by the social behaviour of biological

organisms, specifically the ability of groups of some species of animals to work as a whole in

locating desirable positions in a given area, e.g. birds flocking to a food source. This seeking

behaviour is associated with that of an optimization search for solutions to non-linear

equations in a real-valued search space.

In PSO there is a set of particles, called a swarm [5], that are possible solutions to the problem. These particles move through an n-dimensional search space based on their neighbours' best positions and on their own best position. To achieve this, in each generation the position and velocity of the particles are updated based on the best position obtained by that particle and the global best position obtained from all particles in the swarm. The best particles are determined by the fitness function, which is the problem's objective function.

Each particle p, at some iteration t, has a position x(t) and a displacement velocity v(t). The particle's best (pBest) position p(t) and the global best (gBest) position g(t) are stored in the associated memory. The velocity and position are updated using equations 3 and 4 respectively.

$$v_{id}^{new} = v_{id}^{old} + c_1 \, rand() \, (pBest_{id} - x_{id}) + c_2 \, rand() \, (gBest_{d} - x_{id}) \quad (3)$$

$$x_{id}^{new} = x_{id}^{old} + v_{id}^{new} \quad (4)$$

where
$v_i$ is the particle velocity of the i-th particle
$x_i$ is the i-th, or current, particle
i is the particle's number
d is the dimension of the search space
rand() is a random number in (0, 1)
$c_1$ is the individual factor
$c_2$ is the societal factor
pBest is the particle best
gBest is the global best

Both $c_1$ and $c_2$ are set to 2 in all the literature analyzed, and hence the same value is adopted here. The velocity $v_i$ of each particle is clamped to a maximum velocity $v_{max}$, which is specified by the user. $v_{max}$ determines the resolution with which regions between the present position and the target position are searched.

The pseudocode for the PSO algorithm is given below.

For each particle
    Initialize particle position and velocity
End

Repeat
    For each particle
        Calculate fitness value
        If the fitness value is better than its personal best
            Set current value as the new pBest
        End
    End
    Choose the particle with the best fitness value of all as gBest
    For each particle
        Calculate particle velocity according to equation (3)
        Update particle position according to equation (4)
    End
Until maximum number of iterations or minimum error criteria
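The loop above can be sketched in Python as follows. This is only an illustrative reading of the pseudocode for a maximization problem, not the authors' Java implementation: the function name `pso_maximize`, the toy objective, the bounds and the fixed random seed are all assumptions, and positions are not clamped to the initial bounds.

```python
import random


def pso_maximize(fitness, dim, bounds, swarm_size=20, iterations=50,
                 c1=2.0, c2=2.0, v_max=1.0, seed=0):
    """Basic PSO (no inertia weight) following equations (3) and (4)."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Random initial positions and velocities.
    x = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(swarm_size)]
    v = [[rng.uniform(-v_max, v_max) for _ in range(dim)] for _ in range(swarm_size)]
    pbest = [p[:] for p in x]
    pbest_fit = [fitness(p) for p in x]
    g = max(range(swarm_size), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]
    history = [gbest_fit]

    for _ in range(iterations):
        # Evaluate fitness and update each particle's personal best.
        for i in range(swarm_size):
            f = fitness(x[i])
            if f > pbest_fit[i]:
                pbest_fit[i], pbest[i] = f, x[i][:]
        # The best personal best of the whole swarm becomes gBest.
        g = max(range(swarm_size), key=lambda i: pbest_fit[i])
        if pbest_fit[g] > gbest_fit:
            gbest, gbest_fit = pbest[g][:], pbest_fit[g]
        # Velocity update (equation 3), clamping to v_max, position update (equation 4).
        for i in range(swarm_size):
            for d in range(dim):
                vel = (v[i][d]
                       + c1 * rng.random() * (pbest[i][d] - x[i][d])
                       + c2 * rng.random() * (gbest[d] - x[i][d]))
                v[i][d] = max(-v_max, min(v_max, vel))
                x[i][d] += v[i][d]
        history.append(gbest_fit)
    return gbest, gbest_fit, history


# Hypothetical toy objective: maximize -(x^2 + y^2), whose optimum is at the origin.
best, best_fit, history = pso_maximize(lambda p: -sum(c * c for c in p),
                                       dim=2, bounds=(-5.0, 5.0), iterations=30)
```

Because gBest is only replaced by a strictly better personal best, the recorded best fitness never decreases from one iteration to the next.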

The initial population is selected based on fitness value. The velocity and position of all the particles are set randomly. The importance of the particles is evaluated using the fitness function. The fitness function is based on the support and confidence of the association rule, and its objective is maximization. The fitness function is shown in equation 5.

$$Fitness(k) = confidence(k) \times \log(support(k) \times length(k) + 1) \quad (5)$$

Fitness(k) is the fitness value of association rule type k, confidence(k) is the confidence of association rule type k, support(k) is the actual support of association rule type k and length(k) is the length of association rule type k. The larger the support and confidence values, the larger the fitness value, meaning that the rule is an important association rule.

2.3 Predictive Accuracy

Predictive accuracy measures the effectiveness of the rules mined. The mined rules must have high predictive accuracy.

$$\text{Predictive accuracy} = \frac{|X \,\&\, Y|}{|X|} \quad (6)$$

where |X&Y| is the number of records that satisfy both the antecedent X and the consequent Y, and |X| is the number of records satisfying the antecedent X.
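As a small illustration, the ratio of |X&Y| to |X| can be computed as below. The sketch assumes that records are sets of attribute=value strings and that predictive accuracy is exactly this ratio, as the definitions above imply; the function name and the records are hypothetical.

```python
def predictive_accuracy(records, antecedent, consequent):
    """|X & Y| / |X|: share of records matching X that also match Y."""
    x = frozenset(antecedent)
    y = frozenset(consequent)
    covered = [r for r in records if x <= r]       # records satisfying X
    if not covered:
        return 0.0
    correct = sum(1 for r in covered if y <= r)    # of those, records satisfying Y
    return correct / len(covered)


# Hypothetical records for the rule outlook=sunny -> play=no.
records = [
    frozenset({"outlook=sunny", "play=no"}),
    frozenset({"outlook=sunny", "play=no"}),
    frozenset({"outlook=sunny", "play=yes"}),
    frozenset({"outlook=rain", "play=yes"}),
]
accuracy = predictive_accuracy(records, {"outlook=sunny"}, {"play=no"})
```

Three records satisfy the antecedent and two of them also satisfy the consequent, so the rule's predictive accuracy on these records is 2/3.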

3. PSO and its Variants

Particle swarm optimization is based on swarm intelligence. PSO has no crossover or mutation operations. Over the generations, only the best particle transmits information to the other particles. The search is therefore fast and simple to carry out.

The swarm behaviour varies between exploratory behaviour, that is, searching a broader region of the search space, and exploitative behaviour, that is, a locally oriented search so as to get closer to a (possibly local) optimum. The PSO algorithm and its parameters must be chosen properly to balance exploration and exploitation, so as to avoid premature convergence to a local optimum while still ensuring a good rate of convergence to the optimum. To avoid premature convergence at local optima, PSO variants are proposed and tested for mining association rules.

Variations have been introduced in the velocity update function to ensure convergence towards the global optimum rather than local optima.

3.1 Particle Swarm Optimization with Inertia Weight

Inertia weight is added to the velocity update function, and equation 3 is modified as

$$v_{id}^{new} = \omega \, v_{id}^{old} + c_1 \, rand() \, (pBest_{id} - x_{id}) + c_2 \, rand() \, (gBest_{d} - x_{id}) \quad (7)$$

where $\omega$ is the inertia weight factor. The inertia weight is employed to control the impact of the previous history of velocities on the current velocity, and thus to influence the trade-off between the global (wide-ranging) and local (nearby) exploration abilities of the "flying points". A larger inertia weight facilitates global exploration (searching new areas), while a smaller inertia weight tends to facilitate local exploration to fine-tune the current search area. Suitable selection of the inertia weight can provide a balance between global and local exploration abilities and thus requires fewer iterations on average to find the optimum.
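The role of the inertia weight can be seen in a short Python sketch of the weighted velocity update for a single particle. The function name, the optional clamping argument and the example vectors are assumptions made for illustration; the symbol w stands for the inertia weight.

```python
import random


def update_velocity(v, x, pbest, gbest, w, c1=2.0, c2=2.0, v_max=None, rng=random):
    """Inertia-weighted velocity update for one particle, dimension by dimension."""
    new_v = []
    for vd, xd, pd, gd in zip(v, x, pbest, gbest):
        vel = (w * vd                               # memory of the previous velocity
               + c1 * rng.random() * (pd - xd)      # pull towards the particle best
               + c2 * rng.random() * (gd - xd))     # pull towards the global best
        if v_max is not None:
            vel = max(-v_max, min(v_max, vel))      # clamp to [-v_max, v_max]
        new_v.append(vel)
    return new_v


# When the particle already sits on both pBest and gBest, only the inertia term remains.
origin = [0.0, 0.0]
damped = update_velocity([1.0, -2.0], origin, origin, origin, w=0.5)
clamped = update_velocity([1.0, -2.0], origin, origin, origin, w=0.5, v_max=0.75)
```

In this example the attraction terms vanish, so the new velocity is simply the old velocity scaled by w; a larger w keeps the particle moving (exploration), while a smaller w lets it settle (exploitation).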

3.2. Chaotic Particle Swarm Optimization

The canonical PSO tends to get stuck at local optima, leading to premature convergence when applied to practical problems. To improve the global searching capability and escape from local optima, chaos is introduced in PSO [14]. Chaos is a deterministic dynamic system that is very sensitive to, and dependent on, its initial conditions and parameters. A common method of generating chaotic behaviour is based on the Zaslavskii map [15]. This map involves many variables; setting the right values for all of them increases the complexity of the system, and erroneous values might bring down its accuracy. The logistic map and the tent map are also frequently used to generate chaotic behaviour. The drawback of these maps is that the values they generate become confined to a particular range after some iterations. To overcome this defect, the tent map undisturbed by the logistic map [16] is introduced as the chaotic behaviour. The new chaotic map model is given by the following equation.

[Equation (8): chaotic map defining the sequences $u_k$ and $v_k$; the formula is not recoverable from this copy.]

The initial values of $u_0$ and $v_0$ are set to 0.1. Slight tuning of the initial values of $u_0$ and $v_0$ creates a wide range of values with good distribution. The chaotic operator chaotic_operator(k) = $v_k$ is therefore designed to generate different chaotic operators by tuning $u_0$ and $v_0$. The value of $u_0$ is set to two different values for generating chaotic operators 1 and 2.

The velocity update equation based on chaotic PSO is given in equation 9.

[Equation (9): chaotic PSO velocity update; the formula is not recoverable from this copy.]
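For orientation, the two standard maps named in this section can be sketched in Python. This shows only the textbook logistic map (with parameter 4) and the textbook tent map, not the combined map of equation 8; the function names and the helper `iterate` are illustrative assumptions.

```python
def logistic_map(x, mu=4.0):
    """One step of the logistic map x -> mu * x * (1 - x)."""
    return mu * x * (1.0 - x)


def tent_map(x):
    """One step of the tent map: 2x below 0.5, 2(1 - x) otherwise."""
    return 2.0 * x if x < 0.5 else 2.0 * (1.0 - x)


def iterate(step, x0, n):
    """Return x0 followed by n successive applications of `step`."""
    values = [x0]
    for _ in range(n):
        values.append(step(values[-1]))
    return values


# Starting from 0.1, the same initial value used for u_0 and v_0 in the text.
logistic_sequence = iterate(logistic_map, 0.1, 20)
tent_sequence = iterate(tent_map, 0.1, 5)
```

Both maps keep their values inside [0, 1], which is why such sequences can stand in for the random factors of a velocity update; a small change in the starting value produces a very different sequence after a few steps.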

3.3 Neighbourhood Selection in PSO

The original PSO defines two kinds of neighbourhoods:

In the gBest swarm, all the particles are neighbours of each other; thus, the position of the best overall particle in the swarm is used in the social term of the velocity update equation. gBest swarms converge fast, as all the particles are attracted simultaneously to the best part of the search space. However, if the global optimum is not close to the best particle, it may be impossible for the swarm to explore other areas; this means that the swarm can be trapped in local optima.

In the lBest swarm, only a specific number of particles (the neighbour count) affect the velocity of a given particle. The swarm converges more slowly but has a greater chance of locating the global optimum.

Because the local best (lBest) value favours convergence at the global optimum, the lBest value is selected from neighbourhood values rather than from the particle's best values so far. The neighbourhood best (lBest) selection is done as follows:

Calculate the distance of the current particle from the other particles by equation 10.

[Equation (10): distance between the current particle and another particle; the formula is not recoverable from this copy.]

Find the nearest m particles as the neighbours of the current particle, based on the distance calculated.

Choose the local optimum lBest among the neighbourhood in terms of fitness values.

The number of neighbourhood particles m is set to 2. Velocity and position updates of the particles are based on equations 3 and 4. The velocity update is restricted to the maximum velocity $V_{max}$ set by the user. The termination condition is a fixed number of generations.
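The three selection steps can be sketched in Python as follows. Two points are assumptions rather than statements from the text: the distance is taken to be Euclidean, and the current particle is excluded from its own neighbourhood. The function name and the sample swarm are hypothetical.

```python
import math


def local_best(index, positions, fitnesses, m=2):
    """Index of the fittest particle among the m nearest neighbours of `index`."""
    current = positions[index]
    # Step 1: distance from the current particle to every other particle
    # (Euclidean distance is an assumption).
    others = [j for j in range(len(positions)) if j != index]
    others.sort(key=lambda j: math.dist(current, positions[j]))
    # Step 2: keep the m nearest particles as the neighbourhood.
    neighbours = others[:m]
    # Step 3: the neighbour with the highest fitness is lBest.
    return max(neighbours, key=lambda j: fitnesses[j])


# Hypothetical swarm of four particles in two dimensions.
positions = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (10.0, 10.0)]
fitnesses = [0.1, 0.5, 0.9, 1.0]
lbest_of_first = local_best(0, positions, fitnesses)
lbest_of_last = local_best(3, positions, fitnesses)
```

For the first particle the two nearest neighbours are particles 1 and 2, so particle 2 is its lBest even though the distant particle 3 has the highest fitness in the swarm; this is how the neighbourhood limits the pull of a single global leader.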

3.4 Self Adaptive Particle Swarm Optimization (SAPSO1 and SAPSO2)

The original PSO has good convergence ability but suffers from premature convergence [11] due to the loss of diversity [12]. Improving the exploration ability of PSO has been an active research topic in recent years. Thus, the proposed algorithm introduces self-adaptation as the primary means of tuning the two basic rules, velocity and position. Effectively, reinforcing a PSO implies improving the inertia weight formulae and thereby maintaining the diversity of the population. The basic PSO, presented by Eberhart and Kennedy in 1995 [3], has no inertia weight. In 1998, Shi and Eberhart [13] first presented the concept of inertia weight by introducing a constant inertia weight.

Looking at equation (3) more closely, it can be seen that the maximum velocity allowed actually serves as a constraint that controls the maximum global exploration ability PSO can have. If the maximum velocity allowed is set too small, the maximum global exploration ability is limited, and PSO will always favour a local search no matter what the inertia weight is. If it is set large, PSO can have a wide range of exploration ability to choose from through the inertia weight. Since the maximum velocity allowed affects global exploration ability indirectly and the inertia weight affects it directly, it is generally better to control global exploration ability through the inertia weight only. A way to do that is to allow the inertia weight itself to control exploration ability. Thus the inertia weight is made self adaptive. Two self adaptive inertia weights are introduced for mining association rules in this paper.

To decrease the inertia weight linearly as the iterations progress, the inertia weight is made adaptive through equation 11 in SAPSO1.

$$\omega = \omega_{max} - (\omega_{max} - \omega_{min}) \frac{g}{G} \quad (11)$$

where $\omega_{max}$ and $\omega_{min}$ are the maximum and minimum inertia weights, g is the generation index and G is the predefined maximum number of generations.

In SAPSO2 the inertia weight adaptation depends on the value from the previous generation, so that the weight decreases linearly with increasing iterations, as shown in equation 12.

$$\omega(t+1) = \omega(t) - \frac{\omega_{max} - \omega_{min}}{G} \quad (12)$$

where $\omega(t+1)$ is the inertia weight for the current generation, $\omega(t)$ is the inertia weight for the previous generation, $\omega_{max}$ and $\omega_{min}$ are the maximum and minimum inertia weights and G is the predefined maximum number of generations.
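The two schedules can be compared with a short Python sketch. It assumes the usual linearly decreasing forms, namely a weight computed from the generation index g for SAPSO1 and a weight reduced by a fixed step of (w_max - w_min) / G from the previous generation for SAPSO2; these forms are assumptions consistent with the symbol definitions above, and the function names and the starting value for SAPSO2 are hypothetical.

```python
def sapso1_weight(g, G, w_max=0.9, w_min=0.4):
    """Inertia weight at generation g, decreasing linearly from w_max to w_min."""
    return w_max - (w_max - w_min) * g / G


def sapso2_weight(w_prev, G, w_max=0.9, w_min=0.4):
    """Inertia weight obtained from the previous generation's weight."""
    return w_prev - (w_max - w_min) / G


G = 100
# SAPSO1: the weight depends only on the generation index.
schedule1 = [sapso1_weight(g, G) for g in range(G + 1)]

# SAPSO2: the weight is carried from one generation to the next.
# Starting from w_max is an assumption made for this comparison.
schedule2 = [0.9]
for _ in range(G):
    schedule2.append(sapso2_weight(schedule2[-1], G))
```

Under these assumptions, and when SAPSO2 starts from w_max, both schedules trace the same line from 0.9 down to 0.4 over 100 generations; they differ when SAPSO2 starts from another initial weight, since it then keeps the same slope but a different offset.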

The steps in self adaptive PSO1 and PSO2 are as follows.

Step 1: Initialize the position and velocity of the particles.

Step 2: Evaluate the importance of each particle using the fitness function. The objective of the fitness function is maximization. Equation 13 describes the fitness function.

$$Fitness(x) = confidence(x) \times \log(support(x) \times length(x) + 1) \quad (13)$$

where fitness(x) is the fitness value of association rule type x, support(x) and confidence(x) are as described in equations 1 and 2, and length(x) is the length of association rule type x. The larger the support and confidence, the greater the strength and importance of the rule.

Step 3: Get the particle best and global best for the swarm. The particle best is the best fitness attained by the individual particle up to the present iteration, and the overall best fitness attained by all the particles so far is the global best.

Step 4: Set $\omega_{max}$ to 0.9 and $\omega_{min}$ to 0.4 and find the adaptive weights for both SAPSO1 and SAPSO2. Update the velocity of the particles using equation 7.

Step 5: Update the position of the particles using equation 4.

Step 6: Terminate if the condition is met.

Step 7: Go to step 2.

3.5 Self Adaptive Chaotic Particle Swarm Optimization (SACPSO)

The major drawback of standard PSO lies in its premature convergence, especially when handling problems with many local optima. Based on the standard PSO, a novel chaotic operator is introduced with the expectation of keeping the local diversity as well as enhancing the reliability of the algorithm. The velocity of each particle is updated by the following equation:

[Equation (14): SACPSO velocity update using chaotic_operator; the formula is not recoverable from this copy.]

where chaotic_operator is an iterative value obtained by chaotic mapping. The chaotic operators are generated based on equation 8. The use of a fixed inertia weight does not influence the balance between global and local search. When the value of $\omega$ is large, it could undermine excellent solutions in the search space, and the algorithm does not even slow down its convergence. Hence, an adaptive optimization method in which $\omega$ is made dynamic is proposed, as given in equation 11.

4. Evaluation Results and Discussion

To test the performance of the variants of PSO for mining association rules, computational experiments were carried out on well-known benchmark datasets from the University of California Irvine (UCI) repository. The experiments were carried out in Java on the Windows platform. The datasets considered for the experiments are listed in Table 1.

Table 1. Datasets Description

| Dataset | Attributes | Instances | Attribute characteristics |
|---|---|---|---|
| Lenses | 4 | 24 | Categorical |
| Car Evaluation | 6 | 1728 | Categorical, Integer |
| Haberman's Survival | 3 | 310 | Integer |
| Post-operative Patient Care | 8 | 87 | Categorical, Integer |
| Zoo | 16 | 101 | Categorical, Binary, Integer |

The initial parameters set for the evaluation are listed in Table 2.

Table 2. Parameter values set for the Experiment

| Dataset | Swarm Size | C1 | C2 | Inertia Weight | Generations | ω_max | ω_min |
|---|---|---|---|---|---|---|---|
| Lenses | 24 | 2 | 2 | 0.2 | 100 | 0.9 | 0.4 |
| Car Evaluation | 700 | 2 | 2 | 0.4 | 100 | 0.9 | 0.4 |
| Haberman's Survival | 300 | 2 | 2 | 0.4 | 100 | 0.9 | 0.4 |
| Post-operative Patient Care | 87 | 2 | 2 | 0.3 | 100 | 0.9 | 0.4 |
| Zoo | 101 | 2 | 2 | 0.3 | 100 | 0.9 | 0.4 |

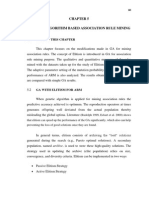

Balancing between exploration and exploitation is carried out using the proposed variants of PSO, and the results for the five datasets are plotted in figures 1 to 5.

Figure 1. Convergence of Predictive Accuracy for Lenses Dataset

Figure 2. Convergence of Predictive Accuracy for Car Evaluation Dataset

Figure 3. Convergence of Predictive Accuracy for Haberman's Survival Dataset

Figure 4. Convergence of Predictive Accuracy for Post Operative Patient Care Dataset

Figure 5. Convergence of Predictive Accuracy for Zoo Dataset

[Figures 1 to 5: predictive accuracy (%) plotted against the number of iterations (10 to 100) for PSO, WPSO, CPSO, NPSO, SAPSO1, SAPSO2 and SACPSO.]

The self adaptive variants SAPSO1, SAPSO2 and SACPSO give consistent performance throughout the generations when compared to the other variants. The predictive accuracy achieved by applying these self adaptive methods to association rule mining is better than that of the normal variants. The traditional particle swarm optimization method, when applied to AR mining, converges at a very early stage for all the datasets. The performance of WPSO, CPSO and NPSO varies from dataset to dataset. It is consistent for the Zoo and Post-operative Patient Care datasets but inconsistent for the Lenses, Haberman's Survival and Car Evaluation datasets.

The purpose of introducing the variants in PSO is to avoid premature convergence and in turn increase the predictive accuracy of the mined rules. The predictive accuracy of the PSO variants for all five datasets is plotted in figure 6.

Figure 6. Predictive Accuracy comparison for PSO Variants

[Figure 6: predictive accuracy (%) for the Lenses, Car Evaluation, Haberman's Survival, Post-operative Patient Care and Zoo datasets, for PSO, CPSO, NPSO, WPSO, SAPSO2, SAPSO1 and SACPSO.]

The variants of PSO perform better than traditional PSO for mining association rules. In terms of predictive accuracy, the self adaptive methods SAPSO1, SAPSO2 and SACPSO perform better than the normal PSO variants CPSO, WPSO and NPSO. Among the normal variants, the weighted PSO performs better than the chaotic PSO and the neighbourhood selection PSO for all the datasets.

The iteration at which the maximum predictive accuracy is attained for the five datasets by the variants of PSO in association rule mining is shown in figure 7.

Figure 7. Convergence rate comparison for PSO variants

The convergence rate varies from dataset to dataset for all the methods. The methods that avoid convergence at local optima generate association rules with maximum accuracy, as can be noted from figures 6 and 7.

The variants of PSO attempt to avoid convergence at local optima by balancing exploration and exploitation. The predictive accuracy achieved by the variants is also enhanced for all the datasets. The inertia weight, chaotic operators, neighbourhood selection and dynamic adaptation of the inertia weight, introduced in the velocity update function, maintain the balance between convergence at local optima and deviation from global optima. The self adaptive methods perform better than the other methods.

5. Conclusion

Association rule mining is one of the most important tasks in the data mining community because the data being generated and stored in databases are already enormous and continue to grow very fast. The Particle Swarm Optimization algorithm mimics social behaviour instead of the survival of the fittest used in most evolutionary algorithms. This principle reduces the time complexity of PSO when compared to other algorithms. The convergence at local optima also tends to reduce the time complexity.

In this paper, inertia weight, chaotic operators, neighbourhood selection and two adaptive methods for inertia weight are introduced in the velocity update function. When applied to association rule mining, these variants result in increased predictive accuracy for all five datasets used. The shift in convergence rate is achieved by avoiding convergence at local optima through the variants of PSO. This also enhances the efficiency of the rules mined.


When compared to PSO, the PSO variants perform better in terms of both predictive accuracy and the balance between exploration and exploitation. The three self adaptive methods SAPSO1, SAPSO2 and SACPSO exhibit consistent performance for all the datasets. The inertia weight variant performs best among the remaining PSO variants. The behaviour of chaotic PSO and neighbourhood selection in PSO varies from dataset to dataset, depending on the attributes involved and their values.

Avoiding exploitation at global search and testing on more datasets could be taken up for further exploration.

References

1. A.A.Freitas. A survey of evolutionary algorithms for data mining and knowledge

discovery, Advances in Evolutionary Computation. Springer-Verlag, 2001.

2. Torn, A. Zilinskas (Eds.), Global Optimization, Lecture Notes in Computer Science, vol.

350, Springer-Verlag, 1989.

3. J. Kennedy, R. Eberhart, Particle swarm optimization, International Conference on

Neural Networks, pp. 19421948, 1995.

4. R. Agrawal, T. Imielin ski, A. Swami, Mining association rules between sets of items in

large databases, ACM SIGMOD 22 (2), pp.207216, 1993.

5. Tiago Sousa, Ana Paula Neves F. da Silva, Arlindo Silva , Ernesto Costa, Particle Swarm

Based Data Mining Algorithms for Classification Tasks, Parallel Computing, 30, pp.

767-783, Elsevier, 2004

6. Yang Shi, Hongcheng Liu, Liang Gao, Guohui Zhang, Cellular particle swarm

optimization, Information Sciences, 181, pp.44604493,2011.

7. M. Clerc, J. Kennedy, The particle swarm-explosion, stability, and convergence in a

multidimensional complex space, IEEE Transactions on Evolutionary Computation,

pp.5873, 2002.

8. F. Van den Bergh, A.P. Engelbrecht, A study of particle swarm optimization particle

trajectories, Information Sciences, 176, pp.937971, 2006.

9. J. Kenndy, Small worlds and mega-minds: effects of neighborhood topology on particle

swarm performance, In: Proceedings of IEEE Congress on Evolutionary Computation,

pp. 19311938, 1999.

10. W.-C. Yeh, Novel swarm optimization for mining classification rules on thyroid gland

data, Inform. Sci. , doi:10.1016/j.ins.2012.02.009, 2012

11. Zhao Xinchao, A perturbed particle swarm algorithm for numerical optimization,

Applied Soft Computing 10 (1), pp. 119124, 2010.

12. Yuxin Zhao, Wei Zub, Haitao Zeng, A modified particle swarm optimization via particle

visual modeling analysis, Computers and Mathematics with Applications 57, pp. 2022

2029, 2009.

13. Y. Shi and R. Eberhart., A modified particle swarm optimizer, International Conference

on Evolutionary Computation Proceedings, IEEE, pp. 6973, 1998.

14. W.J. Kong, W.J. Cheng, J.L. Ding, T.Y. Chai, A Reliable and Efficient Hybrid PSO

Algorithm for Parameter Optimization of LS-SVM for Production Index Prediction

Model, Third International Symposium on Computational Intelligence and Design, vol.2,

pp.140-143, 2010.

15. Bilal Atlas, Erhan Akin, Multi-objective rule mining using a chaotic particle swarm

optimization algorithms, Knowledge based systems,23, pp. 455-460,2009.

16. lal Alatas, Erhan Akin, A. Bedri Ozer, Chaos embedded particle swarm optimization,

Chaos,Solitons&Fractals, vol. 40, no.4, pp. 1715 - 1734, 2009.

17. R.C. Eberhart and Y. Shi., Tracking and optimizing dynamic systems with particle

swarms, Proceedings of the 2001 Congress on Evolutionary Computation, volume 1, pp.

94100, IEEE, 2002

18. M.S. Arumugam and MVC Rao., On the performance of the particle swarm optimization

algorithm with various Inertia Weight variants for computing optimal control of a class

of hybrid systems, Discrete Dynamics in Nature and Society, 2006.

19. Y. Feng, G.F. Teng, A.X. Wang, and Y.M. Yao., Chaotic Inertia Weight in Particle

Swarm Optimization, Proceedings of the 2001 Congress on Innovative Computing,

Information and Control, pp. 475-481. IEEE, 2008.

20. D.B. Chen, C.X. Zhao, Particle swarm optimization with adaptive population size and

its application, Applied Soft Computing. Pp.3948, 2009.

21. Y.S. Jin, K. Joshua, H.M. Lu, Y.Z. Liang, B.K. Douglas, The landscape adaptive

particle swarm optimizer, Applied Soft Computing, 8, pp. 295-304, 2008.

22. A. Nickabadi, M.M. Ebadzadeh, R. Safabakhsh, A novel particle swarm optimization

algorithm with adaptive inertia weight, Applied Soft Computing, 11, pp. 3658-3670, 2011.
