Separating Color and Pattern Information for Color Texture Discrimination

Topi Maenpaa, Matti Pietikainen and Jaakko Viertola

Machine Vision Group

Department of Electrical and Information Engineering

University of Oulu, Finland

{topiolli, mkp, jaskav}@ee.oulu.fi

Abstract

The analysis of colored surface textures is a challenging

research problem in computer vision. Current approaches

to this task can be roughly divided into two categories:

methods that process color and texture information separately and those that utilize multispectral texture descriptions. Motivated by recent psychophysical findings, we consider the former approach the more promising one. We propose the use of complementary color and texture measures that are combined on a higher level, and empirically demonstrate the validity of our proposition using a large set of natural color textures.

1. Introduction

The use of joint color-texture features has been a popular

approach to color texture analysis. One of the first methods allowing spatial interactions within and between spectral bands was proposed by Rosenfeld et al. [15]. Statistics derived from co-occurrence matrices and difference histograms were considered as texture descriptors. Panjwani

and Healey introduced a Markov random field model for

color images which captures spatial interaction both within

and between color bands [12]. Jain and Healey proposed

a multiscale representation including unichrome features

computed from each spectral band separately, as well as

opponent color features that capture the spatial interaction

between spectral bands [5]. Recently, a number of other approaches allowing spatial interactions have been proposed.

In some approaches, only the spatial interactions within

bands are considered. For example, Caelli and Reye proposed a method which extracts features from three spectral

channels by using three multiscale isotropic filters [1].

The financial support provided by Academy of Finland and the Graduate School in Electronics, Telecommunications and Automation is gratefully acknowledged.

Another way of analyzing color texture is to divide the

color signal into luminance and chrominance components,

and process them separately. Many approaches using this

principle have also been proposed. For example, Tan and

Kittler extracted texture features based on the discrete cosine transform of a gray level image, while measures derived from color histograms were used for color description

[17]. A color granite classification problem was used as a

test bed for the method.

The neurophysiological studies of DeYoe and van Essen [2],

for example, support separate processing of luminance and

chrominance information. Also, recent psychophysical

studies by Poirson and Wandell suggest that color and pattern information are processed in separable pathways [14].

In their recent research on the vocabulary and grammar of

color patterns, Mojsilovic et al. conclude that human perception of pattern is unrelated to the color content of an

image [8], while admitting that there are some residual interactions along the pathways.

In the human eye, color information is processed at a lower spatial frequency than intensity. This fact is utilized in image compression and also in imaging sensors. Because photographs are usually intended for a human audience, there is no need to acquire colors in high resolution. For example, color CCD chips measure color on each pixel using just one sensor band instead of all three. Therefore, the full color information for a pixel must be calculated from its neighbors using an interpolation routine of some type. The interpolation is not noticeable to the human eye, but it might destroy information that is useful for a computer vision system. It can, however, be argued that since our eyes have been evolving for millions of years, they would use high-resolution color information if it were useful.

In this paper, we empirically show that the use of separate feature spaces for color texture discrimination is the

preferred choice. Using two sets of natural color textures we

compare multispectral texture features with separate color

and texture features. We also demonstrate that the use of

high-resolution color information does not necessarily help

joint color-texture operators.

2. Experiments

2.1. Image Data

We arranged three experiments with two different texture sets. The sets included 54 color textures from the Vision Texture database [7], and 68 color textures from the

Outex texture database [11]. The main difference between

these two is that in the former, texture images are taken under non-specified illumination conditions and imaging geometries whereas the latter has a fixed imaging geometry

and strictly specified illumination sources. Furthermore,

the textures in Outex have been imaged with a three-CCD

digital camera, which means that their color resolution is

as good as the intensity resolution. This allows us to empirically evaluate whether the performance of joint color-texture operators is affected by the color resolution. Outex

also provides many different versions of the same texture

illuminated with different light sources.

First, the 54 VisTex textures were split into 128x128

pixel sub-images. Since the size of the original images was

512x512, this makes up a total of 16 sub-images per texture.

Half of the samples from each texture were used in training

while the rest served as testing data. A checkerboard pattern

was used in dividing the sub-images into two sets, the upper

left sub-image being the first training sample. This data was submitted to the Outex site as test suite Contrib_TC_00006 [11].
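The sub-image split described above can be sketched as follows. This is a minimal illustration, not the authors' code: the function names `split_into_tiles` and `checkerboard_split` are hypothetical, and dropping the leftover pixels at the image border is an assumption, since the text does not say how they were handled.

```python
import numpy as np

def split_into_tiles(image, tile=128):
    """Cut an image into non-overlapping tile x tile sub-images, row by row.

    Pixels that do not fill a complete tile at the right or bottom edge are
    dropped (an assumption). Returns a list of (row, col, sub_image) tuples.
    """
    rows, cols = image.shape[0] // tile, image.shape[1] // tile
    return [(r, c, image[r * tile:(r + 1) * tile, c * tile:(c + 1) * tile])
            for r in range(rows) for c in range(cols)]

def checkerboard_split(tiles):
    """Assign tiles to training and test sets in a checkerboard pattern.

    The upper-left tile (0, 0) goes to the training set.
    """
    train = [t for r, c, t in tiles if (r + c) % 2 == 0]
    test = [t for r, c, t in tiles if (r + c) % 2 == 1]
    return train, test

# Placeholder array standing in for one 512x512 color texture.
texture = np.zeros((512, 512, 3), dtype=np.uint8)
train, test = checkerboard_split(split_into_tiles(texture))
```

With a 512x512 input this yields 16 sub-images, 8 for training and 8 for testing, so the 54 textures give 432 samples in each set.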

Second, a set of 68 Outex textures was treated in a similar manner. In this case, the total number of sub-images per texture was 20 because the original size of the images was 746x538 pixels. Thus, there were 680 samples in both the training and the test set. The selected textures were imaged at 100dpi and illuminated with a 2856K incandescent CIE A light source. At the Outex site, this test suite has the id Outex_TC_00013.

Third, the same 68 Outex textures were used as training

data. As test samples, two differently illuminated samples

of the very same textures were utilized. The illumination

sources were 2300K horizon sunlight and 4000K fluorescent TL84. Besides their spectra, the three illumination sources differ slightly in position, which produces varying shadows. Using this problem setting, it was possible to see how illumination changes affect texture and color descriptors. The numbers of training and testing samples in this test were 680 and 1360, respectively. This test suite has the Outex id Outex_TC_00014.

All gray-scale images were scaled so that the mean and

standard deviation of their gray levels were 127 and 20, respectively. This transformation removes the effect of mean

luminance and overall contrast changes, but may fail in normalizing the images against illumination color or geometry

variations. Color images were used as such, and with the

comprehensive normalization algorithm of Finlayson et al.

[3], which normalizes RGB colors against both illumination

geometry and color changes.
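The two normalizations can be sketched as below. The gray-scale part follows directly from the description above. The color part is only an assumed form of the iteration in [3]: alternately scale every pixel so that its channels sum to one, and every channel so that its mean is one third, until nothing changes. The function names and the stopping rule are hypothetical, and strictly positive pixel values are assumed.

```python
import numpy as np

def normalize_gray(gray, target_mean=127.0, target_std=20.0):
    """Linearly rescale gray levels to a fixed mean and standard deviation."""
    gray = np.asarray(gray, dtype=np.float64)
    std = gray.std()
    if std == 0:  # flat image: only the mean can be set
        return np.full_like(gray, target_mean)
    return (gray - gray.mean()) / std * target_std + target_mean

def comprehensive_normalization(rgb, max_iter=200, tol=1e-10):
    """Alternate channel-wise and pixel-wise normalization until stable.

    Assumed form of the procedure in [3]; requires positive pixel values.
    """
    img = np.asarray(rgb, dtype=np.float64).reshape(-1, 3)
    for _ in range(max_iter):
        previous = img
        # Channel step: every color channel gets mean 1/3 (illuminant color).
        img = img / img.mean(axis=0, keepdims=True) / 3.0
        # Pixel step: every pixel satisfies r + g + b = 1 (shading, intensity).
        img = img / img.sum(axis=1, keepdims=True)
        if np.abs(img - previous).max() < tol:
            break
    return img.reshape(np.shape(rgb))
```

Note that `normalize_gray` returns floating-point values without clipping or rounding; whether the original experiments re-quantized the result to 8 bits is not stated.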

2.2. Features

RGB histograms were used as color features. First, each 8-bit color channel was quantized into 16 and 32 levels by dividing the values on each color channel by 16 and 8, respectively. Three-dimensional histograms with $16^3$ and $32^3$ entries were created. Let us denote this quantization method as raw quantization. Second, the quantization method presented in [9, 13] was used in obtaining three- and one-dimensional color distributions. The resulting histograms are later denoted by RGB $16^3$, $32^3$ and $256 \times 3$. As a dissimilarity measure, histogram intersection was utilized [16].
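A minimal sketch of the raw quantization and of histogram intersection is given below. The function names are hypothetical. The quantization method of [9, 13] is not reproduced here; the one-dimensional variant simply concatenates three plain 256-bin channel histograms, which is an assumption about how the $256 \times 3$ distribution is laid out.

```python
import numpy as np

def rgb_histogram_3d(rgb, levels=16):
    """Raw quantization: integer-divide each 8-bit channel, count joint colors."""
    q = np.asarray(rgb, dtype=np.int64) // (256 // levels)  # values 0..levels-1
    index = (q[..., 0] * levels + q[..., 1]) * levels + q[..., 2]
    hist = np.bincount(index.ravel(), minlength=levels ** 3).astype(np.float64)
    return hist / hist.sum()

def rgb_histogram_1d(rgb):
    """Three 256-bin channel histograms concatenated into one 768-bin vector."""
    rgb = np.asarray(rgb, dtype=np.int64)
    parts = [np.bincount(rgb[..., c].ravel(), minlength=256) for c in range(3)]
    hist = np.concatenate(parts).astype(np.float64)
    return hist / hist.sum()

def histogram_intersection(h1, h2):
    """Intersection of two normalized histograms; 1.0 means identical."""
    return float(np.minimum(h1, h2).sum())
```

Histogram intersection is a similarity, so a nearest-neighbor classifier would use something like `1 - histogram_intersection(h1, h2)` as the dissimilarity; that conversion is an assumption, not a detail given in the text.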

As gray-scale texture operators, we selected the Gabor

filtering method of Manjunath and Ma [6] and the local binary pattern (LBP) operator [9, 10] because they both perform very well and have a generalization for multispectral images. Consequently, the opponent color features of

Jain and Healey [5] and an opponent color version of the

LBP operator were used in joint color-texture analysis. As

suggested by the authors, a city-block distance scaled with

the standard deviations of the features was used as a dissimilarity measure for the gray-scale Gabor features. For

opponent-color Gabor features, a squared Euclidean distance scaled with feature variances was used. For LBP distributions, the suggested log-likelihood measure was chosen.
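The three dissimilarity measures named above can be written compactly. This is a sketch under stated assumptions: the function names are hypothetical, `feature_std` and `feature_var` stand for per-feature statistics estimated over the training data, and the small constant `eps` that guards against empty model bins is our addition.

```python
import numpy as np

def scaled_city_block(f, g, feature_std):
    """City-block distance with each feature scaled by its standard deviation."""
    f, g = np.asarray(f, dtype=float), np.asarray(g, dtype=float)
    return float(np.sum(np.abs(f - g) / np.asarray(feature_std, dtype=float)))

def scaled_squared_euclidean(f, g, feature_var):
    """Squared Euclidean distance with each feature scaled by its variance."""
    f, g = np.asarray(f, dtype=float), np.asarray(g, dtype=float)
    return float(np.sum((f - g) ** 2 / np.asarray(feature_var, dtype=float)))

def log_likelihood_dissimilarity(sample_hist, model_hist, eps=1e-12):
    """L(S, M) = -sum_b S_b * log(M_b); smaller values mean a better match."""
    s = np.asarray(sample_hist, dtype=float)
    m = np.asarray(model_hist, dtype=float)
    return float(-np.sum(s * np.log(m + eps)))  # eps avoids log(0) (assumption)
```

For a fixed sample distribution the log-likelihood measure is smallest when the model equals the sample, which is what makes it usable for nearest-neighbor search.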

There is a significant difference between the spatial support of Gabor filters and the LBP operator. The size of

the largest Gabor filters is 35x35 pixels, whereas the basic

LBP operator is calculated in a neighborhood of 3x3 pixels. Therefore, the Gabor filters are likely to capture the

macrostructure of a texture much better than the LBP features. To account for this weakness, we used three variations of the LBP operator. Instead of the traditional 3x3 rectangular neighborhood, we sampled the neighborhood circularly with varying radii, and used a different number of neighborhood samples. The resulting operators are denoted by $\mathrm{LBP}_{8,1}$, $\mathrm{LBP}^{u2}_{16,2}$ and $\mathrm{LBP}^{u2}_{24,3}$, where the subscripts give the number of samples and the neighborhood radius. The superscript u2 indicates that only uniform patterns are in use [10].
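A circular LBP operator with uniform-pattern binning can be sketched as follows. This is an illustration of the general technique in [10], not the authors' implementation. The names `bilinear` and `lbp_u2_histogram`, the starting angle and direction of the sampling circle, and the ordering of the histogram bins are all assumptions. A pattern counts as uniform when its circular bit string has at most two 0/1 transitions, which gives P(P-1)+3 bins including one shared bin for all non-uniform patterns.

```python
import math
import numpy as np

def bilinear(img, y, x):
    """Bilinear interpolation of img at fractional coordinates (y, x)."""
    y0 = np.floor(y).astype(int)
    x0 = np.floor(x).astype(int)
    y1 = np.minimum(y0 + 1, img.shape[0] - 1)
    x1 = np.minimum(x0 + 1, img.shape[1] - 1)
    fy, fx = y - y0, x - x0
    a, b, c, d = img[y0, x0], img[y0, x1], img[y1, x0], img[y1, x1]
    return a + fx * (b - a) + fy * (c - a) + fx * fy * (a - b - c + d)

def lbp_u2_histogram(gray, P=8, R=1.0):
    """Normalized histogram of uniform LBP codes with P samples on radius R."""
    gray = np.asarray(gray, dtype=np.float64)
    H, W = gray.shape
    m = int(math.ceil(R))                      # border that cannot be processed
    ys, xs = np.mgrid[m:H - m, m:W - m]
    center = gray[m:H - m, m:W - m]
    bits = np.empty((P,) + center.shape, dtype=bool)
    for p in range(P):
        angle = 2.0 * math.pi * p / P
        dy = round(-R * math.sin(angle), 9)    # rounding removes 1e-16 noise
        dx = round(R * math.cos(angle), 9)
        bits[p] = bilinear(gray, ys + dy, xs + dx) >= center
    previous = np.roll(bits, 1, axis=0)        # circular predecessor of each bit
    transitions = (bits != previous).sum(axis=0)
    ones = bits.sum(axis=0)
    start = (bits & ~previous).argmax(axis=0)  # where the run of ones begins
    n_bins = P * (P - 1) + 3
    labels = np.full(center.shape, n_bins - 1, dtype=np.int64)  # non-uniform
    uniform = transitions <= 2
    labels[uniform & (ones == 0)] = 0
    labels[uniform & (ones == P)] = n_bins - 2
    mid = uniform & (ones > 0) & (ones < P)
    labels[mid] = 1 + (ones[mid] - 1) * P + start[mid]
    return np.bincount(labels.ravel(), minlength=n_bins) / labels.size
```

Calling `lbp_u2_histogram(gray, 16, 2.0)` gives a 243-bin distribution and `lbp_u2_histogram(gray, 24, 3.0)` a 555-bin one.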

The main difference between the gray-scale Gabor filtering method and its multispectral counterpart is that the latter

uses differences between filtered color channels to mimic

the opponent color processing of the eye. Similarly, the difference between the opponent color and gray-scale LBP operators is that in the former, the center pixel for a neighborhood

and the neighborhood itself can be taken from any color

channel. For three dimensional color spaces this means a

total of nine possible operators and nine feature distributions. We used them all and concatenated the nine histograms into a single distribution containing 2304 bins.
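The nine-operator construction can be illustrated with the basic 3x3 LBP. The sketch below is an assumption-laden illustration rather than the authors' code: the name `opponent_lbp_histogram`, the bit ordering of the eight neighbors, and the per-operator normalization of each 256-bin histogram are our choices.

```python
import numpy as np

# Offsets of the eight 3x3 neighbors, clockwise from the upper-left corner.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def opponent_lbp_histogram(rgb):
    """Concatenate the LBP histograms of all nine center/neighbor channel pairs."""
    rgb = np.asarray(rgb, dtype=np.int64)
    H, W, _ = rgb.shape
    hists = []
    for c in range(3):                      # channel providing the center pixel
        center = rgb[1:H - 1, 1:W - 1, c]
        for n in range(3):                  # channel providing the neighborhood
            codes = np.zeros(center.shape, dtype=np.int64)
            for bit, (dy, dx) in enumerate(OFFSETS):
                neigh = rgb[1 + dy:H - 1 + dy, 1 + dx:W - 1 + dx, n]
                codes |= (neigh >= center).astype(np.int64) << bit
            hist = np.bincount(codes.ravel(), minlength=256).astype(np.float64)
            hists.append(hist / hist.sum())
    return np.concatenate(hists)            # 9 * 256 = 2304 bins
```

When center and neighborhood come from the same channel, the operator reduces to the ordinary gray-scale LBP applied to that channel.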

Finally, two methods of combining color and texture on

a higher level were used. For this experiment, we selected the $\mathrm{LBP}^{u2}_{16,2}$ and 1-D RGB methods because, among the tested color and texture methods, they had the best overall performance alone (see Table 1). During classification, color and texture information can be combined

by using a separate dissimilarity measure for both feature

vectors. The dissimilarities between the corresponding feature vectors may then be summed up to produce an overall

dissimilarity. This method requires the normalization of the

dissimilarities to reduce the effects of incompatible dissimilarity value ranges. We used scaling with mean values.
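A sketch of the dissimilarity sum is shown below. The text only says that the dissimilarities were scaled with mean values; taking the mean over all test/training pairs of each measure is an assumption, as is the function name `combine_by_sum`.

```python
import numpy as np

def combine_by_sum(texture_dissim, color_dissim):
    """Scale each dissimilarity matrix by its own mean value, then add them.

    Both inputs have shape (n_test, n_train). Dividing by the mean makes the
    two measures comparable in magnitude before they are summed.
    """
    t = np.asarray(texture_dissim, dtype=np.float64)
    c = np.asarray(color_dissim, dtype=np.float64)
    return t / t.mean() + c / c.mean()
```

After this scaling, multiplying either measure by a constant no longer changes the combined result, which is the purpose of the normalization.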

Normalizing dissimilarities and summing them together

is far from being the optimal way of combining color and

texture feature vectors. In a case where complementary

color and texture information is used, it is unlikely that both

color and texture make exactly the same mistakes. Therefore, we need a method of combining these two that can take

the strengths and weaknesses of each feature type into account. This can be done by combining the classification results (or class rankings, to be exact) from multiple classifiers.

We used the method of Ho et al. [4] to combine the classification results with color and texture features. Now, each sample was represented by two feature vectors: an $\mathrm{LBP}^{u2}_{16,2}$ distribution and an RGB histogram. The sample sets were

separately classified using each of these feature sets in turn,

and class rankings were used to derive the final decision.

The Borda count was used as a decision criterion.
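The rank-combination step can be sketched in a few lines. This is a generic Borda count, not necessarily the exact variant of [4]. How class rankings were derived from the nearest-neighbor results is not stated, so `rank_classes` ranks each class by its closest training sample, which is an assumption; both function names are hypothetical.

```python
def rank_classes(dissimilarities, train_labels):
    """Rank classes, best first, by the smallest dissimilarity to any of
    their training samples (assumed ranking rule)."""
    best = {}
    for d, label in zip(dissimilarities, train_labels):
        best[label] = min(d, best.get(label, float("inf")))
    return sorted(best, key=best.get)

def borda_count(rankings):
    """Combine class rankings (best class first) from several classifiers.

    For each classifier a class scores the number of classes ranked below it;
    the class with the highest total score wins. Ties go to the class that
    appears first in the first ranking (an assumption).
    """
    scores = {}
    for ranking in rankings:
        n = len(ranking)
        for position, label in enumerate(ranking):
            scores[label] = scores.get(label, 0) + (n - 1 - position)
    return max(scores, key=scores.get), scores

texture_ranking = ["a", "b", "c"]   # hypothetical ranking from texture features
color_ranking = ["b", "c", "a"]     # hypothetical ranking from color features
winner, scores = borda_count([texture_ranking, color_ranking])
```

In this toy case class "b" wins with a score of 3 even though the texture classifier alone preferred "a", which illustrates how a ranking-based combination can correct a mistake made by one feature type.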

2.3. Results

All the aforementioned features were used in classifying the three test sets. We chose a simple non-parametric classification principle, a k-NN classifier with k = 3. The percentages of correct classifications are listed in Table 1. For the cases where color features are used, results are shown both for the non-normalized and normalized textures. The classification accuracy of RGB histograms is severely degraded when the illumination source is not kept constant. The comprehensive normalization algorithm helps a lot, but still the results are not very good. On the other hand, in the constant illumination case (test suite 13) RGB histograms clearly beat texture features. Texture measures also suffer from the illumination color change, but not nearly as much as color. Due to the small variations in illumination source positions, the LBP operators with small neighborhoods suffer from changing shadows. When the size of the neighborhood grows, the accuracy increases. The $\mathrm{LBP}^{u2}_{16,2}$ operator seems to give the most consistent performance over the three experiments, but it also shows a classification accuracy drop of over 10 percentage points in the illumination invariance test. However, in all three experiments it works better than the gray-scale Gabor features. The opponent color LBP works better than opponent color Gabor with constant illumination, but significantly worse if the light source changes. It thus seems that the opponent color LBP relies mainly on local color differences instead of texture pattern information, whereas opponent color Gabor filters are able to measure texture structures at a larger scale, thereby reducing the effect of changes in local differences. Again, the comprehensive normalization algorithm reduces the problem, but the result is still not very good as over half of the samples were misclassified.

Table 1. Classification results (% correct). Where two values are given, they are for non-normalized/normalized color images.

Feature                                               VisTex   Outex 13   Outex 14
RGB histograms
  3-D $16^3$ raw                                      99.1     92.8       13.7/22.6
  3-D $32^3$ raw                                      99.8     93.5       9.0/28.5
  3-D $16^3$                                          99.1     94.7       9.0/27.5
  3-D $32^3$                                          99.5     94.3       9.3/32.1
  1-D $256 \times 3$                                  95.8     91.5       19.0/28.9
Gray-scale texture features
  Gabor                                               90.5     77.6       66.0
  LBP                                                 97.7     81.0       60.0
  $\mathrm{LBP}_{8,1}$                                97.5     80.0       57.6
  $\mathrm{LBP}^{u2}_{16,2}$                          97.0     80.4       69.0
  $\mathrm{LBP}^{u2}_{24,3}$                          92.4     76.2       68.4
Multispectral texture features
  Gabor                                               97.9     81.2       53.3/47.4
  $\mathrm{LBP}_{8,1}$                                98.8     91.2       10.9/46.9
$\mathrm{LBP}^{u2}_{16,2}$ and 1-D RGB $256 \times 3$
  Dissimilarity sum                                   97.0     93.1       26.9/40.8
  Borda count                                         98.8     90.0       59.1/55.4
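The 3-NN decision behind the accuracies in Table 1 can be sketched as follows. The function name `knn_classify` is hypothetical, and the tie rule (prefer the class of the nearest neighbor among the tied classes) is an assumption, since the text does not say how ties among three differently labeled neighbors were resolved.

```python
from collections import Counter

def knn_classify(dissimilarities, train_labels, k=3):
    """Majority vote among the k training samples with the smallest
    dissimilarity to the test sample.

    dissimilarities[i] is the dissimilarity between the test sample and
    training sample i, computed with the measure that suits the feature.
    """
    order = sorted(range(len(train_labels)), key=lambda i: dissimilarities[i])
    nearest = [train_labels[i] for i in order[:k]]   # closest neighbor first
    votes = Counter(nearest)
    top = max(votes.values())
    for label in nearest:                            # tie: nearest class wins
        if votes[label] == top:
            return label

label = knn_classify([0.3, 0.1, 0.2, 0.9], ["a", "b", "a", "c"])
```

Here the three nearest neighbors carry the labels "b", "a" and "a", so the sample is assigned to class "a" although its single nearest neighbor belongs to "b".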

The VisTex textures can be classified almost perfectly with 3-D RGB histograms; the $32^3$ version falls only one sample short of a faultless result. LBP does not do much worse, and there seems to be no significant difference between gray-scale and opponent color versions. However, the opponent color Gabor features clearly defeat their gray-scale counterparts.

3. Discussion

The results show that color and texture indeed have complementary roles. Color histograms are very powerful in

stable illumination conditions, but fail when illumination

conditions change. At the same time, texture features, especially the LBP distributions, provide fairly robust performance irrespective of illumination. In the cases where good results are achieved with multispectral texture descriptors, color histograms are still better. In the cases where color histograms fail, texture features calculated from the luminance information provide the best accuracy. Neither of the two multispectral texture operators was the best one in any of the experiments. In all cases, either color or texture alone, and either of the two methods of combining separate color and texture measures, gave better accuracy. Therefore, we argue that the biological motivation for separate processing of color and pattern information is well justified.

From the biological point of view, these results are

hardly surprising. Just think how the human visual system

works with low illumination levels. We are able to recognize our environment even when we cannot see colors. How

about color-blindness? Does it not affect just the perception

of colors, and not the patterns?

We perceive the patterns in our environment in an essentially constant way independent of the intensity or color

of illumination. Moreover, most of the textural information

seems to be in its high-frequency components. This argument can be based on the fact that the overall classification

accuracy of the LBP operator in a 3x3 neighborhood is better than that of the much larger Gabor filters. Therefore, it

is justifiable to use pattern-related information like the LBP

in addition to color measurements.

High-resolution color information does not seem to benefit the multispectral texture operators much. This is indicated by the fact that in the Outex_TC_00013 test, where high-resolution color is available,

the multi-spectral texture descriptors perform worse than

color histograms, whereas the VisTex textures are classified

with nearly the same accuracy. The opponent-color LBP

outperforms Gabor features in both tests, and its accuracy

is essentially equivalent to the color histograms.

Finally, it should be noted that in many real-world situations, for example in visual inspection, one really must

take computational requirements seriously. The use of complementary feature spaces allows one to optimize color and

texture measures separately. With simple color features calculated from color histograms, and simple texture measures

like the LBP, it is possible to achieve real-time performance

even in very demanding tasks.

A more comprehensive study is still needed to further

confirm the conclusions. Different color spaces and more

features must be investigated. To get the maximum performance out of all features, different classifiers must be used.

Furthermore, to be able to fairly compare LBP and Gabor

features, the LBP operator must be enhanced to measure

more than just local textural structures.

References

[1] T. Caelli and D. Reye. On the classification of image regions by colour, texture and shape. Pattern Recognition, 26(4):461-470, 1993.

[2] E. DeYoe and D. van Essen. Concurrent processing streams in monkey visual cortex. Trends Neurosci., 11:219-226, 1996.

[3] G. Finlayson, B. Schiele, and J. Crowley. Comprehensive colour image normalization. In 5th European Conference on Computer Vision, volume 1, pages 475-490, Freiburg, Germany, 1998.

[4] T. Ho, J. Hull, and S. Srihari. Decision combination in multiple classifier systems. IEEE Transactions on Pattern Analysis and Machine Intelligence, 16(1):66-75, 1994.

[5] A. Jain and G. Healey. A multiscale representation including opponent color features for texture recognition. IEEE Transactions on Image Processing, 7(1):124-128, Jan. 1998.

[6] B. Manjunath and W. Ma. Texture features for browsing and retrieval of image data. IEEE Transactions on Pattern Analysis and Machine Intelligence, 18(8):837-842, 1996.

[7] MIT Media Lab. Vision texture VisTex database. http://www-white.media.mit.edu/vismod/imagery/VisionTexture/vistex.html.

[8] A. Mojsilovic, J. Kovacevic, D. Kall, R. Safranek, and S. Ganapathy. Matching and retrieval based on the vocabulary and grammar of color patterns. IEEE Transactions on Image Processing, 9(1):38-54, 2000.

[9] T. Ojala, M. Pietikainen, and D. Harwood. A comparative study of texture measures with classification based on feature distributions. Pattern Recognition, 29:51-59, 1996.

[10] T. Ojala, M. Pietikainen, and T. Maenpaa. Multiresolution gray scale and rotation invariant texture analysis with local binary patterns. IEEE Transactions on Pattern Analysis and Machine Intelligence, 24(7), 2002. In press.

[11] T. Ojala, M. Pietikainen, T. Maenpaa, J. Viertola, J. Kyllonen, and S. Huovinen. Outex - new framework for empirical evaluation of texture analysis algorithms. In 16th International Conference on Pattern Recognition, Quebec, Canada, August 2002.

[12] D. Panjwani and G. Healey. Unsupervised segmentation of textured color images. IEEE Transactions on Pattern Analysis and Machine Intelligence, 17(10):939-954, 1995.

[13] M. Pietikainen, S. Nieminen, E. Marszalec, and T. Ojala. Accurate color discrimination with classification based on feature distributions. In 13th International Conference on Pattern Recognition, volume 3, pages 833-838, Vienna, Austria, 1996.

[14] B. Poirson and B. Wandell. Pattern-color separable pathways predict sensitivity to simple colored patterns. Vision Research, 36(4):515-526, 1996.

[15] A. Rosenfeld, C. Ye-Wang, and A. Wu. Multispectral texture. IEEE Transactions on Systems, Man, and Cybernetics, 12(1):79-84, 1982.

[16] M. Swain and D. Ballard. Color indexing. International Journal of Computer Vision, 7:11-32, 1991.

[17] T. Tan and J. Kittler. Colour texture classification using features from colour histogram. In 8th Scandinavian Conference on Image Analysis, volume 2, pages 807-813, Tromso, Norge, 1993.


- Developing Teaching Material of Poetry AppreciationDokumen10 halamanDeveloping Teaching Material of Poetry AppreciationBerry Perdana SitumorangBelum ada peringkat

- Ccny Syllabus EE6530Dokumen2 halamanCcny Syllabus EE6530Jorge GuerreroBelum ada peringkat

- Meditating On Basic Goodness: Tergar Meditation GroupDokumen3 halamanMeditating On Basic Goodness: Tergar Meditation GroupJake DavilaBelum ada peringkat

- Essay1finaldraft 2Dokumen5 halamanEssay1finaldraft 2api-608678773Belum ada peringkat

- Scientist ProjectDokumen1 halamanScientist ProjectEamon BarkhordarianBelum ada peringkat

- What Is William WordsworthDokumen2 halamanWhat Is William WordsworthMandyMacdonaldMominBelum ada peringkat

- Handout Ethics 1-2 IntroductionDokumen12 halamanHandout Ethics 1-2 IntroductionanthonyBelum ada peringkat

- Non-Prose TextDokumen3 halamanNon-Prose TextErdy John GaldianoBelum ada peringkat

- REFLECTION - READING HABITS - 4th QTRDokumen1 halamanREFLECTION - READING HABITS - 4th QTRRyan VargasBelum ada peringkat

- Legal Contract Translation Problems Voices From Sudanese Translation PractitionersDokumen20 halamanLegal Contract Translation Problems Voices From Sudanese Translation PractitionersM MBelum ada peringkat

- FinalDokumen4 halamanFinalSou SouBelum ada peringkat

- 3 Day Challenge WorkbookDokumen29 halaman3 Day Challenge WorkbookMaja Bogicevic GavrilovicBelum ada peringkat

- Teaching by Listening: The Importance of Adult-Child Conversations To Language DevelopmentDokumen10 halamanTeaching by Listening: The Importance of Adult-Child Conversations To Language DevelopmentBárbara Mejías MagnaBelum ada peringkat

- Lesson 5Dokumen4 halamanLesson 5api-281649979Belum ada peringkat

- Short Lesson Plan: Possible Answers: Words That Describe Color, Shape, Size, Sound, Feel, Touch, and SmellDokumen2 halamanShort Lesson Plan: Possible Answers: Words That Describe Color, Shape, Size, Sound, Feel, Touch, and Smellapi-282308927Belum ada peringkat

- Revised ENG LESSON MAPDokumen167 halamanRevised ENG LESSON MAPCyrile PelagioBelum ada peringkat

- Educ 201 Diversity ReflectionsDokumen14 halamanEduc 201 Diversity Reflectionsapi-297507006Belum ada peringkat

- Furniture Lesson PlanDokumen16 halamanFurniture Lesson PlanGhetsuDionysiyBelum ada peringkat

- Genius Report - Blaze HHHDokumen8 halamanGenius Report - Blaze HHHashwinBelum ada peringkat

- Considerations in The Use of PodcastsDokumen26 halamanConsiderations in The Use of Podcastsapi-699389850Belum ada peringkat

- Observational LearningDokumen3 halamanObservational LearningBrien NacoBelum ada peringkat

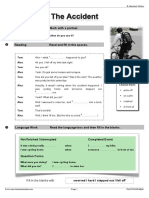

- The Accident WorksheetDokumen4 halamanThe Accident Worksheetjoao100% (1)