Quantifying Human Reliability in Risk Assessments


EI TECHNICAL

Safety

Quantifying human reliability

in risk assessments

Jamie Henderson and David Embrey, from Human Reliability Associates, provide an

overview of the new EI Technical human factors publication Guidance on quantified

human reliability analysis (QHRA).

Following the Buncefield accident in

2005, operators of bulk petroleum

storage facilities in the UK were

requested to provide greater assurance

of their overfill protection systems by

risk assessing them using the layers of

protection analysis (LOPA) technique. A

subsequent review of LOPAs [1] indicated

a recurring problem with the use of

human error probabilities (HEPs)

without an adequate consideration of

the conditions that influence these

probabilities in the scenario under consideration. It is obvious that the error

probability will be affected by a

number of factors (eg time pressure,

quality of procedures, equipment

design and operating culture) that are

likely to be specific to the situation

being evaluated. Using an HEP from a

database or table without considering

the task context can therefore lead to

inaccurate results in applications such

as quantified risk assessment (QRA),

LOPA and safety integrity level (SIL)

determination studies.
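To illustrate this sensitivity, the minimal Python sketch below multiplies an initiating event frequency by the failure probabilities of two protection layers, as a simplified LOPA-style calculation might. Every number, and the function name `mitigated_frequency`, is a hypothetical assumption chosen for illustration; none is taken from the EI guidance or from a real assessment.

```python
from math import prod


def mitigated_frequency(initiating_freq_per_year, layer_failure_probs):
    """Initiating event frequency multiplied by the failure probability
    of each (assumed independent) protection layer."""
    return initiating_freq_per_year * prod(layer_failure_probs)


# Hypothetical overfill scenario: all figures are illustrative only.
initiating_freq = 0.1   # assumed initiating events per year
hardware_pfd = 0.01     # assumed independent high-level trip

generic_hep = 0.01      # HEP lifted from a table without checking the context
context_hep = 0.1       # same task reassessed under assumed poor conditions

generic = mitigated_frequency(initiating_freq, [generic_hep, hardware_pfd])
contextual = mitigated_frequency(initiating_freq, [context_hep, hardware_pfd])

print(f"generic HEP:    {generic:.1e} events per year")
print(f"contextual HEP: {contextual:.1e} events per year")
print(f"ratio:          {contextual / generic:.0f}x")
```

With these assumed inputs, a tenfold change in the HEP carries straight through to a tenfold change in the estimated event frequency.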

Human reliability analysis (HRA) techniques are available to support the

development of HEPs and, in some cases,

their integration into QRAs. However,

without a basic understanding of human

factors issues, and the strengths, weaknesses and limitations of the

techniques, their use can lead to wildly

pessimistic or optimistic results.

Using funding from its Technical

Partners and other sponsors, the Energy

Institute's (EI) SILs/LOPAs Working

Group commissioned Human Reliability

Associates to develop guidance in this

area. The aim is to reduce instances of

poorly conceived or executed analyses.

The guidance provides an overview of

important practical considerations,

worked examples and supporting

checklists, to assist with commissioning

and reviewing HRAs [2].

HRA techniques

HRA techniques are designed to support

the assessment and minimisation of

risks associated with human failures.

They have both qualitative (eg task

analysis, failure identification) and

quantitative (eg human error estimation) components. The guidance focuses

primarily on quantification, but illustrates the importance of the associated

qualitative analyses that can have a significant impact on the numerical results.

Further EI guidance on qualitative

analysis is also available [3]. There are a

large number of available HRA techniques that address quantification: one

review identified 72 different methods [4].

The respective merits of HRA techniques

are not addressed in the new guidance,

since this information, and more

detailed discussion of the concept of

HRA, are available elsewhere [4, 5, 6].

Attempts to quantify the probability

of human failures have a long history.

Early efforts treated people like any

other component in a reliability assessment (eg what is the probability of an

operator failing to respond to an

alarm?). Because these assessments

required probabilities as inputs, there

was a requirement to develop HEP

databases. However, very few industries

were prepared to invest in the effort

required to collect the data to develop

HEPs, so this led to the widespread use

of generic data contained in tools such

as THERP (technique for human error

rate prediction). In fact, the data contained in THERP and other popular

quantification techniques such as

HEART (human error assessment and

reduction technique) are actually

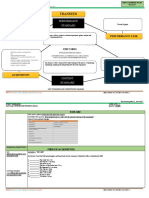

1. Preparation and problem definition

2. Task analysis

3. Failure identification

4. Modelling

5. Quantification

6. Impact assessment

7. Failure reduction

8. Review

Table 1: Generic HRA process

30

Figure 1: Examples of the potential impact of human failures on an event sequence

PETROLEUM REVIEW NOVEMBER 2012

derived primarily from laboratory-based

studies on human performance.

As it became recognised that people

and, consequently, HEPs are significantly

influenced by a wide range of

environmental factors, techniques were

developed to modify baseline generic

HEPs to take into account these contextual

factors (eg time pressure, distractions,

quality of training and quality of the

human-machine interface), known as

performance influencing factors (PIFs) or

performance shaping factors (PSFs). A

parallel strand of development was in the

use of expert judgement techniques, such

as paired comparisons and absolute probability judgement, to derive HEPs. Other

techniques, such as SLIM (success likelihood index method), used a combination

of inputs from human factors specialists

and subject matter experts to develop a

context-specific set of PIFs/PSFs. These were

then used to derive an index, which could

be converted to an HEP, based on the

quality of these factors in the situation

being assessed.
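The conversion from index to HEP can be sketched as follows. This is a minimal illustration, assuming the logarithmic calibration commonly described for SLIM, log10(HEP) = a x SLI + b, with the two constants fixed by a pair of reference tasks whose HEPs are taken as known. The PIF names, weights, ratings (0 = worst, 1 = best), anchor values and function names are all hypothetical.

```python
import math


def success_likelihood_index(weights, ratings):
    """Weighted sum of PIF ratings; weights are normalised to sum to 1."""
    total = sum(weights.values())
    return sum(weights[pif] / total * ratings[pif] for pif in weights)


def calibrate(anchor_a, anchor_b):
    """Solve log10(HEP) = a * SLI + b from two (SLI, HEP) anchor tasks."""
    (sli_1, hep_1), (sli_2, hep_2) = anchor_a, anchor_b
    a = (math.log10(hep_2) - math.log10(hep_1)) / (sli_2 - sli_1)
    b = math.log10(hep_1) - a * sli_1
    return a, b


def sli_to_hep(sli, a, b):
    """Convert a success likelihood index to an HEP."""
    return 10 ** (a * sli + b)


# Hypothetical judgements from specialists and subject matter experts.
weights = {"time pressure": 0.5, "procedures": 0.3, "interface": 0.2}
ratings = {"time pressure": 0.2, "procedures": 0.8, "interface": 0.8}

# Hypothetical anchors: worst conditions (SLI 0) and best conditions (SLI 1).
a, b = calibrate((0.0, 0.1), (1.0, 0.0001))

sli = success_likelihood_index(weights, ratings)
hep = sli_to_hep(sli, a, b)
print(f"SLI = {sli:.2f}, HEP = {hep:.1e}")
```

The result is only as credible as the anchor tasks and the expert ratings behind it, which is why the assumptions need to be recorded alongside the number.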

Despite well-known issues with their

application, and more recent attempts

to develop new techniques that attempt

to address these issues, techniques such

as THERP and HEART are still the most

widely used.

Whilst the quantification of HEPs may

be problematic, the importance of the

human contribution to overall system

risk cannot be overstated. For example,

the bow-tie diagram in Figure 1 shows

how different human failures can affect

the initiation (left-hand side), mitigation

and escalation (right-hand side) of a

hypothetical event.

Practical issues

The EI guidance [2] provides an overview

of some of the most important factors

that can undermine the validity of an

HRA. These include:

Expert judgement

Every HRA technique requires some degree of expert

judgement in deciding which factors

influence the likelihood of error in the

situation being assessed and whether

these are adequately addressed in

the quantification technique. A well-developed understanding of the task

and operating environment is therefore

essential and any HRA report must

include a documented record of all

assumptions made during the analysis.

In particular, this must provide a justification for any HEPs that have been

imported from an external source such

as a database. It may also be useful, in

interpreting the results, to demonstrate

the potential impact of changes to these

assumptions on the final outcome.

Commentary: Identifying failures

Using a set of guidewords to identify potential deviations is a common approach to this stage of the analysis. However, this can be a time-consuming and potentially complex process. There are some steps that can be taken to reduce this complexity, and simplify the subsequent modelling stage:

- Be clear about the consequences of identified failures. If the outcomes of concern are specified at the project preparation stage then some failures will result in consequences that can be excluded (eg production and occupational safety issues).
- Document opportunities for recovery of the failure before consequences are realised (eg planned checks).
- Identify existing control measures designed to prevent or mitigate the consequences of the identified failures.
- Group failures with similar outcomes together. For example, not doing something at all may be equivalent, in terms of outcome, to doing it too late. Care should be taken here, however, as whilst the outcomes may be the same, the reasons for the failures may be different.

Table 2: Example of commentary from the guidance (Step 3 Failure identification)

Impact of task context upon HEPs

As discussed previously, human performance is highly dependent upon

prevailing task conditions. For example,

a simple task, under optimal laboratory

conditions, might have a failure probability of 0.0001 (ie once in 10,000 times).

However, this probability could easily be

degraded to 0.1 (ie once in 10 times) if

the person is subject to PIFs such as high

levels of stress or distractions. There are

very few HEP databases that specify the

contextual factors that were present

when the data were collected. Instead,

the usual approach has been to take

data from other sources, such as laboratory experiments, and modify these HEPs

to suit specific situations.
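The arithmetic behind such a modification can be sketched in Python. The example below assumes a simple multiplier model of the kind used by HEART-like techniques, in which each PIF scales the nominal HEP by a factor weighted by how strongly the assessor judges it to apply. The multipliers (50 and 20), the proportions and the function name are hypothetical; they are chosen only so that the fully degraded case matches the 0.0001 to 0.1 example in the text, and are not taken from any published table.

```python
def adjusted_hep(nominal_hep, pifs):
    """Scale a nominal HEP by each PIF.

    Each PIF is a (max_multiplier, proportion) pair, where proportion
    (0 to 1) is the assessed strength of the PIF in this situation.
    The result is capped at 1.0 because it is a probability.
    """
    hep = nominal_hep
    for max_multiplier, proportion in pifs:
        hep *= (max_multiplier - 1.0) * proportion + 1.0
    return min(hep, 1.0)


nominal = 0.0001  # simple task under optimal laboratory conditions

# Hypothetical multipliers, both PIFs judged to apply at full strength.
full = {"high stress": (50.0, 1.0), "distractions": (20.0, 1.0)}
degraded = adjusted_hep(nominal, full.values())

# The same PIFs judged to apply at only half strength.
half = {"high stress": (50.0, 0.5), "distractions": (20.0, 0.5)}
partial = adjusted_hep(nominal, half.values())

print(f"nominal:           {nominal:.1e}")
print(f"full-strength PIFs: {degraded:.1e}")
print(f"half-strength PIFs: {partial:.1e}")
```

The spread between the two adjusted values shows how much the final figure depends on the assessor's judgement of each PIF, not only on the base data.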

Sources of data in HRA techniques

Depending on the HRA technique used,

it can be difficult to establish the exact

source of the base HEP data. It might be

from operating experience, experimental research, simulator studies,

expert judgement, or some combination

of these sources. This has implications

for the ability of the analyst to determine the relevance of the data source to

the situation under consideration.

| Checklist 3: Reviewing HRA outputs | Guidance section | Yes/No |
| --- | --- | --- |
| 3.1 Was a task analysis developed? | Step 2 Task analysis | |
| 3.2 Did the development of the task analysis involve task experts (ie people with experience of the task)? | Step 2 Task analysis | |
| 3.3 Did the task analysis involve a walkthrough of the operating environment? | Step 2 Task analysis | |
| 3.4 Is there a record of the inputs to the task analysis (eg operator experience, operating instructions, piping and instrumentation diagrams, work control documentation)? | Step 2 Task analysis | |
| 3.5 Was a formal identification process used to identify all important failures? | Step 3 Failure identification | |
| 3.6 Does the analysis represent all obvious errors in the scenario, or explain why the analysis has been limited to a sub-set of possible failures? | Step 3 Failure identification | |

Table 3: Extract from Checklist 3 Reviewing HRA outputs

Qualitative modelling

Some HRA techniques, in addition to

HEP estimation, provide the opportunity

to consider and model the impact of PIFs

upon safety critical tasks. This means

that, whilst the generated HEP may be

treated with scepticism, the analysis

provides useful insights into the factors

affecting task performance and how

these can be improved. For example,

factors such as the quality of communication and equipment layout may be

identified as the PIFs having the

greatest influence over the HEP and,

accordingly, these factors can be prioritised when considering where resources

should be applied in order to achieve

an improved level of reliability.

Whilst in practice it may be difficult

to establish the absolute probability of

failure, an analyst can use appropriate

HRA techniques to establish which factors have the greatest relative impact

on the probability of failure.
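A relative ranking of this kind can be sketched in Python. The example assumes a SLIM-style model in which log10(HEP) falls linearly as a weighted sum of PIF ratings rises, then asks how far the HEP would drop if each PIF in turn were raised to its best rating. The PIF names, weights, ratings (0 = worst, 1 = best), calibration constants and function names are hypothetical.

```python
def hep_from_ratings(weights, ratings, a=-3.0, b=-1.0):
    """Assumed model: log10(HEP) = a * SLI + b, with SLI a weighted sum."""
    sli = sum(weights[pif] * ratings[pif] for pif in weights)
    return 10 ** (a * sli + b)


def rank_pifs(weights, ratings):
    """Rank PIFs by the factor the HEP improves when each one alone
    is raised to its best rating (1.0)."""
    baseline = hep_from_ratings(weights, ratings)
    improvement = {}
    for pif in weights:
        improved = {**ratings, pif: 1.0}
        improvement[pif] = baseline / hep_from_ratings(weights, improved)
    return sorted(improvement.items(), key=lambda item: item[1], reverse=True)


# Hypothetical weights (summing to 1) and current ratings.
weights = {"communication": 0.4, "equipment layout": 0.35, "training": 0.25}
ratings = {"communication": 0.3, "equipment layout": 0.4, "training": 0.8}

ranking = rank_pifs(weights, ratings)
for pif, factor in ranking:
    print(f"{pif}: HEP improves by a factor of {factor:.1f}")
```

Under these assumptions the ordering of the factors is less sensitive to the calibration constants than the absolute HEP is, so the ranking can still guide where improvement effort goes.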

Guidance structure

The guidance that Human Reliability Associates has developed

for the EI [2] takes a generic HRA process

as its starting point (see Table 1).

Each stage is described, alongside a discussion of relevant potential pitfalls and

commentaries regarding important practical considerations. For example, Table 2

addresses issues related to the failure

identification stage of the process.


In addition, to support organisations

commissioning or reviewing HRA

analyses, three checklists are provided:

- Checklist 1: Deciding whether to undertake HRA.
- Checklist 2: Preparing to undertake HRA.
- Checklist 3: Reviewing HRA outputs.

The checklist items are related to the

stages of the HRA process set out in

Table 1. A short, illustrative extract from

Checklist 3 is provided in Table 3.

The guidance also includes full

worked examples, along with associated

commentary related to the checklist

items, to further illustrate common

issues with HRA analyses.

The use of HEPs and associated HRA

techniques is a difficult area. The aim of

the EI guidance is to better equip

non-specialists thinking of undertaking,

or charged with commissioning, HRAs.

In many cases, a qualitative HRA may be

more appropriate than a quantitative

analysis. Any proposed analysis should

be mindful of the pitfalls set out in

the guidance. Moreover, the limitations

of the outputs should be clearly

communicated to the final user of the

analysis.

References

1. Health & Safety Executive, Research Report RR716: A review of Layers of Protection Analysis (LOPA) analyses of overfill of fuel storage tanks, HSE Books, 2009.

2. Energy Institute, Guidance on quantified human reliability analysis (QHRA), 2012.

3. Energy Institute, Guidance on human factors safety critical task analysis, 2011. www.energyinst.org/scta

4. Health & Safety Executive, Research Report RR679: Review of human reliability assessment methods, HSE Books, 2009.

5. Embrey, D E, Human reliability assessment, in Human factors for engineers, Sandom, C and Harvey, R S (eds), ISBN 0 86341 329 3, Institution of Electrical Engineers Publishing, London, 2004.

6. Kirwan, B, A guide to practical human reliability assessment, London: Taylor & Francis, 1994.

Guidance on quantified human reliability analysis (QHRA), ISBN 978 0 85293 635 1, September 2012, is freely available from www.energyinst.org/qhra
