An Oracle White Paper

July 2015

Oracle Fusion Human Capital Management

File-Based Loader User's Guide


Introduction to Oracle Fusion HCM File-Based Loader

File-Based Loader Availability

How File-Based Loader Works

Objects That You Can Load Using File-Based Loader

Using File-Based Loader

Best Practices

Related Documentation

Preparing the Oracle Fusion HCM Environment

Step 1: Configure the Load Batch Data Process

Step 2: Define Oracle Fusion Business Objects

Step 3: Generate the Mapping File of Cross-Reference Information

Preparing and Extracting Source Data

Step 4: Import Cross-Reference Information to the Source Environment

Step 6: Extract the Source Data

Importing and Loading Data to Oracle Fusion HCM

Step 8: Import Source Data to the Stage Tables

Step 9: Load Data from the Stage Tables to the Application Tables

Step 10: Fix Batch-Load Errors

Appendix: Example Data Files

Example 1: Changing the Logical Start Date of an Object

Example 2: Changing the Logical End Date of an Object

Example 3: Uploading a Partial Object Hierarchy

Example 4: Uploading a Partial Object

Example 5: Specifying a Nonstandard Column Order


Introduction to Oracle Fusion HCM File-Based Loader

Oracle Fusion Human Capital Management (Oracle Fusion HCM) File-Based Loader enables you to bulk-load data, including object history, from any data source to Oracle Fusion HCM. Typically, you use File-Based Loader for a once-only upload of data and maintain the data in Oracle Fusion HCM thereafter. However, you can also use File-Based Loader to load data after the initial load in a variety of scenarios. For example, you may want to upload changed data periodically if you are continuing to maintain the source data. Alternatively, you can use File-Based Loader for periodic batch loading of objects such as competencies or element entries.

File-Based Loader Availability

This document applies to File-Based Loader for Oracle Fusion Human Capital Management 11g Release 7 (11.1.7) onwards. Some of the business objects and attributes are specific to a particular release, and are indicated wherever applicable.

How File-Based Loader Works

File-Based Loader uses the Oracle Fusion HCM Load Batch Data process to load your source data to the Oracle Fusion application tables. Load Batch Data is a generic utility for loading data to Oracle Fusion from external sources. For example, it is used by Oracle Fusion Coexistence for HCM, the Oracle Fusion Enterprise Structures Configurator (ESC), and the Oracle Fusion HCM Spreadsheet Data Loader. A key feature of Load Batch Data is that data is imported initially in object-specific batches to stage tables. Data is prepared for load in the stage tables and loaded from the stage tables to the Oracle Fusion application tables.

File-Based Loader is suitable for loading large volumes (tens of thousands) of complex hierarchical objects. You can upload data from any source, provided that, when it is loaded to the Load Batch Data stage tables, it is in a format that satisfies Oracle Fusion business rules. (See the document File-Based Loader Column Mapping Spreadsheet in My Oracle Support document ID 1595283.1 for business rules governing supported objects.)


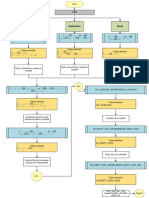

The key stages of the process are shown in the following figure:

Figure 1. A Summary of the Data-Load Process Using Oracle Fusion HCM File-Based Loader

Each step of this process is covered in more detail beginning on page 12.


Objects That You Can Load Using File-Based Loader

Table 1 shows the business objects that you can upload to Oracle Fusion HCM using File-Based Loader. For the initial load, objects must be loaded in the order shown here, which respects dependencies between objects.

TABLE 1. BUSINESS OBJECTS AND THEIR LOAD ORDER

| ORDER | BUSINESS OBJECT | DEPENDENCIES | COMMENTS |
|---|---|---|---|
| 1 | Actions | None | |
| 2 | Action Reasons | Actions | |
| 3 | Location | None | |
| 4 | Business Unit | None | You must map each business unit to a default set immediately after load. |
| 5 | Grade | None | |
| 6 | Grade Rate | Grade, Business Unit | |
| 7 | Job Family | None | |
| 8 | Job | Job Family, Grade | |
| 9 | Salary Basis | Grade Rate | |
| 10 | Establishment | None | |
| 11 | Rating Model | None | |
| 12 | Talent Profile Content Item | Rating Model | |
| 13 | Talent Profile Content Item Relationship | Content Item, Establishment | |
| 14 | Person | None | Employees, contingent workers, pending workers, and nonworkers |
| 15 | Person Contacts | Person | |
| 16 | Person Documentation | Person | Citizenship, Passports, and Visas only. |
| 17 | Department | Location, Person, Business Unit | |
| 18 | Position | Job, Department | |
| 19 | Work Relationship | Person, Position | Includes contracts for both 2-tier and 3-tier employment models. |
| 20 | Salary | Salary Basis | |
| 21 | Element Entry | Work Relationship | |
| 22 | Tree | None | |
| 23 | Tree Version | Tree | |
| 24 | Department Tree Node | Tree, Tree Version, Department | |
| 25 | Person Accrual Detail | Person, Work Relationship | This business object is supported from Release 8 onwards. |
| 26 | Person Absence Entry | Person | This business object is supported from Release 8 onwards. |
| 27 | Person Maternity Absence Entry | Person | This business object is supported from Release 8 onwards. |
| 28 | Person Entitlement Detail | Person, Work Relationship | This business object is supported from Release 8 onwards. |
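The load order in Table 1 can be checked mechanically before an initial load. The following Python sketch is illustrative only and is not part of File-Based Loader: the `DEPENDENCIES` dictionary is transcribed from Table 1, the function name `find_order_violations` is hypothetical, and "Content Item" in the table is read as Talent Profile Content Item, which is an interpretation.

```python
# Dependencies transcribed from Table 1: each object maps to the objects
# that must be loaded before it. Reading "Content Item" as
# "Talent Profile Content Item" is an assumption made for this sketch.
DEPENDENCIES = {
    "Actions": [],
    "Action Reasons": ["Actions"],
    "Location": [],
    "Business Unit": [],
    "Grade": [],
    "Grade Rate": ["Grade", "Business Unit"],
    "Job Family": [],
    "Job": ["Job Family", "Grade"],
    "Salary Basis": ["Grade Rate"],
    "Establishment": [],
    "Rating Model": [],
    "Talent Profile Content Item": ["Rating Model"],
    "Talent Profile Content Item Relationship": [
        "Talent Profile Content Item",
        "Establishment",
    ],
    "Person": [],
    "Person Contacts": ["Person"],
    "Person Documentation": ["Person"],
    "Department": ["Location", "Person", "Business Unit"],
    "Position": ["Job", "Department"],
    "Work Relationship": ["Person", "Position"],
    "Salary": ["Salary Basis"],
    "Element Entry": ["Work Relationship"],
    "Tree": [],
    "Tree Version": ["Tree"],
    "Department Tree Node": ["Tree", "Tree Version", "Department"],
    "Person Accrual Detail": ["Person", "Work Relationship"],
    "Person Absence Entry": ["Person"],
    "Person Maternity Absence Entry": ["Person"],
    "Person Entitlement Detail": ["Person", "Work Relationship"],
}


def find_order_violations(load_order):
    """Return (object, unmet_dependency) pairs for a planned load order."""
    loaded = set()
    violations = []
    for obj in load_order:
        for dep in DEPENDENCIES.get(obj, []):
            if dep not in loaded:
                violations.append((obj, dep))
        loaded.add(obj)
    return violations


if __name__ == "__main__":
    # The order given in Table 1 should produce no violations.
    print(find_order_violations(list(DEPENDENCIES)))
    # Loading Job before Job Family and Grade is reported as a problem.
    print(find_order_violations(["Job", "Grade", "Job Family"]))
```

A check of this kind treats every dependency as part of the same load. For later, partial loads the dependencies may already exist in the target environment, in which case a reported violation is not necessarily an error.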

Via upload, you can create and update business objects and upload the complete history for any object. However, you cannot delete objects via upload, nor can you upload attachments.

Note: You can load the Absence business objects (Person Accrual Detail, Person Absence Entry, Person Maternity Absence Entry, and Person Entitlement Detail) into Oracle Fusion only once. Incremental load is not supported due to limitations in the data loading capabilities of the core application.


Highlights of Oracle Fusion HCM File-Based Loader

Oracle Fusion HCM File-Based Loader provides the following new functionality from Release 7 (11.1.7) onwards.

Integration with Oracle WebCenter Content

WebCenter Content is a comprehensive suite of content-management tools that is fully integrated in Oracle Fusion Applications. This document assumes that you are using WebCenter Content for delivery of data files to Oracle Fusion HCM. (Existing users of SFTP should refer to the Oracle Fusion Human Capital Management 11g Release 5 (11.1.5) File-Based Loader User's Guide, Document ID 1533860.1, for SFTP-related information.)

To load data via Oracle WebCenter Content, you must have one of these job roles:

- Human Capital Management Application Administrator
- Human Capital Management Integration Specialist

Both of these job roles inherit the duty role File Import and Export Management Duty.

Flexfield Support

File-Based Loader supports the upload of descriptive flexfield data for the following business objects:

- Assignment
- Department
- Grade
- Job
- Location
- Person, Person Citizenships, and Person Ethnicity

(For descriptive flexfields that are not supported by File-Based Loader, a work-around exists, as described in the Loading Flexfields section.)


Using File-Based Loader

You can implement Oracle Fusion HCM fully (either on-premises or in the cloud). Alternatively, you can implement Oracle Fusion HCM in a coexistence scenario, where you use Oracle Fusion Talent Management or Oracle Fusion Workforce Compensation, but continue to use your existing HR applications.

In both cases, you implement Oracle Fusion HCM by performing the tasks that appear in your implementation project. The tasks vary with both the type of implementation and the features and options that you include.

Full Implementations

If you are performing a full implementation of Oracle Fusion HCM, then you can use File-Based Loader to bulk-load your existing HCM data at appropriate stages in the implementation. Typically, you load each type of data once only for this type of implementation. Following a successful upload, you manage your data in Oracle Fusion HCM.

For more information about full implementations of Oracle Fusion HCM, see the Setup section in the Oracle Global Human Resources Cloud Library.

http://docs.oracle.com/cloud/farel8/globalcs_gs/docs.htm

https://docs.oracle.com/cloud/latest/globalcs_gs/docs.htm

Coexistence Implementations

In a coexistence scenario, you maintain your existing HR applications alongside Oracle Fusion Talent Management and Oracle Fusion Workforce Compensation. For this type of implementation, you:

- Move talent management data permanently to Oracle Fusion HCM, which becomes the system of record for talent management data.
- Upload other types of data, such as person records, periodically to Oracle Fusion HCM. The source system remains the system of record for this data.

The Coexistence for HCM Feature

In a standard Coexistence for HCM implementation, the only supported source environments are Oracle PeopleSoft Enterprise Human Resources and Oracle E-Business Suite Human Resources. From these environments, data upload is managed using HR2HR.

Note: The Coexistence for HCM feature based on HR2HR is not available for new coexistence implementations.

The Coexistence for HCM feature is documented in the Oracle Human Capital Management Cloud Integrating with Oracle HCM Cloud Guide.


Coexistence Implementations for Any Source Environment

To implement a new HCM coexistence scenario, for any source system, you can use File-Based Loader for data upload. When using File-Based Loader:

- You must define the mapping between your source data and Oracle Fusion HCM and manage the data extract from your source system.
- In most cases, you do not have to upload complete business objects in every data upload. (The exception to this rule is work relationships. You must upload the entire work relationship object whenever you update any of its components.)
- No mechanism exists in File-Based Loader for extracting compensation data from Oracle Fusion HCM and returning it to the source environment. However, you can use HCM Extracts to extract compensation data if you plan to use Oracle Fusion Workforce Compensation in a coexistence scenario.

When implementing a coexistence scenario, you can follow the general guidance in the document Oracle Human Capital Management Cloud Integrating with Oracle HCM Cloud and the implementation task order in the Coexistence Implementation Checklist in the Data Conversion Reference Library on My Oracle Support (document ID 1595261.1).

However, the details of the data-upload process are as described in this document (the Oracle Fusion HCM File-Based Loader User's Guide).

See also: E-Business Suite HCM Extraction Toolkit for Fusion HCM Integration Using File Based Loader (Document ID 1556687.1).

Security Considerations

To load data via Oracle WebCenter Content, you must have the duty role File Import and Export Management Duty. By default, these job roles inherit the File Import and Export Management Duty role:

- Human Capital Management Application Administrator
- Human Capital Management Integration Specialist

To load data from the Load Batch Data stage tables to the Oracle Fusion application tables, you must have the HCM Batch Data Loading Duty role. By default, these job roles inherit the HCM Batch Data Loading Duty role:

- Application Implementation Consultant
- Human Capital Management Application Administrator


Best Practices

For successful use of File-Based Loader, follow these recommendations.

Understand Your Deployment Model

Are you moving all of your data to Oracle Fusion HCM or implementing a coexistence scenario?

If your deployment model requires that data be updated via upload, then devise a strategy for ongoing data maintenance.

Prepare the Source Data

Identify the business objects that you are planning to upload to Oracle Fusion HCM and their source systems. Review and analyze this source data, and verify that it is both accurate and current. If it is not, then devise a plan to correct any problems before you attempt to load it. In particular:

- Ensure that a manager is identified for every worker and that the information is accurate.
- For jobs and positions, ensure that accurate job codes and titles exist in the legacy system.
- For job history, establish the accuracy of any historical data. Understand whether all historical data must be uploaded or just key events, such as hire, promotion, and termination.

Cleaning up the legacy data will minimize the problems that can occur when you upload the data to Oracle Fusion HCM.

Prepare for Upload

Set the configuration parameters for the Load Batch Data process (as defined in Step 1: Configure the Load Batch Data Process) appropriately.

Understand the Oracle Fusion HCM implementation to which you are importing data. For example, identify the legal employers, business units, and reference data sets.

Know which Oracle Fusion lookups you need to set and identify required functional mappings (for example, worker numbers, job definitions, and position definitions).

Design your data transformations. For any business object that you plan to load, refer to the File-Based Loader Column Mapping Spreadsheet in My Oracle Support document ID 1595283.1 for information about the structure of the target Oracle Fusion object.

Manage the Upload Process

Always perform a test load to the stage environment of a small amount of data for all object types that you need to load. Only when the test loads are successful and you have validated the data transformations should you load data to the production environment.

Validate your data using the Data-File Validator utility, as described in My Oracle Support document ID 1587716.1. The Data-File Validator enables you to perform most data-formatting validations before you load data to Oracle Fusion HCM. You run the validator in the source environment to validate either individual .dat files or all .dat files in a zip file. HTML output from the validator lists validation errors, which you can correct in the .dat file.

Load objects in the prescribed order to avoid data-dependency errors. For initial loads, it is recommended that you load each object type separately so that any problems can be more easily diagnosed and fixed. If errors occur, fix them before attempting to load the next object.

Map each business unit to a default set immediately after load, as described in My Oracle Support document ID 1458769.1. The default set, also known as the Reference Data Set, corresponds to the Reference Data Set Code value on the Manage Business Unit Set Assignments page. More information on Reference Data Set mapping is available in My Oracle Support document ID 1521801.1.

Do not mix your use of HCM File-Based Loader with HCM Spreadsheet Data Loader for the same data in a single environment. File-Based Loader keeps track of the data it loads to determine whether data is to be created or updated. If you load data either interactively or using HCM Spreadsheet Data Loader, then File-Based Loader will be unaware of those changes, which may cause errors.

You can use both tools during an implementation. HCM Spreadsheet Data Loader is recommended for setting up training and conference-room pilot (CRP) environments. File-Based Loader is recommended for full data uploads to both stage and production environments.

You may need to delete data loaded to the stage environment. Deletion scripts are preinstalled in your stage environment. Be aware that these scripts delete all data rather than just the rows in error.


Related Documentation

- Business Object Key Map Extract for File-Based Loader, Document 1595283.1
- Data File Validation for File Based Loader, Document 1587716.1
- Reference Data Set Assignments for Business Unit, Document 1458769.1
- E-Business Suite HCM Extraction Toolkit for Fusion HCM Integration Using File Based Loader, Document 1556687.1


Preparing the Oracle Fusion HCM Environment

Step 1: Configure the Load Batch Data Process

Load Batch Data is the HCM process that loads data from the stage tables to the Oracle Fusion application tables. The following parameters determine how the process operates in your environment.

Configuration Parameters

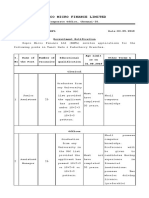

The configuration parameters and their default values are shown in Table 2. Several of these parameters are not directly relevant to File-Based Loader, and in most cases you can use the default value shown here. No value is shown in the table for parameters that are blank by default and that you can leave blank.

You must set Loader Number of Processes to 4 or 8. This parameter is set to 1 by default.

More information about some key parameters is provided in Table 2.

TABLE 2. LOAD BATCH DATA CONFIGURATION PARAMETERS

| PARAMETER NAME | PARAMETER VALUE |
|---|---|
| Allow Talent Data Increment Load | |
| Enable Keyword Crawler | |
| Environment Properties File | /u01/APPLTOP/instance/ess/config/environment.properties |
| Gather statistics after load | N |
| HRC Product Top | /u01/APPLTOP/fusionapps/applications/hcm/hrc |
| Initial Load | N |
| Load HCM Data Files Automatically | |
| Loader Cache Clear Limit | 99 |
| Loader Chunk Size | 200 |
| Loader Maximum Errors | 100 |
| Loader Number of Processes | 1 |
| Loader Save Size | |
| ODI Context | DEVELOPMENT |
| ODI Log Level | |
| ODI Language | AMERICAN_AMERICA.WE8ISO8859P1 |
| ODI Password | |
| ODI Root Directory | /u01/APPLTOP/instance/odi/file-root/ODI_FILE_ROOT_HCM |
| ODI User | FUSION_APPS_HCM_ODI_SUPERVISOR_APPID |
| ODI Work Repository | FUSIONAPPS_WREP |
| On Demand FTP Root Directory | |
| Use Python Loader | |
| User Name Expression | |
| Use Content Server for Cross Reference File Generation | |

Description

Gather statistics after load

Determines whether statistics are generated after each batch load. The default value is N. Setting this parameter to Y improves the performance of data load.

Initial Load

Determines whether statistics are generated during initial load. The default value is N. If this parameter is set to Y, statistics are generated after 500 top-level business objects are loaded. Setting this parameter to Y improves the performance of data load and resolves bootstrap issues.

If you set both the Initial Load and Gather statistics after load parameters to Y, statistics are generated during initial load and also after each batch load.

Load HCM Data Files Automatically

Use the AutoLoad parameter on the LoaderIntegrationService web service (as described in Step 8: Import Source Data to the Stage Tables) to control loading of HCM data files. You do not need to change the default value of the Load HCM Data Files Automatically parameter. (If you are using SFTP rather than WebCenter Content, then you use the AutoLoad parameter on the InboundLoaderProcess web service to control automatic loading.)

Loader Cache Clear Limit

The number of top-level business objects to be processed by Load Batch Data before the cache is cleared.

Loader Chunk Size

The number of top-level business objects a single Load Batch Data thread processes in a single action. Set the chunk size based on the total number of objects to be loaded and the Loader Number of Processes value.

Loader Maximum Errors

The maximum number of errors that can occur on a Load Batch Data thread before processing terminates. If an error occurs during the processing of a complex business object (such as a person record), then all rows for that business object are rolled back and marked as Error in Row. If you leave this parameter set to 100, then the load process stops after 100 errors occur.


Loader Number of Processes

The number of Load Batch Data threads to run in parallel. This value is 1 by default. It is recommended that you set this value to 4 or 8. If you leave this parameter set to 1, then the Load Batch Data process does not run multithreaded. For large data volumes, the performance impact can be severe.
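The relationship between Loader Chunk Size, Loader Number of Processes, and the total object count can be explored with simple arithmetic. The guide does not give a sizing formula, so the Python sketch below rests on an assumed model: the batch is split into fixed-size chunks and the parallel threads work through those chunks in rounds. The function name `plan_chunks` is hypothetical.

```python
import math


def plan_chunks(total_objects, chunk_size=200, num_processes=4):
    """Estimate chunk and round counts under an assumed scheduling model.

    Assumption (not stated in the guide): each thread takes one chunk at a
    time, so the chunks are worked through in rounds of `num_processes`.
    """
    chunks = math.ceil(total_objects / chunk_size)
    rounds = math.ceil(chunks / num_processes)
    return chunks, rounds


if __name__ == "__main__":
    # 50,000 top-level objects with the default chunk size of 200.
    for processes in (1, 4, 8):
        chunks, rounds = plan_chunks(50_000, 200, processes)
        print(f"{processes} process(es): {chunks} chunks in {rounds} rounds")
```

Under this model, raising the number of processes from 1 to 8 reduces 250 sequential chunk passes to 32 rounds, which illustrates why a single-threaded load of a large volume can be slow. Actual throughput depends on the environment and should be confirmed with a test load.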

Loader Save Size

The number of top-level business objects to be processed before the objects are committed to the application tables.

ODI Language

Determines the character set used for data loading. Do not change the default value of this parameter.

ODI Root Directory

Used for staging .dat files. Do not change the default value of this parameter.

On Demand FTP Root Directory

This parameter does not have an effect on the FBL configuration. You can enter a string value and continue.

Use Python Loader

Use the LoadType parameter on the LoaderIntegrationService web service (as described in Step 8: Import Source Data to the Stage Tables) to select the load type for the HCM data files. (If you are using SFTP rather than WebCenter Content, then you use the LoadType parameter on the InboundLoaderProcess web service to select the load type.)

You do not need to change the default value of the Use Python Loader parameter.

User Name Expression

Determines how user names are constructed when you import person records. To use the enterprise default format, leave this parameter value blank. If you prefer, you can specify that either person numbers or assignment numbers be used as user names by setting User Name Expression to one of the following expressions:

- loaderCtx.getPersonAttr(PersonNumber)
- loaderCtx.getAssignmentAttr(AssignmentNumber)

Use Content Server for Cross Reference File Generation

This parameter controls the location of the cross-reference zip file. If the parameter is set to Y, then the generated cross-reference file is placed on the Oracle WebCenter Content server. If the parameter is set to N, then the generated cross-reference file is placed on the SFTP server.


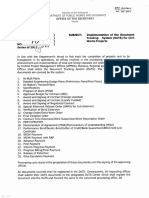

Setting the Configuration Parameters

To set these parameters, you perform the task Manage HCM Configuration for Coexistence from the

Setup and Maintenance work area.

Figure 2. Navigation: Setup and Maintenance - Manage HCM Configuration for Coexistence

When you run the Load Batch Data process for individual batches, you can override the values of the

Loader Chunk Size, Loader Maximum Errors, and Loader Number of Processes parameters. However,

that should not be necessary. Typically, you set these configuration parameters once only.


Step 2: Define Oracle Fusion Business Objects

Business objects that originate in your source system may reference other business objects that originate in Oracle Fusion HCM. For these referenced business objects, you need to generate cross-reference information and include it in your source system.

The following table shows the Oracle Fusion business objects for which you may need cross-reference information. It also shows the task that you perform to define each business object. If you want to reference any of these business objects, then you must have performed the relevant Oracle Fusion task before proceeding to Step 3.

TABLE 3. DEFINING ORACLE FUSION BUSINESS OBJECTS

| BUSINESS OBJECT | ORACLE FUSION TASK |
|---|---|
| Employment Action Reason | Manage Actions, Manage Action Reasons |
| Person Assignment Status Type | Manage Assignment Status |
| Enterprise | Manage Enterprise HCM Information |
| Talent Profile Content Item | Manage Profile Content Items |
| Talent Profile Content Type | Manage Profile Content Types |
| Talent Profile Type | Manage Profile Types |
| Talent Instance Qualifier Set | Manage Instance Qualifiers |
| Legal Entity | Manage Legal Entity |
| Legislative Data Group | Manage Legislative Data Groups |
| Payroll Element Type | Manage Elements |
| Payroll Element Input Value | Manage Elements |
| Person Type | Manage Person Types |
| Application Reference Data Set | Manage Reference Data Sets |

Note also that when you load person records in bulk:

- User account requests are created by default and sent to Oracle Identity Management when you run the Send Pending LDAP Requests process (as described in Post-Load Processes).
- Roles are provisioned to users as specified by current role mappings.

Perform the task Manage Enterprise HCM Information in the target environment to review current User and Role Provisioning settings and update them as necessary.


Step 3: Generate the Mapping File of Cross-Reference Information

You generate cross-reference information for any referenced business objects that you defined in Step 2. The cross-reference information comprises Globally Unique Identifiers (GUIDs) for those business objects.

To generate cross-reference information, you submit the Generate Mapping File for HCM Business Objects process from the Manage HCM Configuration for Coexistence page:

Figure 3. Navigation: Setup and Maintenance - Manage HCM Configuration for Coexistence

Once the process is submitted, make a note of the process ID and click Search to refresh the results in the Generate Mapping File for HCM Business Objects section. You may need to click Search more than once until the process completes. Process Status 12 means that the process completed successfully.

Process Status values are described in the following table.

TABLE 4. GENERATE MAPPING FILE FOR HCM BUSINESS OBJECTS: PROCESS STATUS VALUES

| STATUS NUMBER | STATUS NAME | STATUS DESCRIPTION |
|---|---|---|
| 1 | WAIT | The job request is awaiting dispatch. |
| 2 | READY | The job request has been dispatched and is awaiting processing. |
| 3 | RUNNING | The job request is being processed. |
| 4 | COMPLETED | The job request has completed and postprocessing has started. |
| 7 | CANCELLING | The job request has been canceled and is awaiting acknowledgement. |
| 9 | CANCELLED | The job request was cancelled. |
| 10 | ERROR | The job request has run and resulted in an error. |
| 11 | WARNING | The job request has run and resulted in a warning. |
| 12 | SUCCEEDED | The job request has run and completed successfully. |
| 13 | PAUSED | The job request paused for subrequest completion. |
| 17 | FINISHED | The job request and all child job requests have finished. |

For processes that complete with errors (status 10), search for the process ID in the Scheduled Processes work area (Navigator - Tools - Scheduled Processes) and view the associated log file.
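If you record process status values in your own tooling, a small lookup keeps the numeric codes readable. The Python sketch below is illustrative: it covers only the two-digit codes listed in Table 4, and the names `ProcessStatus`, `is_success`, and `needs_log_review` are hypothetical rather than part of any Oracle API.

```python
from enum import IntEnum


class ProcessStatus(IntEnum):
    """Two-digit Process Status values taken from Table 4."""

    ERROR = 10
    WARNING = 11
    SUCCEEDED = 12
    PAUSED = 13
    FINISHED = 17


def is_success(status_code):
    """Status 12 means that the process completed successfully."""
    return status_code == ProcessStatus.SUCCEEDED


def needs_log_review(status_code):
    """Status 10 means that the process ended in error; check its log file."""
    return status_code == ProcessStatus.ERROR


if __name__ == "__main__":
    for code in (10, 12, 13):
        print(code, ProcessStatus(code).name, is_success(code), needs_log_review(code))
```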


If the process does not complete in a reasonable time, search for your process ID in the Scheduled Processes work area and note the Scheduled Time for your process. This time is set automatically and may be some time after the submission time.

The Generate Mapping File for HCM Business Objects process creates one or more data files (.dat files) for each business object. The .dat files are packaged automatically in a zipped data file that is written to the WebCenter Content server. To download the file:

1. Open the File Import and Export page (Navigator - Tools - File Import and Export).
2. On the File Import and Export page, set the Account value in the Search section to hcm/dataloader/export and click Search. The zip file of reference information appears in the search results:

   Figure 4. Navigation: Navigator - Tools - File Import and Export

3. Click the file name in the search results. When prompted, save the file locally.

The zip file contains the following individual .dat files for the business objects that you defined in Step 2 of the File-Based Loader process:


TABLE 5. DATA FILES GENERATED FOR ORACLE FUSION BUSINESS OBJECTS

| BUSINESS OBJECT | DATA FILE NAME |
|---|---|
| Employment Action Reason | XR_ACTION.dat, XR_ACTION_REASON.dat, XR_ACTION_REASON_USAGE.dat, XR_ACTION_TYPE.dat |
| Person Assignment Status Type | XR_ASSIGNMENT_STATUS_TYPE.dat |
| Enterprise | XR_ENTERPRISE.dat |
| Talent Profile Content Item | XR_HRT_CONTENT_ITEM_LANGUAGE.dat |
| Talent Profile Content Type | XR_HRT_CONTENT_TYPE.dat, XR_HRT_CONTENT_TYPE_RELAT.dat |
| Talent Profile Type | XR_HRT_PROFILE_TYPE.dat |
| Talent Instance Qualifier Set | XR_HRT_QUALIFIER.dat, XR_HRT_QUALIFIER_SET.dat, XR_HRT_RELATION_CONFIG.dat |
| Legal Entity | XR_LEGAL_ENTITY.dat |
| Legislative Data Group | XR_LEGISLATIVE_DATA_GROUP.dat |
| Payroll Element Type | XR_PAY_ELEMENT_TYPE_STD.dat, XR_PAY_ELEMENT_TYPE_SUPPL.dat |
| Payroll Element Input Value | XR_PAY_INPUT_VALUE_STD.dat, XR_PAY_INPUT_VALUE_SUPPL.dat |
| Person Type | XR_PERSON_TYPE.dat |
| Application Reference Data Set | XR_SETID_SET.dat |
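After downloading the zip file, you may want to confirm which cross-reference files it contains before importing them to the source environment. The following Python sketch uses only the standard `zipfile` module; the function name `list_cross_reference_files` is hypothetical, and the demonstration builds a small in-memory zip because the name and internal layout of the real download are not specified here.

```python
import io
import zipfile


def list_cross_reference_files(zip_source):
    """Return the sorted names of XR_*.dat members in a mapping zip file.

    `zip_source` may be a file path or a binary file-like object.
    """
    with zipfile.ZipFile(zip_source) as archive:
        return sorted(
            name
            for name in archive.namelist()
            # Compare on the base name in case members sit in a folder.
            if name.rsplit("/", 1)[-1].startswith("XR_") and name.endswith(".dat")
        )


if __name__ == "__main__":
    # Stand-in for the downloaded file: an in-memory zip with empty members.
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr("XR_PERSON_TYPE.dat", "")
        archive.writestr("XR_SETID_SET.dat", "")
        archive.writestr("readme.txt", "")
    buffer.seek(0)
    print(list_cross_reference_files(buffer))
```

Comparing the returned names with Table 5 is a quick way to spot a business object that was not defined before the mapping file was generated.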

Notes:

- Whenever you make changes to any of the Oracle Fusion business objects identified in Step 2, remember that you need to regenerate the mapping file of cross-reference information. For example, if you define additional person types, then you need to regenerate the GUIDs for the Oracle Fusion instance.
- The GUID values associated with an Oracle Fusion instance do not change. However, GUIDs vary among instances. Therefore, the GUIDs that you generate from the stage environment are different from those that you generate from the production environment. You need to generate them in both environments.
- As an alternative to running the Generate Mapping File for HCM Business Objects process, you can use the HCM extract described in the document Business Object Key Map Extract for File-Based Loader (My Oracle Support article ID 1595283.1).


Preparing and Extracting Source Data

Step 4: Import Cross-Reference Information to the Source Environment

To ensure that foreign-key references in your source data to existing Oracle Fusion objects are correct, you must use the GUID values from the cross-reference information that you generated in Step 3. You need to devise a way of generating the correct values in the business objects that you plan to extract and upload to Oracle Fusion. For example, you could store the mapping data from Step 3 in a temporary storage table and join to the table in the extract process.

The following example shows GUIDs from an XR_ASSIGNMENT_STATUS_TYPE.dat file.

Row 2 (from FusionGUID through Description2) identifies the values in each subsequent row:

- FusionGUID is the unique, 32-character alphanumeric identifier used by both Oracle Fusion HCM and source applications such as Oracle PeopleSoft and Oracle EBS.
- FusionKey is the value that is used by Oracle Fusion HCM.
- PeopleSoftKey is the value that is used by the source system (which may be Oracle PeopleSoft or some other system).
- Description and Description2 describe the value identified by the keys.

More information about how GUIDs are processed for records sourced in either Oracle Fusion HCM

or externally is provided in the remainder of this section.
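
The temporary-table-and-join approach described above can also be prototyped in memory. The following is a minimal sketch, not part of File-Based Loader: it assumes that the cross-reference file is pipe-delimited and that its heading row contains the FusionGUID and PeopleSoftKey column names. The class name, method name, and sample row are hypothetical.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: maps source-system keys to Fusion GUIDs from one cross-reference file. */
final class CrossReferenceLookup {

    /**
     * Builds a sourceKey -> FusionGUID map from the lines of an XR_*.dat file.
     * Assumption: rows are pipe-delimited, and the heading row contains the
     * FusionGUID and PeopleSoftKey column names. Rows before the heading are skipped.
     */
    static Map<String, String> load(List<String> lines) {
        Map<String, String> guidBySourceKey = new HashMap<>();
        int guidCol = -1;
        int keyCol = -1;
        for (String line : lines) {
            String[] cells = line.split("\\|", -1);
            if (guidCol < 0) {
                List<String> names = Arrays.asList(cells);
                if (names.contains("FusionGUID") && names.contains("PeopleSoftKey")) {
                    guidCol = names.indexOf("FusionGUID");
                    keyCol = names.indexOf("PeopleSoftKey");
                }
                continue; // the heading row itself, or a row that precedes it
            }
            if (cells.length > Math.max(guidCol, keyCol) && !cells[keyCol].isEmpty()) {
                guidBySourceKey.put(cells[keyCol], cells[guidCol]);
            }
        }
        return guidBySourceKey;
    }

    public static void main(String[] args) {
        List<String> sample = Arrays.asList(
            "XR_ASSIGNMENT_STATUS_TYPE", // illustrative first row, not real data
            "FusionGUID|FusionKey|PeopleSoftKey|Description|Description2",
            "0123456789ABCDEF0123456789ABCDEF|1|A|Active|"); // made-up values
        System.out.println(load(sample).get("A"));
    }
}
```

During the extract, each foreign-key value in the source data would be passed through such a map so that the outgoing data file carries the GUID rather than the source key.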

Key Mapping

Records loaded from an external source to Oracle Fusion HCM must be uniquely identified in both

source and target environments. In addition, a mapping must be maintained between the source and

target keys.

Keys are used to identify:

The row being created or updated

The parent of the row being created or updated

Any objects referenced by the row being created or updated

Records Sourced Externally

Each record sourced externally includes a pointer to the external record, which is the record's Globally Unique Identifier (GUID). Oracle Fusion HCM maintains a Key Mapping table (HRC_LOADER_BATCH_KEY_MAP) that records, for each business object, its type, source GUID, and Oracle Fusion ID (the ID by which it is identified in Oracle Fusion). For example:

OBJECT TYPE    SOURCE GUID    ORACLE FUSION ID
Person         PERS123        006854
Person         PERS456        059832

When a record is imported to the Load Batch Data stage tables, the import process compares the record's source GUID and object-type values with values in the Key Mapping table:

If the values exist in the Key Mapping table, then the process replaces the source GUID in the stage

tables with the Oracle Fusion ID.

If the values do not exist in the Key Mapping table, then an Oracle Fusion ID is generated for the

record, recorded in the Key Mapping table, and used to replace the source GUID in the stage tables.

By the time the data in the stage tables is ready for loading to the Oracle Fusion application tables, all

Oracle Fusion IDs have been allocated. The Oracle Fusion object services can process predefined

Oracle Fusion IDs when creating new records.
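
The lookup-or-allocate behavior described above can be modeled in a few lines. This is an in-memory illustration only, assuming a simple numeric sequence for newly generated IDs; it is not how the loader or the HRC_LOADER_BATCH_KEY_MAP table is implemented, and all names are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

/** In-memory model of the key-mapping step; not the actual loader implementation. */
final class KeyMapSketch {
    private final Map<String, Long> fusionIdByKey = new HashMap<>();
    private long nextId; // hypothetical ID sequence

    KeyMapSketch(long firstGeneratedId) {
        this.nextId = firstGeneratedId;
    }

    /** Records an existing mapping between a source GUID and an Oracle Fusion ID. */
    void register(String objectType, String sourceGuid, long fusionId) {
        fusionIdByKey.put(objectType + ":" + sourceGuid, fusionId);
    }

    /** Returns the mapped Oracle Fusion ID, allocating and recording a new one if none exists. */
    long resolve(String objectType, String sourceGuid) {
        String key = objectType + ":" + sourceGuid;
        Long existing = fusionIdByKey.get(key);
        if (existing != null) {
            return existing;
        }
        long allocated = nextId++;
        fusionIdByKey.put(key, allocated);
        return allocated;
    }

    public static void main(String[] args) {
        KeyMapSketch keyMap = new KeyMapSketch(100000L);
        keyMap.register("Person", "PERS123", 6854L);
        System.out.println(keyMap.resolve("Person", "PERS123")); // existing mapping is reused
        System.out.println(keyMap.resolve("Person", "PERS789")); // new ID is allocated and recorded
        System.out.println(keyMap.resolve("Person", "PERS789")); // same ID on every later import
    }
}
```

Because the mapping is keyed on both object type and source GUID, the same GUID string used for two different object types resolves to two different IDs in this model.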

Records Sourced in Oracle Fusion HCM

For each reference object that originates in Oracle Fusion HCM, the process Generate Mapping File

for HCM Business Objects generates a source GUID and creates a row in the Key Mapping table that

holds both the newly generated GUID and the existing Oracle Fusion ID for the object. The process

also generates a zip file of data files containing the GUIDs for the reference objects, which you import

into your source environment (as described in Step 3). When you import source data that references

these objects to the Load Batch Data stage tables, you must ensure that you include the reference-object GUIDs so that the correct reference objects can be identified.

Key-Mapping Example

Your source data includes the following records and business objects:

OBJECT TYPE    SOURCE GUID    NAME    DESCRIPTION        FOREIGN KEY TO RECORD
REC            ABC            Rec1    Record Number 1
REC            DEF            Rec2    Record Number 2
OBJ            TUV            Obj1    Object Number 1    ABC
OBJ            XYZ            Obj2    Object Number 2    DEF

The Key Mapping table is as follows:

OBJECT TYPE    SOURCE GUID    ORACLE FUSION ID
REC            ABC
REC            DEF
OBJ            TUV
OBJ            XYZ

The stage-table entries are as follows:

ORACLE FUSION ID    NAME    DESCRIPTION        FOREIGN KEY TO RECORD
                    Rec1    Record Number 1
                    Rec2    Record Number 2
                    Obj1    Object Number 1
                    Obj2    Object Number 2

Business Object Key Map Extract

A predefined extract, Business Object Key Map, is available. This optional extract enables you to:

Extract GUID key-mapping information from the HRC_LOADER_BATCH_KEY_MAP

table to a report or an XML-format file.

Generate GUIDs for objects that were not created using File-Based Loader so that you can

update those objects using File-Based Loader.

More information about the Business Object Key Map extract is available in the document Business

Object Key Map Extract for File-Based Loader, which you can find on My Oracle Support in article

1595283.1.

Step 5: Map Source Data to the Reference Data

You need to define mappings between your source data and the cross-reference data imported to the

source environment. The details of this step are determined locally because they are implementation-dependent.

Step 6: Extract the Source Data

Extract your source data and package it for delivery to Oracle Fusion HCM.

For the extract itself, you can use tools that are native to the source system, such as PL/SQL in Oracle E-Business Suite (EBS) or SQR in Oracle PeopleSoft. Alternatively, you can use an ETL (Extract, Transform, and Load) tool, such as Oracle Data Integrator (ODI) or Informatica PowerCenter.

For Oracle E-Business Suite release 11i and release 12.1.x, a sample HCM Extraction Toolkit is available on My Oracle Support in document 1556687.1.

This section covers three aspects of the delivery of your source data to Oracle Fusion HCM:

The structure of the zip file that you deliver to Oracle Fusion HCM

The general format of each data file in the zip file

Data operations supported in each data file

Zip-File Structure

The data that you extract from your source system for upload to Oracle Fusion must be delivered as a

set of data files (.dat files), grouped by object type, in a zip file.

For example, jobs comprise job and job grade data, and departments comprise department and

department details data. If you load both in the same zip file, then the file structure will be:

OBJECT FOLDER    DATA-FILE NAME
Department       F_DEPARTMENT_DETAIL_VO.dat
                 F_DEPARTMENT_VO.dat
Job              F_JOB_GRADE_VO.dat
                 F_JOB_VO.dat

No parent folder exists in the zip file.

Each business object in the zip file is processed as a separate batch. For example, if the zip file

contains both Department and Job business objects, then the zip-file contents are processed as two

separate batches, one for Departments and one for Jobs.
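
One way to produce an archive with this layout is to write entry names that begin directly with the object folder. The sketch below uses only the standard java.util.zip classes. The class and method names are hypothetical, the file content is a placeholder, and UTF-8 encoding of the content is an assumption that this guide does not confirm.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.zip.ZipEntry;
import java.util.zip.ZipOutputStream;

/** Hypothetical packaging helper for a File-Based Loader zip file. */
final class LoaderZipSketch {

    /**
     * Builds zip bytes from a map of "ObjectFolder/FILE.dat" entry names to file content.
     * Entry names start with the object folder, so the archive has no parent folder.
     */
    static byte[] buildZip(Map<String, String> contentByEntryName) throws IOException {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ZipOutputStream zip = new ZipOutputStream(bytes)) {
            for (Map.Entry<String, String> entry : contentByEntryName.entrySet()) {
                zip.putNextEntry(new ZipEntry(entry.getKey()));
                // Assumption: data files are written as UTF-8 text
                zip.write(entry.getValue().getBytes(StandardCharsets.UTF_8));
                zip.closeEntry();
            }
        }
        return bytes.toByteArray();
    }

    public static void main(String[] args) throws IOException {
        Map<String, String> files = new LinkedHashMap<>();
        String placeholder = "heading row and data lines go here\n";
        files.put("Department/F_DEPARTMENT_VO.dat", placeholder);
        files.put("Department/F_DEPARTMENT_DETAIL_VO.dat", placeholder);
        files.put("Job/F_JOB_VO.dat", placeholder);
        files.put("Job/F_JOB_GRADE_VO.dat", placeholder);
        System.out.println(buildZip(files).length + " bytes");
    }
}
```

To write the archive to disk rather than keep it in memory, the returned bytes can be passed to java.nio.file.Files.write.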

Table 6 shows the directory names that you use to group data files by object type. For each object, the

table also shows the data-file names that you must use.

TABLE 6. OBJECT DIRECTORY NAMES

OBJECT DIRECTORY NAME          DATA-FILE NAME
Action                         F_ACTIONS_VO.dat
                               F_ACTION_REASON_USAGES_VO.dat
ActionReason                   F_ACTION_REASONS_VO.dat
BusinessUnit                   F_BUSINESS_UNIT_VO.dat
ContentItem                    F_CONTENT_ITEM_RATING_DESCRIPTION_VO.dat
                               F_CONTENT_ITEM_VO.dat
ContentItemRelationship        F_CONTENT_ITEM_RELATIONSHIP_VO.dat
Department                     F_DEPARTMENT_DETAIL_VO.dat
                               F_DEPARTMENT_VO.dat
DepartmentTreeNode             F_PER_DEPT_TREE_NODE.dat
ElementEntry                   F_ELEMENT_ENTRY_VALUE_VO.dat
                               F_ELEMENT_ENTRY_VO.dat
Establishment                  F_ESTABLISHMENT_VO.dat
Grade                          F_GRADE_VO.dat
GradeRate                      F_GRADE_RATE_VALUE_VO.dat
                               F_GRADE_RATE_VO.dat
Job                            F_JOB_GRADE_VO.dat
                               F_JOB_VO.dat
JobFamily                      F_JOB_FAMILY_VO.dat
Location                       F_LOCATION_VO.dat
Person                         F_PERSON_ADDRESS_VO.dat
                               F_PERSON_EMAIL_VO.dat
                               F_PERSON_ETHNICITY_VO.dat
                               F_PERSON_LEGISLATIVE_DATA_VO.dat
                               F_PERSON_NAME_VO.dat
                               F_PERSON_NATIONAL_IDENTIFIER_VO.dat
                               F_PERSON_PHONE_VO.dat
                               F_PERSON_RELIGION_VO.dat
                               F_PERSON_TYPE_USAGE_VO.dat
                               F_PERSON_VO.dat
PersonAbsenceEntry             F_PERSON_ABSENCE_ENTRY_VO.dat
PersonAccrualDetail            F_PERSON_ACCRUAL_DTL_VO.dat
PersonContact                  F_PERSON_CONTACT_VO.dat
PersonDocumentation            F_PERSON_CITIZENSHIP_VO.dat
                               F_PERSON_DOCUMENTATION_VO.dat
                               F_PERSON_VISA_VO.dat
                               F_PERSON_PASSPORT_VO.dat
PersonEntitlementDetail        F_PERSON_PLAN_ENTRY_DTL_VO.dat
PersonMaternityAbsenceEntry    F_PERSON_MAT_ABS_ENTRY_VO.dat
Position                       F_POSITION_GRADE_VO.dat
                               F_POSITION_VO.dat
Profile                        F_PROFILE_ITEM_VO.dat
                               F_PROFILE_RELATION_VO.dat
                               F_PROFILE_VO.dat
RatingModel                    F_RATING_LEVEL_VO.dat
                               F_RATING_MODEL_VO.dat
Salary                         F_SALARY_COMPONENT_VO.dat
                               F_SALARY_VO.dat
SalaryBasis                    F_SALARY_BASIS_VO.dat
Tree                           F_FND_TREE.dat
TreeVersion                    F_FND_TREE_VERSION.dat
WorkRelationship               F_ASSIGNMENT_SUPERVISOR_VO.dat
                               F_ASSIGNMENT_VO.dat
                               F_ASSIGNMENT_WORK_MEASURE_VO.dat
                               F_WORK_RELATIONSHIP_VO.dat
                               F_WORK_TERMS_VO.dat
                               F_CONTRACT_VO.dat

Batch Names

Each business object is processed as a separate batch. The batch name is formed automatically by

prefixing the object directory name (for example, SalaryBasis or GradeRate) with the internal loader

batch ID. For example:

123456789:Person

987654321:WorkRelationship

If you import and load data manually, then you have the opportunity to specify a meaningful batch

name when you schedule the import or load process. If you import and load data automatically, then

the batch names that are generated automatically are used.

Data-File Format

Each data file has a predefined format; example .dat files and other FBL sample files are provided in

the Data Conversion Reference Library on My Oracle Support (document ID 1595261.1).

You construct the heading row in the data file for each business object type by concatenating the

Datastore Attribute Names with pipe separators. Heading rows must be in capital letters and spelled as

shown in the example .dat files; however, the columns can appear in any order.

The data lines follow this heading row, with data items also being separated by the pipe character.

The following example shows a single data line from a Department data file:

Figure 5. Example Data Line

All text in this example from ORGANIZATION_ID through LEGISLATION_CODE constitutes

the heading line. Everything following LEGISLATION_CODE is the first data line. In this example,

all columns are specified for illustration purposes.

Note: The pipe character (|) is not supported in data values.
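
The format rules above (upper-case heading row, pipe separators, no pipe inside a value) can be enforced when the file is generated. The following sketch shows one possible way to do so. The class and method names are hypothetical and the sample values are made up. Only ORGANIZATION_ID and LEGISLATION_CODE are taken from this guide, and a real Department file needs the full set of columns shown in the example .dat files.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Locale;

/** Hypothetical helper that formats heading and data rows for a .dat file. */
final class DatFileSketch {

    /** Joins the attribute names, converted to capital letters, with pipe separators. */
    static String headingRow(List<String> attributeNames) {
        List<String> upper = new ArrayList<>();
        for (String name : attributeNames) {
            upper.add(name.toUpperCase(Locale.ROOT));
        }
        return String.join("|", upper);
    }

    /** Joins values with pipes; null becomes an empty field; a pipe inside a value is rejected. */
    static String dataLine(List<String> values) {
        List<String> cells = new ArrayList<>();
        for (String value : values) {
            String cell = value == null ? "" : value;
            if (cell.indexOf('|') >= 0) {
                throw new IllegalArgumentException(
                    "Pipe character is not supported in a data value: " + cell);
            }
            cells.add(cell);
        }
        return String.join("|", cells);
    }

    public static void main(String[] args) {
        // Two columns only, for brevity; the values are illustrative
        System.out.println(headingRow(Arrays.asList("ORGANIZATION_ID", "LEGISLATION_CODE")));
        System.out.println(dataLine(Arrays.asList("DEPT_001", "US")));
    }
}
```

Rejecting a value that contains a pipe at generation time is a design choice in this sketch; it surfaces the problem in the source environment rather than during import.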

Supported Data Operations

Updating Data After Initial Import and Load

You upload changed information to Oracle Fusion in a zip file of data files (.dat files), just as for the

initial load. If the zip file contains changes for multiple business objects, then the changes are

processed in multiple batches, one per business object, just as for the initial load.

Uploading a Partial Object Hierarchy

Many Oracle Fusion HCM business objects comprise a hierarchy of related entities. For example, a

person object comprises not just the person entity but also person addresses, phones, names, ethnicity,

and so on. When you update most of the complex business objects, you do not have to upload the

complete object. For example, if a person's address changes, then you can upload just the new address:

you do not need to upload the entire person business object.

The exception to this general rule is work relationships.

You must upload all entities of the work relationship when updating.

This requirement exists because of the complexity of the work relationship object, which comprises

multiple dependent entities. Partial updates are likely to cause inconsistencies among dependent

entities. If inconsistencies occur, then the entire work relationship object may have to be deleted and

reloaded.

For date-effective objects you can upload a partial history; you do not need to upload the complete

history of the object.

Example 3 in Appendix A shows update of a complex business object.

Uploading a Partial Object

You can omit optional columns when creating or updating business objects.

The document File-Based Loader Column Mapping Spreadsheet in My Oracle Support document ID

1595283.1 identifies mandatory columns for each business object.

Example 4 in Appendix A shows a data file that omits some optional attributes.

Specifying Nonstandard Column Order

When creating or updating business objects, you can specify columns in any order.

Example 5 in Appendix A shows a data file with a nonstandard column order.

Setting Attribute Values to NULL

When creating a business object, you can omit optional attributes or leave them blank. File-Based

Loader sets such attributes in new objects to NULL.

When updating a business object, any optional attribute that you omit or leave blank is excluded from

the update and remains unchanged in Oracle Fusion. However, you can set non-NULL attributes to

NULL by specifying a NULL directive value, as follows:

If the attribute is a VARCHAR2 or NUMBER value, then you set it to #NULL.

If the attribute is a DATE value, then you set it to 31-Dec-0001 (or date-format equivalent).

File-Based Loader sets #NULL and 31-Dec-0001 values to NULL.

Note: You cannot leave mandatory attributes blank when you are creating or updating a business

object. If you leave a surrogate key, parent key, or date-effective attribute blank in a business object,

then an error is raised.

See Example 6 in Appendix A for examples of setting attribute values to NULL.
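
When update files are generated by a program, the NULL directives can be applied by a small helper. The sketch below is illustrative only: the class, enum, and method names are hypothetical, and the date directive is emitted in the 31-Dec-0001 form, which would need to be changed if your data files use a different date format.

```java
/** Hypothetical helper that produces field text for an update row. */
final class NullDirectiveSketch {

    enum AttributeType { VARCHAR2, NUMBER, DATE }

    static final String NULL_TEXT_OR_NUMBER = "#NULL";
    static final String NULL_DATE = "31-Dec-0001"; // assumes this date format is in use

    /**
     * Returns the text to place in the data line for one attribute.
     * If clearValue is true, the NULL directive for the attribute type is returned.
     * Otherwise the value is returned as is; a null value becomes a blank field,
     * which leaves the attribute unchanged on update.
     */
    static String updateField(AttributeType type, String value, boolean clearValue) {
        if (clearValue) {
            return type == AttributeType.DATE ? NULL_DATE : NULL_TEXT_OR_NUMBER;
        }
        return value == null ? "" : value;
    }

    public static void main(String[] args) {
        System.out.println(updateField(AttributeType.VARCHAR2, null, true));  // directive for text
        System.out.println(updateField(AttributeType.DATE, null, true));      // directive for dates
        System.out.println(updateField(AttributeType.NUMBER, null, false));   // blank: unchanged
    }
}
```

Keeping "clear this value" separate from "no value supplied" avoids accidentally blanking an attribute just because the source extract had nothing to send.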

Updating Logical Start and End Dates for Date-Effective Business Objects

You can specify a new start or end date for a logical row in a date-effective business object without

having to load the entire history of the object. For date-effective objects, two additional columns exist

in the relevant data (.dat) file:

LSD (Logical Start Date)

LED (Logical End Date)

Setting either of these columns to Y indicates to Oracle Fusion HCM that a new logical start date

(LSD) or logical end date (LED) is specified for the row. Values other than Y in the LSD and LED

columns are ignored.

In the following example, the logical row for the Sales Director job comprises three physical records:

RECORD    JOB               JOB CODE    EFFECTIVE START DATE    EFFECTIVE END DATE
          Sales Director    SDR.450     01 January 2010         12 March 2011
          Sales Director    SDR.450     13 March 2011           04 April 2012
          Sales Director    SDR.450     05 April 2012           31 December 4712

To set the logical end date of the logical row to 31 December 2012, you would specify any mandatory

values and the new effective end date value of 31 December 2012. To indicate that this is the new

logical end date, you would also set the LED column to Y.

Note: You cannot specify a logical end date for a primary object, such as a person's primary

assignment or mailing address. You must make the object nonprimary before attempting to specify a

logical end date.

Examples 1, 2, and 3 in Appendix A show how to update logical start and end dates for date-effective

business objects.

Loading Flexfields

You can load data for the following descriptive flexfields:

TABLE 7. SUPPORTED DESCRIPTIVE FLEXFIELDS

BUSINESS OBJECT         DATA FILE                      FLEXFIELD
Department              F_DEPARTMENT_VO.dat            PER_ORGANIZATIONS_DF
Grade                   F_GRADE_VO.dat                 PER_GRADES_DF
Job                     F_JOB_VO.dat                   PER_JOBS_DFF
Location                F_LOCATION_VO.dat              PER_LOCATIONS_DF
Person                  F_PERSON_ETHNICITY_VO.dat      PER_ETHNICITIES_DFF
                        F_PERSON_VO.dat                PER_PERSONS_DFF
Person Documentation    F_PERSON_CITIZENSHIP_VO.dat    PER_CITIZENSHIPS_DFF
Work Relationship       F_ASSIGNMENT_VO.dat            PER_ASG_DF

Example .dat files and other FBL sample files are provided in the Data Conversion Reference Library

on My Oracle Support (document ID 1595261.1).

To load data for other flexfields, you:

Configure and deploy your flexfields using the Manage Flexfields task.

Enter your flexfield data in a supplied data (.dat) file and save it in .csv format.

Open a service request to have the flexfield data loaded from the .csv file to your environment.

Step 7: Deliver Your Data to the Oracle WebCenter Content Server

Three main methods of delivering your data to the WebCenter Content server exist:

Oracle Fusion HCM File Import and Export interface

WebCenter Content Document Transfer Utility

Remote Intradoc Client (RIDC)

Oracle Fusion HCM File Import and Export Interface

Deliver files individually to the WebCenter Content server as follows:

1. Open the File Import and Export page (Navigator - Tools - File Import and Export).

2. On the File Import and Export page, click the Upload icon in the Search Results section.

3. In the Upload File dialog box, browse for your zip file of data, and set the Account value to hcm/dataloader/import.

4. Click Save and Close. The zip file is uploaded to the hcm/dataloader/import account and appears automatically in the Search Results section of the File Import and Export page.

WebCenter Content automatically allocates a content ID to uploaded files. To see the content ID for a

file, select View - Columns - Content ID in the Search Results section of the File Import and Export

page. The search results now include the Content ID column:

WebCenter Content Document Transfer Utility

The WebCenter Content Document Transfer Utility for Oracle Fusion Applications is a feature-set

Java library that provides content export and import capabilities. You can evaluate the utility from the

Individual Component Downloads section of the Oracle WebCenter Content 11g R1 Downloads tab

on Oracle Technology Network (OTN):

http://www.oracle.com/technetwork/middleware/webcenter/content/downloads/index.html

(Note: Current customers can download the utility from Oracle Software Delivery Cloud.)

Open the Individual Components Download section on the Downloads tab, accept the license

agreement, and download the WebCenter Content Document Transfer Utility. Once the component

zip file is downloaded, extract the JAR file. The zip file also contains a useful readme file describing

the example invocation command shown in Figure 6.

java -classpath "oracle.ucm.fa_client_11.1.1.jar" oracle.ucm.client.UploadTool -url=https://{host}/cs/idcplg

--username=<provide_user_name> --password=<provide_password> -primaryFile="<file_path_with_filename>" --dDocTitle="<provide_Zip_Filename>" dDocAccount=hcm/dataloader/import

e.g.

java -cp "oracle.ucm.fa_client_11.1.1.jar" oracle.ucm.client.UploadTool -url="https://{host}/cs/idcplg" --username="HCM_IMPL" --password="Welcome1" -primaryFile="/scratch/HRDataFile.zip" --dDocTitle="Department Load File" -dSecurityGroup="FAFusionImportExport" --dDocAccount="hcm/dataloader/import"

Sample output:

Oracle WebCenter Content Document Transfer Utility

Oracle Fusion Applications

Copyright (c) 2013, Oracle.

All rights reserved.

Performing upload (CHECKIN_UNIVERSAL) ...

Upload successful.

[dID=21537 | dDocName=UCMFA021487]

Figure 6. Example Invocation Command for the WebCenter Content Document Transfer Utility

The dDocName value (which is equivalent to the content ID) returned by the above statement is required for the LoaderIntegrationService call described in Step 8.

Review the readme file downloaded with the WebCenter Content Document Transfer Utility for a list

of all parameters, including advanced networking options for resolving proxy issues.

Remote Intradoc Client (RIDC)

The RIDC communication API removes data abstractions to Oracle Content Server while still

providing a wrapper to handle connection pooling, security, and protocol specifics. This is the

recommended approach if you want to use native Java APIs.

RIDC supports three protocols: Intradoc, HTTP, and JAX-WS.

Intradoc

The Intradoc protocol communicates with Oracle Content Server over the Intradoc socket port

(typically, 4444). This protocol does not perform password validation and so requires a trusted

connection between the client and Oracle Content Server. Clients that use this protocol are expected to

perform any required authentication. Intradoc communication can also be configured to run over SSL.

HTTP

RIDC communicates with the web server for Oracle Content Server using the Apache HttpClient

package. Unlike Intradoc, this protocol requires authentication credentials for each request.

JAX-WS

The JAX-WS protocol is supported only in Oracle WebCenter Content 11g with Oracle Content

Server running in Oracle WebLogic Server. To provide JAX-WS support, several additional JAR files

are required.

For more information, see:

Oracle WebCenter Content Developer's Guide for Content Server (specifically the section Using

RIDC to Access Content Server)

Oracle Fusion Middleware Developer's Guide for Remote Intradoc Client (RIDC)

Once the RIDC Component Library download file has been unzipped, include the following JAR files

in your project. Figure 7 shows an example from Oracle JDeveloper.

Figure 7. Including Libraries in a JDeveloper Project

Figure 8 shows example code for uploading a file into WebCenter Content. Parameter details are

provided in Table 8.

import java.io.File;
import java.io.FileInputStream;
import java.io.IOException;
import java.io.InputStream;

import oracle.stellent.ridc.IdcClient;
import oracle.stellent.ridc.IdcClientException;
import oracle.stellent.ridc.IdcClientManager;
import oracle.stellent.ridc.IdcContext;
import oracle.stellent.ridc.model.DataBinder;
import oracle.stellent.ridc.model.TransferFile;
import oracle.stellent.ridc.protocol.ServiceResponse;

public class UploadFile {

    public static void main(String[] arg) throws Exception {
        try {
            IdcClientManager m_clientManager = new IdcClientManager();
            // Replace with the relevant URL
            IdcClient idcClient =
                m_clientManager.createClient("https://{host}/cs/idcplg");
            // Replace with the relevant user name and password
            IdcContext userContext = new IdcContext("HCM_ADMIN", "Password");
            checkin(idcClient, userContext,
                    "/scratch/jdoe/ridc/BusinessUnit1.zip", // replace with fully qualified path to source file
                    "Document",                  // content type
                    "BusinessUnit1",             // doc title
                    userContext.getUser(),       // author
                    "FAFusionImportExport",      // security group
                    "hcm$/dataloader$/import$",  // account
                    "BU5");                      // dDocName - this is the content ID
        } catch (IdcClientException e) {
            e.printStackTrace();
        }
    }

    /**
     * Checks a file in to WebCenter Content using the CHECKIN_UNIVERSAL service.
     *
     * @param idcClient      client connected to the content server
     * @param userContext    context holding the user credentials
     * @param sourceFileFQP  fully qualified path to source content
     * @param contentType    content type
     * @param dDocTitle      doc title
     * @param dDocAuthor     author
     * @param dSecurityGroup security group
     * @param dDocAccount    account
     * @param dDocName       dDocName
     *
     * @throws IdcClientException
     */
    public static void checkin(IdcClient idcClient, IdcContext userContext,
                               String sourceFileFQP, String contentType,
                               String dDocTitle, String dDocAuthor,
                               String dSecurityGroup, String dDocAccount,
                               String dDocName) throws IdcClientException {
        InputStream is = null;
        try {
            String fileName =
                sourceFileFQP.substring(sourceFileFQP.lastIndexOf('/') + 1);
            is = new FileInputStream(sourceFileFQP);
            long fileLength = new File(sourceFileFQP).length();
            TransferFile primaryFile = new TransferFile();
            primaryFile.setInputStream(is);
            primaryFile.setContentType(contentType);
            primaryFile.setFileName(fileName);
            // Note: when using the HTTP protocol (not Intradoc or JAX-WS), you must
            // explicitly set the content length when supplying an InputStream to the
            // transfer file; otherwise, a 0-byte file results on the server.
            primaryFile.setContentLength(fileLength);

            DataBinder request = idcClient.createBinder();
            request.putLocal("IdcService", "CHECKIN_UNIVERSAL");
            request.addFile("primaryFile", primaryFile);
            request.putLocal("dDocTitle", dDocTitle);
            request.putLocal("dDocAuthor", dDocAuthor);
            request.putLocal("dDocType", contentType);
            request.putLocal("dSecurityGroup", dSecurityGroup);
            // If the server is set up to use accounts, an account MUST be specified,
            // even if it is the empty string; supplying null results in the content
            // server attempting to apply an account named "null" to the content.
            request.putLocal("dDocAccount", dDocAccount == null ? "" : dDocAccount);
            if (dDocName != null && dDocName.trim().length() > 0) {
                request.putLocal("dDocName", dDocName);
            }

            // Execute the request
            ServiceResponse response =
                idcClient.sendRequest(userContext, request); // throws IdcClientException

            // Getting the binder closes the response automatically
            DataBinder responseBinder =
                response.getResponseAsBinder(); // throws IdcClientException
        } catch (IOException e) {
            e.printStackTrace(System.out);
        } finally {
            if (is != null) {
                try {
                    is.close();
                } catch (IOException ignore) {
                }
            }
        }
    }
}

Figure 8. Example Java Code for Uploading Files to Oracle WebCenter Content

TABLE 8. ATTRIBUTES OF THE DATABINDER OBJECT USED IN FIGURE 8

PARAMETER         MEANING                                                                     COMMENTS
IdcService        The service to invoke.                                                      CHECKIN_UNIVERSAL for uploading files
dDocName          The content ID for the content item.                                        Value passed to LoaderIntegrationService
dDocAuthor        The content item author (contributor).
dDocTitle         The content item title.
dDocType          The content item type.                                                      Document
dSecurityGroup    The security group, such as Public or Secure.                               FAFusionImportExport
dDocAccount       The account for the content item. Required only if accounts are enabled.    hcm$/dataloader$/import$
primaryFile       The absolute path to the location of the file as seen from the server.

Importing and Loading Data to Oracle Fusion HCM

Step 8: Import Source Data to the Stage Tables

Once you have placed the zip file containing your .dat files in account hcm/dataloader/import on

the WebCenter Content server, you can either import or import and load the data:

From the Load HCM Data for Coexistence page

Using the LoaderIntegrationService web service

Importing and Loading from the Load HCM Data for Coexistence Page

To import or import and load a zip file from the hcm/dataloader/import account on the WebCenter

Content server:

1. Open the Data Exchange work area (Navigator - Workforce Management - Data Exchange).

2. In the Data Exchange work area, select the task Load HCM Data for Coexistence.

3. On the Load HCM Data for Coexistence page, click Import. The Import and Load HCM Data

dialog box opens.

4. In the Import and Load HCM Data dialog box, enter the content ID that you obtained when

loading the file to the WebCenter Content server using the File Import and Export interface.

5. Select an individual business object or All to load all business objects from the zip file.

6. Provide a meaningful batch name. Object names are prefixed with the batch name to provide a

unique batch name for each batch.

7. If you set the Loader Run Type parameter to Import, then data is imported to the stage tables.

You can review the results of this process and correct any import errors before proceeding with the

load to the application tables. When you first start to use File-Based Loader, this is the

recommended approach.

If you set the Loader Run Type parameter to Import and Load Batch Data, then data is

imported to the stage tables. All objects imported successfully to the stage tables are then loaded

automatically to the application tables. You may prefer this approach when import errors are few

and your data-loading is routine.

8. Click Submit.

Your data is imported to the stage tables and also loaded to the application tables, if appropriate. For next steps, see Reviewing the Import Log and Fixing Import Errors.

Importing and Loading Using the Loader Integration Service Web Service

You can find service invocation details for the LoaderIntegrationService in the public Oracle

Enterprise Repository (OER) at http://fusionappsoer.oracle.com.

Figure 9. The Loader Integration Service in OER

Review the documentation for the LoaderIntegrationService asset in OER.

Sample Code to Invoke the Loader Integration Service

Several ways exist of invoking Oracle Fusion web services. This section explains how to invoke web

services using generated proxy classes. You can generate your own proxy classes by providing the URL

of the service WSDL file to your generator of choice. These proxy classes are then used to invoke the

web service.

Note: Oracle Fusion Web services are protected by Oracle Web Services Manager (OWSM) security

policies. Refer to the Oracle Fusion Middleware Security and Administrator's Guide for Web Services

for further details.

Figure 10 shows how to call the LoaderIntegrationService.

http://{Host}/hcmCommonBatchLoader/LoaderIntegrationService

<soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/">
  <soap:Body>
    <ns1:submitBatch xmlns:ns1="http://xmlns.oracle.com/apps/hcm/common/batchLoader/core/loaderIntegrationService/types/">
      <ns1:ZipFileName></ns1:ZipFileName>
      <ns1:BusinessObjectList></ns1:BusinessObjectList>
      <ns1:BatchName></ns1:BatchName>
      <ns1:LoadType></ns1:LoadType>
      <ns1:AutoLoad></ns1:AutoLoad>
    </ns1:submitBatch>
  </soap:Body>
</soap:Envelope>

Figure 10. Sample Service Interface for the LoaderIntegrationService

TABLE 9. PARAMETERS OF THE LOADER INTEGRATION SERVICE

PARAMETER             DESCRIPTION
ZipFileName           Content ID of the file on the WebCenter Content server (the same value as dDocName in the WebCenter Content Java call)
BusinessObjectList    Name of the business object to be loaded. Repeat this tag for each business object to be loaded.
BatchName             Name of the batch when it is created in Oracle Fusion.
LoadType              The type of load. Can be either FBL or HR2HR. Use FBL.
AutoLoad              Indicates whether to load the data into Oracle Fusion.
                      N = Import only
                      Y = Import and Load
                      Note: This parameter in the service replaces the setup parameter Load HCM Data Files Automatically on the Manage HCM Configuration for Coexistence page.
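
As an illustration of how the parameters fit into the request, the following sketch assembles the submitBatch body from Figure 10 as a string, repeating the BusinessObjectList tag once per business object. The class and method names and the batch name are hypothetical. The sketch builds the message body only; it does not add the security headers that the service policy requires, and it does not send the request.

```java
import java.util.Arrays;
import java.util.List;

/** Hypothetical builder for the submitBatch request body; it does not send anything. */
final class SubmitBatchPayloadSketch {
    private static final String SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/";
    private static final String TYPES_NS =
        "http://xmlns.oracle.com/apps/hcm/common/batchLoader/core/loaderIntegrationService/types/";

    /** Escapes the characters that are significant in XML element text. */
    static String escape(String text) {
        return text.replace("&", "&amp;").replace("<", "&lt;").replace(">", "&gt;");
    }

    private static String element(String name, String value) {
        return "      <ns1:" + name + ">" + escape(value) + "</ns1:" + name + ">\n";
    }

    /** Builds the envelope; autoLoad false means import only, true means import and load. */
    static String build(String contentId, List<String> businessObjects,
                        String batchName, boolean autoLoad) {
        StringBuilder xml = new StringBuilder();
        xml.append("<soap:Envelope xmlns:soap=\"").append(SOAP_NS).append("\">\n");
        xml.append("  <soap:Body>\n");
        xml.append("    <ns1:submitBatch xmlns:ns1=\"").append(TYPES_NS).append("\">\n");
        xml.append(element("ZipFileName", contentId));
        for (String businessObject : businessObjects) {
            xml.append(element("BusinessObjectList", businessObject)); // one tag per object
        }
        xml.append(element("BatchName", batchName));
        xml.append(element("LoadType", "FBL"));
        xml.append(element("AutoLoad", autoLoad ? "Y" : "N"));
        xml.append("    </ns1:submitBatch>\n");
        xml.append("  </soap:Body>\n");
        xml.append("</soap:Envelope>\n");
        return xml.toString();
    }

    public static void main(String[] args) {
        // Content ID taken from the sample upload output; the batch name is made up
        System.out.print(build("UCMFA021487",
                               Arrays.asList("Person", "WorkRelationship"),
                               "InitialLoad", false));
    }
}
```

In practice, generated proxy classes produce this message for you; seeing the raw body is mainly useful when comparing a failing request against Figure 10.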

Implications of Security Policy on the LoaderIntegrationService

The LoaderIntegrationService is secured using the following policy:

oracle/wss11_saml_or_username_token_with_message_protection_service_policy

Therefore, when a client calls the service it must satisfy the message-protection policy to ensure that

the payload is transported encrypted or sent over the SSL transport layer.

Note: Previously this service could be called directly from the browser, supplying the parameters as

requested by the Oracle WSM Web Services Test Page. However, the test page is used to test policies

that implement username tokens to authenticate users without message protection. The policy used to

secure the LoaderIntegrationService precludes the use of the Web Services Test Page for this reason.

A client policy that can be used to meet this requirement is:

oracle/wss11_username_token_with_message_protection_client_policy

To use this policy, the message must be encrypted using a public key provided by the server. When the

message reaches the server it can be decrypted by the server's private key. A KeyStore is used to import

the certificate and is referenced in the subsequent client code.

The public key can be obtained from the certificate provided in the service WSDL file. See Figure 11

(the certificate is Base64 encoded).

Figure 11. Example of a Certificate in a Service WSDL File

To use the key in this certificate, you need to create a local KeyStore and import the certificate into it:

1. Create a new file with any name you like. You must change the extension to .cer to indicate that it is

a certificate file.

2. Using a text editor, open the file you just created and enter "-----BEGIN CERTIFICATE-----" on

the first line.

3. On the next line, paste the Base64-encoded certificate that you copied from the service WSDL file.

4. Add "-----END CERTIFICATE-----" on a new line and save the file. Now you have a certificate

containing the public key from the server.

5. Open the command line and change the directory to $JAVA_HOME/bin. Use the following

command to create a KeyStore and import the public key from the certificate.

keytool -import -file <Provide the path of the .cer certificate file> -alias orakey -keypass welcome -keystore <Provide the path where the jks file needs to be created (including the file name)> -storepass welcome

6. You can find the KeyStore file in the KeyStore path that you set.
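Steps 1 through 4 above can also be scripted. The following is a minimal sketch, assuming the Base64 text has already been copied out of the service WSDL file; the class WsdlCertificateWriter and its method names are hypothetical and are not part of any Oracle tooling. It wraps the Base64 text between the BEGIN and END markers and writes the result to a .cer file that keytool can then import.

```java
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper (not part of the Oracle tooling): wraps the Base64
// certificate text copied from the service WSDL file in certificate markers.
class WsdlCertificateWriter {

    static final String BEGIN = "-----BEGIN CERTIFICATE-----";
    static final String END = "-----END CERTIFICATE-----";

    // Builds the contents of the .cer file from the Base64 text.
    static String toPem(String base64FromWsdl) {
        // Remove spaces and line breaks picked up while copying.
        String compact = base64FromWsdl.replaceAll("\\s+", "");
        StringBuilder pem = new StringBuilder(BEGIN).append('\n');
        // Break the Base64 body into 64-character lines, the usual PEM layout.
        for (int i = 0; i < compact.length(); i += 64) {
            pem.append(compact, i, Math.min(i + 64, compact.length())).append('\n');
        }
        return pem.append(END).append('\n').toString();
    }

    public static void main(String[] args) throws Exception {
        if (args.length < 2) {
            System.err.println("Usage: WsdlCertificateWriter <base64-text> <output.cer>");
            return;
        }
        // args[0]: Base64 certificate text; args[1]: output file name.
        Path out = Paths.get(args[1]);
        Files.write(out, toPem(args[0]).getBytes(StandardCharsets.US_ASCII));
        System.out.println("Wrote " + out.toAbsolutePath());
    }
}
```

The resulting file can be passed to the keytool command in step 5 through the -file option.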

Once the client KeyStore has been created, you can call the service using the proxy classes. The

following parameters are used by the proxy class to encrypt and decrypt the message.

PARAMETER | DESCRIPTION

WSBindingProvider.USERNAME_PROPERTY | User name of the application user who has relevant privileges for importing and processing FBL data files.

WSBindingProvider.PASSWORD_PROPERTY | The password of the above user.

ClientConstants.WSSEC_KEYSTORE_TYPE | Type of the KeyStore you created. JKS (Java KeyStore) is widely used and is the most common type.

ClientConstants.WSSEC_KEYSTORE_LOCATION | Path of the client KeyStore file.

ClientConstants.WSSEC_KEYSTORE_PASSWORD | Password of your client KeyStore.

ClientConstants.WSSEC_ENC_KEY_ALIAS | Alias of the key you use to decrypt the SOAP message from the server.

ClientConstants.WSSEC_ENC_KEY_PASSWORD | Password of the key you use to decrypt the SOAP message.

ClientConstants.WSSEC_RECIPIENT_KEY_ALIAS | Alias of the key you use to encrypt the SOAP message to the server.
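Before the KeyStore location, password, and alias are passed to the proxy class, it can be useful to confirm that the KeyStore really contains the imported certificate. The following is a minimal sketch using only the standard java.security.KeyStore API; the class name KeyStoreAliasCheck is hypothetical, and the values orakey and welcome mentioned in the comments are the ones used in the keytool command earlier in this section.

```java
import java.io.FileInputStream;
import java.io.InputStream;
import java.security.KeyStore;

// Hypothetical pre-flight check (not part of the Oracle client libraries).
class KeyStoreAliasCheck {

    // Returns true if the JKS file holds a trusted certificate under the alias.
    static boolean hasCertificate(String keyStorePath, String storePassword, String alias)
            throws Exception {
        KeyStore keyStore = KeyStore.getInstance("JKS");
        try (InputStream in = new FileInputStream(keyStorePath)) {
            // Fails with an exception if the password or file format is wrong.
            keyStore.load(in, storePassword.toCharArray());
        }
        // A certificate imported with keytool -import is a trusted certificate entry.
        return keyStore.isCertificateEntry(alias);
    }

    public static void main(String[] args) throws Exception {
        if (args.length < 3) {
            System.err.println("Usage: KeyStoreAliasCheck <keystore.jks> <store-password> <alias>");
            return;
        }
        // For the example in this guide: <path to the jks file> welcome orakey
        boolean found = hasCertificate(args[0], args[1], args[2]);
        System.out.println(found ? "Certificate found." : "Certificate not found.");
    }
}
```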

How to Create a Proxy Class

Generate the JAX-WS proxy class for the LoaderIntegrationService using the wsimport command,

which is available at JAVA_HOME/bin:

wsimport -s <Provide the folder where the generated files need to be placed> -d <Provide the folder where the generated files need to be placed> <The Loader Integration Service URL>

For example:

wsimport -s "D:\LoaderIntegrationService" -d "D:\LoaderIntegrationService" https://{host}/hcmCommonBatchLoader/LoaderIntegrationService?wsdl

The files generated are placed in the following two folders:

com

sdo

Add the generated code to a JAR file:

zip loaderIntegrationProxy.jar -r *

How to Invoke the Web Service

Create the client class LoaderIntegrationServiceSoapHttpPortClient for invoking the

LoaderIntegrationService. The class must be created in the folder

com/oracle/xmlns/apps/hcm/common/batchloader/core/loaderintegrationservice:

package com.oracle.xmlns.apps.hcm.common.batchloader.core.loaderintegrationservice;

import java.util.ArrayList;
import java.util.Map;
import java.util.StringTokenizer;

import javax.xml.ws.BindingProvider;
import javax.xml.ws.WebServiceRef;

import weblogic.wsee.jws.jaxws.owsm.SecurityPolicyFeature;

public class LoaderIntegrationServiceSoapHttpPortClient {

    @WebServiceRef
    private static LoaderIntegrationService_Service loaderIntegrationService_Service;

    public static void main(String[] args) {
        // Expect exactly the four arguments shown in the run command.
        if (args.length < 4) {
            System.out.println("Usage: <ZipFileName> <BatchName> <AutoLoad> <BusinessObjectList>");
            return;
        }

        loaderIntegrationService_Service = new LoaderIntegrationService_Service();

        // Attach the client policy that satisfies the service's message-protection policy.
        SecurityPolicyFeature[] securityFeatures = new SecurityPolicyFeature[] {
            new SecurityPolicyFeature("oracle/wss11_username_token_with_message_protection_client_policy")
        };
        LoaderIntegrationService loaderIntegrationService =
            loaderIntegrationService_Service.getLoaderIntegrationServiceSoapHttpPort(securityFeatures);

        // Supply the user credentials and the client KeyStore details.
        BindingProvider wsbp = (BindingProvider) loaderIntegrationService;
        Map<String, Object> requestContext = wsbp.getRequestContext();
        requestContext.put(BindingProvider.USERNAME_PROPERTY, "Provide the applications username");
        requestContext.put(BindingProvider.PASSWORD_PROPERTY, "Provide the password");
        requestContext.put("oracle.webservices.security.keystore.type", "JKS");
        requestContext.put("oracle.webservices.security.keystore.location",
            "Provide the location of the default-keystore.jks (including the file name)");
        requestContext.put("oracle.webservices.security.keystore.password", "welcome");
        requestContext.put("oracle.webservices.security.encryption.key.alias", "orakey");
        requestContext.put("oracle.webservices.security.encryption.key.password", "welcome");
        requestContext.put("oracle.webservices.security.recipient.key.alias", "orakey");

        String fileName = args[0];
        String batchName = args[1];
        String autoLoad = args[2];
        String businessObj = args[3];

        // Split the comma-separated business object names into a list.
        StringTokenizer strTok = new StringTokenizer(businessObj, ",");
        ArrayList<String> businessObjList = new ArrayList<String>();
        while (strTok.hasMoreTokens()) {
            businessObjList.add(strTok.nextToken());
        }

        String response;
        try {
            response = loaderIntegrationService.submitBatch(fileName, businessObjList,
                                                            batchName, "FBL", autoLoad);
            System.out.println("The response received from the server is ...");
            System.out.println(response);
        } catch (ServiceException e) {
            // ServiceException is one of the classes generated by wsimport.
            System.out.println("Error occurred during the invocation of the service ...");
            e.printStackTrace();
        }
    }
}
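The client above splits the business object argument with StringTokenizer, which keeps any spaces typed around the commas as part of the object names. The following is a minimal sketch of a stricter alternative that trims each name and skips empty entries; the class BusinessObjectListParser is hypothetical and is not part of the generated proxy classes.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: parses the <BusinessObjectList> command-line argument.
class BusinessObjectListParser {

    // Splits on commas, trims surrounding spaces, and drops empty entries.
    static List<String> parse(String commaSeparated) {
        List<String> names = new ArrayList<String>();
        for (String token : commaSeparated.split(",")) {
            String name = token.trim();
            if (!name.isEmpty()) {
                names.add(name);
            }
        }
        return names;
    }

    public static void main(String[] args) {
        // Example input with a stray space and an empty entry.
        System.out.println(parse("Job, JobFamily,,"));
    }
}
```

The returned list could be passed to submitBatch in place of the list that the StringTokenizer loop builds.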

To generate the class file you need the following JAR file:

ws.api_1.1.0.0.jar

This JAR file is available at the following location:

$MIDDLEWARE_HOME/modules

If necessary, you can download the JAR file as part of JDeveloper. The JAR file is available at the

following location in the JDeveloper installation.

modules/ws.api_1.1.0.0.jar

Compile the Java code. The semicolon shown is the Windows classpath separator; on Linux or UNIX, use a colon instead.

javac -classpath <Provide the path of the folder where the JAX-WS files are generated>;<Provide the location of the ws.api_1.1.0.0.jar> LoaderIntegrationServiceSoapHttpPortClient.java

Run the class LoaderIntegrationServiceSoapHttpPortClient to invoke the Loader Integration Service:

java -classpath <Provide the path of the folder where the JAX-WS files are generated>;<Provide the location of the weblogic.jar>;<Provide the location of the jrf.jar> com.oracle.xmlns.apps.hcm.common.batchloader.core.loaderintegrationservice.LoaderIntegrationServiceSoapHttpPortClient <ZipFileName> <BatchName> <AutoLoad> <BusinessObjectList>

Importing Data to the Stage Tables

If you specify AutoLoad=N when you invoke the Loader Integration Service, then you need to import

and load data manually. If you specify AutoLoad=Y, then you can proceed to review the import log (as

described in Reviewing the Import Log and Fixing Import Errors).

To import your zip file, open the Data Exchange work area and select the task Load HCM Data for

Coexistence. On the Load HCM Data for Coexistence page, click Schedule. On the Schedule Request

page, select your zip file and specify either Import or Import and Load as the Run Type value. If you

select Import, then no attempt is made to load the data to the application tables. In this case, you run

the load process separately. You also specify a batch name value. This value forms the prefix for each

business object in your file. For example, if your file contains Job and Job Family objects and you

specify the batch name MyData, then your batch names are MyData:Job and MyData:JobFamily.
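The naming rule in the example above can be sketched as follows. This is an illustration only: the class BatchNames is hypothetical, and the removal of spaces from the object name (Job Family becoming JobFamily) is an assumption inferred from the single example in this guide, not a documented rule.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration of how per-object batch names are formed.
class BatchNames {

    // Prefixes each business object with the batch name, separated by a colon.
    static List<String> forObjects(String batchName, List<String> objectNames) {
        List<String> result = new ArrayList<String>();
        for (String objectName : objectNames) {
            // Assumption: spaces in the object name are removed, as in MyData:JobFamily.
            result.add(batchName + ":" + objectName.replace(" ", ""));
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> objects = new ArrayList<String>();
        objects.add("Job");
        objects.add("Job Family");
        System.out.println(forObjects("MyData", objects));
    }
}
```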

Reviewing the Import Log and Fixing Import Errors

In the Search Results section on the Load HCM Data for Coexistence page, click Refresh to see the

results of the import process. All import processes produce a log file. For successful imports, the log

file summarizes the process of unzipping the file and creating the object batches. If errors occur during

import to the stage tables, then the errors are written to the log file. You can access the log file for a

selected zip file in the Log column of the Search Results region.

Figure 12. Navigation: Data Exchange - Load HCM Data for Coexistence

Click the Log icon to download the log file to your desktop.