Memory, Register and Aspects of System by Canara ENG CLG

Dept. of ECE, CEC, Benjanapadavu VLSI Design

Module - 5

Memory, Registers and Aspects of System Timing

System timing considerations

1. A two-phase non-overlapping clock should be available throughout the system.

2. The clock phases are identified as Φ1 and Φ2, where Φ1 is assumed to lead Φ2.

3. Data bits to be stored are written to the registers, storage elements and subsystems on Φ1 of the clock, qualified by the write signal WR (i.e. WR is ANDed with Φ1).

4. Data written into the storage elements are assumed to have settled before Φ2 occurs, and Φ2 is used to refresh the stored data.

5. Delays through the data paths (combinational logic) are assumed to be less than the interval between the leading edges of the two clock phases.

6. Stored data may be read from the storage elements on the next Φ1 of the clock, ANDed with the read signal RD.

7. For the system to be stable, there must be at least one clocked storage element in series with every closed loop.
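The timing rules above can be made concrete with a small discrete-time model. The sketch below is illustrative only: time is sampled in integer steps, and the function names (`two_phase_clock`, `non_overlapping`, `gated`) and their parameters are hypothetical. It builds one period of Φ1 and Φ2 separated by dead time, checks rule 1 (the phases never overlap) and forms the write strobe WR.Φ1 of rule 3.

```python
def two_phase_clock(period, phi1_high, gap):
    """Return one period of (phi1, phi2) samples as lists of 0/1.

    Hypothetical model: phi1 is high for `phi1_high` samples, then `gap`
    dead samples, then phi2 is high, then another `gap` dead samples
    before the next phi1.
    """
    phi2_high = period - phi1_high - 2 * gap
    if phi1_high <= 0 or gap <= 0 or phi2_high <= 0:
        raise ValueError("period too short for the requested phases")
    phi1 = [1] * phi1_high + [0] * (period - phi1_high)
    phi2 = [0] * (phi1_high + gap) + [1] * phi2_high + [0] * gap
    return phi1, phi2


def non_overlapping(phi1, phi2):
    """Rule 1: the two phases are never high in the same sample."""
    return all(not (a and b) for a, b in zip(phi1, phi2))


def gated(control, phase):
    """AND a control signal (e.g. WR or RD) with a clock phase."""
    return [control & p for p in phase]


if __name__ == "__main__":
    phi1, phi2 = two_phase_clock(period=10, phi1_high=3, gap=1)
    print("phi1     ", phi1)
    print("phi2     ", phi2)
    print("WR.phi1  ", gated(1, phi1))
    print("no overlap:", non_overlapping(phi1, phi2))
```

The dead time (`gap`) is what makes the clock non-overlapping; setting it to zero is rejected because the two phases would then touch.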

Memory Elements

Selection of memory elements depends on the following parameters:

i. Area

ii. Power dissipation

iii. Volatility

Faculty: Mr. Mohan A.R Page 1

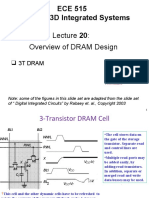

Three-transistor dynamic RAM Cell

Fig.5.1 shows the three-transistor dynamic RAM cell, in which a depletion-mode nMOS pull-up transistor is connected between VDD and the bus.

Fig.5.1: Three-transistor dynamic RAM

When the WR signal goes high, transistor T1 turns on and the data on the bus is stored on the gate capacitance of T2.

While both RD and WR are low, the bit is held for some time by the gate capacitance of T2.

To read the bit stored on the gate capacitance of T2, the RD signal is made high, which turns on transistor T3.

If the stored data is ‘1’, T2 is on and the bus is pulled down to ‘0’ through T3 and T2 to GND.

If the stored data is ‘0’, T2 is off and the bus is held at VDD by the pull-up transistor.

Thus the complement of the stored bit is always read onto the bus.
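A minimal behavioural sketch of the 3T cell, assuming ideal switches and ignoring leakage; the class name `ThreeTransistorCell` is hypothetical. It shows why the bus always carries the complement of the stored bit.

```python
class ThreeTransistorCell:
    """Hypothetical behavioural model of the 3T dynamic RAM cell.

    `stored` stands for the charge on the gate capacitance of T2.
    Switches are ideal; leakage and refresh are ignored.
    """

    def __init__(self):
        self.stored = 0

    def write(self, bus):
        # WR high: T1 conducts and the bus level is copied onto T2's gate.
        self.stored = bus

    def read(self):
        # RD high: T3 conducts. A stored 1 turns T2 on, so the bus is pulled
        # down through T3 and T2; a stored 0 leaves the pull-up holding it high.
        return 0 if self.stored else 1


if __name__ == "__main__":
    cell = ThreeTransistorCell()
    for bit in (0, 1):
        cell.write(bit)
        print(f"stored {bit} -> bus reads {cell.read()}")
```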

Area

Requires a relatively large area, since three transistors are needed to store one bit.

Power dissipation

Static power dissipation is nil, since current flows only when RD is high and a logic 1 is stored on T2.

Hence the actual dissipation associated with each stored bit depends on the pull-up transistor, the duration of the RD signal and the switching frequency.

Volatility

The cell is dynamic and holds the data only as long as sufficient charge remains on the gate capacitance Cg of T2.

One-transistor dynamic RAM Cell

Fig.5.2 shows the one-transistor dynamic RAM cell, which consists of an nMOS transistor and a storage capacitor Cm.

Fig.5.2: Single transistor dynamic RAM

During a write cycle, Cm is charged from the read/write line by making the row select line high.

Cm is charged or discharged according to the data present on the read/write line.

Data are read from, or written to, the capacitor through the read/write line under the control of the RD and WR signals.

To read the value stored on the capacitor, the row select line is again made high.

A sense amplifier is used to distinguish between a stored 0 and a stored 1.
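One first-order way to see why a sense amplifier is needed is charge sharing: when the row select transistor turns on, Cm shares its charge with the much larger capacitance of the read/write line, so the line voltage moves only slightly. The sketch below assumes the line is precharged to VDD/2, and all component values are hypothetical.

```python
def bitline_voltage(v_cell, c_cell, v_line, c_line):
    """Common voltage after charge sharing (charge conservation)."""
    return (c_cell * v_cell + c_line * v_line) / (c_cell + c_line)


if __name__ == "__main__":
    # Hypothetical values: 5 V supply, 30 fF cell, 300 fF line precharged to VDD/2.
    VDD, CM, CB = 5.0, 30e-15, 300e-15
    VPRE = VDD / 2
    v1 = bitline_voltage(VDD, CM, VPRE, CB)  # stored 1
    v0 = bitline_voltage(0.0, CM, VPRE, CB)  # stored 0
    print(f"stored 1: {v1:.3f} V, stored 0: {v0:.3f} V")
    print(f"signal for the sense amplifier: +/-{v1 - VPRE:.3f} V")
```

With a line ten times larger than the cell, a full 5 V stored level produces a swing of only a few hundred millivolts, which the sense amplifier must resolve.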

Fabricating a separate (explicit) capacitor into the IC requires a larger area, hence it is not a good design.

If the separate capacitor is omitted, the diffusion-to-substrate capacitance can be used to store the charge by extending the source diffusion layer.

The diffusion-to-substrate capacitance formed in this way is very small. To increase it, a polysilicon plate is placed over the extended source diffusion and connected to VDD, as shown in fig.5.3.

Fig.5.3: Stick diagram of single transistor dynamic RAM by extending the source diffusion layer

Thus Cm can be realized as a three-plate structure, as shown in fig.5.4.

Fig.5.4: Equivalent three plate structure

Area

Requires less area than the three-transistor dynamic RAM cell, since only one transistor is needed to store one bit.

Power dissipation

There is no static power dissipation, but switching energy is dissipated while writing to and reading from the storage element.

Volatility

The cell is volatile. Leakage current depletes the charge stored on Cm, so the data are held for only about 1 ms or less.
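The hold time can be estimated to first order by assuming a constant leakage current I discharging the storage capacitance C, so the time for the stored voltage to droop by ΔV is t = C·ΔV/I. The values below are hypothetical, chosen only to show how a figure of the order of 1 ms arises.

```python
def hold_time(c_store, delta_v, i_leak):
    """First-order hold time t = C * dV / I for a constant leakage current."""
    return c_store * delta_v / i_leak


if __name__ == "__main__":
    # Hypothetical values: 30 fF storage node, 1 V tolerable droop, 30 pA leakage.
    t = hold_time(30e-15, 1.0, 30e-12)
    print(f"hold time ~ {t * 1e3:.2f} ms")
```

Real leakage rises steeply with temperature, so refresh intervals are normally chosen with a wide margin below such an estimate.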

Pseudo-Static RAM

Dynamic storage elements are volatile, so their data must be periodically refreshed.

To overcome this, static storage cells are designed which hold the data indefinitely.

Fig.5.5 shows the pseudo-static RAM cell, in which RD and WR are synchronized with Φ1.

Fig.5.5: Pseudo-static RAM using nMOS logic

When WR.Φ1 goes high, T1 turns on and the data is stored on the gate capacitance of the first inverter; each inverter produces the complement of its input at its output.

When RD.Φ1 goes high, T2 turns on and the data is read onto the bus.

To hold the data for a longer time, a refresh path is added which connects the output of the second inverter to the input of the first inverter through a pass transistor T3.

When Φ2 goes high, T3 turns on and the output of the second inverter is applied as the input to the first inverter, restoring the charge on its gate.
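The write, read and Φ2 refresh sequence can be sketched at the level of clock phases. This is a simplified model under stated assumptions: a stored 1 is taken to leak to 0 after a fixed number of undriven phases (`retention`), the read path is assumed to return the true stored value, and all names are hypothetical.

```python
class PseudoStaticCell:
    """Hypothetical phase-level model of the pseudo-static RAM cell.

    `charge` is the level on the first inverter's input gate. As a
    simplifying assumption, a stored 1 leaks away to 0 after `retention`
    phases in which the gate is not driven.
    """

    def __init__(self, retention=3):
        self.retention = retention
        self.charge = 0
        self.idle = 0  # phases since the gate was last driven

    def _leak(self):
        self.idle += 1
        if self.idle >= self.retention:
            self.charge = 0

    def _second_inverter_out(self):
        first = 1 - self.charge  # first inverter complements the gate level
        return 1 - first         # second inverter restores the true value

    def phase1(self, wr=0, rd=0, bus=0):
        """Phi1: write through T1 if WR is high; read through T2 if RD is high."""
        if wr:
            self.charge, self.idle = bus, 0
        else:
            self._leak()
        return self._second_inverter_out() if rd else None

    def phase2(self, refresh=True):
        """Phi2: T3 feeds the second inverter's output back to the first input."""
        if refresh:
            self.charge, self.idle = self._second_inverter_out(), 0
        else:
            self._leak()  # models a missing or stopped Phi2


def demo(refresh):
    """Write a 1, idle for several clock cycles, then read it back."""
    cell = PseudoStaticCell(retention=3)
    cell.phase1(wr=1, bus=1)
    for _ in range(5):
        cell.phase2(refresh=refresh)
        cell.phase1()
    cell.phase2(refresh=refresh)
    return cell.phase1(rd=1)


if __name__ == "__main__":
    print("with Phi2 refresh:   ", demo(True))
    print("without Phi2 refresh:", demo(False))
```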

To design a pseudo-static RAM in CMOS logic, the nMOS pass transistors are replaced by transmission gates, as shown in fig.5.6.

Fig.5.6: Pseudo-static RAM using CMOS logic

Area

Requires more area than the three-transistor and one-transistor dynamic RAM cells, since two inverters are used to store one bit.

Power dissipation

Static power is dissipated in the inverters (in the nMOS version, each ratioed inverter draws current whenever its output is low).

Volatility

The cell is effectively non-volatile (it holds its data indefinitely) as long as the Φ2 refresh signal is regularly applied.

Four-transistor Dynamic and Six-transistor Static CMOS Memory Cells

The arrangement of the four-transistor dynamic cell for storing one bit is shown in fig.5.7 (a). Each bit is stored on the gate capacitances of the two n-transistors T1 and T2.

Both the dynamic and the static CMOS memory cells use two buses: bit and $\overline{\text{bit}}$.

To perform a write operation, the bit and $\overline{\text{bit}}$ buses are pre-charged to VDD in coincidence with Φ1 of a two-phase clock. The buses are pre-charged through transistors T5 and T6.

When the column select line is high in coincidence with Φ2, either the bit or the $\overline{\text{bit}}$ bus is discharged by the logic level present on the I/O bus lines.

Fig.5.7: Dynamic and Static memory Cells

The row select line is activated at the same time as the column select line, and the bit line states are written via T3 and T4 and stored on the gate capacitances Cg1 and Cg2 of T1 and T2.

T1 and T2 are cross-connected so that they take up complementary states when the row select line is high.

Once the select lines are deactivated, the states of T1 and T2 are remembered until the gate charges leak away.

To perform a read operation, the bit and $\overline{\text{bit}}$ buses are again pre-charged to VDD in coincidence with Φ1 through transistors T5 and T6.

If a ‘1’ has been stored, T2 is on and T1 is off. The $\overline{\text{bit}}$ line is therefore discharged to logic 0 through T2, and the stored bit is read onto the bus.
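The write and read sequences of the four-transistor cell can be traced with a small logic-level model. It assumes ideal switches, treats a stored 1 as T2 on and T1 off (as in the notes), and takes the gate of T2 to be charged from the bit bus and the gate of T1 from the complementary bus; these connections and the class name are assumptions for illustration.

```python
class FourTransistorCell:
    """Hypothetical logic-level model of the 4T dynamic CMOS cell.

    g1, g2: charge on the gates of T1 and T2 (Cg1, Cg2).
    bit, bit_bar: levels on the two buses.
    """

    def __init__(self):
        self.g1, self.g2 = 1, 0  # arbitrary initial state (stored 0)
        self.bit = self.bit_bar = 1

    def precharge(self):
        # Phi1: T5 and T6 pull both buses up to VDD.
        self.bit = self.bit_bar = 1

    def write(self, value):
        self.precharge()              # Phi1
        if value:                     # Phi2: I/O lines discharge one bus
            self.bit_bar = 0
        else:
            self.bit = 0
        # Row select: T3/T4 pass the bus levels to the storage gates.
        self.g2, self.g1 = self.bit, self.bit_bar

    def read(self):
        self.precharge()              # Phi1: both buses back to VDD
        # Row select: the storage transistor that is on discharges its bus.
        if self.g2:
            self.bit_bar = 0          # T2 on -> bit_bar pulled low
        if self.g1:
            self.bit = 0              # T1 on -> bit pulled low
        return self.bit               # bit bus carries the stored value


if __name__ == "__main__":
    cell = FourTransistorCell()
    for value in (1, 0):
        cell.write(value)
        print(f"wrote {value}, read {cell.read()}, bit_bar={cell.bit_bar}")
```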

The arrangement of the six-transistor static cell for storing one bit is shown in fig.5.7 (b). Each of the transistors T1 and T2 of fig.5.7 (a) is replaced by an inverter.

The write and read operations of the six-transistor static cell are similar to those of the four-transistor dynamic cell.

These cells are incapable of sinking large charges quickly, so RAM arrays use sense amplifier circuits such as the one shown in fig.5.7(c).

Transistors T1, T2, T3 and T4 form a flip-flop circuit.

While the sense line is inactive, the state of the bit lines is reflected on the gate capacitances of T1 and T3.

Current flowing from VDD through an on transistor helps to maintain the state of the bit lines and predetermines the state that the sense flip-flop will take up when the sense line is activated.

The transistor geometries (W and L) of the sense amplifier are chosen so that it amplifies the current-sinking capability of the cell.

CMOS Pseudo-static D flip-flop

Fig.5.8 shows the CMOS pseudo-static D flip-flop, in which the LD signal is synchronized with Φ1.

When LD.Φ1 goes high, transmission gate T1 turns on and the data D is stored on the gate capacitance of the first inverter; the complemented value appears at the output of the first inverter.

This value is in turn stored on the gate capacitance of the second inverter, whose output is complemented again, giving the true data D.

Fig.5.8: CMOS Pseudo-static D flip-flop

To hold the data for a longer time, a refresh path is added which connects the output of the second inverter to the input of the first inverter through transmission gate T2.

LD synchronized with Φ2 (LD.Φ2) is applied as the control signal for transmission gate T2.

When LD.Φ2 goes high, transmission gate T2 turns on and the output of the second inverter is applied as the input to the first inverter.

Testing and Verification

Introduction

Testing is an organized process to verify the behavior, performance, and reliability of a device or system against its design specifications.

It aims to make a device or system as defect-free as possible.

Testing a die (chip) can occur at the following levels:

i. Wafer level

ii. Packaged chip level

iii. Board level

iv. System level

v. Field level

By detecting a malfunctioning chip early, the manufacturing cost can be kept low. For instance, the approximate cost to a company of detecting a fault at the various levels is at least:

i. Wafer $0.01–$0.10

ii. Packaged chip $0.10–$1

iii. Board $1–$10

iv. System $10–$100

v. Field $100–$1000

Obviously, if faults can be detected at the wafer level, the cost of manufacturing is lower.

Logic Verification

In the design of integrated circuits, at all levels of abstraction, verification tools compare the design at different levels to make sure that the designers or the optimization tools have not introduced errors, particularly logic errors, during the synthesis process.

Due to the high complexity of VLSI designs and of synthesis tools, logic verification has become increasingly important.

Logic verification detects any discrepancy between the functions implemented by the two logic designs being compared.

Fig. 5.9: Functional equivalence at various levels of abstraction

Fig.5.9 shows the functional equivalence at various levels of abstraction.

To check functional equivalence, the two descriptions of the chip (e.g., one at the gate level and one at the functional level) are simulated and their outputs are compared for all the applied inputs.

Verification tools are used to check functional equivalence. These tools verify that a lower-level implementation is equivalent to a higher-level one.
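The simulation-based equivalence check described above can be sketched for a small block: a behavioural full adder (higher level) and a NAND-only gate-level full adder (lower level) are simulated on every input vector and their outputs compared. Exhaustive simulation is only practical for a few inputs, and the function names are hypothetical.

```python
from itertools import product


def adder_behavioural(a, b, cin):
    """Higher-level description: arithmetic sum of three bits."""
    total = a + b + cin
    return total & 1, total >> 1  # (sum, carry)


def nand(x, y):
    return 1 - (x & y)


def adder_gate_level(a, b, cin):
    """Lower-level description built only from 2-input NAND gates."""
    t1 = nand(a, b)
    s1 = nand(nand(a, t1), nand(b, t1))       # a XOR b
    t4 = nand(s1, cin)
    s = nand(nand(s1, t4), nand(cin, t4))     # s1 XOR cin
    cout = nand(t1, t4)                       # a.b + s1.cin
    return s, cout


def equivalent(f, g, n_inputs):
    """Compare f and g on every input vector; report the first mismatch."""
    for vec in product((0, 1), repeat=n_inputs):
        if f(*vec) != g(*vec):
            return False, vec
    return True, None


if __name__ == "__main__":
    print(equivalent(adder_behavioural, adder_gate_level, 3))
```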

Verification tools can be:

i. Formal verification tools, using for example mathematical models, Boolean equivalence checking, or Binary Decision Diagrams (BDDs)

ii. Simulation tools

• A simulator uses mathematical models to represent the behavior of circuit components.

• For a given set of input signals, the simulator solves for the signals inside the circuit.

• Simulators come in a wide variety, depending on the level of accuracy and the simulation speed desired:

a. circuit simulation

b. switch-level simulation

c. logic simulation

d. functional simulation

The behavioral specification might be a verbal description, a plain-language textual specification, a description in a high-level computer language such as C or in a hardware description language such as VHDL or Verilog, or simply a table of inputs and required outputs.

At the RTL (register-transfer level), the HDL description is converted into a set of registers and combinational logic. The combinational logic is optimized using algebraic and/or Boolean techniques.

The structural specification converts the combinational logic into a switch-level description.

The physical specification converts the switch-level description into layer (layout) specifications.

Logic Verification Principles

Testbenches and Harnesses

A verification test bench or harness is a piece of HDL code that is placed as a wrapper around a core piece of HDL to apply and check test vectors.

In the simplest test bench, input vectors are applied to the module under test and, at each cycle, the outputs are examined to determine whether they match a predefined set of expected data.

The expected outputs can be derived from a golden model and either saved to a file or computed on the fly.

Simulators usually provide settable breakpoints and single- or multiple-stepping abilities, allowing the designer to step through a test sequence while debugging discrepancies.
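A testbench is normally written in an HDL; to keep the examples in one language, the sketch below uses Python to show the same structure. A hypothetical 4-bit counter stands in for the module under test, its expected outputs are computed on the fly by a golden model, and each cycle's output is compared against the expectation.

```python
import random


class CounterUnderTest:
    """Hypothetical 'module under test': 4-bit counter with reset and enable."""

    def __init__(self):
        self.q = 0

    def clock(self, reset, enable):
        if reset:
            self.q = 0
        elif enable:
            self.q = (self.q + 1) & 0xF
        return self.q


def golden_counter(vectors):
    """Golden model: expected output for each cycle, computed on the fly."""
    q, expected = 0, []
    for reset, enable in vectors:
        q = 0 if reset else (q + enable) % 16
        expected.append(q)
    return expected


def run_testbench(dut, vectors):
    """Apply one vector per cycle and collect any mismatches."""
    mismatches = []
    for cycle, (vec, exp) in enumerate(zip(vectors, golden_counter(vectors))):
        got = dut.clock(*vec)
        if got != exp:
            mismatches.append((cycle, vec, got, exp))
    return mismatches


if __name__ == "__main__":
    rng = random.Random(1)  # fixed seed so the run is repeatable
    vectors = [(int(rng.random() < 0.1), rng.randint(0, 1)) for _ in range(200)]
    errors = run_testbench(CounterUnderTest(), vectors)
    print("PASS" if not errors else f"FAIL: {errors[:3]}")
```

Rerunning the same script after every design change is, in miniature, the regression testing described next.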

Regression Testing

Regression testing involves performing a set of simulations to automatically verify that no functionality has changed inadvertently in a module or set of modules.

During a design, it is common practice to run a regression test after design activities have concluded, to check that no new bugs have been introduced.

High-level language scripts are frequently used when running large testbenches, especially for regression testing.

Version Control

Combined with regression testing is the use of versioning, that is, the orderly management of different design iterations. Unix/Linux tools such as CVS or Subversion are useful for this.

Bug Tracking

Bug-tracking systems such as the Unix/Linux-based GNATS allow the management of a wide variety of bugs.

In these systems, each bug is entered and its location, nature, and severity are noted.

The person who discovered the bug is recorded, along with the person deemed responsible for fixing it.

Manufacturing Test Principles

Manufacturing tests verify that every gate and register in the chip functions correctly.

These tests are used after the chip is manufactured to verify that the silicon is intact.

Fault Models

To deal with the existence of good and bad parts, it is necessary to propose a fault model, i.e., a model of how faults occur and of their impact on circuits.

Types of fault models:

i. Stuck-At model

ii. Short Circuit / Open Circuit model

Stuck-At Faults

In the Stuck-At model, a faulty gate input is modeled as stuck at zero (Stuck-At-0, S-A-0) or stuck at one (Stuck-At-1, S-A-1), as shown in fig.5.10.

These faults most frequently occur due to gate oxide shorts (the nMOS gate to GND or the pMOS gate to VDD) or metal-to-metal shorts.

Fig.5.10: Stuck at faults

Short-Circuit and Open-Circuit Faults

In an open-circuit fault, a single transistor is permanently stuck in the open (off) state; in a short-circuit fault, a single transistor is permanently shorted (on), irrespective of its gate voltage.

Fig.5.11: Bridge faults

Two bridging (shorted) faults are shown in fig.5.11. The short S1 results in an S-A-0 fault at input A, while the short S2 modifies the function of the gate.

Fig.5.12 shows a 2-input NOR gate in which the nMOS transistor driven by input A is stuck open. The function displayed by the gate then becomes

$$Z = \overline{A+B} + \overline{B}\,Z'$$

where Z' is the previous state of the gate.

Fig.5.12: Open faults

A stuck-open fault therefore converts a combinational circuit into a sequential circuit.
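The sequential behaviour of the stuck-open NOR gate can be checked with a switch-level sketch. Assuming the nMOS transistor on input A is stuck open, the model derives the output from which networks conduct and compares it with the equation Z = NOT(A + B) + NOT(B)·Z'. A two-pattern test, (A, B) = (0, 0) followed by (1, 0), exposes the fault. The function names are hypothetical.

```python
def nor_good(a, b):
    return int(not (a or b))


def nor_stuck_open_a(a, b, z_prev):
    """2-input CMOS NOR whose nMOS transistor on input A is stuck open.

    The series pMOS pull-up conducts only when A = B = 0; the pull-down
    now conducts only through the B nMOS. With A = 1, B = 0 neither
    network conducts, so the output floats and keeps z_prev.
    """
    if a == 0 and b == 0:
        return 1          # pull-up on
    if b == 1:
        return 0          # pull-down through the B transistor
    return z_prev         # high impedance: charge on the output is retained


def nor_equation(a, b, z_prev):
    """Z = NOT(A + B) + NOT(B) . Z'"""
    return int((not (a or b)) or ((not b) and z_prev))


def two_pattern_detects():
    """Apply (0, 0) then (1, 0); a good gate gives 0, the faulty one holds 1."""
    z = nor_stuck_open_a(0, 0, z_prev=0)   # initialise the output to 1
    faulty = nor_stuck_open_a(1, 0, z_prev=z)
    return faulty != nor_good(1, 0)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            for z in (0, 1):
                print(a, b, z, nor_stuck_open_a(a, b, z), nor_equation(a, b, z))
    print("two-pattern test detects fault:", two_pattern_detects())
```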

Observability

Observability is the ability to observe, either directly or indirectly, the state of any node in the circuit.

Observability is relevant when you want to measure the output of a gate within a larger circuit to check that it operates correctly.

Given the limited number of nodes that can be directly observed, good chip designers aim to make gate outputs easy to observe.

Ideally, chip designers should be able to observe every gate output within an integrated circuit, either directly or with moderate indirection (i.e., after waiting a few cycles).

Controllability

Controllability is the ability to set (to 1) and reset (to 0) every internal node of the circuit.

Controllability is important when assessing the degree of difficulty of testing a particular signal within a circuit.

Good chip designers should aim to make all nodes easily controllable.

An easily controllable node would be directly settable via an input pad.

A node with little controllability, such as the most significant bit of a counter, might require many hundreds or thousands of cycles to get it to the right state.

For example, making all flip-flops resettable via a global reset signal is one step toward good controllability.
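To put a number on the counter example, assume a hypothetical n-bit binary up-counter that can only be reset to 0 and then clocked. Its MSB first becomes 1 at count 2^(n−1), so a 16-bit counter needs 32768 cycles before that node is set. The sketch computes this directly and by brute-force counting.

```python
def cycles_to_set_msb(n_bits):
    """Cycles after reset until the MSB of an n-bit up-counter first goes high."""
    return 2 ** (n_bits - 1)


def count_until_msb(n_bits):
    """Brute-force check: clock a counter from 0 until its MSB is 1."""
    count, cycles = 0, 0
    while not (count >> (n_bits - 1)) & 1:
        count = (count + 1) % (2 ** n_bits)
        cycles += 1
    return cycles


if __name__ == "__main__":
    for n in (4, 8, 16):
        print(n, cycles_to_set_msb(n), count_until_msb(n))
```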

Fault Coverage

Fault coverage indicates what percentage of the chip’s internal nodes are checked by a given set of test vectors.

To measure it, each circuit node is taken in sequence and held at 0 (S-A-0).

The circuit is simulated with the test vectors, and the outputs are compared with those of a known good machine in which no node is artificially set to 0.

If a difference is detected between the faulty machine and the good machine, the fault is marked as detected and the simulation of that fault is stopped.

The procedure is repeated with the node held at 1 (S-A-1). In turn, every node is artificially stuck at 1 and at 0.

The fault coverage of a set of test vectors is the percentage of the total nodes that can be detected as faulty when the vectors are applied.

To achieve world-class quality levels, circuits are required to have in excess of 98.5% fault coverage.
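The fault-coverage procedure above can be sketched as a small fault simulator. The circuit Z = A·B + C with internal node N = A·B is a hypothetical example; each of its five nodes is stuck at 0 and at 1 in turn, and simulation of a fault stops as soon as some vector distinguishes it from the good machine.

```python
NODES = ("A", "B", "C", "N", "Z")


def simulate(a, b, c, fault=None):
    """Evaluate Z = (A.B) + C with an optional stuck-at fault (node, value)."""
    def force(name, value):
        if fault is not None and fault[0] == name:
            return fault[1]
        return value

    a, b, c = force("A", a), force("B", b), force("C", c)
    n = force("N", a & b)
    return force("Z", n | c)


def fault_coverage(vectors):
    """Percentage of single stuck-at faults detected, plus the undetected ones."""
    faults = [(node, v) for node in NODES for v in (0, 1)]
    detected = set()
    for fault in faults:
        for vec in vectors:
            if simulate(*vec, fault=fault) != simulate(*vec):
                detected.add(fault)
                break  # detected: stop simulating this fault
    return 100.0 * len(detected) / len(faults), sorted(set(faults) - detected)


if __name__ == "__main__":
    print(fault_coverage([(1, 1, 0)]))
    print(fault_coverage([(1, 1, 0), (0, 1, 0), (1, 0, 0), (0, 0, 1)]))
```

A single vector leaves most stuck-at-1 faults undetected, while the four-vector set reaches 100% for this small circuit.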

Automatic Test Pattern Generation (ATPG)

Manufacturing test would ideally check every node in the circuit to prove that it is not stuck.

To prove that each node is not stuck, a sequence of test vectors must be applied to the circuit.

Generating test vectors (test patterns) by hand is tedious, hence Automatic Test Pattern Generation (ATPG) tools are used.

ATPG automatically generates the vectors, or input patterns, that are required to check a device for faults.

The vectors are applied sequentially to the device under test, and the device's response to each set of inputs is compared with the expected response from a good circuit.

An 'error' in the response of the device means that it is faulty.

The effectiveness of ATPG is measured primarily by the fault coverage achieved and by the number of patterns generated.
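Practical ATPG tools use structural algorithms such as the D-algorithm or PODEM; as a much simpler stand-in, the sketch below finds a test vector for each stuck-at fault by exhaustive search, then drops every other fault that the chosen vector also detects. The circuit Z = (A + B)·C and all names are hypothetical, and exhaustive search is only feasible for very small circuits.

```python
from itertools import product

NODES = ("A", "B", "C", "N", "Z")
FAULTS = [(node, v) for node in NODES for v in (0, 1)]


def circuit(a, b, c, fault=None):
    """Z = (A + B) . C with internal node N = A + B and an optional stuck-at fault."""
    def f(name, value):
        return fault[1] if fault and fault[0] == name else value

    a, b, c = f("A", a), f("B", b), f("C", c)
    n = f("N", a | b)
    return f("Z", n & c)


def detects(vec, fault):
    return circuit(*vec, fault=fault) != circuit(*vec)


def atpg():
    """Toy ATPG: exhaustive search per target fault, with fault dropping."""
    patterns, remaining = [], list(FAULTS)
    while remaining:
        target = remaining[0]
        vec = next((v for v in product((0, 1), repeat=3) if detects(v, target)), None)
        if vec is None:  # no vector detects it: the fault is untestable
            remaining.pop(0)
            continue
        patterns.append(vec)
        remaining = [flt for flt in remaining if not detects(vec, flt)]
    return patterns


if __name__ == "__main__":
    print(atpg())
```

Fault dropping is what keeps the pattern count low: here four vectors cover all ten faults.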

Delay Fault Testing

A delay fault increases the input-to-output delay of one logic gate at a time, while the logic function of the circuit is untouched.

For example, consider an inverter composed of parallel-connected nMOS and pMOS transistors, as shown in fig.5.13.

Fig.5.13: Delay fault

If an open circuit occurs in the source connection to GND of one of the nMOS transistors, the gate still functions, but with an increased fall propagation delay (tpdf).

The fault now becomes sequential, as the detection of the fault depends on the previous state of the gate.

Design for Testability

Design for testability (DFT) refers to those design techniques that make the task of subsequent testing easier. There is no single methodology that solves all testing problems, nor any single DFT technique that is effective for all kinds of circuits.

The keys to designing testable circuits are controllability and observability.

Good observability and controllability reduce the cost of manufacturing test because they allow high fault coverage with relatively few test vectors.

The main approaches to Design for Testability (DFT) are:

1. Ad hoc testing

2. Scan-based approaches

3. Built-in self-test (BIST)

Ad Hoc Testing

Ad hoc testing is useful only for small designs where scan, ATPG, and BIST are not available.

Common techniques used for ad hoc testing are:

1. Partitioning large sequential circuits

2. Adding test points

3. Adding multiplexers

4. Providing for easy state reset

Large circuits should be partitioned into smaller sub-circuits to reduce test costs. One of the most important steps in designing a testable chip is to first partition the chip appropriately, so that for each functional module there is an effective DFT technique to test it. Partitioning must be done at every level of the design process, from architecture to circuit, whether testing is considered or not. Partitioning can be functional (according to functional module boundaries) or physical (based on circuit topology).

Test access points must be inserted to enhance the controllability and observability of the circuit.

Multiplexers can be used to provide alternative signal paths during testing.

Any design should always have a method of resetting the internal state of the chip within a single cycle or at most a few cycles. A power-on reset mechanism controllable from the primary inputs is the most effective and widely used approach.

Scan Design

The scan-design strategy for testing provides observability and controllability at each register.

The registers operate either in normal mode or in scan mode.

In normal mode, the registers behave as expected. In scan mode, the registers are connected to form a giant shift register, called a scan chain, spanning the whole chip.

Fig.5.14: Scan based testing

By applying N clock pulses in scan mode, all N bits of state in the system can be shifted out and N new bits of state can be shifted in. Therefore, scan mode gives easy observability and controllability of every register in the system.

Scan-based testing is shown in fig.5.14. The scan register is a D flip-flop preceded by a multiplexer.

When the SCAN signal is deasserted, the register behaves as a conventional register, storing the data on its D input.

When SCAN is asserted, the data is loaded from the SI pin, which is connected in shift-register fashion to the Q output of the previous register in the scan chain.
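The mux-D scan register and the scan chain can be sketched at the register-transfer level. The model below is hypothetical: a 4-bit chain captures its D inputs in normal mode, then N = 4 clocks in scan mode shift the captured state out of SO while a new state is shifted in from SI.

```python
class ScanFlop:
    """Mux-D scan register: D input in normal mode, SI input in scan mode."""

    def __init__(self):
        self.q = 0

    def next_state(self, d, si, scan):
        return si if scan else d  # the multiplexer in front of the flip-flop


class ScanChain:
    """Hypothetical chain of ScanFlops: SI -> Q0 -> Q1 -> ... -> SO."""

    def __init__(self, n):
        self.flops = [ScanFlop() for _ in range(n)]

    def clock(self, d_inputs, scan, scan_in=0):
        """One clock edge; returns SO (the last flop's Q before the edge)."""
        so = self.flops[-1].q
        sources = [scan_in] + [f.q for f in self.flops[:-1]]  # previous Q feeds SI
        nxt = [f.next_state(d, si, scan)
               for f, d, si in zip(self.flops, d_inputs, sources)]
        for f, q in zip(self.flops, nxt):
            f.q = q
        return so

    def state(self):
        return [f.q for f in self.flops]


if __name__ == "__main__":
    chain = ScanChain(4)
    chain.clock(d_inputs=[1, 0, 1, 1], scan=False)       # normal-mode capture
    new_state = [0, 1, 1, 0]
    out = [chain.clock([0] * 4, scan=True, scan_in=b)    # N scan clocks
           for b in reversed(new_state)]
    print("shifted out:", out)   # captured state, last flop first
    print("new state:  ", chain.state())
```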

Test generation for this type of test architecture can be highly automated. ATPG techniques can be used for the combinational blocks.

The prime disadvantage is the area and delay impact of the extra multiplexer in the scan register.

Built-In Self-Test (BIST)

Built-in self-test (BIST) techniques allow a circuit to test itself.

These techniques add area to the chip for the test logic, but reduce the test time required and thus can lower the overall system cost.

One method of testing a module is to use signature analysis or cyclic redundancy checking. This involves using a pseudo-random sequence generator (PRSG) to produce the input signals for a section of combinational circuitry and a signature analyzer to observe the output signals.

A PRSG of length n is constructed from a linear feedback shift register (LFSR), which in turn is made of n flip-flops connected in a serial fashion, as shown in fig.5.15 (a).

The XOR of particular outputs is fed back to the input of the LFSR.

On reset, the registers must be initialized to a nonzero value, because the all-zero state maps onto itself and the LFSR would never leave it.

An n-bit LFSR (with suitably chosen feedback) cycles through 2^n − 1 states before repeating the sequence.

The inputs fed to the XOR are described by a characteristic polynomial indicating which bits are fed back.

In fig.5.15 (a), the XOR inputs are taken after the 1st and 3rd bits, hence the characteristic polynomial is 1 + x + x^3.
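A 3-bit LFSR can be sketched in a few lines. The feedback wiring is an assumption, since the figure is not reproduced here: Q1 receives Q2 XOR Q3, which implements the recurrence a[k] = a[k−2] XOR a[k−3] and hence the characteristic polynomial 1 + x + x^3. The sketch confirms the 2^3 − 1 = 7-state cycle and the all-zero lock-up state.

```python
def lfsr_step(state):
    """One clock of a 3-bit Fibonacci LFSR, state = (Q1, Q2, Q3).

    Assumed wiring: Q1 <- Q2 XOR Q3, Q2 <- Q1, Q3 <- Q2.
    """
    q1, q2, q3 = state
    return (q2 ^ q3, q1, q2)


def sequence(step, seed):
    """Follow `step` from `seed` until a state repeats; return the states seen."""
    seen, s = [], seed
    while s not in seen:
        seen.append(s)
        s = step(s)
    return seen


if __name__ == "__main__":
    states = sequence(lfsr_step, (1, 1, 1))
    print(len(states), ["".join(map(str, s)) for s in states])
    print(sequence(lfsr_step, (0, 0, 0)))  # the all-zero state maps to itself
```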

A complete feedback shift register (CFSR), shown in fig.5.15 (b), includes the zero state that may be required in some test situations.

Fig.5.15: Pseudo-random sequence generator

An n-bit LFSR is converted to an n-bit CFSR by adding an (n − 1)-input NOR gate connected to all bits except the last bit.

When in state 0…01, the next state is 0…00. When in state 0…00, the next state is 10…0. Otherwise, the sequence is the same, so the CFSR cycles through all 2^n states.
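The CFSR modification can be sketched by adding the NOR term to an assumed 3-bit LFSR with feedback Q1 <- Q2 XOR Q3 (polynomial 1 + x + x^3). The NOR of Q1 and Q2 is XORed into the feedback, which alters only the transitions out of 001 and 000; the names and wiring are assumptions, not taken from fig.5.15 (b).

```python
def cfsr_step(state):
    """3-bit CFSR: Q1 <- (Q2 XOR Q3) XOR NOR(Q1, Q2), Q2 <- Q1, Q3 <- Q2."""
    q1, q2, q3 = state
    nor = int(not (q1 or q2))  # NOR of all bits except the last
    return ((q2 ^ q3) ^ nor, q1, q2)


def sequence(step, seed):
    """Follow `step` from `seed` until a state repeats; return the states seen."""
    seen, s = [], seed
    while s not in seen:
        seen.append(s)
        s = step(s)
    return seen


if __name__ == "__main__":
    states = sequence(cfsr_step, (1, 1, 1))
    print(len(states), ["".join(map(str, s)) for s in states])
```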

A signature analyzer receives successive outputs of a combinational logic block and produces a syndrome that is a function of these outputs.

The syndrome is reset to 0 and then XORed with the output on each cycle. The syndrome is swizzled each cycle so that a fault in one bit is unlikely to cancel itself out.

At the end of a test sequence, the LFSR contains the syndrome, which is a function of all previous outputs. This can be compared with the correct syndrome to determine whether the circuit is good or bad.
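A serial signature analyzer can be sketched with a 3-bit LFSR, assuming each incoming output bit is XORed into the feedback (Q1 <- Q2 XOR Q3 XOR bit). The bit streams below are hypothetical; flipping a single bit changes the final syndrome. Because the LFSR is linear, a single-bit error always changes the signature, although some multi-bit errors can alias to the correct value.

```python
def signature(outputs):
    """Serial signature of a bit stream in a 3-bit LFSR (1 + x + x^3).

    Assumed structure: the syndrome starts at 0; each cycle the incoming
    bit is XORed into the feedback and the register shifts (the swizzle).
    """
    q1 = q2 = q3 = 0
    for bit in outputs:
        q1, q2, q3 = q2 ^ q3 ^ bit, q1, q2
    return (q1, q2, q3)


if __name__ == "__main__":
    good = [1, 0, 1, 1, 0, 0, 1, 0]    # hypothetical fault-free responses
    faulty = [1, 0, 1, 0, 0, 0, 1, 0]  # same stream with one bit flipped
    print("good:  ", signature(good))
    print("faulty:", signature(faulty))
```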

Built-In Logic Block Observation (BILBO)

The essence of BIST is to have internal capability for generation of tests and for compression of the results.

Instead of using separate circuits for these two functions, it is possible to design a single circuit that serves both purposes, known as the built-in logic block observation (BILBO) register, as shown in fig.5.16.

Fig.5.16: Built-In Logic Block Observation

The 3-bit BILBO register shown in fig.5.16 is a scannable, resettable register that can also serve as a pattern generator and signature analyzer.

The BILBO circuit has four modes of operation, which are controlled by the mode bits C[1:0].

In reset mode (10), all the flip-flops are synchronously initialized to 0.

In normal mode (11), the flip-flops behave normally with their D input and Q output.

In scan mode (00), the flip-flops are configured as a 3-bit shift register between SI and SO.

In test mode (01), the register behaves as a pseudo-random sequence generator or signature analyzer.

If all the D inputs are held low, the Q outputs loop through a pseudo-random bit sequence, which can serve as the input to the combinational logic.
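The four BILBO modes can be sketched as a next-state function. The mode encodings follow the notes; the test-mode wiring (each D input XORed into a 3-bit LFSR with Q1 <- Q2 XOR Q3) is an assumption. Because reset leaves the register at 000, which an LFSR never leaves when the D inputs are low, the usage below first scans in a nonzero seed.

```python
def bilbo_step(q, c, d=(0, 0, 0), si=0):
    """Next state of a hypothetical 3-bit BILBO register.

    q, d: tuples (Q1, Q2, Q3) and (D1, D2, D3); c: mode bits "C1C0".
    """
    q1, q2, q3 = q
    if c == "10":                     # reset: synchronous clear
        return (0, 0, 0)
    if c == "11":                     # normal: Q <- D
        return tuple(d)
    if c == "00":                     # scan: SI -> Q1 -> Q2 -> Q3 -> SO
        return (si, q1, q2)
    if c == "01":                     # test: LFSR with the D inputs XORed in
        d1, d2, d3 = d                # D = 0: PRSG; D = outputs: signature
        return (d1 ^ q2 ^ q3, d2 ^ q1, d3 ^ q2)
    raise ValueError(f"unknown mode {c!r}")


if __name__ == "__main__":
    q = bilbo_step((1, 0, 1), "10")   # reset mode
    for _ in range(3):                # scan mode: shift in the seed 111
        q = bilbo_step(q, "00", si=1)
    for _ in range(7):                # test mode with all D inputs low
        print("".join(map(str, q)))
        q = bilbo_step(q, "01")
```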

Verification v/s Testing

| Verification | Testing |
|---|---|
| Verification verifies correctness of design. | Testing verifies correctness of manufactured hardware. |
| Verification is performed by simulation, hardware emulation, or formal methods. | Testing is a two-part process: (i) test generation, (ii) test application. |
| Verification is performed once, prior to manufacturing. | Testing is performed on every manufactured device. |
| Verification is responsible for quality of design. | Testing is responsible for quality of devices. |


- VLSI Design SoC CH 6Dokumen125 halamanVLSI Design SoC CH 6Arqam Ali KhanBelum ada peringkat

- Pseudo Differential Multi-Cell Upset Immune Robust SRAM CellDokumen20 halamanPseudo Differential Multi-Cell Upset Immune Robust SRAM Cellkazem.khaari77Belum ada peringkat

- Module-5-Final GMITDokumen20 halamanModule-5-Final GMITmvs sowmyaBelum ada peringkat

- 3 Memory InterfaceDokumen14 halaman3 Memory InterfaceAKASH PALBelum ada peringkat

- Gain-Cell Embedded DRAMs for Low-Power VLSI Systems-on-ChipDari EverandGain-Cell Embedded DRAMs for Low-Power VLSI Systems-on-ChipBelum ada peringkat

- SPANNING TREE PROTOCOL: Most important topic in switchingDari EverandSPANNING TREE PROTOCOL: Most important topic in switchingBelum ada peringkat

- Blended VLSI-RN Maven-SiliconDokumen4 halamanBlended VLSI-RN Maven-SiliconBharathBelum ada peringkat

- Placement Brochure 2019Dokumen40 halamanPlacement Brochure 2019eaglebrdBelum ada peringkat

- VHDL PresentationDokumen224 halamanVHDL Presentationguptaprakhar93Belum ada peringkat

- 09-Logic Design With ASM Chart-11-07Dokumen58 halaman09-Logic Design With ASM Chart-11-07Sandeep ChaudharyBelum ada peringkat

- VLSI Design1Dokumen29 halamanVLSI Design1Sisay ADBelum ada peringkat

- System Verilog TrainingDokumen410 halamanSystem Verilog Trainingdharma0786% (7)

- 19EC303-DPSD - ECE-Updated 03.01.2020 PDFDokumen4 halaman19EC303-DPSD - ECE-Updated 03.01.2020 PDFMulla SarfarazBelum ada peringkat

- Bachelor of Science in Electrical and Electronic EngineeringDokumen22 halamanBachelor of Science in Electrical and Electronic EngineeringSharhan KhanBelum ada peringkat

- Islamic University of Technology: EEE 4765 Embedded Systems DesignDokumen20 halamanIslamic University of Technology: EEE 4765 Embedded Systems DesignAli Sami Ahmad Faqeeh 160021176Belum ada peringkat

- Unit 2 CAD For VLSI DesignDokumen69 halamanUnit 2 CAD For VLSI Designkapil chanderBelum ada peringkat

- Ahmad Roohullah Arif: (Pakistan Engineering Council Reg # Electro-17872) (Passport # GQ1989191)Dokumen2 halamanAhmad Roohullah Arif: (Pakistan Engineering Council Reg # Electro-17872) (Passport # GQ1989191)Ahmad Roohullah ArifBelum ada peringkat

- B19ei030 Internship Report1Dokumen20 halamanB19ei030 Internship Report1Tejaswini ThogaruBelum ada peringkat

- Ec8661 - Vlsi Design Laboratory Manual 2022Dokumen61 halamanEc8661 - Vlsi Design Laboratory Manual 2022KAARUNYA S R - 20ITA17Belum ada peringkat

- M.E. App Ele R21 SyllabusDokumen62 halamanM.E. App Ele R21 SyllabusValar MathyBelum ada peringkat

- CAD For VLSI Design (CS61068, 3-1-0)Dokumen12 halamanCAD For VLSI Design (CS61068, 3-1-0)Bruhath KotamrajuBelum ada peringkat

- CV Sellaroli AlessioDokumen2 halamanCV Sellaroli AlessioSellaroliAlessioBelum ada peringkat

- ECE 501 F11 Session 1a IntroDokumen13 halamanECE 501 F11 Session 1a IntroVasant KumarBelum ada peringkat

- FpgaDokumen6 halamanFpgaÁryâñ SinghBelum ada peringkat

- Vlsi Design Flow & Stick Diagrams: by M.BharathiDokumen40 halamanVlsi Design Flow & Stick Diagrams: by M.BharathiBharathi MuniBelum ada peringkat

- FFT VHDL Fpga PDFDokumen2 halamanFFT VHDL Fpga PDFLynnBelum ada peringkat

- DDV Tutorial NewformatDokumen3 halamanDDV Tutorial NewformatChintamaneni VijayalakshmiBelum ada peringkat

- Rohan Srivastav: ProfileDokumen2 halamanRohan Srivastav: ProfilerohanBelum ada peringkat

- Verilog HDL Language Lab ManualDokumen164 halamanVerilog HDL Language Lab ManualDossDossBelum ada peringkat

- Xilinx ISE 10.1 TutorialsDokumen20 halamanXilinx ISE 10.1 Tutorialssareluis30Belum ada peringkat

- Unit 3Dokumen117 halamanUnit 3MANTHAN GHOSHBelum ada peringkat

- EE316 Spring2016Dokumen5 halamanEE316 Spring2016TravisBelum ada peringkat

- Hacettepe University: Laboratory Rules ExperimentsDokumen8 halamanHacettepe University: Laboratory Rules Experimentsberal sanghviBelum ada peringkat

- B.tech Spot Valuation RegistrationDokumen6 halamanB.tech Spot Valuation Registrationmaruthi631Belum ada peringkat

- Design A Trustzone-Enalble Soc Using The Xilinx Vivado Cad ToolDokumen28 halamanDesign A Trustzone-Enalble Soc Using The Xilinx Vivado Cad ToolNguyen Van ToanBelum ada peringkat