1 - Regression Model Building Methodology

Uploaded by Evyn Muntya Prambudi


Ch. 1

Regression: Model Building Methodology

Setyo Tri Wahyudi

Introduction

Correlation:

A measure of the strength of the relationship between two variables, for example X1 and X2.

The correlation coefficient ranges from -1 to 1. Its absolute value lies between 0 and 1: the closer to 0, the weaker the relationship (no correlation); a value of 1 indicates perfect correlation.

Regression:

The process of building a mathematical model or function that can be used to predict or determine one variable from other variables.

Types of Regression

Linear Regression

Linear regression is a form of relationship in which both the independent variable X and the dependent variable Y appear as factors raised to the first power.

Linear regression is divided into:

1). Simple linear regression, with the functional form:

$$Y = a + bX + e$$

2). Multiple linear regression, with the functional form:

$$Y = b_0 + b_1 X_1 + \dots + b_k X_k + e$$

Functions 1) and 2) above take the form of a straight line (simple linear) and a flat plane (multiple linear), respectively.

Non-Linear Regression

Non-linear regression is a form of relationship or function in which the variable X and/or the variable Y can act as a factor or variable raised to a certain power.

Some forms of non-linear regression are as follows:

1). Polynomial regression is regression with a single independent variable appearing as a factor with successive powers.

$$Y = a + bX + cX^2$$ (quadratic function)

$$Y = a + bX + cX^2 + dX^3$$ (cubic function)

$$Y = a + bX + cX^2 + dX^3 + eX^4$$ (quartic function)

$$Y = a + bX + cX^2 + dX^3 + eX^4 + fX^5$$ (quintic function), and so on.

2). Hyperbolic regression (reciprocal function)

In hyperbolic regression, the independent variable X or the dependent variable Y can appear in a denominator, so this regression is also called regression with a fractional or reciprocal function. It has a functional form such as:

$$\frac{1}{Y} = a + bX$$

3). Exponential regression

Exponential regression is regression in which the independent variable X acts as a power or exponent. Its functional form is:

$$Y = a\,e^{bX}$$

Simple vs. Multiple Regression

Simple: there are two variables in the model

- dependent variable, the variable to be predicted, usually called Y
- independent variable, the predictor or explanatory variable, usually called X

$$Y = \beta_0 + \beta_1 X_1 + \varepsilon$$

Multiple: there are two or more independent variables

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \beta_3 X_3 + \dots + \beta_k X_k + \varepsilon$$

Evaluating Regression Model

Testing the Overall Model

$$H_0: \beta_1 = \beta_2 = \beta_3 = \dots = \beta_k = 0$$
$$H_a: \text{at least one of the regression coefficients is} \neq 0$$

Significance Tests for Individual Regression Coefficients

$$H_0: \beta_1 = 0 \qquad H_a: \beta_1 \neq 0$$
$$H_0: \beta_2 = 0 \qquad H_a: \beta_2 \neq 0$$
$$H_0: \beta_3 = 0 \qquad H_a: \beta_3 \neq 0$$
$$\vdots$$
$$H_0: \beta_k = 0 \qquad H_a: \beta_k \neq 0$$

Testing the Overall Model (F test)

0 is ts coefficien regression the of one least At :

0 :

2

1

0

=

=

=

a H

H

| |

MSR

SSR

k

MSE

SSE

n k

F

MSR

MSE

= =

=

1

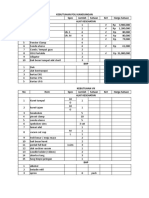

ANOVA

df

SS MS F p

Regression 2 8189.723 4094.86 28.63 .000

Residual (Error) 20 2861.017 143.1

Total 22 11050.74

. , ,

.

. . ,

01 2 20

585

28 63 585

F

F

Cal

=

= > reject H . 0
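The F statistic above can be reproduced from the sums of squares in the ANOVA table. The following is a minimal Python sketch using the slide's values; the variable names are illustrative and not part of the original material.

```python
# Values taken from the ANOVA table above.
ssr, sse = 8189.723, 2861.017   # regression and error sums of squares
n, k = 23, 2                    # observations and independent variables

msr = ssr / k                   # mean square regression
mse = sse / (n - k - 1)         # mean square error
f_stat = msr / mse

f_critical = 5.85               # F(.01; 2, 20) as given on the slide
reject_h0 = f_stat > f_critical
print(round(f_stat, 2), reject_h0)
```

The critical value is typed in from the slide rather than computed, so the sketch needs nothing beyond the standard interpreter.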

Significance Test of the Regression Coefficients (t test)

$$H_0: \beta_1 = 0 \qquad H_a: \beta_1 \neq 0$$
$$H_0: \beta_2 = 0 \qquad H_a: \beta_2 \neq 0$$

| | Coefficients | Std Dev | t Stat | p |
|---|---|---|---|---|
| X1 | 0.0177 | 0.003146 | 5.63 | .000 |
| X2 | -0.666 | 0.2280 | -2.92 | .008 |

$$t_{.025,20} = 2.086$$
$$t_{Cal} = 5.63 > 2.086, \text{ reject } H_0.$$
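Each t statistic in the table is the coefficient divided by its standard deviation, and it is compared in absolute value with the critical value. A short Python sketch, assuming only the numbers shown on the slide (the dictionary layout is illustrative):

```python
# (coefficient, standard deviation) pairs from the table above.
coefficients = {"X1": (0.0177, 0.003146), "X2": (-0.666, 0.2280)}
t_critical = 2.086  # t(.025, 20) from the slide

t_stats = {name: b / sd for name, (b, sd) in coefficients.items()}
significant = {name: abs(t) > t_critical for name, t in t_stats.items()}

for name in coefficients:
    print(name, round(t_stats[name], 2), significant[name])
```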

Residuals and Sum of Squares Error (SSE)

| Observation | $Y$ | $\hat{Y}$ | $Y - \hat{Y}$ | $(Y - \hat{Y})^2$ |
|---|---|---|---|---|
| 1 | 43.0 | 42.466 | 0.534 | 0.285 |
| 2 | 45.1 | 51.465 | -6.365 | 40.517 |
| 3 | 49.9 | 51.540 | -1.640 | 2.689 |
| 4 | 56.8 | 58.622 | -1.822 | 3.319 |
| 5 | 53.9 | 54.073 | -0.173 | 0.030 |
| 6 | 57.9 | 55.627 | 2.273 | 5.168 |
| 7 | 54.9 | 62.991 | -8.091 | 65.466 |
| 8 | 58.0 | 85.702 | -27.702 | 767.388 |
| 9 | 59.0 | 48.495 | 10.505 | 110.360 |
| 10 | 63.4 | 61.124 | 2.276 | 5.181 |
| 11 | 59.5 | 68.265 | -8.765 | 76.823 |
| 12 | 63.9 | 71.322 | -7.422 | 55.092 |
| 13 | 59.7 | 65.602 | -5.902 | 34.832 |
| 14 | 64.5 | 75.383 | -10.883 | 118.438 |
| 15 | 76.0 | 65.442 | 10.558 | 111.479 |
| 16 | 89.5 | 82.772 | 6.728 | 45.265 |
| 17 | 82.5 | 77.659 | 4.841 | 23.440 |
| 18 | 101.0 | 87.187 | 13.813 | 190.799 |
| 19 | 84.9 | 89.356 | -4.456 | 19.858 |
| 20 | 108.0 | 91.237 | 16.763 | 280.982 |
| 21 | 109.0 | 85.064 | 23.936 | 572.936 |
| 22 | 97.9 | 114.447 | -16.547 | 273.815 |
| 23 | 120.0 | 112.460 | 7.540 | 56.854 |
| Total (SSE) | | | | 2861.017 |

SSE and Standard Error

of the Estimate

e

S

SSE

n k

where

=

=

=

1

2861

23 2 1

1196 .

: n = number of observations

k = number of independent variables

SSE

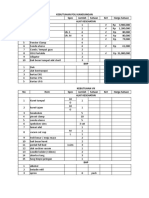

ANOVA

df

SS MS F P

Regression 2 8189.7 4094.9 28.63 .000

Residual (Error) 20 2861.0 143.1

Total 22 11050.7
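The standard error of the estimate follows directly from SSE and the error degrees of freedom. A minimal Python check of the slide's arithmetic (variable names are illustrative):

```python
import math

sse = 2861.017   # sum of squares error from the ANOVA table
n, k = 23, 2     # observations and independent variables

# Standard error of the estimate: square root of the mean square error.
s_e = math.sqrt(sse / (n - k - 1))
print(round(s_e, 2))
```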

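Coefficient of Determination ($R^2$)

$$R^2 = \frac{SSR}{SSY} = \frac{8189.723}{11050.74} = .741$$

$$R^2 = 1 - \frac{SSE}{SSY} = 1 - \frac{2861.017}{11050.74} = .741$$

ANOVA

| Source | df | SS | MS | F | p |
|---|---|---|---|---|---|
| Regression | 2 | 8189.7 (SSR) | 4094.89 | 28.63 | .000 |
| Residual (Error) | 20 | 2861.0 (SSE) | 143.1 | | |
| Total | 22 | 11050.7 ($SS_{YY}$) | | | |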
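Both forms of the coefficient of determination give the same number because SSR + SSE equals the total sum of squares. A small Python sketch with the slide's values (names are illustrative):

```python
ssr = 8189.723    # regression sum of squares
sse = 2861.017    # error sum of squares
ssy = 11050.74    # total sum of squares (SS_YY)

r2_from_ssr = ssr / ssy
r2_from_sse = 1 - sse / ssy
print(round(r2_from_ssr, 3), round(r2_from_sse, 3))
```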

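Adjusted $R^2$

$$R^2_{adj} = 1 - \frac{SSE/(n-k-1)}{SSY/(n-1)} = 1 - \frac{2861.017/(23-2-1)}{11050.74/(23-1)} = 1 - .285 = .715$$

ANOVA

| Source | df | SS | MS | F | p |
|---|---|---|---|---|---|
| Regression | 2 | 8189.7 | 4094.9 | 28.63 | .000 |
| Residual (Error) | 20 ($n-k-1$) | 2861.0 (SSE) | 143.1 | | |
| Total | 22 ($n-1$) | 11050.7 ($SS_{YY}$) | | | |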
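The adjusted value divides each sum of squares by its degrees of freedom before forming the ratio. A minimal Python check of the slide's arithmetic (variable names are illustrative):

```python
sse, ssy = 2861.017, 11050.74   # error and total sums of squares
n, k = 23, 2                    # observations and independent variables

# Ratio of the error variance estimate to the total variance estimate.
variance_ratio = (sse / (n - k - 1)) / (ssy / (n - 1))
r2_adj = 1 - variance_ratio
print(round(variance_ratio, 3), round(r2_adj, 3))
```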

Model-Building

- Stepwise Regression
- Forward Selection
- Backward Elimination
- All Possible Regressions

Stepwise Regression

1. Perform k simple regressions, and select the best as the initial model.
2. Evaluate each variable not in the model. If none meets the criterion, stop.
3. Add the best variable to the model; re-evaluate previous variables, and drop any which are not significant.
4. Return to step 2.

Forward Selection

Like stepwise, except variables are not re-evaluated after entering the model.

Backward Elimination

1. Start with the full model (all k predictors).
2. If all predictors are significant, stop.
3. Otherwise, eliminate the most non-significant predictor; return to step 2.
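The backward elimination loop can be sketched in a few lines. This is an illustrative Python/NumPy version under stated assumptions, not the procedure used to produce the slides: significance is judged by comparing |t| with a fixed cutoff `t_crit` (a hypothetical parameter, where a table value such as 2.086 could be supplied), and the data are synthetic.

```python
import numpy as np

def t_statistics(design, y):
    """t statistics of the OLS coefficients; `design` already holds the intercept column."""
    n, p = design.shape
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ beta
    mse = residuals @ residuals / (n - p)
    std_err = np.sqrt(np.diag(mse * np.linalg.inv(design.T @ design)))
    return beta / std_err

def backward_elimination(X, y, t_crit=2.0):
    """Drop the least significant predictor until every remaining |t| >= t_crit."""
    keep = list(range(X.shape[1]))          # start with the full model
    while keep:
        design = np.column_stack([np.ones(len(y)), X[:, keep]])
        abs_t = np.abs(t_statistics(design, y)[1:])   # skip the intercept
        weakest = int(np.argmin(abs_t))
        if abs_t[weakest] >= t_crit:        # all predictors significant: stop
            break
        keep.pop(weakest)                   # eliminate and refit
    return keep

# Synthetic data: y depends on columns 0 and 2 only; column 1 is pure noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = 1.0 + 3.0 * X[:, 0] - 2.0 * X[:, 2] + rng.normal(size=200)
kept = backward_elimination(X, y)
print(kept)
```

Forward selection and stepwise regression follow the same pattern in the opposite direction, adding the candidate with the largest |t| instead of removing the smallest.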

Data for Multiple Regression

| Variable | Description |
|---|---|
| Y | World Crude Oil Production |
| X1 | U.S. Energy Consumption |
| X2 | U.S. Nuclear Generation |
| X3 | U.S. Coal Production |
| X4 | U.S. Dry Gas Production |
| X5 | U.S. Fuel Rate for Autos |

| Y | X1 | X2 | X3 | X4 | X5 |
|---|---|---|---|---|---|
| 55.7 | 74.3 | 83.5 | 598.6 | 21.7 | 13.30 |
| 55.7 | 72.5 | 114.0 | 610.0 | 20.7 | 13.42 |
| 52.8 | 70.5 | 172.5 | 654.6 | 19.2 | 13.52 |
| 57.3 | 74.4 | 191.1 | 684.9 | 19.1 | 13.53 |
| 59.7 | 76.3 | 250.9 | 697.2 | 19.2 | 13.80 |
| 60.2 | 78.1 | 276.4 | 670.2 | 19.1 | 14.04 |
| 62.7 | 78.9 | 255.2 | 781.1 | 19.7 | 14.41 |
| 59.6 | 76.0 | 251.1 | 829.7 | 19.4 | 15.46 |
| 56.1 | 74.0 | 272.7 | 823.8 | 19.2 | 15.94 |
| 53.5 | 70.8 | 282.8 | 838.1 | 17.8 | 16.65 |
| 53.3 | 70.5 | 293.7 | 782.1 | 16.1 | 17.14 |
| 54.5 | 74.1 | 327.6 | 895.9 | 17.5 | 17.83 |
| 54.0 | 74.0 | 383.7 | 883.6 | 16.5 | 18.20 |
| 56.2 | 74.3 | 414.0 | 890.3 | 16.1 | 18.27 |
| 56.7 | 76.9 | 455.3 | 918.8 | 16.6 | 19.20 |
| 58.7 | 80.2 | 527.0 | 950.3 | 17.1 | 19.87 |
| 59.9 | 81.3 | 529.4 | 980.7 | 17.3 | 20.31 |
| 60.6 | 81.3 | 576.9 | 1029.1 | 17.8 | 21.02 |
| 60.2 | 81.1 | 612.6 | 996.0 | 17.7 | 21.69 |
| 60.2 | 82.1 | 618.8 | 997.5 | 17.8 | 21.68 |
| 60.6 | 83.9 | 610.3 | 945.4 | 18.2 | 21.04 |
| 60.9 | 85.6 | 640.4 | 1033.5 | 18.9 | 21.48 |

Stepwise: Step 1 - Simple Regression Results for Each Independent Variable

| Dependent Variable | Independent Variable | t-Ratio | $R^2$ |
|---|---|---|---|
| Y | X1 | 11.77 | 85.2% |
| Y | X2 | 4.43 | 45.0% |
| Y | X3 | 3.91 | 38.9% |
| Y | X4 | 1.08 | 4.6% |
| Y | X5 | 3.54 | 34.2% |

All Possible Regressions with Five Independent Variables

Single Predictor:
X1; X2; X3; X4; X5

Two Predictors:
X1,X2; X1,X3; X1,X4; X1,X5; X2,X3; X2,X4; X2,X5; X3,X4; X3,X5; X4,X5

Three Predictors:
X1,X2,X3; X1,X2,X4; X1,X2,X5; X1,X3,X4; X1,X3,X5; X1,X4,X5; X2,X3,X4; X2,X3,X5; X2,X4,X5; X3,X4,X5

Four Predictors:
X1,X2,X3,X4; X1,X2,X3,X5; X1,X2,X4,X5; X1,X3,X4,X5; X2,X3,X4,X5

Five Predictors:
X1,X2,X3,X4,X5
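The list of candidate models above is every non-empty subset of the five predictors. A short Python sketch that enumerates them with the standard library (the predictor labels are taken from the slide; the dictionary layout is illustrative):

```python
from itertools import combinations

predictors = ["X1", "X2", "X3", "X4", "X5"]

# Group every non-empty subset of predictors by the number of predictors used.
models_by_size = {
    size: list(combinations(predictors, size))
    for size in range(1, len(predictors) + 1)
}
total_models = sum(len(models) for models in models_by_size.values())

for size, models in models_by_size.items():
    print(size, len(models))
print("total:", total_models)
```

With k predictors the count is 2^k - 1, which is why this approach becomes expensive as k grows.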

Functional Forms of Regression

The term "linear" in a simple regression model means that the model is linear in the parameters; the variables in the regression model may or may not be linear.

True model is non-linear

[Figure: income (Y) against age (X), showing the non-linear PRF and a straight-line SRF; marked values 60 and 15.]

Running the wrong (linear) regression model on such data produces wrong predictions.

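Linear vs. Nonlinear

Examples of Linear Statistical Models

$$Y_i = \beta_0 + \beta_1 X_i + \varepsilon_i$$
$$\ln(Y_i) = \beta_0 + \beta_1 X_i + \varepsilon_i$$
$$Y_i = \beta_0 + \beta_1 \ln(X_i) + \varepsilon_i$$
$$Y_i = \beta_0 + \beta_1 X_i^2 + \varepsilon_i$$

Examples of Non-linear Statistical Models

$$Y_i = \beta_0 + \beta_1 X_i^{\beta_2} + \varepsilon_i$$
$$Y_i = \beta_0 + \beta_1 X_i + \exp(\beta_2 X_i) + \varepsilon_i$$
$$Y_i^{\beta_2} = \beta_0 + \beta_1 X_i + \varepsilon_i$$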

Different Functional Forms

1. Linear
2. Log-Log
3. Semilog (Linear-Log or Log-Linear)
4. Polynomial
5. Reciprocal (or inverse)

Pay attention to each form's slope and elasticity.

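Functional Forms of Regression Models

2. Log-log model:

$$Y = \beta_0 X^{\beta_1} e^{\varepsilon_i}$$

This is a non-linear model. Transform it into linear log form:

$$\ln Y = \ln\beta_0 + \beta_1 \ln X + \varepsilon_i$$
$$\Longrightarrow\ \ln Y = \beta_0^* + \beta_1 \ln X + \varepsilon_i$$
$$\Longrightarrow\ Y^* = \beta_0^* + \beta_1^* X^* + \varepsilon_i$$

where $\beta_0^* = \ln\beta_0$, $Y^* = \ln Y$, $X^* = \ln X$ and $\beta_1^* = \beta_1$. The slope is the elasticity coefficient:

$$\beta_1^* = \frac{d\ln Y}{d\ln X} = \frac{dY/Y}{dX/X} = \frac{dY}{dX}\cdot\frac{X}{Y}$$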
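The log transformation turns the power-law model into a straight line that ordinary least squares can fit. The following Python/NumPy sketch illustrates this on hypothetical noise-free data generated with beta0 = 3 and beta1 = 0.5; the data and names are assumptions made for the example.

```python
import numpy as np

# Hypothetical data that follow Y = beta0 * X**beta1 exactly.
x = np.array([1.0, 2.0, 4.0, 8.0, 16.0])
y = 3.0 * x ** 0.5

# Fit ln Y = beta0* + beta1 * ln X; polyfit returns [slope, intercept] for deg=1.
slope, intercept = np.polyfit(np.log(x), np.log(y), deg=1)

beta1 = slope              # elasticity of Y with respect to X
beta0 = np.exp(intercept)  # antilog of the intercept beta0*
print(beta0, beta1)
```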

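Functional Forms of Regression Models

[Figure: quantity demanded (Y) against price (X) for $Y = \beta_0 X^{-\beta_1}$, next to the corresponding straight line on log axes (lnY against lnX), $\ln Y = \ln\beta_0 - \beta_1 \ln X$.]

[Figure: quantity demanded (Y) against price (X) for $Y = \beta_0 X^{\beta_1}$, next to the corresponding straight line on log axes (lnY against lnX), $\ln Y = \ln\beta_0 + \beta_1 \ln X$.]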

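Functional Forms of Regression Models

3. Semi-log model: log-lin model or lin-log model

$$\ln Y_i = \alpha_0 + \alpha_1 X_i + \varepsilon_i \quad \text{(log-lin)}$$

or

$$Y_i = \beta_0 + \beta_1 \ln X_i + \varepsilon_i \quad \text{(lin-log)}$$

and

$$\alpha_1 = \frac{d\ln Y}{dX} = \frac{1}{Y}\frac{dY}{dX} = \frac{dY/Y}{dX} = \frac{\text{relative change in } Y}{\text{absolute change in } X}$$

$$\beta_1 = \frac{dY}{d\ln X} = \frac{dY}{\frac{1}{X}dX} = \frac{dY}{dX/X} = \frac{\text{absolute change in } Y}{\text{relative change in } X}$$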

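Functional Forms of Regression Models (Cont.)

4. Polynomial: a quadratic term captures the nonlinear pattern

$$Y_i = \beta_0 + \beta_1 X_i + \beta_2 X_i^2 + \varepsilon_i$$

[Figures: $Y_i$ against $X_i$ for the two cases $\beta_1 > 0, \beta_2 < 0$ and $\beta_1 < 0, \beta_2 > 0$.]

5. Reciprocal (or inverse) transformations

$$Y_i = \beta_0 + \beta_1\left(\frac{1}{X_i}\right) + \varepsilon_i$$
$$\Longrightarrow\ Y_i = \beta_0 + \beta_1 X_i^* + \varepsilon_i, \qquad \text{where } X_i^* = \frac{1}{X_i}$$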

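Some features of the reciprocal model

$$Y = \beta_0 + \beta_1\frac{1}{X}$$

[Figures: Y against X for four sign combinations of the parameters.]

- $\beta_0 > 0$ and $\beta_1 > 0$
- $\beta_0 < 0$ and $\beta_1 > 0$
- $\beta_0 > 0$ and $\beta_1 < 0$
- $\beta_0 < 0$ and $\beta_1 < 0$

In every case Y approaches the asymptote $\beta_0$ as X grows. When $\beta_0$ and $\beta_1$ have opposite signs, the curve crosses the X axis at $X = -\beta_1/\beta_0$.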
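Because the reciprocal model is linear in the transformed regressor X* = 1/X, it can be estimated by ordinary least squares after the transformation. A minimal Python/NumPy sketch on hypothetical noise-free data (beta0 = 2, beta1 = 8 are assumptions chosen for the example):

```python
import numpy as np

# Hypothetical data generated from Y = 2 + 8 * (1/X).
x = np.array([1.0, 2.0, 4.0, 5.0, 10.0])
y = 2.0 + 8.0 / x

# Transform the regressor, then run OLS on [1, X*].
x_star = 1.0 / x
design = np.column_stack([np.ones_like(x_star), x_star])
(beta0, beta1), *_ = np.linalg.lstsq(design, y, rcond=None)

print(beta0, beta1)   # beta0 is the asymptote that Y approaches as X grows
```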

Two conditions for transforming a nonlinear, non-additive equation

1. A transformation of the variables exists.
2. The sample must provide sufficient information.

Example 1:

Suppose

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_1^2 + \beta_3 X_1 X_2$$

Transforming

$$X_2^* = X_1^2, \qquad X_3^* = X_1 X_2$$

we can rewrite the model as

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2^* + \beta_3 X_3^*$$

Example 2:

$$Y = \beta_0 + \frac{\beta_1}{X + \beta_2}$$

Transforming

$$X_1^* = \frac{1}{X + \beta_2}$$

we can rewrite the model as

$$Y = \beta_0 + \beta_1 X_1^*$$

However, $X_1^*$ cannot be computed, because $\beta_2$ is unknown.
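Example 1 works because the transformed regressors can be computed from the data alone. The Python/NumPy sketch below builds X2* and X3* from synthetic X1 and X2, generates Y from assumed coefficients, and recovers them by ordinary least squares; all numbers are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
x1 = rng.uniform(1, 5, size=30)
x2 = rng.uniform(1, 5, size=30)

# Transformed regressors from Example 1; no unknown parameter is needed.
x2_star = x1 ** 2
x3_star = x1 * x2

design = np.column_stack([np.ones_like(x1), x1, x2_star, x3_star])
true_beta = np.array([1.0, 2.0, -0.5, 0.3])   # assumed values for illustration
y = design @ true_beta                        # noise-free response

estimated, *_ = np.linalg.lstsq(design, y, rcond=None)
print(np.round(estimated, 6))
```

In Example 2 the same approach fails at the first step, since computing the transformed regressor would require the unknown parameter in the denominator.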

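Application of functional form regression

1. Cobb-Douglas production function:

$$Y = \beta_0 L^{\beta_1} K^{\beta_2} e^{\varepsilon}$$

Transforming:

$$\ln Y = \ln\beta_0 + \beta_1 \ln L + \beta_2 \ln K + \varepsilon$$
$$\Longrightarrow\ \ln Y = \beta_0^* + \beta_1 \ln L + \beta_2 \ln K + \varepsilon$$

$$\frac{d\ln Y}{d\ln L} = \beta_1: \text{ elasticity of output w.r.t. labor input}$$
$$\frac{d\ln Y}{d\ln K} = \beta_2: \text{ elasticity of output w.r.t. capital input}$$

Whether $\beta_1 + \beta_2$ is greater than, equal to, or less than 1 gives information about the returns to scale.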
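The log form of the Cobb-Douglas function can be estimated by regressing ln Y on ln L and ln K, and the sum of the two slopes then indicates the returns to scale. A Python/NumPy sketch on synthetic noise-free data; the parameter values 1.5, 0.6 and 0.3 and the helper `returns_to_scale` are assumptions made for the illustration.

```python
import numpy as np

def returns_to_scale(beta1, beta2, tol=1e-8):
    """Classify returns to scale from the sum of the two elasticities."""
    total = beta1 + beta2
    if abs(total - 1.0) <= tol:
        return "constant"
    return "increasing" if total > 1.0 else "decreasing"

rng = np.random.default_rng(2)
labor = rng.uniform(10, 100, size=40)
capital = rng.uniform(10, 100, size=40)
output = 1.5 * labor ** 0.6 * capital ** 0.3   # hypothetical production data

# OLS on ln Y = beta0* + beta1 ln L + beta2 ln K.
design = np.column_stack([np.ones(40), np.log(labor), np.log(capital)])
coef, *_ = np.linalg.lstsq(design, np.log(output), rcond=None)
ln_beta0, beta1, beta2 = coef

print(round(beta1, 4), round(beta2, 4), returns_to_scale(beta1, beta2))
```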

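2. Polynomial regression model:

Marginal cost function or total cost function

[Figure: marginal cost (MC) against output y.]

i.e.

$$Y = \beta_0 + \beta_1 X + \beta_2 X^2 + \varepsilon \quad \text{(MC)}$$

or

[Figure: total cost (TC) against output y.]

$$Y = \beta_0 + \beta_1 X + \beta_2 X^2 + \beta_3 X^3 + \varepsilon \quad \text{(TC)}$$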

Linear model

$$\widehat{GNP} = 100.304 + 1.5325\, M_2$$
(1.368) (39.20)

Lin-log model

$$\widehat{GNP} = -16329.21 + 2584.78 \ln M_2$$
(-23.44) (27.48)

Log-lin model

$$\widehat{\ln GNP} = 6.8612 + 0.00057\, M_2$$
(100.38) (15.65)

Log-log model

$$\widehat{\ln GNP} = 0.5529 + 0.9882 \ln M_2$$
(3.194) (42.29)
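The four slope estimates above are not directly comparable, because each functional form measures a different kind of change. The Python sketch below converts them using the standard approximations for each form (per unit versus per 1% change); the variable names are illustrative and the units of GNP and M2 are whatever the slides use.

```python
# Slope estimates from the four GNP-M2 regressions on the slides.
slope_linear = 1.5325     # GNP on M2
slope_lin_log = 2584.78   # GNP on ln(M2)
slope_log_lin = 0.00057   # ln(GNP) on M2
slope_log_log = 0.9882    # ln(GNP) on ln(M2)

# Linear: absolute change in GNP per one-unit change in M2.
linear_effect = slope_linear
# Lin-log: absolute change in GNP per 1% change in M2 (slope / 100).
lin_log_effect = slope_lin_log / 100
# Log-lin: percentage change in GNP per one-unit change in M2 (slope * 100).
log_lin_effect = slope_log_lin * 100
# Log-log: percentage change in GNP per 1% change in M2 (the elasticity).
log_log_effect = slope_log_log

print(linear_effect, round(lin_log_effect, 4), round(log_lin_effect, 3), log_log_effect)
```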

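[Figure: wage (y) against unemployment (x) with the fitted SRF; marked values 10.4 and 3.]

$$\widehat{wage} = 10.343 - 3.808\,(unemploy)$$
(4.862) (-2.66)

Reciprocal Model

[Figure: y against 1/x with the fitted SRF; the asymptote is at -1.428 and $u_N$ marks the natural rate of unemployment.]

$$\widehat{Wage} = -1.4282 + 8.7243\left(\frac{1}{unemploy}\right)$$
(-.0690) (3.063)

The estimate of $\beta_0$ is statistically insignificant. Therefore, -1.428 is not reliable.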

$$\widehat{\ln wage} = 1.9038 - 1.175 \ln(unemploy)$$
(10.375) (-2.618)

Equivalently, with $X$ denoting unemployment:

$$\widehat{\ln wage} = 1.9038 + 1.175 \ln\left(\frac{1}{X}\right)$$
(10.37) (2.618)

Antilog(1.9038) = 6.7113; it is therefore a more meaningful and statistically significant bottom line for the minimum wage.

Antilog(1.175) = 3.238. Because both variables are in logs, the slope is an elasticity: a 1% increase in X is associated with a decrease in the wage of about 1.175%.
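The antilogs quoted above are natural exponentials of the estimated coefficients, which can be checked in a couple of lines of Python (variable names are illustrative):

```python
import math

intercept = 1.9038   # estimated intercept of the log-log wage equation
slope = 1.175        # magnitude of the estimated slope

antilog_intercept = math.exp(intercept)
antilog_slope = math.exp(slope)
print(round(antilog_intercept, 4), round(antilog_slope, 3))
```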

MWD Test for the functional form (Wooldridge, pp.203)

(MacKinnon, White, Davidson)

Procedures:

1. Run OLS on the linear model and obtain $\hat{Y}$:

$$\hat{Y} = \hat{\alpha}_0 + \hat{\alpha}_1 X_1 + \hat{\alpha}_2 X_2$$

2. Run OLS on the log-log model and obtain $\widehat{\ln Y}$:

$$\widehat{\ln Y} = \hat{\beta}_0 + \hat{\beta}_1 \ln X_1 + \hat{\beta}_2 \ln X_2$$

3. Compute $Z_1 = \ln(\hat{Y}) - \widehat{\ln Y}$

4. Run OLS on the linear model with $Z_1$ added:

$$\hat{Y} = \hat{\alpha}_0 + \hat{\alpha}_1 X_1 + \hat{\alpha}_2 X_2 + \hat{\alpha}_3 Z_1$$

and check the t-statistic of $\hat{\alpha}_3$.

If $t^*_{\alpha_3} > t_c$ ==> reject $H_0$: linear model
If $t^*_{\alpha_3} < t_c$ ==> do not reject $H_0$: linear model

MWD test for the functional form (Cont.)

5. Compute $Z_2 = \text{antilog}(\widehat{\ln Y}) - \hat{Y}$

6. Run OLS on the log-log model with $Z_2$ added:

$$\widehat{\ln Y} = \hat{\beta}_0 + \hat{\beta}_1 \ln X_1 + \hat{\beta}_2 \ln X_2 + \hat{\beta}_3 Z_2$$

and check the t-statistic of $\hat{\beta}_3$.

If $t^*_{\beta_3} > t_c$ ==> reject $H_0$: log-log model
If $t^*_{\beta_3} < t_c$ ==> do not reject $H_0$: log-log model
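The six steps of the MWD procedure map onto a handful of OLS fits. The following Python/NumPy sketch is one possible implementation under stated assumptions: two regressors, strictly positive data, and synthetic observations generated for the example. The helper names `ols` and `mwd_test` are hypothetical, and the returned t statistics still have to be compared with a critical value from a t table.

```python
import numpy as np

def ols(design, y):
    """Return (coefficients, t statistics, fitted values) of an OLS fit."""
    n, p = design.shape
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    fitted = design @ beta
    residuals = y - fitted
    sigma2 = residuals @ residuals / (n - p)
    std_err = np.sqrt(np.diag(sigma2 * np.linalg.inv(design.T @ design)))
    return beta, beta / std_err, fitted

def mwd_test(y, x1, x2):
    """Return the t statistics of Z1 (linear model) and Z2 (log-log model)."""
    ones = np.ones_like(y)
    lin = np.column_stack([ones, x1, x2])
    log = np.column_stack([ones, np.log(x1), np.log(x2)])

    _, _, y_hat = ols(lin, y)                    # step 1: linear model
    _, _, lny_hat = ols(log, np.log(y))          # step 2: log-log model
    if np.any(y_hat <= 0):
        raise ValueError("fitted Y must be positive to take logs")

    z1 = np.log(y_hat) - lny_hat                 # step 3
    _, t_lin, _ = ols(np.column_stack([lin, z1]), y)           # step 4
    z2 = np.exp(lny_hat) - y_hat                 # step 5
    _, t_log, _ = ols(np.column_stack([log, z2]), np.log(y))   # step 6
    return t_lin[-1], t_log[-1]

# Synthetic data generated from a log-log relationship (illustration only).
rng = np.random.default_rng(3)
x1 = rng.uniform(5, 10, size=60)
x2 = rng.uniform(5, 10, size=60)
y = 2.0 * x1 ** 0.7 * x2 ** 0.3 * np.exp(rng.normal(scale=0.05, size=60))

t_z1, t_z2 = mwd_test(y, x1, x2)
print(t_z1, t_z2)
```

A large |t| on Z1 counts against the linear specification, and a large |t| on Z2 counts against the log-log specification.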

MWD TEST: Testing the functional form of regression

Example: (Table 7.3)

Step 1: Run the linear model and obtain $\hat{Y}$.

[Regression output with regressors C, X1, X2.]

$$CV_1 = \frac{\hat{\sigma}}{\bar{Y}} = \frac{1583.279}{24735.33} = 0.064$$

Step 2: Run the log-log model and obtain $\widehat{\ln Y}$ (the fitted or estimated values).

[Regression output with regressors C, LNX1, LNX2.]

$$CV_2 = \frac{\hat{\sigma}}{\bar{Y}} = \frac{0.07481}{10.09653} = 0.0074$$

MWD Test

Step 4: $H_0$: true model is linear

[Regression output with regressors C, X1, X2, Z1.]

$$t_{c\,(0.05,\,11)} = 1.796, \qquad t_{c\,(0.10,\,11)} = 1.363$$

$t^* < t_c$ at 5% => do not reject $H_0$
$t^* > t_c$ at 10% => reject $H_0$

MWD Test

Step 6: $H_0$: true model is log-log model

[Regression output with regressors C, LNX1, LNX2, Z2.]

$$t_{c\,(0.025,\,11)} = 2.201, \qquad t_{c\,(0.05,\,11)} = 1.796, \qquad t_{c\,(0.10,\,11)} = 1.363$$

Since $t^* < t_c$ => do not reject $H_0$

Comparing the C.V.:

$$\frac{C.V._1}{C.V._2} = \frac{0.064}{0.0074}$$

Criterion for comparing two different functional models:

The coefficient of variation:

$$C.V. = \frac{\hat{\sigma}}{\bar{Y}}$$

It measures the average error of the sample regression function relative to the mean of Y.

Linear, log-linear, and log-log equations can be meaningfully compared.

The smaller the C.V. of a model, the more preferred the equation (functional model).

Compare two different functional form models:

Model 1: linear model
Model 2: log-log model

$$\frac{\text{C.V. of model 1}}{\text{C.V. of model 2}} = \frac{\hat{\sigma}/\bar{Y} \text{ of model 1}}{\hat{\sigma}/\bar{Y} \text{ of model 2}} = \frac{2.1225/89.612}{0.0217/4.4891} = \frac{0.0236}{0.0048} = 4.916$$

This means that model 2 is better.
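The comparison above reduces to computing one ratio per model and picking the smaller. A small Python sketch with the slide's numbers; the function name is illustrative. The unrounded ratio comes out near 4.90, slightly below the slide's 4.916, because the slide divides the already rounded values 0.0236 and 0.0048.

```python
def coefficient_of_variation(sigma_hat, y_mean):
    """Standard error of the regression relative to the mean of the dependent variable."""
    return sigma_hat / y_mean

cv_linear = coefficient_of_variation(2.1225, 89.612)    # model 1
cv_log_log = coefficient_of_variation(0.0217, 4.4891)   # model 2

ratio = cv_linear / cv_log_log
preferred = "log-log" if cv_log_log < cv_linear else "linear"
print(round(cv_linear, 4), round(cv_log_log, 4), round(ratio, 2), preferred)
```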

INDIVIDUAL ASSIGNMENT:

1. Find any data set (book, web)
2. Specify the initial models (based on theory): a linear model and a log-linear model
3. Perform the MWD test
4. Interpret the results

Submission:

- Next week (17/09/2012)
- Print out
